Network Clustering Approximation Algorithm Using One Pass Black Box Sampling

Finding a good clustering of vertices in a network, where vertices in the same cluster are more tightly connected than those in different clusters, is a useful, important, and well-studied task. Many clustering algorithms scale well; however, they are not designed to operate on internet-scale networks with billions of nodes or more. We study one of the fastest and most memory-efficient algorithms possible: clustering based on the connected components of a random edge-induced subgraph. When the cost of a clustering is defined as its distance from such a random clustering, we show that this surprisingly simple algorithm gives a solution that is within an expected factor of two or three of optimal under either of two natural distance functions. In fact, this approximation guarantee holds for any problem where there is a probability distribution on clusterings. We then examine the behavior of this algorithm in the context of social network trust inference.

💡 Research Summary

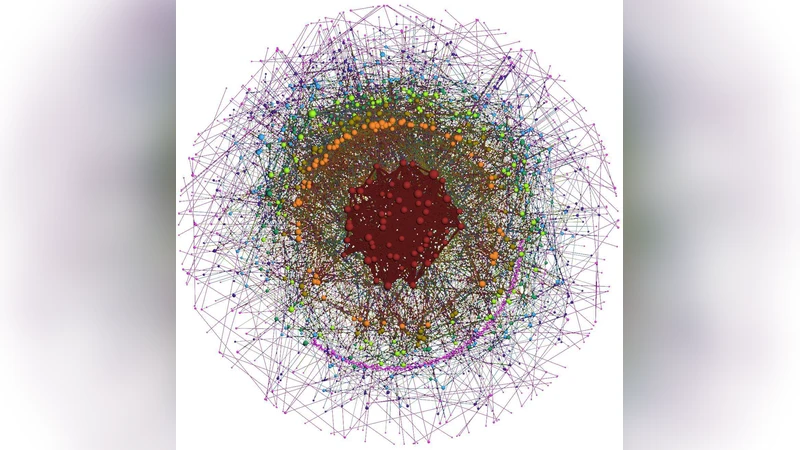

The paper tackles the problem of clustering massive networks (those with billions of vertices and potentially trillions of edges) by proposing an algorithm that is both extremely fast and memory‑efficient. The core idea is deceptively simple: keep each edge of the original graph independently with a fixed probability p (e.g., 1%). The resulting random edge‑induced subgraph contains only a small fraction of the original edges, yet its connected components are used directly as the clustering output. The algorithm requires a single pass over the edge list and stores only per‑vertex state. It therefore reads each edge once, does component‑merging work on only about p·|E| sampled edges in expectation, and uses O(|V|) space, making it feasible on commodity hardware or in streaming environments where the full graph cannot be held in memory.
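The one‑pass procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `sample_components`, the integer vertex labels `0..num_vertices-1`, and the use of a union‑find structure to track components are all assumptions made for the example.

```python
import random


def sample_components(edges, num_vertices, p, seed=None):
    """Cluster vertices by the connected components of a random edge sample.

    edges: iterable of (u, v) pairs, consumed once (it may be a stream).
    p: probability of keeping each edge, independently of the others.
    Returns a list mapping each vertex to a component representative.
    """
    rng = random.Random(seed)
    parent = list(range(num_vertices))  # O(|V|) state; edges are never stored

    def find(x):
        # Walk to the root, halving the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for u, v in edges:
        if rng.random() < p:  # keep this edge with probability p
            root_u, root_v = find(u), find(v)
            if root_u != root_v:
                parent[root_u] = root_v  # merge the two components

    return [find(v) for v in range(num_vertices)]


# Usage: with p = 1.0 every edge is kept, so the output is simply the
# connected components of the full graph.
edges = [(0, 1), (1, 2), (3, 4)]
labels = sample_components(edges, num_vertices=6, p=1.0)
```

Two vertices are in the same cluster exactly when their labels are equal. Because the edge iterable is consumed once and only the `parent` array is kept, memory use depends on the number of vertices rather than the number of edges.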

To evaluate the quality of the clustering, the authors define two natural distance (or cost) functions between a candidate clustering and a reference clustering: (1) Normalized Edit Distance, which counts the fraction of vertices that must be reassigned to transform one partition into another, and (2) Normalized Information Loss, which measures the loss of mutual information between the two partitions. Both distances are treated as random variables because the reference clustering itself is drawn from a probability distribution over all possible partitions. The main theoretical contribution is a proof that, for either distance, the expected cost of the random‑sampling clustering \(\hat{C}\) satisfies

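\[
\mathbb{E}\big[d(\hat{C}, C)\big] \;\le\; \alpha \cdot \min_{C'} \mathbb{E}\big[d(C', C)\big], \qquad \alpha \in \{2, 3\},
\]

where \(d\) is either distance, \(C\) is the random reference clustering, \(C'\) ranges over all candidate clusterings, and the constant \(\alpha\) is two or three depending on the distance function (the "expected factor of two or three of optimal" stated in the abstract).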
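A short calculation suggests why a constant factor is plausible. It is only a sketch under stated assumptions, not necessarily the argument used in the paper: assume the distance \(d\) is symmetric and satisfies the triangle inequality, and that the output \(\hat{C}\) and the reference \(C\) are independent draws from the same distribution over clusterings. Then for any fixed clustering \(C'\):

\[
\mathbb{E}\big[d(\hat{C}, C)\big]
\;\le\; \mathbb{E}\big[d(\hat{C}, C')\big] + \mathbb{E}\big[d(C', C)\big]
\;=\; 2\,\mathbb{E}\big[d(C', C)\big].
\]

The equality holds because \(\hat{C}\) and \(C\) have the same distribution and \(d\) is symmetric, so both expectations on the right are equal. Choosing \(C'\) to minimize \(\mathbb{E}[d(C', C)]\) gives a factor of two under these assumptions.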
