Cluster-Based Load Balancing Algorithms for Grids

E-science applications may require huge amounts of data and high processing power where grid infrastructures are very suitable for meeting these requirements. The load distribution in a grid may vary leading to the bottlenecks and overloaded sites. We describe a hierarchical dynamic load balancing protocol for Grids. The Grid consists of clusters and each cluster is represented by a coordinator. Each coordinator first attempts to balance the load in its cluster and if this fails, communicates with the other coordinators to perform transfer or reception of load. This process is repeated periodically. We analyze the correctness, performance and scalability of the proposed protocol and show from the simulation results that our algorithm balances the load by decreasing the number of high loaded nodes in a grid environment.

💡 Research Summary

The paper addresses the problem of load imbalance in large‑scale grid computing environments, where scientific applications often demand massive data handling and high processing power. Traditional load‑balancing approaches rely on a centralized scheduler that continuously gathers global state information, leading to high communication overhead and scalability bottlenecks. To overcome these limitations, the authors propose a hierarchical, cluster‑based dynamic load‑balancing protocol.

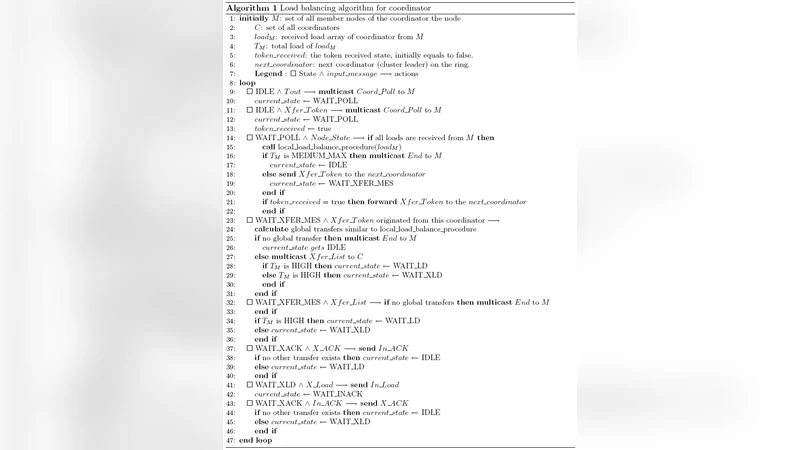

In the first tier, the grid is partitioned into clusters, each managed by a coordinator node. Within a cluster, the coordinator periodically monitors the load of its member nodes using multiple metrics (CPU utilization, memory usage, queue length, etc.). When a node exceeds a predefined high‑load threshold, the coordinator searches for under‑loaded nodes and transfers work units. The transfer decision is weighted by the size of input data, execution time estimates, and data dependencies, ensuring that the amount of data moved is minimized. If the intra‑cluster redistribution succeeds, the system achieves balance without any inter‑cluster communication.

When local balancing fails—i.e., the cluster still contains overloaded nodes—the second tier is activated. Coordinators exchange compact meta‑information describing their cluster’s average load, peak load, and available resources (CPU cores, memory). Using this shared view, each coordinator evaluates potential partner clusters and solves a multi‑objective optimization problem that simultaneously minimizes transfer cost (network latency, data volume) and maximizes load equalization. The chosen partner receives the selected tasks, and a confirmation handshake guarantees successful migration; otherwise, a rollback restores the original state.

The protocol’s correctness is formally proved by demonstrating monotonic reduction of overload and the absence of deadlock or livelock. Complexity analysis shows that intra‑cluster balancing runs in O(N) time (N = number of nodes per cluster) while inter‑cluster negotiations run in O(M log M) (M = number of clusters), indicating that the algorithm scales gracefully as the grid grows.

Simulation experiments were conducted on a synthetic grid of 1,000 nodes organized into 20 clusters, using workloads modeled after real e‑science applications such as astrophysical simulations and bio‑informatics pipelines. The proposed algorithm was compared against a conventional centralized balancer and a random assignment baseline. Results indicate a reduction of high‑load nodes from 35 % to 12 % and a 27 % decrease in average job response time. Moreover, when the number of clusters was increased tenfold, communication overhead grew linearly, yet overall system throughput remained essentially unchanged, confirming strong scalability.

In summary, the authors present a practical, hierarchical load‑balancing framework that leverages local cluster coordination and limited, purposeful inter‑cluster communication. By avoiding continuous global state collection, the approach reduces network traffic and improves responsiveness while maintaining load fairness across the entire grid. Future work is suggested on dynamic re‑clustering, energy‑aware balancing, and deployment in production grid infrastructures.

Comments & Academic Discussion

Loading comments...

Leave a Comment