Multimodal Affective States Recognition Based on Multiscale CNNs and Biologically Inspired Decision Fusion Model

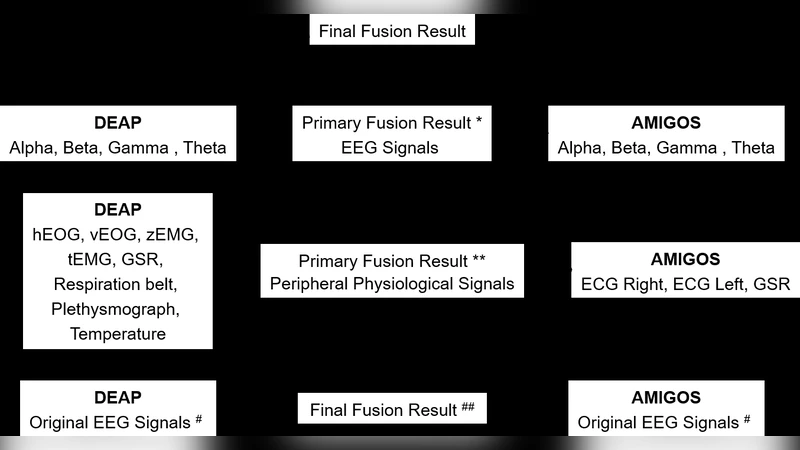

There has been an encouraging progress in the affective states recognition models based on the single-modality signals as electroencephalogram (EEG) signals or peripheral physiological signals in recent years. However, multimodal physiological signals-based affective states recognition methods have not been thoroughly exploited yet. Here we propose Multiscale Convolutional Neural Networks (Multiscale CNNs) and a biologically inspired decision fusion model for multimodal affective states recognition. Firstly, the raw signals are pre-processed with baseline signals. Then, the High Scale CNN and Low Scale CNN in Multiscale CNNs are utilized to predict the probability of affective states output for EEG and each peripheral physiological signal respectively. Finally, the fusion model calculates the reliability of each single-modality signals by the Euclidean distance between various class labels and the classification probability from Multiscale CNNs, and the decision is made by the more reliable modality information while other modalities information is retained. We use this model to classify four affective states from the arousal valence plane in the DEAP and AMIGOS dataset. The results show that the fusion model improves the accuracy of affective states recognition significantly compared with the result on single-modality signals, and the recognition accuracy of the fusion result achieve 98.52% and 99.89% in the DEAP and AMIGOS dataset respectively.

💡 Research Summary

The paper addresses the still‑underexplored problem of multimodal affective‑state recognition by jointly exploiting electroencephalogram (EEG) and peripheral physiological signals (e.g., ECG, EMG, GSR). The authors propose a two‑stage framework. First, raw recordings are baseline‑corrected and, for EEG, the 10‑20 electrode layout is reshaped into a 9 × 9 two‑dimensional grid so that spatial relationships are preserved. Two dedicated convolutional neural networks (CNNs) are then trained: a High‑Scale CNN (HSCNN) processes the EEG data, while a Low‑Scale CNN (LSCNN) processes each peripheral modality. Both networks consist of two convolution‑pooling blocks followed by fully‑connected layers; HSCNN uses 3‑dimensional kernels (time × space × space) to capture spatio‑temporal patterns, whereas LSCNN uses 1‑dimensional kernels for pure temporal features. Drop‑out and ReLU activations mitigate over‑fitting, and each network outputs a four‑class probability vector corresponding to the quadrants of Russell’s arousal‑valence plane (low‑arousal‑low‑valence, high‑arousal‑low‑valence, low‑arousal‑high‑valence, high‑arousal‑high‑valence).

The novelty lies in the decision‑level fusion stage, which is inspired by the Bayesian optimal cue‑integration model from neuroscience. The four affective classes are represented by their mean arousal‑valence coordinates; Euclidean distances between class centroids are computed to quantify inter‑class similarity. For a given modality, the probability vector produced by its CNN is combined with these distances to derive a “classification‑score reliability” for each class pair using a standard normal density function: f(d₍ᵢⱼ₎)= (1/√2π) exp(−d₍ᵢⱼ₎²/2). This yields a 4 × 4 reliability matrix per modality, reflecting how confidently the model can distinguish each pair of classes. The modality with the highest overall reliability for the currently predicted class is selected as the primary decision source, while the other modalities are retained as auxiliary information. In effect, the fusion mimics the brain’s tendency to weight sensory cues proportionally to their precision (inverse variance), thereby giving more influence to the most reliable signal at each inference step.

The authors evaluate the approach on two widely used affective‑state datasets: DEAP (32 participants, 40 video trials) and AMIGOS (40 participants, 45 trials). After mapping self‑reported arousal and valence scores to four quadrants, they train the HSCNN on EEG and separate LSCNNs on each peripheral channel. Single‑modality baselines achieve accuracies in the mid‑80 % range (EEG ≈ 86 %, peripherals ≈ 85 % on DEAP; similar on AMIGOS). When the proposed biologically inspired decision fusion is applied, overall accuracy jumps to 98.52 % on DEAP and 99.89 % on AMIGOS—substantially higher than prior multimodal methods such as MM‑ResLSTM, ECNN, or stacked auto‑encoders, which typically report 70–95 % accuracy.

The paper discusses several strengths: (1) explicit modeling of inter‑class relationships via Euclidean distances, (2) a theoretically grounded reliability measure derived from Bayesian cue integration, (3) modular architecture allowing each modality to use its most suitable CNN, and (4) impressive empirical gains on benchmark datasets. Limitations are also acknowledged: the distance‑based reliability assumes Gaussian class distributions and may be sensitive to how the four quadrants are defined; adding new sensor types would require redesigning the corresponding low‑scale CNN; computational cost of running two CNNs and the fusion step may be prohibitive for real‑time, resource‑constrained devices; and the method has not been tested on datasets with more than four affective categories or on continuous affect estimation.

In conclusion, the study introduces a novel, biologically motivated decision‑fusion mechanism that leverages the precision of each physiological modality, integrates it with deep feature extraction, and achieves near‑perfect affective‑state classification on two standard datasets. Future work suggested includes learning the inter‑class distance matrix directly from data, developing lightweight CNN variants for embedded platforms, and extending the framework to continuous affect prediction or to additional modalities such as facial video or speech.

Comments & Academic Discussion

Loading comments...

Leave a Comment