Design Space Exploration of Approximate Computing Techniques with a Reinforcement Learning Approach

Approximate Computing (AxC) techniques have become increasingly popular for trading accuracy for performance gains in various applications. Selecting the best AxC techniques for a given application is challenging. Among the approaches proposed for exploring the design space, Machine Learning approaches such as Reinforcement Learning (RL) show promising results. In this paper, we propose an RL-based multi-objective Design Space Exploration strategy to find approximate versions of an application that balance accuracy degradation against reductions in power and computation time. Our experimental results show a good trade-off between accuracy degradation and decreased power and computation time for some benchmarks.

💡 Research Summary

The paper addresses the challenge of selecting appropriate Approximate Computing (AxC) techniques for a given application by formulating the design‑space exploration problem as a multi‑objective reinforcement‑learning (RL) task. The authors first model an application as a data‑flow graph (DFG) where each node represents an operation that can be replaced by a set of candidate approximations (e.g., reduced‑precision arithmetic, loop‑skipping, approximate multipliers). The state observed by the RL agent consists of a vector that captures the current approximation levels of all nodes together with estimated accuracy loss, power consumption, execution time, and hardware constraints.
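As a rough illustration of the state described above, the following sketch encodes per-node approximation levels and the current estimates into a flat vector. The names `DfgNode` and `encode_state` and the normalization of levels to [0, 1] are assumptions made for this example, not details taken from the paper; hardware constraints are left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class DfgNode:
    """One operation in the data-flow graph (hypothetical structure)."""
    name: str
    candidates: list  # candidate implementations; index 0 is the exact version
    level: int = 0    # index of the currently selected candidate


def encode_state(nodes, accuracy_loss, power, exec_time):
    """Build a flat state vector: normalized levels, then the three estimates."""
    levels = [n.level / max(len(n.candidates) - 1, 1) for n in nodes]
    return levels + [accuracy_loss, power, exec_time]


nodes = [
    DfgNode("fmul", ["exact", "fp16", "approx_mul"], level=1),
    DfgNode("loop", ["exact", "skip"], level=1),
]
state = encode_state(nodes, accuracy_loss=0.02, power=0.8, exec_time=0.9)
```

Normalizing each level by the number of candidates keeps nodes with different numbers of options on a comparable scale, which is one common choice rather than a requirement.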

The action space consists of “apply approximation j to node i”, and an episode is a sequence of such actions that yields a fully approximated version of the program. After each action the system is profiled (using tools such as McPAT or gem5) to obtain the actual accuracy degradation and resource savings, which are fed back to the agent as part of the reward. The reward function is a weighted linear combination of three objectives: −α·AccuracyLoss + β·PowerReduction + γ·TimeReduction, so that accuracy loss is penalized while power and time savings are rewarded. The weights α, β, γ are user-defined and express the desired trade-off. By maximizing cumulative reward, the agent learns policies that balance the three metrics.
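A minimal sketch of the action space and the scalarized reward might look as follows. It assumes the sign convention under which maximizing the reward penalizes accuracy loss and rewards power and time savings; the function names and the default weights are hypothetical and chosen only for illustration.

```python
def enumerate_actions(candidates_per_node):
    """List every (node i, approximation j) pair as one discrete action."""
    return [
        (i, j)
        for i, count in enumerate(candidates_per_node)
        for j in range(count)
    ]


def reward(accuracy_loss, power_reduction, time_reduction,
           alpha=1.0, beta=0.5, gamma=0.5):
    """Weighted linear reward: penalize accuracy loss, reward savings.

    All three inputs are fractions in [0, 1]; the weights are illustrative.
    """
    return (-alpha * accuracy_loss
            + beta * power_reduction
            + gamma * time_reduction)


actions = enumerate_actions([2, 3])  # two nodes with 2 and 3 candidates
r = reward(accuracy_loss=0.05, power_reduction=0.28, time_reduction=0.31)
```

Raising α relative to β and γ steers the agent toward conservative approximations, while lowering it favors more aggressive savings.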

For policy learning the authors adopt Proximal Policy Optimization (PPO), an on-policy policy-gradient method that clips the probability ratio between the new and old policies to prevent destabilizing updates. To improve sample efficiency they add prioritized experience replay, storing Pareto-optimal trajectories and reusing them during training. This hybrid of on-policy learning and prioritized replay enables the agent to discover high-quality solutions within a relatively modest budget of 10k–20k simulations.
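The clipping idea can be illustrated with the standard PPO clipped surrogate for a single sample. This is a generic sketch of the well-known objective, not the authors' implementation; the clipping range `eps=0.2` is a commonly used default and an assumption here.

```python
def clipped_surrogate(ratio, advantage, eps=0.2):
    """PPO clipped objective for one sample.

    ratio     -- pi_new(a|s) / pi_old(a|s)
    advantage -- estimated advantage of the action taken
    eps       -- clipping range (assumed value, not from the paper)
    """
    clipped_ratio = max(1.0 - eps, min(ratio, 1.0 + eps))
    # Taking the minimum removes any incentive to push the ratio
    # outside [1 - eps, 1 + eps] in the direction that increases the objective.
    return min(ratio * advantage, clipped_ratio * advantage)


gain_capped = clipped_surrogate(ratio=1.5, advantage=1.0)
loss_kept = clipped_surrogate(ratio=0.5, advantage=-1.0)
```

With a positive advantage the gain is capped once the ratio exceeds 1 + eps; with a negative advantage the more pessimistic of the two terms is kept, so the update stays conservative in both cases.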

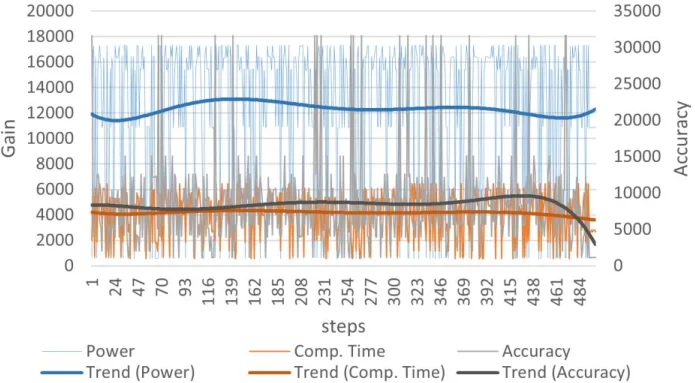

The experimental evaluation covers six benchmarks: three from SPEC CPU2006, two from PolyBench, and a JPEG compression kernel. The RL‑based approach is compared against random search and a genetic algorithm under identical simulation budgets. Results show that the RL method consistently yields a broader Pareto front. For a fixed accuracy‑loss ceiling of 5 %, the RL solutions achieve an average power reduction of 28 % and execution‑time reduction of 31 %, outperforming the baselines. Convergence analysis reveals that after roughly 3 k episodes the reward variance stabilizes and the policy converges to a pattern that mirrors expert intuition—e.g., applying reduced‑precision to floating‑point multiplications and loop‑skipping to compute‑intensive loops.
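To make the Pareto-front comparison concrete, the sketch below filters a set of candidate designs down to the non-dominated ones. The tuple layout (accuracy loss, normalized power, normalized time), with all three minimized, and the sample values are assumptions for illustration, not data from the paper.

```python
def dominates(a, b):
    """True if design a is no worse than b everywhere and better somewhere.

    Each design is (accuracy_loss, power, exec_time); lower is better.
    """
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(points):
    """Keep only the designs that no other design dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


designs = [
    (0.01, 0.90, 0.90),  # nearly exact, small savings
    (0.05, 0.70, 0.70),  # moderate loss, larger savings
    (0.05, 0.80, 0.80),  # dominated by the design above
    (0.10, 0.70, 0.70),  # more loss for the same savings: dominated
]
front = pareto_front(designs)
```

This quadratic filter is adequate for a few thousand designs; a broader front in the sense used above means more, and more widely spread, non-dominated points.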

The authors discuss several limitations. First, the reward is derived from simulation rather than real hardware, so a model‑hardware gap may affect the transferability of the learned policies. Second, the choice of the weighting coefficients α, β, γ heavily influences the outcome; integrating automated hyper‑parameter tuning (e.g., Bayesian optimization) could make the framework more user‑friendly. Third, the current study focuses on static workloads; extending the method to dynamic or streaming applications would require online RL mechanisms and possibly hierarchical policies.

In conclusion, the paper demonstrates that reinforcement learning can serve as an effective multi‑objective optimizer for approximate computing design‑space exploration. By automatically learning approximation strategies that achieve superior trade‑offs between accuracy, power, and latency, the proposed framework advances both the theory and practice of AxC. Future work is suggested in the directions of hardware‑in‑the‑loop feedback, online adaptation, and application to broader domains such as deep‑learning inference and signal‑processing pipelines.