Can everyday AI be ethical? Fairness of Machine Learning Algorithms

The power of automatic decision tools, which combine big data and machine learning algorithms, inspires as much hope as fear. Recently enacted European legislation (the GDPR) and several French laws attempt to regulate the use of these tools. Leaving aside the well-identified problems of data confidentiality and impediments to competition, we focus on the risks of discrimination, the problems of transparency, and the quality of algorithmic decisions. A detailed reading of the legal texts, set against the complexity and opacity of learning algorithms, reveals the need for major technological breakthroughs to detect or reduce the risk of discrimination and to address the right to obtain an explanation of an automatic decision. Since the trust of developers and, above all, of users (citizens, litigants, customers) is essential, algorithms exploiting personal data must be deployed within a strict ethical framework. In conclusion, to meet this need, we list several forms of control to be developed: institutional control, an ethical charter, and an external audit tied to the award of a label.

💡 Research Summary

The paper provides a comprehensive examination of the ethical challenges posed by everyday artificial intelligence, focusing specifically on fairness, discrimination risk, and transparency in machine‑learning‑driven decision‑making systems. It begins by noting the rapid diffusion of automated decision tools across sectors such as finance, employment, and healthcare, and observes that this diffusion has spurred both hope for efficiency gains and fear of unintended harms. Recent legal developments, most notably the European General Data Protection Regulation (GDPR) and recent French laws regulating the use of these tools, form the legal backdrop against which the analysis is framed.

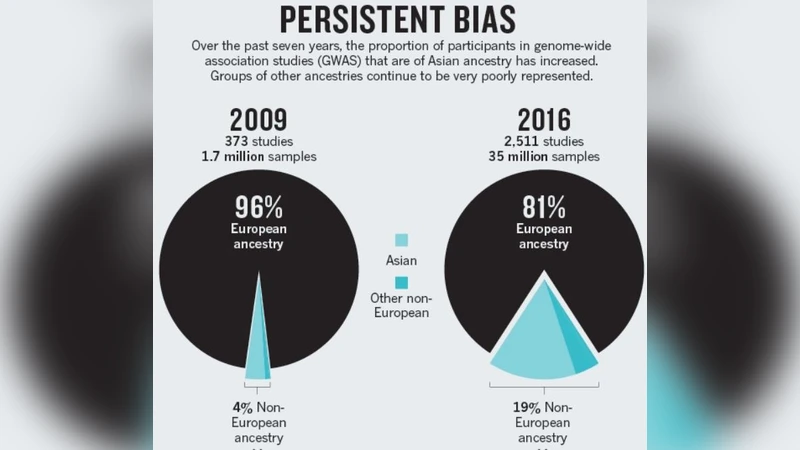

The authors set aside well‑known concerns about data confidentiality and market competition, choosing instead to concentrate on three interrelated problems. First, the risk of discrimination: training data often embed historical biases that can be reproduced or amplified by statistical models, leading to adverse outcomes for protected groups (e.g., gender, race, age). Second, the opacity of modern learning algorithms, especially deep neural networks, which makes it difficult for humans to understand why a particular decision was made. Third, the quality of algorithmic outcomes, which is closely tied to the previous two issues because biased or opaque models can generate unreliable or unjustified predictions.

To address these challenges, the paper proposes a two‑pronged technical strategy. On the statistical side, it recommends the systematic use of fairness metrics—such as demographic parity, equalized odds, disparate impact, and group‑specific error rates—to detect and quantify bias before deployment. On the interpretability side, it advocates for Explainable AI (XAI) techniques, including model‑agnostic methods like LIME and SHAP, as well as counterfactual explanations that answer “what‑if” questions about individual decisions. These tools are positioned as essential for satisfying GDPR’s “right to explanation” and for providing affected individuals with understandable reasons for automated outcomes.
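The group metrics listed above can be computed directly from a model's predictions. The sketch below is a minimal illustration, not the paper's code: it assumes binary true labels and predictions coded 0/1, a single sensitive attribute, and a designated protected and reference group. The function names `group_rates` and `fairness_report` are hypothetical. Disparate impact is taken as the ratio of selection rates (protected over reference), and the equalized-odds gap as the larger of the true-positive-rate and false-positive-rate differences, which is one common way to summarize that criterion.

```python
from typing import Dict, Hashable, List, Sequence


def _mean(values: List[int]) -> float:
    """Average of 0/1 values; NaN when the subset is empty."""
    return sum(values) / len(values) if values else float("nan")


def group_rates(
    y_true: Sequence[int], y_pred: Sequence[int], group: Sequence[Hashable]
) -> Dict[Hashable, Dict[str, float]]:
    """Per-group selection rate, true/false positive rates and error rate."""
    stats = {}
    for g in set(group):
        idx = [i for i, gi in enumerate(group) if gi == g]
        stats[g] = {
            # Share of the group receiving the favourable decision (pred == 1).
            "selection_rate": _mean([y_pred[i] for i in idx]),
            # Among actual positives, share predicted positive.
            "tpr": _mean([y_pred[i] for i in idx if y_true[i] == 1]),
            # Among actual negatives, share wrongly predicted positive.
            "fpr": _mean([y_pred[i] for i in idx if y_true[i] == 0]),
            "error_rate": _mean([int(y_pred[i] != y_true[i]) for i in idx]),
        }
    return stats


def fairness_report(y_true, y_pred, group, protected, reference) -> Dict[str, float]:
    """Compare a protected group against a reference group."""
    s = group_rates(y_true, y_pred, group)
    p, r = s[protected], s[reference]
    di = (
        p["selection_rate"] / r["selection_rate"]
        if r["selection_rate"] > 0
        else float("nan")
    )
    return {
        "demographic_parity_diff": p["selection_rate"] - r["selection_rate"],
        "disparate_impact": di,
        "equalized_odds_gap": max(abs(p["tpr"] - r["tpr"]), abs(p["fpr"] - r["fpr"])),
        "error_rate_gap": p["error_rate"] - r["error_rate"],
    }


if __name__ == "__main__":
    y_true = [1, 0, 1, 0, 1, 0, 1, 0]
    y_pred = [1, 0, 0, 0, 1, 1, 1, 0]
    group = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(fairness_report(y_true, y_pred, group, protected="A", reference="B"))
```

On this toy input, group A is selected at a rate of 0.25 and group B at 0.75, so the disparate impact is about 0.33, well below the 0.8 threshold of the US "four-fifths rule" often used as a reference point, even though both groups have the same error rate (0.25). This is why several metrics are usually reported together rather than a single score.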

Beyond technology, the authors argue that a robust ethical framework must be institutionalized. They outline four complementary governance mechanisms: (1) the creation of an independent ethics board at the organizational or sectoral level to oversee AI projects; (2) the adoption of an internal ethical charter that codifies commitments to fairness, transparency, and accountability; (3) regular external audits by certified third parties that evaluate models against predefined fairness and explainability criteria; and (4) a labeling scheme that publicly displays key information about an algorithm’s data sources, modeling approach, and bias‑assessment results. The label functions as a “quality seal,” enabling regulators, consumers, and other stakeholders to quickly assess the trustworthiness of a system.
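An external audit against predefined criteria, followed by the issue of a public label, could be sketched as a small data record plus a pass/fail check. Everything in the sketch below is illustrative: the names `AlgorithmLabel` and `audit`, the label fields, and the thresholds are assumptions, not the scheme proposed in the paper. The 0.8 floor on disparate impact mirrors the four-fifths rule; the 0.1 ceiling on the equalized-odds gap is an arbitrary placeholder that an actual certification body would have to set. The metric values are assumed to come from a prior bias assessment of the model.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List

# Hypothetical audit criteria; a real labeling scheme would define its own.
THRESHOLDS = {
    "disparate_impact_min": 0.8,    # inspired by the "four-fifths rule"
    "equalized_odds_gap_max": 0.1,  # arbitrary placeholder tolerance
}


@dataclass
class AlgorithmLabel:
    """Public summary of an audited system (illustrative fields only)."""
    system_name: str
    data_sources: List[str]
    modeling_approach: str
    bias_metrics: Dict[str, float]
    checks: Dict[str, bool] = field(default_factory=dict)

    @property
    def passed(self) -> bool:
        # Granted only if at least one check ran and every check passed.
        return bool(self.checks) and all(self.checks.values())

    def to_json(self) -> str:
        record = asdict(self)
        record["passed"] = self.passed
        return json.dumps(record, indent=2, sort_keys=True)


def audit(system_name, data_sources, modeling_approach, bias_metrics,
          thresholds=THRESHOLDS) -> AlgorithmLabel:
    """Check reported bias metrics against the thresholds and build a label."""
    checks = {
        # NaN metrics compare as False, so missing data fails the check.
        "disparate_impact": bias_metrics["disparate_impact"]
        >= thresholds["disparate_impact_min"],
        "equalized_odds": bias_metrics["equalized_odds_gap"]
        <= thresholds["equalized_odds_gap_max"],
    }
    return AlgorithmLabel(system_name, list(data_sources), modeling_approach,
                          dict(bias_metrics), checks)


if __name__ == "__main__":
    label = audit(
        system_name="loan-scoring (hypothetical)",
        data_sources=["past loan applications (hypothetical)"],
        modeling_approach="logistic regression",
        bias_metrics={"disparate_impact": 0.85, "equalized_odds_gap": 0.12},
    )
    print(label.to_json())
```

Here the system clears the disparate-impact check but fails the equalized-odds check, so `passed` is false and no label would be issued. Publishing the raw metrics alongside the verdict lets regulators and users see how close a system came to each threshold.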

The paper stresses that trust is the linchpin linking developers, users, and regulators. Developers must embed fairness checks into the model‑building pipeline, from data collection through feature engineering to post‑deployment monitoring. Users—citizens, customers, litigants—must be granted clear, accessible explanations of how decisions affecting them were derived, thereby operationalizing the legal right to explanation. The authors contend that such transparency not only fulfills legal obligations but also mitigates reputational risk and fosters broader societal acceptance of AI.
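For the simplest case, a linear scoring model, a counterfactual ("what-if") explanation of a rejection can be computed in closed form: with score s = w·x + b and approval when s >= 0, changing feature j alone by (-s + eps) / w_j moves the score to eps, just past the decision boundary. The sketch below assumes such a model; the feature names, weights, and the `actionable` list (features a person could realistically change) are hypothetical. It considers one feature at a time, whereas general counterfactual methods search over several features at once by optimization.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass
class Counterfactual:
    feature: str
    current_value: float
    required_value: float  # value that would flip the decision, all else fixed


def single_feature_counterfactuals(
    weights: Dict[str, float],
    bias: float,
    x: Dict[str, float],
    actionable: Sequence[str],
    eps: float = 1e-6,
) -> List[Counterfactual]:
    """What-if explanations for a linear score s = w.x + b (approve if s >= 0)."""
    score = bias + sum(w * x[f] for f, w in weights.items())
    if score >= 0:
        return []  # already approved: no rejection to explain
    results = []
    for f in actionable:
        w = weights.get(f, 0.0)
        if w == 0:
            continue  # this feature cannot move the score
        delta = (-score + eps) / w  # new score becomes exactly eps
        results.append(Counterfactual(f, x[f], x[f] + delta))
    # Smallest change first; only meaningful if features share a comparable scale.
    return sorted(results, key=lambda c: abs(c.required_value - c.current_value))


if __name__ == "__main__":
    weights = {"income": 1.0, "debt": -2.0}  # hypothetical model
    applicant = {"income": 3.0, "debt": 2.0}
    for cf in single_feature_counterfactuals(weights, bias=-1.0, x=applicant,
                                             actionable=["income", "debt"]):
        print(f"If {cf.feature} were {cf.required_value:.2f} "
              f"instead of {cf.current_value:.2f}, the application would be approved.")
```

For the hypothetical applicant (score -2), the sketch reports that lowering debt from 2 to about 1, or raising income from 3 to about 5, would each flip the decision. Ranking by raw change assumes the features are expressed on comparable (for example, standardized) scales; otherwise the ordering depends on units.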

In conclusion, the authors acknowledge that current legal texts and technical capabilities are not yet perfectly aligned, but they maintain that a multi‑layered approach—combining statistical fairness assessments, XAI methods, and strong institutional oversight—can substantially reduce discrimination risk and enhance explainability. By implementing ethical charters, external audits, and algorithmic labeling, everyday AI can evolve from a source of uncertainty to a trustworthy, ethically grounded component of modern life.
