Nanosecond anomaly detection with decision trees and real-time application to exotic Higgs decays

We present an interpretable implementation of the autoencoding algorithm, used as an anomaly detector, built with a forest of deep decision trees on field-programmable gate arrays (FPGAs). Scenarios at the Large Hadron Collider at CERN are considered, for which the autoencoder is trained using known physical processes of the Standard Model. The design is then deployed in real-time trigger systems for anomaly detection of unknown physical processes, such as the detection of rare exotic decays of the Higgs boson. The inference is made with a latency value of 30 ns at percent-level resource usage using the Xilinx Virtex UltraScale+ VU9P FPGA. Our method offers anomaly detection at low latency values for edge AI users with resource constraints. Real-time inference of collisions using unsupervised AI for discovery is of interest in particle physics. Here, the authors present the training and efficient implementation of a decision tree-based autoencoder used as an anomaly detector that executes in 30 ns on an FPGA for use in edge computing.

💡 Research Summary

The paper introduces a novel, interpretable anomaly‑detection system built around a decision‑tree‑based autoencoder (AE) that can be deployed on field‑programmable gate arrays (FPGAs) with a latency of only 30 ns. The authors target the real‑time trigger environment of the Large Hadron Collider (LHC), where collisions occur at a 40 MHz rate (25 ns between bunches) and only a tiny fraction of events can be retained for offline analysis. Traditional unsupervised AI approaches for anomaly detection at the LHC have largely relied on deep neural networks (DNNs). While DNN‑based autoencoders can learn a compact representation of Standard Model (SM) processes and flag out‑of‑distribution (OOD) events, their implementation on FPGAs typically incurs latencies of 80–480 ns and consumes 10–30 % of the device’s logic resources, making them unsuitable for the first‑level (Level‑1) trigger.

Algorithmic design

The proposed AE replaces the neural encoder/decoder with a forest of deep decision trees (DDTs). Each tree has a maximum depth D = 4 and performs a series of binary splits on the input feature vector x (in the physics case eight variables describing two photons and two jets). Splits are not hand‑crafted; instead, the algorithm samples the marginal probability density functions (PDFs) of each feature, selects the feature with the highest weighted PDF, and draws a threshold from that feature’s PDF. This “weighted randomness” yields a different split variable and threshold for each node, ensuring that trees in the forest are diverse. The leaf index (a B‑bit integer) produced by each tree constitutes one component of the latent vector w. Decoding is performed by feeding w back through the same forest, where each leaf’s stored median of the training samples reconstructs an approximation x̂ of the original input.
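The encode and decode steps described above can be illustrated with a minimal Python sketch. It is not the authors' training code: the spread-based feature weighting, the dictionary node layout, and the names `build_tree`, `encode`, and `decode` are assumptions made for illustration. Only the depth of 4, the randomized thresholds drawn from the feature distribution, and the per-leaf medians follow the description.

```python
import random
from statistics import median


def _make_leaf(samples, leaves):
    # A leaf stores the per-feature median of the training samples that reached it.
    leaves.append([median(column) for column in zip(*samples)])
    return {"leaf": len(leaves) - 1}


def build_tree(samples, depth, rng, leaves):
    """Grow one tree; `leaves` collects the leaf medians, indexed by leaf id."""
    n_features = len(samples[0])
    # Assumed weighting: features with a wider spread in this node are picked more often.
    spreads = [max(column) - min(column) for column in zip(*samples)]
    if depth == 0 or sum(spreads) == 0:
        return _make_leaf(samples, leaves)
    feature = rng.choices(range(n_features), weights=spreads)[0]
    # Picking a random training value draws the threshold from the empirical marginal PDF.
    threshold = rng.choice(samples)[feature]
    left = [s for s in samples if s[feature] < threshold]
    right = [s for s in samples if s[feature] >= threshold]
    if not left:  # threshold fell on the minimum, so stop splitting here
        return _make_leaf(samples, leaves)
    return {
        "feature": feature,
        "threshold": threshold,
        "left": build_tree(left, depth - 1, rng, leaves),
        "right": build_tree(right, depth - 1, rng, leaves),
    }


def encode(tree, x):
    """Walk the tree with simple comparisons and return the leaf id (latent component)."""
    node = tree
    while "leaf" not in node:
        branch = "left" if x[node["feature"]] < node["threshold"] else "right"
        node = node[branch]
    return node["leaf"]


def decode(leaves, leaf_id):
    """Reconstruct the input as the stored median of the addressed leaf."""
    return leaves[leaf_id]


# Toy two-feature training set (synthetic numbers, not physics data).
rng = random.Random(0)
training = [(rng.gauss(50, 10), rng.gauss(1, 0.3)) for _ in range(500)]

forest = []
for _ in range(8):
    leaves = []
    forest.append((build_tree(training, 4, rng, leaves), leaves))

x = training[0]
latent = [encode(tree, x) for tree, _ in forest]
reconstructions = [decode(leaves, idx) for (_, leaves), idx in zip(forest, latent)]
```

Each tree contributes one integer to `latent`, and decoding is a table lookup, which is why the whole procedure reduces to comparators and small memories in hardware.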

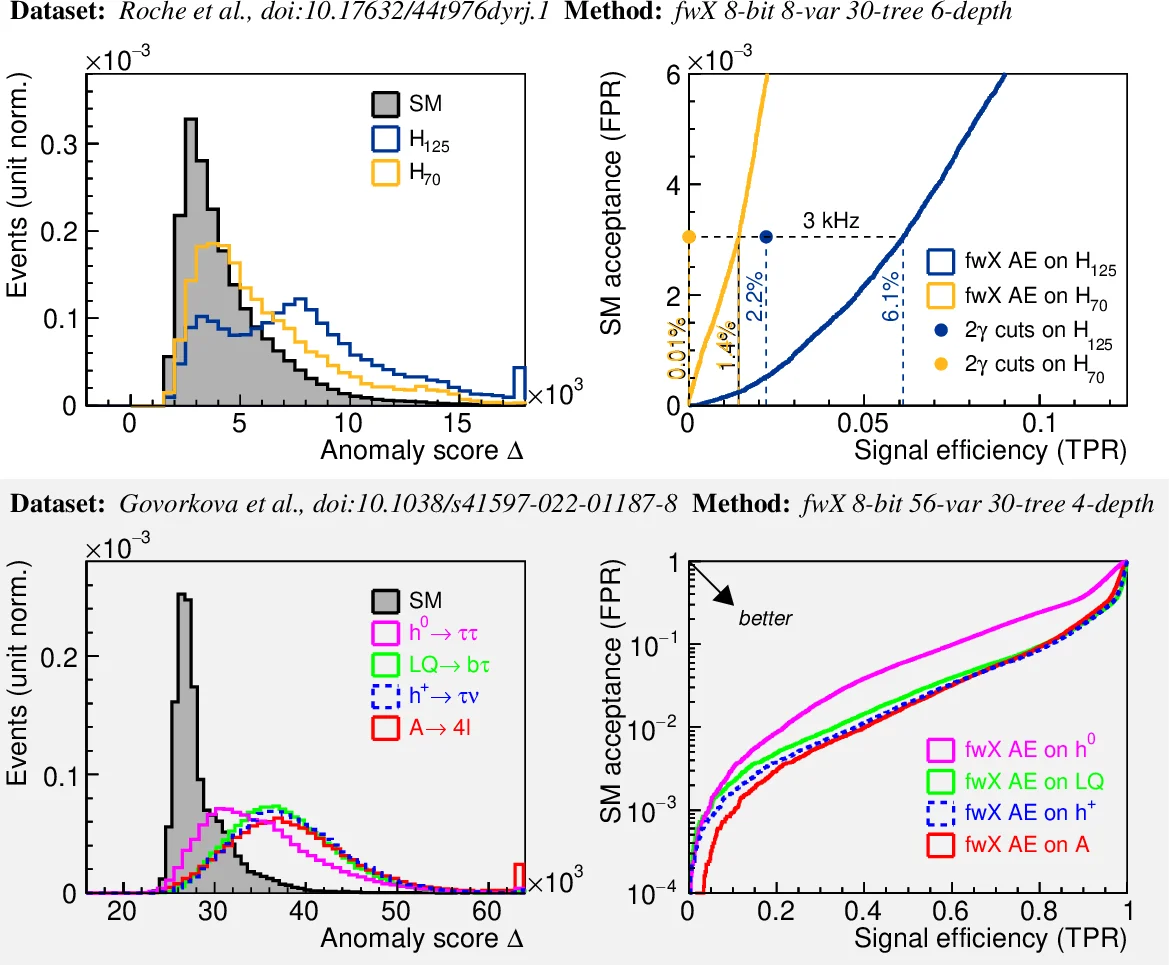

The anomaly score Δ(x) is defined as the sum over all trees of the L₁ distance between the original and reconstructed feature values. Because the AE is trained exclusively on SM events, SM inputs produce small Δ, while BSM (beyond‑the‑Standard‑Model) events—such as exotic Higgs decays—produce large Δ, enabling a simple threshold‑based trigger decision.
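Read literally, the score is Δ(x) = Σ over trees t and features i of |xᵢ − x̂ᵢ⁽ᵗ⁾|. A minimal sketch of that sum and of the threshold decision follows; the function names and the example numbers are hypothetical.

```python
def anomaly_score(x, reconstructions):
    """Sum over trees of the L1 distance between x and each tree's reconstruction."""
    return sum(
        abs(xi - ri)
        for reconstruction in reconstructions
        for xi, ri in zip(x, reconstruction)
    )


def is_anomalous(x, reconstructions, threshold):
    """Trigger decision: fire when the score exceeds the chosen threshold."""
    return anomaly_score(x, reconstructions) > threshold


# Two trees, two features: the first tree reconstructs x exactly, the second does not.
x = [1.0, 2.0]
reconstructions = [[1.0, 2.0], [0.0, 4.0]]
score = anomaly_score(x, reconstructions)  # |1-1| + |2-2| + |1-0| + |2-4|
```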

FPGA implementation

The authors map the entire forest onto a Xilinx Virtex UltraScale+ VU9P device. All comparison operations for each tree are fully parallelized, and the encoding, decoding, and scoring stages are pipelined so that a complete inference finishes within 30 ns, which corresponds to roughly 15 clock cycles at ≈2 ns per FPGA clock. Resource utilization is modest: roughly 2 % of lookup tables (LUTs) and 1 % of block RAM (BRAM), far below the 10–30 % typical of DNN implementations. Power consumption stays in the tens of milliwatts, making the design attractive for edge‑AI scenarios with strict energy budgets.
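The timing figures quoted in this summary can be related with a short back-of-the-envelope calculation. The sketch below uses only the 30 ns latency, the ≈2 ns clock period, and the 25 ns bunch spacing; the variable names are illustrative, and the interpretation (a pipeline that accepts a new event while the previous one is still being processed) is an assumption, not a statement about the authors' firmware.

```python
import math

latency_ns = 30.0        # end-to-end inference latency quoted in the summary
clock_period_ns = 2.0    # approximate FPGA clock period quoted in the summary
bunch_spacing_ns = 25.0  # 40 MHz collision rate

# Number of clock cycles spanned by one inference.
cycles_per_inference = latency_ns / clock_period_ns

# Events that must be in the pipeline at once to keep up with every bunch crossing.
events_in_flight = math.ceil(latency_ns / bunch_spacing_ns)

print(f"{cycles_per_inference:.0f} cycles per inference, "
      f"{events_in_flight} events in flight")
```

Because the latency exceeds the bunch spacing, keeping up with every crossing relies on pipelining rather than on finishing one event before the next arrives.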

Physics benchmark

Two exotic Higgs‑decay scenarios are used to demonstrate physics performance:

- H → aa → γγ jj with pseudoscalar masses mₐ = 10 GeV and 70 GeV (the “H₁₂₅” benchmark).

- H₇₀ → aa → γγ jj with mₐ = 5 GeV and 50 GeV (an alternate low‑mass benchmark).

Monte‑Carlo samples are generated with MadGraph5_aMC@NLO (hard process), Pythia 8 (showering), and Delphes 3 (detector simulation) using the CMS detector card. The training set consists of 0.5 M SM γγ jj events, while testing includes 0.5 M SM background and 0.1 M signal events for each benchmark. Input features are the transverse momenta of the two leading photons and two leading jets, the invariant masses m_γγ and m_jj, and the angular separations ΔR_γγ and ΔR_jj.
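For readers unfamiliar with these observables, the sketch below computes the invariant mass and angular separation of a pair of objects from transverse momentum, pseudorapidity, and azimuth. It uses the standard massless-particle formula, which is exact for photons and only an approximation for jets; the function names and the ordering of the feature vector are assumptions.

```python
import math


def delta_phi(phi1, phi2):
    """Azimuthal difference wrapped into [-pi, pi)."""
    return (phi1 - phi2 + math.pi) % (2 * math.pi) - math.pi


def delta_r(eta1, phi1, eta2, phi2):
    """Angular separation: sqrt(d_eta^2 + d_phi^2)."""
    return math.hypot(eta1 - eta2, delta_phi(phi1, phi2))


def invariant_mass(pt1, eta1, phi1, pt2, eta2, phi2):
    """Invariant mass of two massless objects (pt in GeV gives mass in GeV)."""
    m_squared = 2.0 * pt1 * pt2 * (math.cosh(eta1 - eta2) - math.cos(phi1 - phi2))
    return math.sqrt(max(m_squared, 0.0))


def feature_vector(photon1, photon2, jet1, jet2):
    """Each object is a (pt, eta, phi) tuple; returns the eight inputs (assumed order)."""
    return [
        photon1[0], photon2[0], jet1[0], jet2[0],
        invariant_mass(*photon1, *photon2),
        invariant_mass(*jet1, *jet2),
        delta_r(photon1[1], photon1[2], photon2[1], photon2[2]),
        delta_r(jet1[1], jet1[2], jet2[1], jet2[2]),
    ]


# Two back-to-back 50 GeV photons at eta = 0, plus two made-up jets.
features = feature_vector((50.0, 0.0, 0.0), (50.0, 0.0, math.pi),
                          (40.0, 0.5, 1.0), (30.0, -0.5, -2.0))
```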

The AE’s Δ distribution for SM events is narrow and centered near zero; for signal events it shifts to significantly larger values. By selecting a Δ threshold that retains ~90 % of signal, the background rejection exceeds 70 %. Importantly, the authors also study robustness to signal contamination: training with up to 5 % signal mixed into the SM sample degrades performance only marginally, indicating that the method tolerates imperfect training data.
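The working-point selection described here can be sketched as follows: pick the largest threshold that still keeps the requested fraction of signal, then count how much background falls below it. The helper names and the toy scores are hypothetical and do not reproduce the paper's numbers.

```python
import math


def threshold_for_signal_efficiency(signal_scores, efficiency):
    """Largest threshold t such that at least `efficiency` of signal has score >= t."""
    ranked = sorted(signal_scores, reverse=True)
    # Small epsilon guards against floating-point error in efficiency * n.
    keep = max(1, math.ceil(efficiency * len(ranked) - 1e-9))
    return ranked[keep - 1]


def background_rejection(background_scores, threshold):
    """Fraction of background events with a score below the threshold."""
    rejected = sum(1 for score in background_scores if score < threshold)
    return rejected / len(background_scores)


# Toy anomaly scores: signal sits at larger values than background.
signal = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
background = [0.0, 0.5, 1.0, 1.5, 3.0]

cut = threshold_for_signal_efficiency(signal, 0.9)
rejection = background_rejection(background, cut)
```

Scanning `efficiency` from 0 to 1 with these two functions traces out the signal-efficiency versus background-rejection curve used to compare detectors.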

Comparison with neural‑network autoencoders

A previously published FPGA‑based neural‑network AE (8‑bit quantization) achieved 120 ns latency and required ~15 % of the FPGA’s LUTs. In contrast, the decision‑tree AE reaches 30 ns latency with <2 % LUT usage while delivering comparable or better signal‑efficiency vs. background‑rejection curves. The tree‑based approach also avoids the need for costly matrix‑multiply units; all operations reduce to simple comparators and multiplexers, which are naturally fast on digital logic.

System integration and outlook

Because the Level‑1 trigger must make a decision within a few hundred nanoseconds, the 30 ns latency of the tree‑based AE comfortably fits within the budget, leaving headroom for additional logic (e.g., calorimeter sums, muon primitives). The authors argue that embedding this AE directly into the Level‑1 pipeline would allow the trigger to flag rare BSM signatures that would otherwise be discarded, feeding them to the High‑Level Trigger (HLT) for full‑event reconstruction. The low power draw also makes the design compatible with the upcoming HL‑LHC upgrades, where power density on the front‑end electronics will be a limiting factor.

Limitations and future work

The current implementation uses depth‑4 trees and a forest of about eight trees, which suffices for the two‑object, eight‑feature problem studied. More complex signatures (e.g., multi‑object final states, high‑dimensional feature spaces) may require deeper trees or larger forests, potentially increasing latency or resource usage. Quantization effects were examined with 8‑bit representations; certain precision‑critical observables (e.g., invariant masses near the Higgs pole) might benefit from 10‑ to 12‑bit resolution. Finally, adapting the design to the distinct trigger architectures of ATLAS and CMS will require custom interfacing and possibly different resource allocations.
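To make the bit-width remark concrete, the sketch below applies uniform quantization to a mass value and compares the step size at 8 and 12 bits. The 0 to 256 GeV range, the uniform scheme, and the function names are assumptions chosen for round numbers; the summary does not specify how the inputs were quantized.

```python
def quantize(value, low, high, bits):
    """Map a value in [low, high) to an integer code with `bits` bits."""
    levels = 1 << bits
    step = (high - low) / levels
    code = int((value - low) / step)
    return min(max(code, 0), levels - 1)  # clamp out-of-range inputs


def dequantize(code, low, high, bits):
    """Return the centre of the quantization bin addressed by `code`."""
    step = (high - low) / (1 << bits)
    return low + (code + 0.5) * step


mass = 125.3  # GeV, an illustrative value near the Higgs mass
for bits in (8, 12):
    step = 256.0 / (1 << bits)
    restored = dequantize(quantize(mass, 0.0, 256.0, bits), 0.0, 256.0, bits)
    print(f"{bits} bits: step {step} GeV, error {abs(restored - mass):.5f} GeV")
```

Under these assumptions each extra bit halves the bin width, at the cost of wider comparators in every tree node that uses the variable.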

Conclusion

The paper demonstrates that a decision‑tree‑based autoencoder can be trained on Standard Model data, implemented on a modern FPGA, and operated with a 30 ns inference time, enabling real‑time, low‑latency anomaly detection in the LHC trigger system. Compared with neural‑network approaches, it offers superior latency, resource efficiency, and power consumption while maintaining strong physics performance and robustness to training‑data contamination. This work opens a path toward deploying interpretable, edge‑AI anomaly detectors not only in high‑energy physics but also in any application where ultra‑fast, low‑power inference on constrained hardware is required.
