Rethinking Learning-based Demosaicing, Denoising, and Super-Resolution Pipeline

Imaging is usually a mixture problem of incomplete color sampling, noise degradation, and limited resolution. This mixture problem is typically solved by a sequential solution that applies demosaicing (DM), denoising (DN), and super-resolution (SR) s…

Authors: Guocheng Qian, Yuanhao Wang, Jinjin Gu

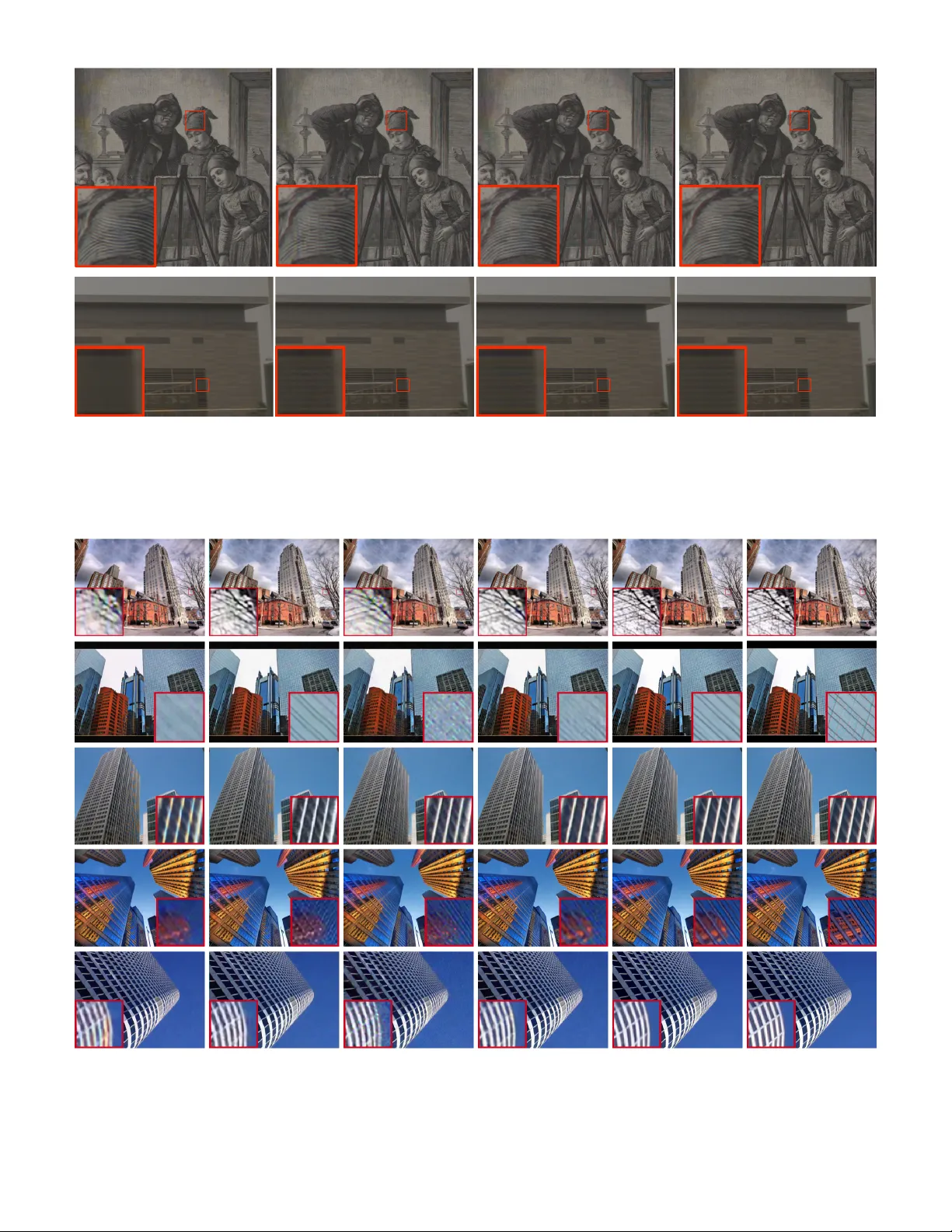

Guocheng Qian¹*, Yuanhao Wang¹*, Jinjin Gu², Chao Dong³,⁴, Wolfgang Heidrich¹, Bernard Ghanem¹, Jimmy S. Ren⁵,⁶

Abstract: Imaging is usually a mixture problem of incomplete color sampling, noise degradation, and limited resolution. This mixture problem is typically solved by a sequential solution that applies demosaicing (DM), denoising (DN), and super-resolution (SR) sequentially in a fixed and predefined pipeline (execution order of tasks), DM → DN → SR. The most recent work on image processing focuses on developing more sophisticated architectures to achieve higher image quality. Little attention has been paid to the design of the pipeline, and it is still not clear how significant the pipeline is to image quality. In this work, we comprehensively study the effects of pipelines on the mixture problem of learning-based DN, DM, and SR, in both sequential and joint solutions. On the one hand, in sequential solutions, we find that the pipeline has a non-trivial effect on the resulting image quality. Our suggested pipeline DN → SR → DM yields consistently better performance than other sequential pipelines in various experimental settings and benchmarks. On the other hand, in joint solutions, we propose an end-to-end Trinity Pixel Enhancement NETwork (TENet) that achieves state-of-the-art performance for the mixture problem. We further present a novel and simple method that can integrate a certain pipeline into a given end-to-end network by providing intermediate supervision using a detachable head. Extensive experiments show that an end-to-end network with the proposed pipeline can attain only a consistent but insignificant improvement. Our work indicates that the investigation of pipelines is applicable in sequential solutions, but is not very necessary in end-to-end networks.
Code, models, and our contributed PixelShift200 dataset are available at https://github.com/guochengqian/TENet.

Index Terms: Image Demosaicing, Image Denoising, Image Super-resolution, ISP, Deep Learning

Affiliations: ¹ KAUST, ² The University of Sydney, ³ Shanghai AI Laboratory, ⁴ Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences, ⁵ SenseTime Research, ⁶ Qing Yuan Research Institute, Shanghai Jiao Tong University. * Equal contribution.

1 Introduction

Obtaining high-quality, high-resolution images has attracted increasing attention. Acquiring such images is difficult in practice due to hardware limitations, especially for mobile devices. First, most digital cameras capture images using a single image sensor overlaid with a color filter array (e.g. Bayer pattern), which causes incomplete color sampling, i.e. resulting in mosaic images instead of RGB images. Second, images taken directly from the image sensor are inevitably noisy. Third, typical mobile devices are equipped with limited pixel numbers and lenses with fixed and short focal lengths, which makes imaging of distant or small objects challenging and limits image resolution. The real-shot image captured by an iPhone X shown in Fig. 1 shows unnatural colorization, noise, and loss of detail due to these limitations. Demosaicing (DM) [1], denoising (DN) [2] and super-resolution (SR) [3] are the three fundamental tasks that have been studied and included in image processing pipelines (ISPs, see footnote 1) to resolve the hardware limitations mentioned above and to improve image quality.

Deep learning technologies [4], [5], [6] have recently led to breakthrough progress in DN, DM, and SR algorithms, and have spawned commercial products using learning-based image processing such as modern mobile phones (iPhone, Google Pixel, etc.).

Footnote 1. ISP can be the abbreviation for image processing pipeline or image signal processor. We use these terms interchangeably.
Despite the achievement of deep learning in each task, imaging is usually a mixture problem of incomplete color sampling, noise degradation, and resolution limitation. The combination of DN, DM, and SR is more common and more complicated than any single problem in practical application. Previous methods handle the mixture problem through a sequential solution that performs DM, DN, and SR independently in a predefined and fixed order: DM → DN → SR [7], i.e. first DM, followed by DN, and then SR. Recent methods instead show a trend in performing DN and SR in mosaic space before DM [8], [9], [10]. However, these works do not consider the important but under-explored mixture problem of DN, DM, and SR. Furthermore, it is not clear how significant the execution order of tasks (i.e. pipeline) is to the performance of this mixture problem.

In this paper, we analyze the characteristics of DN, DM, and SR and the behaviors of their interactions. We find that issues caused by interactions between tasks occur when the corresponding algorithms are applied sequentially to solve the mixture problem. For example, super-resolving a demosaiced image will magnify artifacts (e.g. moiré) introduced by the DM algorithm (see Fig. 4). We propose a novel image processing pipeline, DN → SR → DM, for sequential solutions. We find that the proposed pipeline can alleviate problems caused by task interactions to a great extent. Extensive experiments of learning-based DN, DM, and SR show that our pipeline can consistently improve image quality of sequential solutions, regardless of architecture, dataset, and SR factor (see Sec. 6).

We further study the effect of pipelines in joint solutions (end-to-end networks) for the mixture problem. We first propose a Trinity Enhancement Network (TENet++, see footnote 2) to address the mixture problem.
We then present a simple yet effective way to make an end-to-end network follow a certain pipeline by providing intermediate supervision. Through experiments on TENet++ and other architectures [11], [12], [13], we notice marginal but consistent improvements after inserting the proposed pipeline. Our studies suggest that the investigation of pipelines in end-to-end networks can improve the performance but is not very necessary considering the insignificant improvement.

Contributions:
(1) We are the first to propose and analyze the mixture problem of learning-based denoising, demosaicing, and super-resolution.
(2) We suggest a new pipeline, DN → SR → DM, for solving the mixture problem of DN, DM, and SR. Extensive experiments show that the proposed pipeline can consistently improve performance for sequential solutions.
(3) We propose an end-to-end network named Trinity Pixel Enhancement Network (TENet++) that achieves SOTA performance for joint DN, DM, and SR.
(4) We show how to make an end-to-end network follow a certain pipeline. We indicate an insignificant effect of pipelines on end-to-end networks.
(5) We notice that there is a lack of full-color sampled datasets in the literature. We contribute a new real-world dataset, namely PixelShift200, whose images contain fully sampled red, green, and blue channels without the need for color interpolation. We demonstrate the benefits of PixelShift200 in training and evaluating raw image processing tasks.

2 Related Work

Demosaicing. Digital cameras take subsampled color measurements at alternating pixel locations. The resulting images of the subsampled measurements are named mosaic images. The mosaic images are then interpolated to create full-color images with per-pixel red, green, and blue information by a so-called demosaicing (DM) process. Early DM methods are model-based [14], [15], [16], which focus on the construction of filters (e.g.
edge-aware interpolation) and image priors (e.g. chrominance continuity). Model-based methods are still commonly used in camera systems and software; e.g. the image processing library DCRaw utilizes [15]. Pioneering works also explored data-driven methods [17], [18] that learn a mapping from a raw image to an RGB image. Recently, deep learning has achieved overwhelming performance in DM. [4] presented DemosaicNet, a deep convolutional neural network (CNN)-based DM algorithm that outperforms the previous methods by a large margin. Following DemosaicNet, many works [19], [20] design different architectures to improve the demosaicing quality.

Footnote 2. We add ++ after TENet to avoid confusion with the outdated architecture used in the previous arXiv version of this paper.

Denoising. Noise is inevitable during the imaging process. Early denoising (DN) methods exploited image priors, such as content variance [21], self-similarity [22], and sparse representation [23] for image denoising. The most recent denoisers are entirely data-driven, consisting of CNNs trained to recover noise-free targets from noisy images [5], [24]. Despite the effectiveness of these learning-based denoisers on synthesized benchmarks [25], they generalize poorly to real-shot images due to their oversimplified assumption that noise is additive, white, and Gaussian [26]. While the noise pattern of a color image is complex because of nonlinear image processing (DM, color mapping, and compression), the noise patterns on raw images are well studied. [27] characterized how sensor noise primarily comes from two sources: Poisson noise (shot noise) and Gaussian noise (read noise). To improve the generalization ability of deep denoisers, [9], [28] proposed denoising on raw images using Poisson-Gaussian noise, which outperformed previous methods on the real-world image denoising dataset DND [29].
In this paper, we find that denoising raw images directly yields higher quality, regardless of the network architecture.

Super-resolution. Due to the limited sensor size, image resolution is usually not as high as desired. Image SR aims to recover a high-resolution (HR) image from its low-resolution (LR) version. Previously, example-based SR methods [30], [31] that exploit the self-similarity property provided state-of-the-art performance. Recently, learning-based methods [6], [32] developed the CNN-based SR algorithms SRCNN and FSRCNN, outperforming example-based methods. After these seminal works, many learning-based SR methods have emerged [11], [33]. However, most of them focus on color image SR. Only a few works have paid attention to the SR of raw images [10], [12], [34].

ISP and Mixture Problem. Image processing is always accompanied by a mixture problem of DN, DM and SR. An ISP is embedded in a modern camera to perform all these tasks. Most ISPs solve tasks independently and sequentially through the predefined pipeline DM → DN → SR [7]. Although some previous works proposed new pipelines, such as performing DN before DM [8], [9], less attention has been paid to the execution order of joint DN, DM, and SR, especially since many ISP methods in the deep learning era are now learning-based and leverage end-to-end algorithms [10], [12], [13], [35], [36]. These end-to-end solutions map a raw image to a desired RGB image directly, without focusing on the pipeline. In this work, we diverge from the common architecture engineering in the area of image processing and rethink the mixture problem of DM, DN and SR from a holistic perspective, and more especially, the execution order (pipeline) of tasks.

3 Methodology

3.1 A New Pipeline for DN, DM and SR

We propose a new image processing pipeline, DN → SR → DM, that significantly improves the image quality of sequential solutions for the joint problem of DM, DN and SR.
For a given noisy LR raw image $M^{LR}_n$, our pipeline obtains the final HR color image $I^{HR}$ from $M^{LR}_n$ using a composite function as follows:

$$I^{HR} = C_M(S_M(D_M(M^{LR}_n))), \tag{1}$$

where $C_M$ is the demosaicing function (C denotes "colorize"), $S_M$ the SR function for mosaic images, and $D_M$ the denoising function for mosaic images. $M$ and subscript $M$ stand for "mosaic", while subscript $n$ indicates noisy. We first perform DN on the noisy raw mosaic image to obtain its noise-free version, $M^{LR} = D_M(M^{LR}_n)$. We then adopt $S_M$ to super-resolve the LR mosaic image and obtain an HR mosaic image, $M^{HR} = S_M(M^{LR})$. Finally, we use DM to interpolate $M^{HR}$ to a full-color HR image, $I^{HR} = C_M(M^{HR})$.

[Figure 1 panels: Real-World Image Captured by iPhone X; DCRaw; Camera Raw; JDnDmSR [13]; TENet++ (ours)]

Fig. 1: Qualitative comparisons for joint DM, DN, and SR (×2) on a real raw image captured by an iPhone X. Our TENet++ delivers a more visually appealing result compared to the popular software DCRaw and Camera Raw and the state-of-the-art JDnDmSR [13], producing fewer color distortions and more fine-grained details. The output of DCRaw and Camera Raw is super-resolved by an SR model implemented with the same 6 RRDB blocks as TENet++ for a fair comparison (Sec. 5).

Why perform DN at the first stage? DN is usually performed after DM in a typical ISP. We propose DN first for three reasons: (1) The noise model for raw images has been well studied (Gaussian-Poisson distribution). The quality of DN is higher for raw images than for color images. (2) The existence of noise adds complexity to subsequent tasks. Noise has a high possibility of hiding color information and destroying textures, depending on the noise level. Processing a noisy image will result in unwanted artifacts in most cases. For example, Fig.
4 (DM → DN → SR) showcases that demosaicing a noisy image is prone to moiré. (3) Image processing prior to DN will degrade the noise pattern and complicate denoising. For example, SR will destroy the noise distribution and make removal of noise from the super-resolved image extremely difficult. Fig. 4 (DM → SR → DN) shows such an example, where obvious noise appears.

Why perform SR before DM? Previous ISPs usually first demosaic a raw image into a color image and then perform SR. We suggest super-resolving the raw image to a higher resolution before conducting DM. In other words, super-resolution in our suggested pipeline is performed on mosaic images instead of RGB images. Our proposed pipeline has at least two advantages: (1) Demosaicing a higher resolution raw image yields fewer artifacts than demosaicing a lower resolution image. A DM algorithm usually introduces conspicuous artifacts (zippering, color moiré, and blurring) in the high-frequency texture regions, especially when the input resolution is low. These artifacts are alleviated when DM is applied to an image with higher resolution. (2) The artifacts caused by super-resolving the defects of a demosaiced image can be avoided in our pipeline. As shown in Fig. 4, DN → SR → DM, which performs SR before DM, alleviates color distortion and moiré compared to its counterpart DN → DM → SR.

3.2 Inserting Our Pipeline into An End-to-end Network

Despite the effectiveness of the proposed pipeline, simply performing multiple tasks sequentially and independently, as shown in Equation 1, reduces performance. For example, DN will introduce blurring in subsequent tasks. An important reason for this performance drop is that no appropriate model can perfectly handle the intermediate state. The intermediate state refers to the temporary result after previous processing and usually involves complex task-related defects that affect subsequent tasks.
With the advent of deep learning-based methods, we can address complicated multitask problems in an end-to-end manner, i.e. a "joint solution". Although the joint solution has shown impressive performance in a variety of tasks [37], [38], [39], it is still underexplored for joint DN, DM, and SR. The most recent works [10], [12], [13], [40] focused on such a mixture problem. However, most of them simply treat the whole network as a black box, without considering the pipeline inside. Their methods just learn a mapping from the noisy LR raw image to the HR color image, with the final target (the output of a camera ISP) serving as supervision. We denote this type of one-stage end-to-end black-box network as E2ENet, whose architecture is illustrated in Fig. 2a. We denote E2ENet's pipeline as DN + SR + DM.

We show how to make an end-to-end network follow a certain pipeline to solve the mixture problem instead of just learning a one-stage mapping. With the joint solution, we can simplify the sequential pipeline DN → SR → DM as DN+SR → DM. Compared to E2ENet (DN + SR + DM), we assign a specific task to each component of the network. Our network performs joint DN and SR in the first stage, followed by DM in the final stage. We achieve this pipeline by providing intermediate supervision when training an end-to-end network. We denote the mapping function of joint DN and SR as $F_M$, and the DM mapping as $C_M$. $F_M$ and $C_M$ can be trained jointly. The $\ell_1$-norm loss for the final output is calculated by:

$$L_{joint} = \lVert C_M(F_M(M^{LR}_n)) - I^{HR}_{gt} \rVert_1, \tag{2}$$

[Figure 2 panels: (a) E2ENet; (b) TENet; (c) TENet++; (d) residual in residual dense block (RRDB)]

Fig. 2: Architecture of our TENet++ (c).
(a) E2ENet is a one-stage end-to-end network that learns a mapping from the noisy LR raw image to the HR color image directly using a single module. (b) Our naive version of Trinity Enhancement Network, denoted as TENet, consists of two main components: a joint denoising and super-resolution module $F_M$ and a demosaicing module $C_M$. Each module shares the same architecture as (a), which is composed of convolutional layers to extract features and an upsampling layer to interpolate features. This two-component design makes the network follow a certain pipeline (super-resolve the raw image before demosaicing) for the joint DN, DM, and SR problem, and facilitates optimization by providing intermediate supervision, compared to (a). However, TENet suffers from the bottleneck issue (channel size is dropped to C = 4 in the middle of the network). (c) Our proposed TENet++, where a detachable convolution layer is adopted after $F_M$ for reconstructing the high-resolution raw image ($M^{SR}$). This detachable layer is active during training, which spares TENet++ the bottleneck issue, and is detached at inference. (d) The default block (RRDB [33]) used in TENet++.

In Eq. 2, $I^{HR}_{gt}$ represents the ground-truth HR color image of the input LR noisy raw image $M^{LR}_n$. We further construct an intermediate output $M^{HR}$ (the super-resolved mosaic image) and propose an intermediate loss, $L_{SR}$, as follows:

$$L_{SR} = \lVert F_M(M^{LR}_n) - M^{HR}_{gt} \rVert_1, \tag{3}$$

where $M^{HR}_{gt}$ represents the ground-truth HR noise-free mosaic image and the output of $F_M(M^{LR}_n)$ is $M^{SR}$. The $L_{SR}$ loss makes the first part of the network focus on joint DN and SR, and the second part on DM. The $L_{joint}$ loss controls the fidelity of the final output. The final objective function is the sum of the two loss terms:

$$L = L_{joint} + L_{SR}. \tag{4}$$

While DN → SR → DM outperforms other pipelines in sequential solutions, DN+SR → DM is the best overall in joint solutions (despite the marginal improvements).
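The objective of Eqs. 2 to 4 can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function names `l1` and `total_loss` are hypothetical, the $\ell_1$ norm is averaged over elements (the text does not say whether a sum or a mean is used), and the arrays stand in for network outputs rather than coming from a real model.

```python
import numpy as np

def l1(a, b):
    """Mean absolute error, used here as the l1-norm loss (assumed reduction: mean)."""
    return float(np.mean(np.abs(a - b)))

def total_loss(m_sr, i_sr, m_hr_gt, i_hr_gt):
    """Sum of the final-output loss and the intermediate loss (Eq. 4).

    m_sr     : intermediate output F_M(M_n^LR), the super-resolved mosaic
    i_sr     : final output C_M(F_M(M_n^LR)), the HR color image
    m_hr_gt  : ground-truth HR noise-free mosaic
    i_hr_gt  : ground-truth HR color image
    """
    loss_joint = l1(i_sr, i_hr_gt)  # Eq. 2: fidelity of the final output
    loss_sr = l1(m_sr, m_hr_gt)     # Eq. 3: supervision on the detachable head
    return loss_joint + loss_sr
```

Because the intermediate term only touches the output of the first module, dropping the head at inference leaves the final prediction unchanged.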
Note that adding additional denoising supervision is not beneficial to the performance, as shown in our experiment. This demonstrates that the essence of our proposed pipeline is to perform DN and SR in mosaic space, not RGB space, which is our core argument for both pipelines (see Sec. 3.1).

3.3 Trinity of Pixel Enhancement Network

The naive solution to achieve the DN+SR → DM pipeline is to concatenate two subnetworks $F_M$ and $C_M$ in the network backbone and actually produce $M^{SR}$ in the middle of the network, as shown in Fig. 2b. This is the architecture that we used in the preprint version of our work and is denoted TENet. Unfortunately, this solution will face performance drops due to a bottleneck issue. The bottleneck arises as the channel size C is decreased from the latent space (e.g. C = 64) to the raw image space (C = 4) to yield the SR raw image. To solve this issue, we present Trinity of Pixel Enhancement Network (TENet++). TENet++ leverages an attachable branch to provide additional supervision during training. The attachable branch is implemented by a single convolutional layer to map the feature from the latent space to the raw image space.

The architecture of TENet++ is illustrated in Fig. 2c. The noisy LR mosaic image $M^{LR}_n$ with size $H \times W$ is reshaped to a four-channel image (red, green, green, blue) with size $\frac{H}{2} \times \frac{W}{2} \times 4$. The noise variance for each channel is concatenated into the reshaped raw image. The eight-channel input is denoted $M^{LR}_n$, which is passed to the TENet++ backbone. TENet++ consists of two components in its backbone: a joint denoising and super-resolution module $F_M$ and a demosaicing module $C_M$. $F_M$ and $C_M$ share the same structure as the module used in E2ENet (detailed in Fig. 2a). Both $F_M$ and $C_M$ are composed of a convolution layer to transform features, $N/2$ convolutional blocks to extract features, and a convolution layer with an upsampling layer to interpolate features.
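The input packing described above (an $H \times W$ mosaic reshaped to $\frac{H}{2} \times \frac{W}{2} \times 4$, then concatenated with four variance channels) can be sketched as follows. The RGGB layout of the 2×2 Bayer tile and the helper names `pack_rggb` and `build_input` are assumptions made for illustration; the text only states the channel order (red, green, green, blue).

```python
import numpy as np

def pack_rggb(mosaic):
    """Reshape an H x W Bayer mosaic (assumed RGGB tile) to H/2 x W/2 x 4."""
    h, w = mosaic.shape
    assert h % 2 == 0 and w % 2 == 0, "mosaic sides must be even"
    return np.stack(
        [
            mosaic[0::2, 0::2],  # red sites
            mosaic[0::2, 1::2],  # green sites on red rows
            mosaic[1::2, 0::2],  # green sites on blue rows
            mosaic[1::2, 1::2],  # blue sites
        ],
        axis=-1,
    )

def build_input(mosaic, variance):
    """Concatenate the packed mosaic with the packed per-pixel noise variance.

    Both inputs are H x W; the result has eight channels.
    """
    return np.concatenate([pack_rggb(mosaic), pack_rggb(variance)], axis=-1)
```

Packing puts every color on its own regular grid, so ordinary convolutions never mix samples taken through different filters at the same kernel position.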
Note that $N$ is the total number of blocks in TENet++ and is set to 12 by default. The upsampling ratio of $F_M$ is the SR ratio (2 by default), while the upsampling ratio of $C_M$ equals 2 since $C_M$ is the demosaicing module to interpolate colors. We employ the Residual in Residual Dense Block (RRDB) proposed in ESRGAN [33] (see Fig. 2d) to implement the blocks used in each module by default. A pixel shuffle layer [41] is used to upsample the feature maps for DM and SR. A detachable layer is attached to $F_M$ to produce the intermediate output $M^{SR}$ for additional training supervision, and can be removed during testing. In our experiment, the number of RRDB modules for both $F_M$ and $C_M$ is set to 6.

Compared to E2ENet, TENet++ has two major differences: (1) the upsampling layer for SR is moved forward to the middle of the network (end of $F_M$) to yield super-resolved raw images; (2) intermediate supervision is provided. From a theoretical perspective, we hypothesize that reasonable intermediate supervision (the super-resolved raw image in our case) yields a limited solution space with good local minima, thus leading to an eased optimization. We show the effectiveness of TENet++ over E2ENet through extensive experiments in Sec. 5.

4 PixelShift200 Dataset

4.1 Motivation of PixelShift200

Previous learning-based DM algorithms train their networks on incompletely color-sampled datasets such as DIV2K [42] and ImageNet [43], where they take color images demosaiced from incomplete color samples (Bayer images) as ground truth and synthesize the mosaic images as input [9], [28], [44]. However, this scheme has three main issues: (1) The color images are interpolated by the camera ISP, which introduces DM artifacts caused by incomplete color sampling. These artifacts will also be learned if a DM model is trained on them. (2) The DM model trained on such a synthesized dataset only learns an "average" DM algorithm used in the camera's ISP.
And (3) the synthesized raw images only have a depth of 8 bits and therefore suffer from information loss, compared to normal 14-bit real raw images. Thus, real-world, high-resolution, uncompressed image datasets with full-color sampling are needed.

We contribute a novel real-world dataset, PixelShift200, which contains 200 4K-resolution full-color sampled images. The 14-bit red, green, and blue color information for each pixel is known in our dataset without any demosaicing. PixelShift200 was collected using the pixel shift technique [45] embedded in the camera we use (see Sec. 4.2). This technique takes four samples of the same image at the same time, and physically controls the camera sensor to precisely move one pixel horizontally or vertically at each sampling. The four samples are then combined to directly obtain all the color information for each pixel. Refer to Fig. 3 for an example of the pixel shift process. The pixel shift technique ensures that the sampled images follow the distribution of natural images. Due to full-color sampling, our collected images in PixelShift200 are almost free of artifacts compared to the images interpolated from mosaic inputs.

Fig. 3 compares a color image obtained by the pixel shift technique with the output of the well-known raw processing software Adobe Camera Raw (version 12.3). The pixel shift combines the four raw images into a single full-color sampled image, while Camera Raw interpolates the first sample using the built-in demosaicing algorithm. It is worth noting that the pixel shift technique generates much less aliasing (see the letter "K" in the first row) and less moiré (see the barcode in the second row). In Sec. 6.2, we demonstrate that training raw image processing networks on our PixelShift200 dataset produces better image quality than training the same network on an incompletely color-sampled dataset (e.g. DIV2K [42]).
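To make the idea of combining four one-pixel-shifted samples concrete, the following is an idealized simulation, not the camera's actual procedure. It assumes a static, noise-free scene, an RGGB color filter tile, and a hypothetical shift order; `capture` and `combine` are illustrative names. Under these assumptions every pixel is observed once through red, twice through green, and once through blue, so the full-color image is recovered without interpolation.

```python
import numpy as np

CFA = np.array([[0, 1], [1, 2]])           # assumed RGGB tile: 0=R, 1=G, 2=B
SHIFTS = [(0, 0), (0, 1), (1, 1), (1, 0)]  # assumed one-pixel sensor offsets

def channel_map(h, w, dy, dx):
    """Color index seen at each scene pixel when the sensor is offset by (dy, dx)."""
    ys, xs = np.mgrid[0:h, 0:w]
    return CFA[(ys + dy) % 2, (xs + dx) % 2]

def capture(scene, dy, dx):
    """Simulate one raw sample: each pixel records a single color of the scene."""
    h, w, _ = scene.shape
    ch = channel_map(h, w, dy, dx)
    return np.take_along_axis(scene, ch[..., None], axis=2)[..., 0]

def combine(captures, h, w):
    """Merge the four raw samples into a full-color image (greens are averaged)."""
    acc = np.zeros((h, w, 3))
    cnt = np.zeros((h, w, 3))
    for raw, (dy, dx) in zip(captures, SHIFTS):
        ch = channel_map(h, w, dy, dx)
        for c in range(3):
            mask = ch == c
            acc[mask, c] += raw[mask]
            cnt[mask, c] += 1
    return acc / cnt
```

In this toy setting the combination is exact, which is the property that lets pixel-shift images serve as ground truth free of demosaicing artifacts.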
We highlight that, as far as we are aware, we are the first to collect such a full-color sampled dataset. PixelShift200 is useful for training raw image processing methods and can also be used as a unique benchmark for demosaicing-related tasks.

4.2 PixelShift200 Collection Procedure

We collected the PixelShift200 dataset with a Sony ILCE-7RM3 digital camera, which includes the pixel shift technique in its camera system [45]. To avoid serious noise, we mounted a lens with fixed focal length and aperture (Zeiss FE 50 mm/1.4) and used low photosensitivity (ISO 100 or less). To reduce motion parallax, we limited the depth of the scene to a small range and held the camera with a heavy tripod. PixelShift200 consists of 200 4K-resolution images for training and 20 1K-resolution images for testing. The testing set is selected to cover a wide range of scenes. As data augmentation, the training samples were cropped into 9444 overlapping patches of size 512 × 512.

5 Experiments

5.1 Experimental Setup

Data Preprocessing. We apply a bicubic downsampling kernel (denoted as $S_C^{-1}$), a mosaic kernel [9] ($C_M^{-1}$), and then the Gaussian-Poisson noise model [27] to generate LR noisy raw images $M^{LR}_n$ as input from HR color images $I^{HR}$ in PixelShift200:

$$M^{LR}_n = C_M^{-1}(S_C^{-1}(I^{HR})) + n, \tag{5}$$

where the noise term $n$ is sampled from:

$$n \sim \mathcal{N}(\mu = 0,\ \sigma^2 = \lambda_{read} + \lambda_{shot} M^{LR}). \tag{6}$$

$\lambda_{read}$ and $\lambda_{shot}$ are the read and shot noise levels of a given raw image. The noise variance $n'$ is given by $(\lambda_{read} + \lambda_{shot} M^{LR}_n)$. In PixelShift200, we generated the random Gaussian-Poisson noise in both training and testing. Note that the noise was generated on the fly during training and was sampled once and fixed for the testing samples. Noise levels follow the same range as the real-shot denoising benchmark dataset, DND [29]. Random rotation and flipping were used as data augmentation during training.
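The synthesis of Eqs. 5 and 6 can be sketched as below. Two simplifications are assumptions of this sketch rather than the paper's procedure: a 2×2 box average replaces the bicubic kernel $S_C^{-1}$, and the mosaic kernel $C_M^{-1}$ is taken to be an RGGB Bayer pattern. The function names and the noise levels used in any call are hypothetical.

```python
import numpy as np

def downsample2(img):
    """2x box downsampling of an H x W x 3 image (stand-in for bicubic)."""
    h, w, c = img.shape
    return img.reshape(h // 2, 2, w // 2, 2, c).mean(axis=(1, 3))

def mosaic_rggb(img):
    """Keep one color per pixel following an assumed RGGB Bayer pattern."""
    h, w, _ = img.shape
    m = np.empty((h, w), dtype=img.dtype)
    m[0::2, 0::2] = img[0::2, 0::2, 0]  # red
    m[0::2, 1::2] = img[0::2, 1::2, 1]  # green
    m[1::2, 0::2] = img[1::2, 0::2, 1]  # green
    m[1::2, 1::2] = img[1::2, 1::2, 2]  # blue
    return m

def synthesize(i_hr, lam_read, lam_shot, rng):
    """Return (noisy LR mosaic, per-pixel variance) following Eqs. 5 and 6."""
    m_lr = mosaic_rggb(downsample2(i_hr))
    var = lam_read + lam_shot * m_lr  # signal-dependent variance
    noise = rng.normal(0.0, 1.0, m_lr.shape) * np.sqrt(var)
    return m_lr + noise, var
```

Passing a fresh `rng` draw per batch mirrors the on-the-fly training noise, while a fixed seed reproduces the sampled-once test noise.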
The output of the model after the whole pipeline is the RGB image in the linear color space. Black level subtraction is conducted as the pre-processing step for each raw image and is performed before DN, DM, and SR. The white balance and color mappings were read from the raw images and applied to the final outputs to transform them into standard RGB space (sRGB).

Metric. For quantitative experiments, we use PSNR (↑), SSIM (↑), and FreqGain (↓) [4] to measure overall fidelity, overall structure similarity, and fine-grained artifacts. Note that FreqGain is the metric we modify from [4], which was proposed to detect moiré. We revise it to a scalar version by averaging the positive logarithmic values of the frequency gains. The formula of FreqGain is the following:

$$\rho = \operatorname{avg}\left(\operatorname{ReLU}\left(\log \frac{|F_O(\omega)|^2 + \epsilon}{|F_I(\omega)|^2 + \epsilon}\right)\right), \tag{7}$$

where $F_I(\omega)$ and $F_O(\omega)$ represent the 2D Fourier transform of the ground truth and the prediction. $\epsilon = 10^{-6}$ is added to avoid dividing by zero. ReLU is used to only consider positive values that represent regions where moiré-like artifacts are likely to appear. The value averaged across frequencies is returned as the quantitative metric.

[Figure 3 panels: Pixel Shift (four shifted samples combined into all R, G, B channels, full color sampling); Camera Raw]

Fig. 3: The pixel shift technique used to create the dataset PixelShift200 (left) and qualitative comparison between the commonly used raw processing software Camera Raw and the pixel shift (right). Pixel shift collects artifact-less (less zippering, moiré and chromatic aberration) full-color sampled images directly without color interpolation.

Network Training. We optimized all models using Adam [46] with an initial learning rate lr = 5 × 10⁻⁴ on four NVIDIA RTX 2080 Ti GPUs. A cosine annealing learning rate schedule is adopted. All models are trained for 1000 epochs to ensure convergence.
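As a rough illustration of the scalar FreqGain metric in Eq. 7, the sketch below computes it with NumPy's FFT. It assumes a natural logarithm and a 2D transform taken over the two spatial axes of each channel; neither detail is spelled out in the text, and `freq_gain` is a hypothetical name.

```python
import numpy as np

def freq_gain(pred, gt, eps=1e-6):
    """Average positive log frequency gain of a prediction over its ground truth.

    pred, gt: arrays of shape H x W or H x W x C with matching shapes.
    """
    f_o = np.fft.fft2(pred, axes=(0, 1))  # spectrum of the prediction
    f_i = np.fft.fft2(gt, axes=(0, 1))    # spectrum of the ground truth
    ratio = (np.abs(f_o) ** 2 + eps) / (np.abs(f_i) ** 2 + eps)
    # ReLU keeps only frequencies where the prediction gained energy
    return float(np.mean(np.maximum(np.log(ratio), 0.0)))
```

A perfect prediction scores exactly zero, and energy added at frequencies absent from the ground truth (as moiré does) raises the score.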
Experimental Setup of Comparison with the State-of-the-art. The most closely related works are JDSR [12], RawSR [10], SGNet [40], and JDnDmSR [13], most of which are black-box end-to-end networks without a specific pipeline (E2ENet). Since the architectures and data processing are different, rather than unfairly comparing with these networks, we implemented all possible pipelines (including E2ENet) using the same module as TENet++ (see Fig. 2a for the module structure) and trained all networks on the same PixelShift200 dataset. We also validate our proposed pipeline on different datasets and using models built from different modules.

We compare our proposed pipeline DN → SR → DM with all other possible pipelines in sequential solutions, and our DN+SR → DM pipeline with others in partially and fully joint solutions. A sequential solution applies three separate models sequentially, e.g. DN → SR → DM executes DN, SR, and DM in that order. A partially joint solution sequentially runs two models, where one is a joint model for two tasks and the other a single-task model. For example, DN+SR → DM first performs a joint DN and SR model DN+SR, and then a DM model. A fully joint solution solves the three tasks together using a single model. The pipeline of a joint solution (e.g., DN+SR → DM) is achieved by providing additional supervision (e.g., the denoised super-resolved mosaic image) in an end-to-end network. The way of providing intermediate supervision is described in Sec. 3.2. All models needed are implemented as follows:

• Five single-task models: raw image denoising, raw image SR, demosaicing, color image denoising, and color image SR. All five models are implemented in the same way as $F_M$ (Fig. 2) by 6 RRDBs.
• Three partially joint models: DN+DM (joint DN and DM), DN+SR (joint raw image DN and SR), and DM+SR (joint DM and SR). While DN+DM and DN+SR are implemented as $F_M$ (Fig. 2) using 6 RRDBs, DM+SR is implemented as E2ENet using 12 RRDBs.
• Four fully joint models: DN+SR → DM (proposed TENet++), DN+DM+SR (E2ENet), DN → DM+SR (similar architecture as TENet++ where DN supervision is provided instead), and DN+DM → SR (similar architecture as TENet++ where DM supervision is provided instead). All fully joint models are implemented by 12 RRDBs.

All models are trained with the ×2 SR factor and the same level of Gaussian-Poisson noise in PixelShift200. We compare our proposed pipelines with other pipelines using these models for a fair comparison.

TABLE 1: Comparison of pipelines on PixelShift200 test set. Gaussian-Poisson noise with ×2 SR are used. Bold denotes the best performance. Our proposed pipelines yield the best quantitative results among all possible pipelines.

| Type | Pipeline | PSNR | SSIM | FreqGain ↓ |
|---|---|---|---|---|
| Sequential | DM → DN → SR (usual) | 33.51 | 0.8379 | 0.4853 |
| Sequential | DM → SR → DN | 30.01 | 0.6773 | 1.0978 |
| Sequential | SR → DM → DN | 31.44 | 0.7270 | 0.7858 |
| Sequential | SR → DN → DM | 33.42 | 0.8059 | 0.5583 |
| Sequential | DN → DM → SR | 36.33 | 0.9256 | 0.2067 |
| Sequential | DN → SR → DM (ours) | **36.61** | **0.9294** | **0.1886** |
| Partially joint | DN → DM+SR | 36.65 | 0.9299 | 0.1884 |
| Partially joint | DN+DM → SR | 36.24 | 0.9259 | 0.1952 |
| Partially joint | DN+SR → DM (ours) | **37.04** | **0.9327** | **0.1829** |
| Fully joint | DN+DM+SR | 36.71 | 0.9292 | 0.1851 |
| Fully joint | DN → DM+SR | 36.18 | 0.9245 | 0.2451 |
| Fully joint | DN+DM → SR | 37.24 | 0.9341 | 0.1907 |
| Fully joint | DN+SR → DM (ours) | **37.36** | **0.9353** | **0.1814** |

[Figure 4 panels: DM->DN->SR; DM->SR->DN; DN->DM->SR; DN->SR->DM; DN+DM+SR; DN+DM->SR; DN+SR->DM; Ground Truth]

Fig. 4: Qualitative comparisons of different pipelines on an example from PixelShift200 test set. The left is the ground truth image, while the right shows the closeups of the output of different pipelines. The input is the low-resolution noisy mosaiced version of the left image. The top row of the right shows the results using different sequential solutions, while the bottom row shows the results of fully joint pipelines and the ground truth.
Our proposed pipeline DN → SR → DM yields the highest quality among all sequential pipelines, while DN+SR → DM achieves the best among all the joint pipelines. Fig. 5: Qualitative comparisons between DN + DM + SR (top row) vs. DN+SR → DM (bottom row) on different architectures on PixelShift200 test set. Our pipeline produces results with sharper edges and preserves the color of the objects better . 5.2 Pipeline Comparison Experiments Proposed pipeline outperforms others in sequential solutions. T ABLE 1 shows that our pr oposed pipeline DN → SR → DM clearly outperforms all other pipelines under the sequential solution setting. Surprisingly , the PSNR is 3.10 dB higher for our pipeline than the usual pipeline DM → DN → SR. This improvement is achieved simply by adopting our pipeline as a r eplacement for the other pipelines. As observed, when DN is not performed in the first stage, the image quality obtained will drop sharply . When DN is fixed as the first task, our proposed pipeline still improves PSNR by 0.28 dB, which reflects that perform- ing SR before DM yields a higher quality than performing DM befor e SR. These experimental findings confirm our discussion in Sec. 3.1 that DN and SR in mosaic space are suggested. Proposed pipeline insignificantly outperforms others in joint solutions. Since performing DN in the first stage is the best option, we now mainly study the pipelines where DN is performed first among partially and fully joint solutions. T ABLE 1 shows the quantitative comparisons. One can con- clude that (1) joint solutions outperform sequential solutions in the same execution order , as expected. For example, DN+SR → DM in both partially and fully joint solutions pro- duces images with better metric values than DN → SR → DM in sequential solutions. (2) In both partially joint and fully joint solutions, our proposed pipeline DN+SR → DM consistently generates slightly higher PSNR and SSIM than other pipelines . 
In particular, the PSNR of the proposed pipeline is at least 0.39 dB higher than that of any other pipeline in the partially joint solutions. In fully joint solutions, TENet++ (DN+SR → DM) outperforms the E2ENet counterpart (DN+DM+SR) by 0.65 dB in terms of PSNR. However, we highlight that the improvement of the proposed pipeline in joint solutions is less than 1 dB, which is not as significant as in sequential solutions. Such a marginal improvement may indicate that investigating the execution order of tasks is of limited use in an end-to-end solution.

Qualitative comparisons of different pipelines. The comparison of sequential solutions in Fig. 4 (top row) shows that our proposed pipeline DN → SR → DM clearly outperforms other pipelines with significantly fewer color artifacts, validating our suggestion to perform SR before DM. Our pipeline also produces less noise, which reflects the importance of performing DN at the first stage. In the fully joint solutions (Fig. 4 bottom row), our proposed pipeline again achieves better qualitative results than others, suffering less moiré.

TABLE 2: Ablation on architectures. We experiment with the other possible architectures constructed by the NLSA [11] block and two SOTA models, JDSR [12] and JDnDmSR [13]. Our proposed pipeline DN+SR → DM consistently improves the performance of all given networks on the task of joint DN, DM and SR.

| Architecture | Pipeline | PSNR | SSIM | FreqGain ↓ |
|---|---|---|---|---|
| NLSA block [11] | DN+DM+SR | 34.63 | 0.9086 | 0.2975 |
| | DN+SR → DM (ours) | 36.05 | 0.9270 | 0.1769 |
| JDSR [12] | DN+DM+SR | 36.53 | 0.9289 | 0.1957 |
| | DN+SR → DM (ours) | 36.68 | 0.9296 | 0.1947 |
| JDnDmSR [13] | DN+DM+SR | 33.11 | 0.8782 | 0.4180 |
| | DN+SR → DM (ours) | 36.91 | 0.9317 | 0.1959 |
| TENet++ (Ours) | DN+DM+SR | 36.71 | 0.9292 | 0.1851 |
| | DN+SR → DM (ours) | 37.36 | 0.9353 | 0.1814 |

6 ABLATION STUDY

6.1 Ablate Proposed Pipeline

We have demonstrated that our proposed pipeline is quantitatively and qualitatively better than other pipelines in Sec. 5.
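The PSNR gaps quoted in Sec. 5.2 can be recomputed directly from the TABLE 1 entries. The following is a minimal sketch of that arithmetic; the dictionary layout and the helper names `gain_over` and `margin_over_runner_up` are illustrative choices and are not part of the paper's code.

```python
# PSNR values in dB, copied from TABLE 1 (PixelShift200 test set, x2 SR).
# The nested-dict layout is an assumption made for this illustration.
TABLE1_PSNR = {
    "sequential": {
        "DM->DN->SR": 33.51,
        "DM->SR->DN": 30.01,
        "SR->DM->DN": 31.44,
        "SR->DN->DM": 33.42,
        "DN->DM->SR": 36.33,
        "DN->SR->DM": 36.61,
    },
    "partially_joint": {
        "DN->DM+SR": 36.65,
        "DN+DM->SR": 36.24,
        "DN+SR->DM": 37.04,
    },
    "fully_joint": {
        "DN+DM+SR": 36.71,
        "DN->DM+SR": 36.18,
        "DN+DM->SR": 37.24,
        "DN+SR->DM": 37.36,
    },
}


def gain_over(group: str, ours: str, baseline: str) -> float:
    """PSNR gain (dB) of pipeline `ours` over `baseline` within one group."""
    psnr = TABLE1_PSNR[group]
    return round(psnr[ours] - psnr[baseline], 2)


def margin_over_runner_up(group: str, ours: str) -> float:
    """PSNR gain (dB) of `ours` over the best of the remaining pipelines."""
    psnr = TABLE1_PSNR[group]
    best_other = max(v for name, v in psnr.items() if name != ours)
    return round(psnr[ours] - best_other, 2)


if __name__ == "__main__":
    print(gain_over("sequential", "DN->SR->DM", "DM->DN->SR"))
    print(gain_over("sequential", "DN->SR->DM", "DN->DM->SR"))
    print(margin_over_runner_up("partially_joint", "DN+SR->DM"))
    print(gain_over("fully_joint", "DN+SR->DM", "DN+DM+SR"))
```

The four printed values correspond to the 3.10 dB, 0.28 dB, 0.39 dB, and 0.65 dB gaps discussed in Sec. 5.2.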
However, one may wonder: (1) What if an architecture other than the RRDB module and TENet++ is used? (2) What if a dataset other than PixelShift200 is used for training and evaluation? (3) What if an SR factor other than 2 is used? (4) What if a noise model other than the Gaussian-Poisson noise model is adopted? Here we validate that our proposed pipelines consistently outperform other pipelines in a variety of settings.

Architecture. We ablate the module-level and network-level architectures on PixelShift200. In the module-level ablation, we keep the E2ENet architecture for pipeline DN+DM+SR and the TENet++ architecture for pipeline DN+SR → DM, but use a different module (e.g., NLSA) instead of the original RRDB. In the network-level ablation, an architecture other than TENet++ is used. For the module-level experiment, we leverage the non-local sparse attention (NLSA) module from the state-of-the-art (SOTA) image SR work [11] to build the end-to-end network. For the network-level experiment, we replace TENet++ with the SOTA networks JDSR [12] and JDnDmSR [13] for the joint DN, DM and SR problem. We insert our proposed pipeline into the two models by providing intermediate supervision in a similar way as in TENet++, as illustrated in Fig. 2c. TABLE 2 compares the performance of the original pipeline (DN+DM+SR) and the same network using our proposed pipeline DN+SR → DM. Experiments on all three architectures show that our pipeline consistently improves the performance regardless of the architecture design. In addition, by comparing the results in TABLE 2 with TABLE 1, one can observe that our proposed TENet++ outperforms the network constructed from the SOTA module and the SOTA networks (JDSR [12], JDnDmSR [13]) for joint DN, DM, and SR.
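The intermediate supervision described above can be pictured as a training loss with two terms: one on the intermediate output (the denoised, super-resolved mosaic for DN+SR → DM) and one on the final color image. The sketch below uses plain Python lists in place of image tensors. The L1 distance, the weight `lam`, and the function names are assumptions made for illustration, since this section does not state the loss defined in Sec. 3.2.

```python
from typing import Optional, Sequence


def l1_loss(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean absolute error between two equally sized flat pixel lists."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def pipeline_loss(
    final_pred: Sequence[float],
    final_gt: Sequence[float],
    mid_pred: Optional[Sequence[float]] = None,
    mid_gt: Optional[Sequence[float]] = None,
    lam: float = 1.0,  # hypothetical weight of the intermediate term
) -> float:
    """Loss on the final image, plus an optional intermediate-supervision term.

    Without intermediate tensors this reduces to a plain end-to-end loss
    (the DN+DM+SR case). With them, the network is additionally pushed to
    produce a specific intermediate result (e.g. DN+SR -> DM).
    """
    loss = l1_loss(final_pred, final_gt)
    if mid_pred is not None and mid_gt is not None:
        loss += lam * l1_loss(mid_pred, mid_gt)
    return loss


if __name__ == "__main__":
    # End-to-end only (no intermediate supervision).
    print(pipeline_loss([1.0, 2.0], [1.0, 4.0]))
    # Same final term, plus an intermediate mosaic-space term.
    print(pipeline_loss([1.0, 2.0], [1.0, 4.0], mid_pred=[0.0], mid_gt=[0.5], lam=2.0))
```

Under this reading, switching a network such as JDSR or JDnDmSR from DN+DM+SR to DN+SR → DM only adds the second term and a ground-truth intermediate image; the backbone itself is unchanged.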
Qualitative comparisons of the results of different architectures (columns) fitted with two different pipelines (the top row shows the usual pipeline DN+DM+SR, the bottom row shows our pipeline DN+SR → DM) are presented in Fig. 5. For each network, our pipeline DN+SR → DM in the joint solution enhances image sharpness while also preserving the color of the objects to a greater extent. Our TENet++ also yields more visually appealing images than the SOTA networks when equipped with the same pipeline.

Dataset. We also experiment with different pipelines (sequential and joint solutions) on datasets other than PixelShift200. We train models with different pipelines on the 800 DIV2K training images [42], where the mosaic images are synthesized from color images using the same unprocessing technique as in [9]. Gaussian-Poisson noise and ×2 SR are used. The evaluation on three widely used benchmarks, the DIV2K test set, Urban100 [49], and CBSD68 [25], is provided in TABLE 3. As observed, our proposed pipelines improve the network's performance across all the widely used benchmarks in both sequential and joint solutions. Despite the consistent improvement, the PSNR gain in joint solutions is less than 0.2 dB on all benchmarks, which again shows that shuffling the pipeline in an end-to-end network is not very necessary.

SR factor. We also experiment with a different super-resolution factor (×4) to validate the benefit of the proposed pipeline. TABLE 4 shows that our proposed pipeline outperforms other pipelines under the ×4 SR factor in both sequential and joint solutions.

Noise model. Our previous experiments are conducted under the Gaussian-Poisson noise modeling assumption. Here, we further validate our pipeline under a different noise model assumption. We study the widely used Gaussian noise. The noise level (sigma) is set to 10. We train models on DF2K [50] and evaluate on the DIV2K test set [42], Urban100 [49] and CBSD68 [25].
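As a rough illustration of the Gaussian noise setting, the sketch below adds zero-mean Gaussian noise with sigma = 10 to a flat list of pixel values. It assumes that sigma is expressed on an 8-bit [0, 255] intensity scale and that noisy values are clipped to that range; neither detail is stated in this section, and `add_gaussian_noise` is a hypothetical helper rather than the authors' data pipeline.

```python
import random
from typing import List, Optional, Sequence


def add_gaussian_noise(
    pixels: Sequence[float],
    sigma: float = 10.0,        # noise level; 8-bit scale is an assumption
    seed: Optional[int] = None, # fixed seed gives reproducible noise
) -> List[float]:
    """Return a noisy copy of `pixels` with additive zero-mean Gaussian noise.

    Values are clipped to [0, 255] after the noise is added.
    """
    rng = random.Random(seed)
    noisy = []
    for value in pixels:
        sample = value + rng.gauss(0.0, sigma)
        noisy.append(min(255.0, max(0.0, sample)))
    return noisy


if __name__ == "__main__":
    clean = [0.0, 64.0, 128.0, 192.0, 255.0]
    print(add_gaussian_noise(clean, sigma=10.0, seed=0))
```

Unlike the Gaussian-Poisson model used in the earlier experiments, the noise variance here does not depend on the signal intensity.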
TABLE 5 shows that our proposed pipeline DN+SR → DM only marginally outperforms the vanilla pipeline DN+DM+SR under the Gaussian noise setting in joint solutions. In Fig. 7, we further show the qualitative results of our TENet++ compared to the previous methods with a pipeline of DN+DM → SR. It is worth noting that our method achieves the closest qualitative performance to the ground truth.

TABLE 3: Ablation on datasets. Models are trained on DIV2K [42], and tested on DIV2K test set, Urban100 [49], and CBSD68 [25] with Gaussian-Poisson noise and ×2 SR. Our proposed pipeline outperforms other pipelines in both sequential and joint solutions regardless of datasets.

| Type | Pipeline | DIV2K [42] PSNR | DIV2K [42] SSIM | DIV2K [42] FreqGain ↓ | Urban100 [49] PSNR | Urban100 [49] SSIM | Urban100 [49] FreqGain ↓ | CBSD68 [25] PSNR | CBSD68 [25] SSIM | CBSD68 [25] FreqGain ↓ |
|---|---|---|---|---|---|---|---|---|---|---|
| Sequential | DM → DN → SR (usual) | 20.02 | 0.6296 | 0.8705 | 19.32 | 0.6393 | 1.7710 | 20.95 | 0.6399 | 0.8073 |
| | DM → SR → DN | 19.60 | 0.5684 | 1.1938 | 18.96 | 0.5838 | 1.9813 | 20.55 | 0.5989 | 1.0690 |
| | SR → DM → DN | 19.88 | 0.5950 | 0.7514 | 19.25 | 0.6103 | 1.5520 | 20.83 | 0.6195 | 0.6139 |
| | SR → DN → DM | 20.07 | 0.6309 | 0.6012 | 19.41 | 0.6379 | 1.4749 | 21.00 | 0.6446 | 0.4723 |
| | DN → DM → SR | 20.30 | 0.7660 | 0.4046 | 19.52 | 0.7326 | 1.5211 | 21.15 | 0.7097 | 0.4599 |
| | DN → SR → DM (ours) | 20.30 | 0.7602 | 0.2748 | 19.55 | 0.7282 | 1.3483 | 21.17 | 0.7079 | 0.2837 |
| Partially joint | DN → DM+SR | 20.30 | 0.7617 | 0.2875 | 19.59 | 0.7337 | 1.3684 | 21.15 | 0.7040 | 0.3260 |
| | DN+DM → SR | 20.30 | 0.7664 | 0.4217 | 19.55 | 0.7366 | 1.5405 | 21.15 | 0.7101 | 0.4661 |
| | DN+SR → DM (ours) | 20.34 | 0.7630 | 0.2693 | 19.67 | 0.7391 | 1.3379 | 21.22 | 0.7109 | 0.2776 |
| Fully joint | DN+DM+SR | 20.30 | 0.7563 | 0.3167 | 19.59 | 0.7295 | 1.3980 | 21.16 | 0.7019 | 0.3571 |
| | DN+SR → DM (ours) | 20.37 | 0.7677 | 0.2766 | 19.72 | 0.7467 | 1.2965 | 21.24 | 0.7118 | 0.3216 |

TABLE 4: Ablation on SR factor. SR factor is 4. The proposed pipeline again outperforms other pipelines in both sequential and partially joint solutions.

| Type | Pipeline | PSNR | SSIM | FreqGain ↓ |
|---|---|---|---|---|
| Sequential | DM → DN → SR (usual) | 31.49 | 0.8347 | 0.2473 |
| | DM → SR → DN | 28.05 | 0.6766 | 0.7773 |
| | SR → DM → DN | 28.70 | 0.6561 | 0.5657 |
| | SR → DN → DM | 29.25 | 0.6752 | 0.5200 |
| | DN → DM → SR | 32.41 | 0.8654 | 0.1650 |
| | DN → SR → DM (ours) | 32.99 | 0.8752 | 0.1506 |
| Partially joint | DN → DM+SR | 32.95 | 0.8739 | 0.1593 |
| | DN+DM → SR | 32.32 | 0.8665 | 0.1542 |
| | DN+SR → DM (ours) | 33.06 | 0.8770 | 0.1547 |
| Fully joint | DN+DM+SR | 33.48 | 0.8797 | 0.1656 |
| | DN → DM+SR | 33.21 | 0.8765 | 0.1917 |
| | DN+DM → SR | 33.45 | 0.8796 | 0.1893 |
| | DN+SR → DM (ours) | 33.54 | 0.8810 | 0.1655 |

TABLE 5: Ablation on noise model. We experiment with a different noise model, the additive Gaussian noise (sigma 10), with ×2 SR. Models are trained on DF2K [50]. Our proposed pipeline DN+SR → DM outperforms DN+DM+SR.

| Dataset | Pipeline | PSNR | SSIM | FreqGain ↓ |
|---|---|---|---|---|
| DIV2K [42] | DN+DM+SR | 29.74 | 0.8396 | 0.3113 |
| | DN+SR → DM (ours) | 29.81 | 0.8410 | 0.2978 |
| Urban100 [49] | DN+DM+SR | 26.81 | 0.8287 | 1.2128 |
| | DN+SR → DM (ours) | 26.96 | 0.8327 | 1.1960 |
| CBSD68 [25] | DN+DM+SR | 27.52 | 0.7766 | 0.3674 |
| | DN+SR → DM (ours) | 27.56 | 0.7775 | 0.3616 |

Fig. 7: The qualitative comparison of different methods on the noisy Urban100 [49] test images. Panels: ADMM [47], Condat [48], FlexISP [35], DemosaicNet [4], TENet++ (ours), Ground Truth. The noise model is the additive Gaussian noise (sigma=10) and the SR factor is 2. Our TENet++ achieves a close performance to the ground truth.

6.2 Ablate Proposed Dataset PixelShift200

We evaluate two identical models (TENet++) trained on two distinct datasets, our PixelShift200 and the incompletely color-sampled dataset DIV2K [42]. Real-shot raw images are used as input. PixelShift200 helps the model suffer less from moiré and color artifacts, as shown in Fig. 6 (column 3 vs. column 4). The improved qualitative performance is attributed to the full color-sampling and natural image distribution characteristics of the proposed PixelShift200.

Fig. 6: Qualitative comparisons on the real-shot images. Columns: JDSR [12], JDnDmSR [13], TENet++ (DIV2K), TENet++ (PixelShift200). We compare the SOTA methods JDSR [12], JDnDmSR [13] and our proposed TENet++ trained on DIV2K, and TENet++ trained on PixelShift200. Images were captured using a Sony ILCE-7RM3 (top row) and iPhone XS Max (bottom row).

7 REAL-SHOT EXPERIMENTS

We compare TENet++ with the raw image processing library DCRaw and the popular commercial software Camera Raw on a raw image shot with an iPhone X (see Fig. 1). The SR model implemented using the Fig. 2a network structure with 6 RRDBs (refer to Sec. 5.2 for details) is used to super-resolve the demosaiced outputs of DCRaw and Camera Raw. The proposed TENet++ yields clean results with rich detail.

We also provide more real-shot comparisons between our TENet++ and SOTA methods equipped with the same pipeline (DN+SR → DM) as TENet++ in Fig. 6. All models are trained on DIV2K for a fair comparison. Compared to JDSR [12], our TENet++ successfully reconstructs the high-frequency texture. TENet++ also produces far fewer artifacts than JDnDmSR [13], such as moiré (refer to the scarf texture in the top row) and color aliasing (refer to the steel railing in the bottom row).

8 CONCLUSION

We presented intermediate supervision that enforces a certain pipeline in an end-to-end network. We performed a comprehensive study of the effect of pipelines on the task of learning-based denoising (DN), demosaicing (DM), and super-resolution (SR) in both sequential and joint solutions. We found that the effect of the pipeline is significant in sequential solutions, while it is marginal in joint solutions, and thus shuffling the execution order of tasks is not very necessary for an end-to-end network. We also contributed PixelShift200, a full-color sampled dataset, for training and evaluating raw image processing-related tasks.
9 LIMITATION AND FUTURE WORK

First, the proposed PixelShift200 only includes static objects and has a limited size (200 unique samples). It would be more beneficial to the community if more samples could be collected. Second, this work only considers single-frame image processing. With increasing interest in the use of multiple frames [51], we believe that it is promising to study end-to-end networks for multi-frame DN, DM, and SR, which has greater practical value but is rather under-explored.

Acknowledgement

The authors thank the reviewers of ICCP 2022 for valuable suggestions and Dr. Silvio Giancola for proofreading the rebuttals. This work was supported by the KAUST Office of Sponsored Research (OSR) through the Visual Computing Center (VCC) funding.

REFERENCES

[1] R. Kimmel, “Demosaicing: image reconstruction from color ccd samples,” IEEE transactions on image processing: a publication of the IEEE Signal Processing Society, vol. 8, no. 9, pp. 1221–1228, 1999.
[2] K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian, “Image denoising by sparse 3-d transform-domain collaborative filtering,” IEEE Transactions on image processing, vol. 16, no. 8, pp. 2080–2095, 2007.
[3] M. Irani and S. Peleg, “Improving resolution by image registration,” CVGIP Graph. Model. Image Process., vol. 53, pp. 231–239, 1991.
[4] M. Gharbi, G. Chaurasia, S. Paris, and F. Durand, “Deep joint demosaicking and denoising,” ACM Transactions on Graphics (TOG), vol. 35, no. 6, p. 191, 2016.
[5] K. Zhang, W. Zuo, Y. Chen, D. Meng, and L. Zhang, “Beyond a gaussian denoiser: Residual learning of deep cnn for image denoising,” IEEE Transactions on Image Processing, vol. 26, no. 7, pp. 3142–3155, 2017.
[6] C. Dong, C. C. Loy, K. He, and X. Tang, “Image super-resolution using deep convolutional networks,” IEEE transactions on pattern analysis and machine intelligence, vol. 38, no. 2, pp. 295–307, 2016.
[7] J. Nakamura, “Image sensors and signal processing for digital still cameras,” 2005.
[8] P. Chatterjee, N. Joshi, S. B. Kang, and Y. Matsushita, “Noise suppression in low-light images through joint denoising and demosaicing,” CVPR 2011, pp. 321–328, 2011.
[9] T. Brooks, B. Mildenhall, T. Xue, J. Chen, D. Sharlet, and J. Barron, “Unprocessing images for learned raw denoising,” 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 11028–11037, 2019.
[10] X. Xu, Y. Ma, and W. Sun, “Towards real scene super-resolution with raw images,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019.
[11] Y. Mei, Y. Fan, and Y. Zhou, “Image super-resolution with non-local sparse attention,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2021, pp. 3517–3526.
[12] R. Zhou, R. Achanta, and S. Süsstrunk, “Deep residual network for joint demosaicing and super-resolution,” in Color and Imaging Conference, vol. 2018, no. 1. Society for Imaging Science and Technology, 2018, pp. 75–80.
[13] W. Xing and K. Egiazarian, “End-to-end learning for joint image demosaicing, denoising and super-resolution,” 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 3507–3516, 2021.
[14] H. S. Malvar, L.-w. He, and R. Cutler, “High-quality linear interpolation for demosaicing of bayer-patterned color images,” in 2004 IEEE International Conference on Acoustics, Speech, and Signal Processing, vol. 3. IEEE, 2004, pp. iii–485.
[15] K. Hirakawa and T. W. Parks, “Adaptive homogeneity-directed demosaicing algorithm,” IEEE Transactions on Image Processing, vol. 14, no. 3, pp. 360–369, 2005.
[16] L. Zhang and X. Wu, “Color demosaicking via directional linear minimum mean square-error estimation,” IEEE Transactions on Image Processing, vol. 14, no. 12, pp. 2167–2178, 2005.
[17] O. Kapah and H. Z. Hel-Or, “Demosaicking using artificial neural networks,” in Applications of Artificial Neural Networks in Image Processing V, vol. 3962. International Society for Optics and Photonics, 2000, pp. 112–121.
[18] Y.-Q. Wang, “A multilayer neural network for image demosaicking,” in 2014 IEEE International Conference on Image Processing (ICIP). IEEE, 2014, pp. 1852–1856.
[19] Y. Zhang, K. Li, K. Li, B. Zhong, and Y. Fu, “Residual non-local attention networks for image restoration,” in 7th International Conference on Learning Representations, ICLR 2019, New Orleans, LA, USA, May 6-9, 2019. OpenReview.net, 2019. [Online]. Available: https://openreview.net/forum?id=HkeGhoA5FX
[20] Q. Bammey, R. G. V. Gioi, and J. Morel, “An adaptive neural network for unsupervised mosaic consistency analysis in image forensics,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 14182–14192, 2020.
[21] L. I. Rudin, S. Osher, and E. Fatemi, “Nonlinear total variation based noise removal algorithms,” Physica D: nonlinear phenomena, vol. 60, no. 1-4, pp. 259–268, 1992.
[22] A. Buades, B. Coll, and J.-M. Morel, “A non-local algorithm for image denoising,” in 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR), vol. 2. IEEE, 2005, pp. 60–65.
[23] M. Aharon, M. Elad, A. Bruckstein et al., “K-svd: An algorithm for designing overcomplete dictionaries for sparse representation,” IEEE Transactions on signal processing, vol. 54, no. 11, p. 4311, 2006.
[24] H. C. Burger, C. J. Schuler, and S. Harmeling, “Image denoising: Can plain neural networks compete with bm3d?” in 2012 IEEE conference on computer vision and pattern recognition (CVPR). IEEE, 2012, pp. 2392–2399.
[25] D. Martin, C. Fowlkes, D. Tal, and J. Malik, “A database of human segmented natural images and its application to evaluating segmentation algorithms and measuring ecological statistics,” in Computer Vision, 2001. ICCV 2001. Proceedings. Eighth IEEE International Conference on, vol. 2. IEEE, 2001, pp. 416–423.
[26] T. Plötz and S. Roth, “Neural nearest neighbors networks,” in NeurIPS, 2018.
[27] S. W. Hasinoff, “Photon, poisson noise,” in Computer Vision, A Reference Guide, 2014.
[28] S. W. Zamir, A. Arora, S. Khan, M. Hayat, F. Khan, M.-H. Yang, and L. Shao, “Cycleisp: Real image restoration via improved data synthesis,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2693–2702, 2020.
[29] T. Plotz and S. Roth, “Benchmarking denoising algorithms with real photographs,” 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2750–2759, 2017.
[30] D. Glasner, S. Bagon, and M. Irani, “Super-resolution from a single image,” 2009 IEEE 12th International Conference on Computer Vision (ICCV), pp. 349–356, 2009.
[31] R. Timofte, V. De Smet, and L. Van Gool, “A+: Adjusted anchored neighborhood regression for fast super-resolution,” in Asian Conference on Computer Vision. Springer, 2014, pp. 111–126.
[32] C. Dong, C. C. Loy, and X. Tang, “Accelerating the super-resolution convolutional neural network,” in European Conference on Computer Vision (ECCV). Springer, 2016, pp. 391–407.
[33] X. Wang, K. Yu, S. Wu, J. Gu, Y. Liu, C. Dong, Y. Qiao, and C. C. Loy, “Esrgan: Enhanced super-resolution generative adversarial networks,” in European Conference on Computer Vision (ECCV). Springer, 2018, pp. 63–79.
[34] X. Liu, K. Shi, Z. Wang, and J. Chen, “Exploit camera raw data for video super-resolution via hidden markov model inference,” IEEE Transactions on Image Processing, vol. 30, pp. 2127–2140, 2021.
[35] F. Heide, M. Steinberger, Y.-T. Tsai, M. Rouf, D. Pajak, D. Reddy, O. Gallo, J. Liu, W. Heidrich, K. Egiazarian et al., “Flexisp: A flexible camera image processing framework,” ACM Transactions on Graphics (TOG), vol. 33, no. 6, p. 231, 2014.
[36] E. Schwartz, R. Giryes, and A. M. Bronstein, “Deepisp: Toward learning an end-to-end image processing pipeline,” IEEE Transactions on Image Processing, vol. 28, pp. 912–923, 2019.
[37] T. Klatzer, K. Hammernik, P. Knobelreiter, and T. Pock, “Learning joint demosaicing and denoising based on sequential energy minimization,” in 2016 IEEE International Conference on Computational Photography (ICCP). IEEE, 2016, pp. 1–11.
[38] K. Zhang, W. Zuo, and L. Zhang, “Learning a single convolutional super-resolution network for multiple degradations,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 3262–3271.
[39] Y.-S. Xu, S.-Y. R. Tseng, Y. Tseng, H.-K. Kuo, and Y.-M. Tsai, “Unified dynamic convolutional network for super-resolution with variational degradations,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 12493–12502, 2020.
[40] L. Liu, X. Jia, J. Liu, and Q. Tian, “Joint demosaicing and denoising with self guidance,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2237–2246, 2020.
[41] W. Shi, J. Caballero, F. Huszár, J. Totz, A. P. Aitken, R. Bishop, D. Rueckert, and Z. Wang, “Real-time single image and video super-resolution using an efficient sub-pixel convolutional neural network,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 1874–1883.
[42] E. Agustsson and R. Timofte, “Ntire 2017 challenge on single image super-resolution: Dataset and study,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2017, pp. 126–135.
[43] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei, “Imagenet: A large-scale hierarchical image database,” in 2009 IEEE conference on computer vision and pattern recognition (CVPR). IEEE, 2009, pp. 248–255.
[44] Y. Xing, Z. Qian, and Q. Chen, “Invertible image signal processing,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2021, pp. 6287–6296.
[45] Sony. (2017) Pixel shift multi shooting. https://support.d-imaging.sony.co.jp/support/ilc/psms/ilce7rm3/en/index.html.
[46] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” CoRR, vol. abs/1412.6980, 2015.
[47] H. Tan, X. Zeng, S. Lai, Y. Liu, and M. Zhang, “Joint demosaicing and denoising of noisy bayer images with admm,” in 2017 IEEE International Conference on Image Processing (ICIP). IEEE, 2017, pp. 2951–2955.
[48] L. Condat and S. Mosaddegh, “Joint demosaicking and denoising by total variation minimization,” in 2012 19th IEEE International Conference on Image Processing. IEEE, 2012, pp. 2781–2784.
[49] J.-B. Huang, A. Singh, and N. Ahuja, “Single image super-resolution from transformed self-exemplars,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2015, pp. 5197–5206.
[50] B. Lim, S. Son, H. Kim, S. Nah, and K. Mu Lee, “Enhanced deep residual networks for single image super-resolution,” in Proceedings of the IEEE conference on computer vision and pattern recognition workshops, 2017, pp. 136–144.
[51] B. Wronski, I. Garcia-Dorado, M. Ernst, D. Kelly, M. Krainin, C.-K. Liang, M. Levoy, and P. Milanfar, “Handheld multi-frame super-resolution,” ACM Transactions on Graphics (TOG), vol. 38, no. 4, pp. 1–18, 2019.
