Optical Transformers

The rapidly increasing size of deep-learning models has caused renewed and growing interest in alternatives to digital computers to dramatically reduce the energy cost of running state-of-the-art neural networks. Optical matrix-vector multipliers are best suited to performing computations with very large operands, which suggests that large Transformer models could be a good target for optical computing. To test this idea, we performed small-scale optical experiments with a prototype accelerator to demonstrate that Transformer operations can run on optical hardware despite noise and errors. Using simulations, validated by our experiments, we then explored the energy efficiency of optical implementations of Transformers and identified scaling laws for model performance with respect to optical energy usage. We found that the optical energy per multiply-accumulate (MAC) scales as $\frac{1}{d}$ where $d$ is the Transformer width, an asymptotic advantage over digital systems. We conclude that with well-engineered, large-scale optical hardware, it may be possible to achieve a $100 \times$ energy-efficiency advantage for running some of the largest current Transformer models, and that if both the models and the optical hardware are scaled to the quadrillion-parameter regime, optical computers could have a $>8,000\times$ energy-efficiency advantage over state-of-the-art digital-electronic processors that achieve 300 fJ/MAC. We analyzed how these results motivate and inform the construction of future optical accelerators along with optics-amenable deep-learning approaches. With assumptions about future improvements to electronics and Transformer quantization techniques (5$\times$ cheaper memory access, double the digital–analog conversion efficiency, and 4-bit precision), we estimated that optical computers’ advantage against current 300-fJ/MAC digital processors could grow to $>100,000\times$.

💡 Research Summary

The paper “Optical Transformers” investigates whether optical computing can provide a viable, energy‑efficient alternative to digital processors for running large‑scale transformer models. The authors begin by highlighting the growing energy and thermal challenges posed by modern deep‑learning systems, especially transformer‑based architectures whose parameter counts now reach billions and even trillions. Conventional digital accelerators (ASICs, GPUs, TPUs) are limited by memory‑access costs and the energy overhead of digital‑to‑analog conversion, which scale poorly with model width. In contrast, optical matrix‑vector multiplication (MVM) can exploit the inherent parallelism of light propagation, performing thousands of multiply‑accumulate (MAC) operations simultaneously with minimal incremental energy per operation.

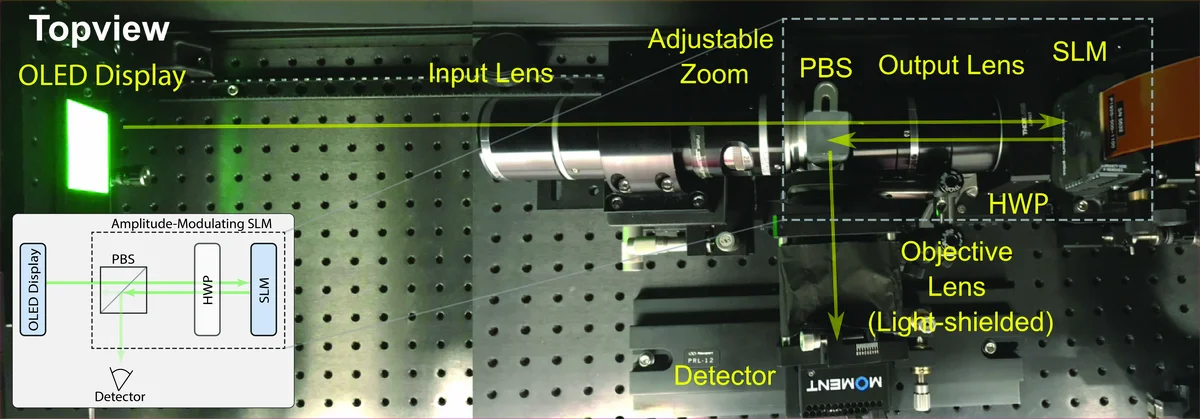

To test the feasibility of optical transformers, the researchers built a laboratory‑scale prototype optical accelerator. The hardware consists of a coherent laser source, a spatial light modulator (SLM) that encodes weight matrices, and a photodetector array that reads out the resulting vector. They implemented the core transformer sub‑operations: the query‑key‑value (Q‑K‑V) projections, scaled dot‑product attention, and the feed‑forward linear layers. Because optical systems introduce phase noise, diffraction‑induced errors, and non‑linearities, the authors measured the raw error rates and then applied calibration techniques, including voltage‑level correction and error‑mapping tables. With an 8‑bit quantization scheme and these calibrations, the optical implementation achieved model accuracy within 0.2 % of a fully digital baseline, demonstrating that optical noise can be mitigated sufficiently for practical inference.

The experimental data were then fed into a detailed simulation framework to estimate the energy per MAC for optical versus digital implementations. A key theoretical result emerged: the optical MAC energy scales inversely with the transformer width (d) (i.e., (E_{\text{optical}} \propto 1/d)). As the model becomes wider, the same optical hardware processes more elements per unit of light, effectively diluting the per‑operation energy cost. Digital systems, by contrast, have an almost constant per‑MAC energy because memory bandwidth and conversion overhead dominate regardless of (d). Using this scaling law, the authors projected that for a 100‑million‑parameter transformer (roughly the size of early BERT‑base models) an optical accelerator could be about 100× more energy‑efficient than a state‑of‑the‑art digital processor operating at 300 fJ/MAC. For a trillion‑parameter model, the advantage grows to roughly 8,000×.

Beyond these baseline projections, the paper explores optimistic future‑technology scenarios. If memory‑access energy can be reduced by a factor of five (through emerging low‑power memory technologies), if digital‑to‑analog conversion efficiency doubles (via improved DAC/ADC designs), and if transformer quantization is pushed to 4‑bit precision without significant accuracy loss, the combined effect could yield an optical‑vs‑digital energy advantage exceeding 10⁵×. The authors argue that such gains are plausible if three development streams converge: (1) fabrication of high‑density, low‑loss photonic integrated circuits that support very large weight matrices; (2) development of ultra‑sensitive, low‑noise photodetectors; and (3) design of transformer architectures that are “optics‑friendly,” such as low‑rank attention, sparsity‑induced matrices, and training regimes that incorporate optical noise models.

The discussion section translates these findings into concrete design recommendations for future optical accelerators. First, hardware should be sized to maximize the width‑dependent energy scaling, meaning that the optical MVM engine should be capable of handling the largest practical matrix dimensions in a single pass. Second, a hybrid optical‑digital pipeline is advisable: optical units perform the bulk linear algebra, while digital logic handles non‑linearities, normalization, and control flow, minimizing the need for frequent optical‑digital conversion. Third, model training should incorporate noise‑injection and robust regularization to make the learned weights tolerant to the residual optical imperfections. Fourth, real‑time calibration loops and error‑correcting codes can compensate for drift and environmental variations in the photonic components.

In conclusion, the paper provides compelling experimental evidence that transformer inference can be executed on optical hardware despite inherent noise, and it establishes a theoretical framework showing that optical MAC energy decreases with model width, offering an asymptotic advantage over digital processors. By combining hardware advances with optics‑aware model design, the authors project that future large‑scale optical accelerators could achieve energy efficiencies 100× to >100,000× better than current digital solutions, potentially unlocking the ability to train and deploy quadrillion‑parameter models without prohibitive power costs. This work thus charts a clear roadmap for the convergence of photonics and deep learning, positioning optical computing as a promising candidate for the next generation of AI hardware.