Edit wars in Wikipedia

We present a new, efficient method for automatically detecting severe conflicts, ‘edit wars’, in Wikipedia and evaluate this method on six different language editions of Wikipedia. We discuss how the number of edits and reverts, the length of discussions, and the burstiness of edits and reverts deviate in such pages from those following the general workflow, and argue that earlier work has significantly overestimated the contentiousness of the Wikipedia editing process.

💡 Research Summary

The paper “Edit wars in Wikipedia” introduces a novel, language‑independent metric for automatically detecting severe editorial conflicts—commonly called edit wars—across multiple Wikipedia language editions. The authors argue that earlier approaches, which relied heavily on simple counts of edits, reverts, or the presence of dispute tags, either over‑estimate the prevalence of conflict or miss many disputes that are not explicitly tagged.

Their method centers on the concept of mutual reverts: cases in which two editors have each reverted the other at least once. For each article they parse the full revision history and identify a revert whenever a new revision is textually identical to an earlier one, so that the edits in between are undone. Each mutual revert is represented as a pair (N_d, N_r), where N_d is the total number of edits made by the reverted editor and N_r is the total number of edits made by the reverting editor. By taking the minimum of these two numbers, min(N_d, N_r), they give high weight to conflicts between experienced editors while assigning low weight to typical vandalism reversions, which usually involve a newcomer with few edits.
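To make the revert-detection step concrete, here is a minimal Python sketch. It is not the authors' code: hashing revision texts with `hashlib.md5`, attributing each revert to the author of the immediately preceding revision, ignoring self-reverts, and the function names `detect_reverts` and `mutual_revert_pairs` are all assumptions made for illustration.

```python
import hashlib
from typing import FrozenSet, List, Set, Tuple

Revision = Tuple[str, str]  # (editor name, full article text after the edit)


def detect_reverts(history: List[Revision]) -> List[Tuple[str, str]]:
    """Return (reverter, reverted) editor pairs in chronological order.

    Assumption: revision i is a revert when its text is identical to some
    revision older than i - 1; the reverted editor is taken to be the author
    of revision i - 1. Self-reverts are ignored.
    """
    digests = [hashlib.md5(text.encode("utf-8")).hexdigest() for _, text in history]
    older: Set[str] = set()  # digests of revisions 0 .. i-2
    reverts: List[Tuple[str, str]] = []
    for i in range(1, len(history)):
        if i >= 2:
            older.add(digests[i - 2])
        reverter, reverted = history[i][0], history[i - 1][0]
        # A match with an older revision (but not a no-op repeat of i - 1) undoes edits.
        if digests[i] in older and digests[i] != digests[i - 1] and reverter != reverted:
            reverts.append((reverter, reverted))
    return reverts


def mutual_revert_pairs(reverts: List[Tuple[str, str]]) -> Set[FrozenSet[str]]:
    """Unordered editor pairs in which each editor reverted the other at least once."""
    directed = set(reverts)
    return {frozenset((a, b)) for a, b in directed if (b, a) in directed}


# Hypothetical history: A and B revert each other; A later undoes a vandal edit.
history = [("A", "v1"), ("B", "v2"), ("A", "v1"), ("B", "v2"),
           ("Vandal", "junk"), ("A", "v2")]
reverts = detect_reverts(history)
print(reverts)  # [('A', 'B'), ('B', 'A'), ('A', 'Vandal')]
print(mutual_revert_pairs(reverts))  # only the A-B pair is mutual
```

The vandal's edit is reverted, but because the vandal never reverts back, that pair never becomes a mutual revert.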

All such minima are summed to obtain a raw controversy score M_r. To account for the breadth of a conflict, they multiply M_r by the number of distinct editors E who have participated in at least one mutual revert, yielding M_i = E × M_r. Finally, they suppress the influence of a single dominant pair of editors (the “topmost mutually reverting editors”) to avoid cases where a dispute is essentially a two‑person feud. The final metric is:

M = E × ∑ min(N_d, N_r)

where the sum runs over all mutual revert pairs after the top‑pair exclusion.
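The scoring step can be sketched in the same hedged way. The function `controversy_score` is hypothetical, and three details are assumptions rather than statements from the paper: the sum runs once per distinct mutually reverting editor pair, the "topmost" pair is the one with the largest min(N_d, N_r), and E counts every editor in any mutual-revert pair, including the excluded one.

```python
from typing import Dict, FrozenSet, Set


def controversy_score(pairs: Set[FrozenSet[str]], edit_counts: Dict[str, int]) -> int:
    """M = E * sum(min(N_d, N_r)) over mutually reverting pairs, top pair excluded.

    Assumptions: one term per distinct editor pair; the "topmost" pair is the
    one with the largest min(N_d, N_r); E counts all editors in any pair.
    """
    if not pairs:
        return 0
    # min(N_d, N_r) is symmetric, so we need not know who reverted whom.
    weights = {pair: min(edit_counts[e] for e in pair) for pair in pairs}
    top_pair = max(weights, key=weights.get)
    raw = sum(w for pair, w in weights.items() if pair != top_pair)
    editors = set().union(*pairs)  # E = len(editors)
    return len(editors) * raw


pairs = {frozenset({"A", "B"}), frozenset({"A", "C"}), frozenset({"C", "D"})}
edit_counts = {"A": 50, "B": 40, "C": 5, "D": 2}
print(controversy_score(pairs, edit_counts))  # 4 * (5 + 2) = 28
```

Because the top pair is dropped, a page whose only conflict is a single two-person feud scores M = 0, which matches the intent of the top-pair exclusion. Ties for the top pair do not change the result, since any tied pair removes the same weight.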

The authors validate the metric on six Wikipedia language editions (English, German, French, Spanish, Hungarian, and Romanian, although the Romanian data were insufficient for full testing). They begin with a manually labeled seed set of 40 articles (20 clearly controversial, 20 clearly non‑controversial) and iteratively refine the metric by inspecting outliers. Table II shows that as the threshold M increases from 50 to 31 000, the proportion of truly controversial pages rises from 27 % to 97 %. Using a cutoff of M > 1 000 flags fewer than 1 % of all English‑Wikipedia articles (about 24 000 of roughly 3 million) as serious edit‑war candidates, a figure far lower than earlier estimates suggesting that up to 12 % of highly edited pages are contentious.
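Applying a cutoff and measuring how many flagged pages are truly controversial can be sketched as below. The helper names and the toy scores and labels are hypothetical, chosen only to exercise the code; "proportion of truly controversial pages" is interpreted here as the share of labeled pages above the threshold that carry a controversial label.

```python
from typing import Dict, List


def flag_edit_wars(scores: Dict[str, int], threshold: int = 1000) -> List[str]:
    """Titles whose controversy score M exceeds the cutoff (M > threshold)."""
    return sorted(title for title, m in scores.items() if m > threshold)


def precision_above(scores: Dict[str, int], labels: Dict[str, bool], threshold: int) -> float:
    """Fraction of labeled pages with M > threshold that are labeled controversial.

    Returns NaN when no labeled page clears the threshold.
    """
    above = [t for t, m in scores.items() if m > threshold and t in labels]
    if not above:
        return float("nan")
    return sum(labels[t] for t in above) / len(above)


# Hypothetical toy data, not taken from the paper.
scores = {"Page A": 45000, "Page B": 1200, "Page C": 300, "Page D": 20}
labels = {"Page A": True, "Page B": False, "Page C": False, "Page D": False}
print(flag_edit_wars(scores))                  # ['Page A', 'Page B']
print(precision_above(scores, labels, 1000))   # 0.5
print(precision_above(scores, labels, 30000))  # 1.0
```

Sweeping `threshold` over a range of values reproduces the shape of a precision-versus-cutoff table: higher cutoffs flag fewer pages but a larger share of them are genuine conflicts.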

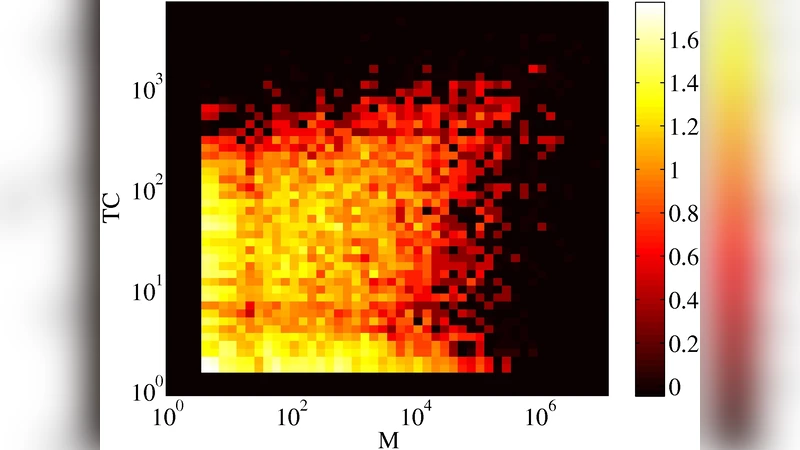

Comparative experiments (Table III) demonstrate that the new metric outperforms simpler baselines such as total edit count, raw revert count, mutual‑revert count, and even the number of dispute tags (TC). While tag‑based methods achieve high precision in English, they fail to generalize to smaller or culturally different Wikipedias; the M‑metric maintains superior precision across all tested languages, reducing classification errors by more than 50 % relative to the best prior method.

Beyond detection, the authors discuss the metric’s ability to distinguish genuine edit wars from vandalism. High M values with few or no dispute tags often correspond to conflicts that the community has not formally recognized, whereas low M values paired with many tags usually indicate superficial disagreements or well‑tagged but low‑impact disputes. The authors suggest that monitoring the temporal evolution of M could enable early warning systems that flag pages on the brink of an edit war, allowing bots or moderators to intervene proactively.
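An early-warning monitor of the kind suggested here could recompute M incrementally as revisions arrive. The sketch below is an illustration under stated assumptions (hash-based revert detection, reverts blamed on the previous revision's author, N_d and N_r counted as edits to this article so far, top pair excluded); the class `EditWarMonitor` and its `alarm_threshold` parameter are hypothetical, and recomputing the sum on every step is the simplest rather than the most efficient choice.

```python
import hashlib
from collections import Counter
from typing import Optional, Set, Tuple


class EditWarMonitor:
    """Recompute the controversy score M after every new revision of one article."""

    def __init__(self, alarm_threshold: int = 1000) -> None:
        self.alarm_threshold = alarm_threshold
        self.edit_counts: Counter = Counter()         # edits per editor on this article
        self.older: Set[str] = set()                  # digests older than the last revision
        self.last: Optional[Tuple[str, str]] = None   # (editor, digest) of last revision
        self.directed: Set[Tuple[str, str]] = set()   # (reverter, reverted)

    def observe(self, editor: str, text: str) -> int:
        """Record one revision and return the updated M."""
        digest = hashlib.md5(text.encode("utf-8")).hexdigest()
        self.edit_counts[editor] += 1
        if self.last is not None:
            prev_editor, prev_digest = self.last
            # Revert: matches a revision older than the previous one (assumption).
            if digest in self.older and digest != prev_digest and editor != prev_editor:
                self.directed.add((editor, prev_editor))
            self.older.add(prev_digest)
        self.last = (editor, digest)
        return self.score()

    def score(self) -> int:
        """M = E * sum of min(N_d, N_r) over mutual pairs, top pair excluded."""
        pairs = {frozenset(p) for p in self.directed if (p[1], p[0]) in self.directed}
        if not pairs:
            return 0
        weights = {p: min(self.edit_counts[e] for e in p) for p in pairs}
        top = max(weights, key=weights.get)
        editors = set().union(*pairs)
        return len(editors) * sum(w for p, w in weights.items() if p != top)

    def alarm(self) -> bool:
        """True once M exceeds the configured threshold."""
        return self.score() > self.alarm_threshold


# Hypothetical stream: A and B war, then A and C war.
monitor = EditWarMonitor(alarm_threshold=5)
stream = [("A", "a"), ("B", "b"), ("A", "a"), ("B", "b"),
          ("C", "c"), ("A", "b"), ("C", "c")]
print([monitor.observe(e, t) for e, t in stream])  # [0, 0, 0, 0, 0, 0, 6]
print(monitor.alarm())  # True
```

M stays at zero while only one pair is fighting (that pair is the excluded top pair) and jumps once a second mutual conflict appears, which is the kind of transition an early-warning bot would watch for.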

The paper concludes that, contrary to popular belief, the vast majority of Wikipedia articles evolve peacefully; less than one percent exhibit the level of conflict that justifies the “edit war” label. Their language‑agnostic, quantitatively robust metric provides a scalable tool for researchers and Wikipedia administrators to monitor, study, and eventually mitigate editorial conflicts across the entire multilingual Wikipedia ecosystem. Future work includes extending the approach to detect pure vandalism, refining low‑M classification, and leveraging crowdsourcing platforms such as Mechanical Turk to generate larger ground‑truth datasets for further validation.
