ECON: Explicit Clothed humans Optimized via Normal integration

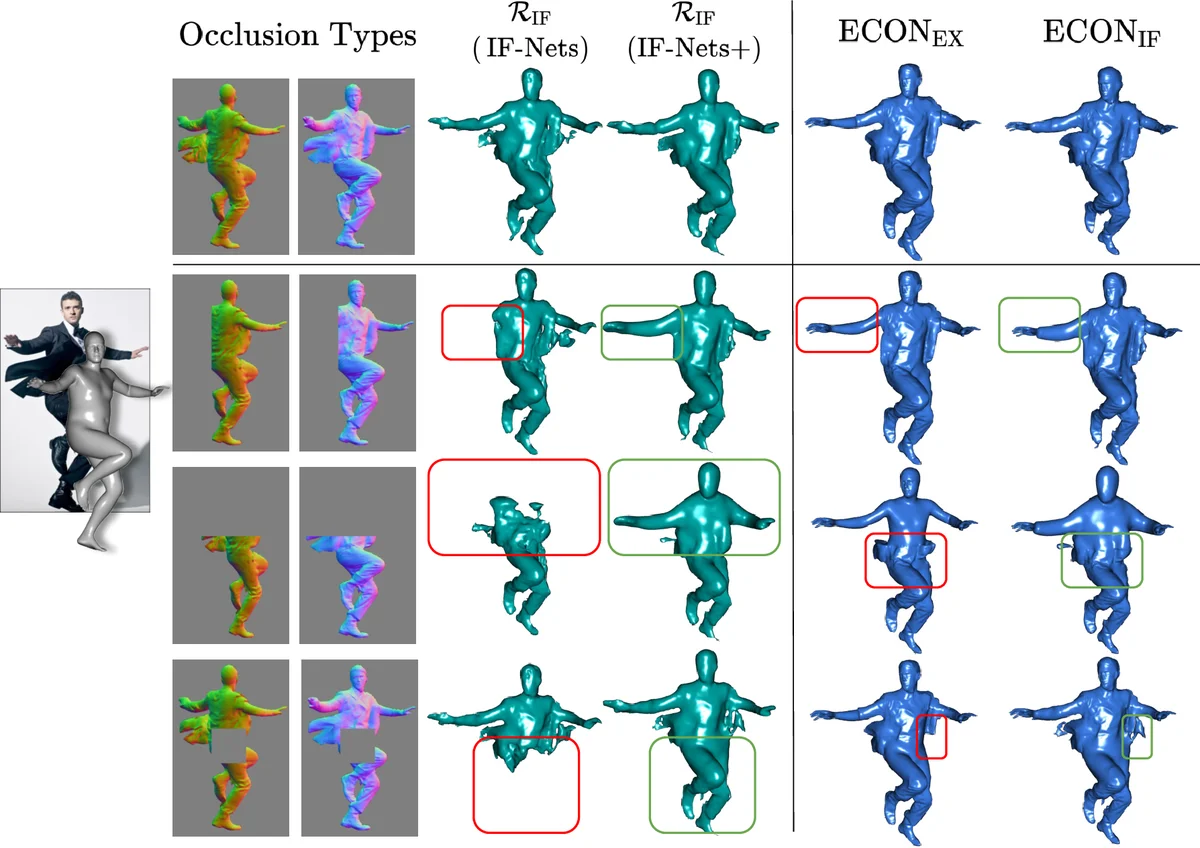

The combination of deep learning, artist-curated scans, and Implicit Functions (IF) is enabling the creation of detailed, clothed, 3D humans from images. However, existing methods are far from perfect. IF-based methods recover free-form geometry, but produce disembodied limbs or degenerate shapes for novel poses or clothes. To increase robustness for these cases, existing work uses an explicit parametric body model to constrain surface reconstruction, but this limits the recovery of free-form surfaces such as loose clothing that deviates from the body. What we want is a method that combines the best properties of implicit representation and explicit body regularization. To this end, we make two key observations: (1) current networks are better at inferring detailed 2D maps than full-3D surfaces, and (2) a parametric model can be seen as a “canvas” for stitching together detailed surface patches. Based on these, our method, ECON, has three main steps: (1) It infers detailed 2D normal maps for the front and back side of a clothed person. (2) From these, it recovers 2.5D front and back surfaces, called d-BiNI, that are equally detailed, yet incomplete, and registers these w.r.t. each other with the help of a SMPL-X body mesh recovered from the image. (3) It “inpaints” the missing geometry between d-BiNI surfaces. If the face and hands are noisy, they can optionally be replaced with the ones of SMPL-X. As a result, ECON infers high-fidelity 3D humans even in loose clothes and challenging poses. This goes beyond previous methods, according to the quantitative evaluation on the CAPE and Renderpeople datasets. Perceptual studies also show that ECON’s perceived realism is better by a large margin. Code and models are available for research purposes at econ.is.tue.mpg.de.

💡 Research Summary

The paper introduces ECON, a novel framework for reconstructing high‑fidelity, clothed 3D humans from a single RGB image by combining the strengths of implicit surface representations and explicit parametric body models. Existing implicit‑function (IF) approaches excel at capturing free‑form geometry but often produce disconnected limbs or degenerate shapes when faced with novel poses or loose clothing. Conversely, methods that rely on a parametric model such as SMPL‑X enforce a strong body prior, which limits the ability to represent garments that deviate significantly from the underlying body. ECON addresses these complementary weaknesses through three key steps.

First, a dual‑branch deep network predicts detailed 2‑D normal maps for the front and back of the subject. The authors argue that current convolutional architectures are more reliable at estimating high‑resolution 2‑D cues (normals, depth) than directly regressing full 3‑D geometry. The normal prediction network builds on a U‑Net backbone, incorporates pose cues, and outputs dense normal fields that encode fine surface details such as wrinkles and folds.
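To make concrete what a predicted normal map stores, here is a minimal sketch of decoding one pixel into a unit normal. The encoding `n = 2*c/255 - 1` is a common convention for normal images and is an assumption here, not something the summary confirms about ECON's network output; `decode_normal` is a hypothetical helper name.

```python
import math


def decode_normal(rgb):
    """Decode an 8-bit RGB pixel into a unit surface normal.

    Assumed encoding (common for normal images, not confirmed for ECON):
    each channel c in [0, 255] maps to 2*c/255 - 1 in [-1, 1].
    """
    v = [2.0 * c / 255.0 - 1.0 for c in rgb]
    length = math.sqrt(sum(x * x for x in v))
    # Re-normalise, because 8-bit quantisation breaks exact unit length.
    return tuple(x / length for x in v)


# A bluish pixel decodes to a normal pointing roughly along +z (towards the camera
# under this assumed convention).
front_facing = decode_normal((128, 128, 255))
```

Under this convention the front and back maps are simply two such images, one per viewing direction.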

Second, the predicted normals are fused with an estimated depth map to reconstruct two incomplete but highly detailed 2.5‑D surfaces, termed d‑BiNI surfaces after the optimization that produces them (depth‑aware, silhouette‑consistent bilateral normal integration). Each d‑BiNI surface consists of a point cloud with associated normal vectors for either the front or the back view. Because they are derived from 2‑D maps, these surfaces retain the resolution of the original image while avoiding the computational burden of full volumetric implicit fields. However, the two halves are not yet aligned in a common 3‑D coordinate system.
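The basic idea of turning a normal map into a 2.5‑D surface can be sketched with naive path integration. This is deliberately not d‑BiNI: it has no depth prior, no silhouette term, and no handling of depth discontinuities. It only shows that normals determine depth gradients, which can then be summed up to an unknown constant offset. The function names and the orthographic, unit-pixel-spacing setup are assumptions for illustration.

```python
def normals_to_gradients(normals):
    """Convert an H x W grid of normals (nx, ny, nz), nz > 0, to depth gradients.

    For a surface z(x, y) the normal is proportional to (-dz/dx, -dz/dy, 1),
    so dz/dx = -nx/nz and dz/dy = -ny/nz. Normals need not be unit length.
    """
    p = [[-n[0] / n[2] for n in row] for row in normals]
    q = [[-n[1] / n[2] for n in row] for row in normals]
    return p, q


def integrate_depth(p, q):
    """Naive integration: down the first column using q, then along rows using p.

    Uses the trapezoidal rule with unit pixel spacing; z[0][0] is fixed to 0
    because normals only determine depth up to a constant offset.
    """
    h, w = len(p), len(p[0])
    z = [[0.0] * w for _ in range(h)]
    for y in range(1, h):
        z[y][0] = z[y - 1][0] + 0.5 * (q[y - 1][0] + q[y][0])
    for y in range(h):
        for x in range(1, w):
            z[y][x] = z[y][x - 1] + 0.5 * (p[y][x - 1] + p[y][x])
    return z


# Usage: a tilted plane z = 0.5*x - 0.25*y has the constant normal (-0.5, 0.25, 1).
plane_normals = [[(-0.5, 0.25, 1.0) for _ in range(4)] for _ in range(3)]
depth = integrate_depth(*normals_to_gradients(plane_normals))
```

Path integration accumulates noise along the path and smears across occlusion boundaries, which is one reason practical pipelines solve a global optimization with extra priors instead.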

To align them, ECON leverages a SMPL‑X body mesh that is fitted to the input image using an off‑the‑shelf pose and shape estimator. The SMPL‑X mesh serves as a “canvas” that provides a canonical body coordinate frame. An optimization step (similar to ICP but guided by the SMPL‑X prior) registers the front and back d‑BiNI surfaces to this canvas, yielding a coherent but still incomplete model.
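Since the summary likens the registration to ICP, a generic translation-only ICP loop is sketched below. It is not ECON's optimizer: it ignores rotation, scale, and the SMPL‑X prior, and uses brute-force nearest neighbours. The names `icp_translation` and `nearest` are hypothetical.

```python
def nearest(point, cloud):
    """Return the point in `cloud` closest to `point` (brute force, squared distance)."""
    return min(cloud, key=lambda c: sum((a - b) ** 2 for a, b in zip(point, c)))


def icp_translation(source, target, iterations=20):
    """Estimate a translation t such that source + t best matches target.

    Each iteration: (1) move the source by the current t, (2) match every moved
    point to its nearest target point, (3) add the mean residual to t, which is
    the least-squares optimal translation update for those matches.
    """
    t = [0.0, 0.0, 0.0]
    for _ in range(iterations):
        moved = [tuple(s[i] + t[i] for i in range(3)) for s in source]
        matches = [nearest(m, target) for m in moved]
        step = [
            sum(q[i] - m[i] for m, q in zip(moved, matches)) / len(source)
            for i in range(3)
        ]
        t = [t[i] + step[i] for i in range(3)]
    return tuple(t)


# Usage: a small grid standing in for body-mesh samples, and a shifted copy
# standing in for a partial surface that needs to be registered to it.
body = [(float(x), float(y), 0.0) for x in range(3) for y in range(3)]
surface = [(x + 0.2, y - 0.1, z + 0.3) for x, y, z in body]
offset = icp_translation(surface, body)
```

Like any ICP variant, this only converges to the right answer when the initial misalignment is small relative to the point spacing.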

Third, the missing geometry between the registered front and back surfaces is “inpainted” by a shape‑completion step. The authors employ IF‑Nets+, an implicit‑function completion network that is conditioned on the SMPL‑X body, to predict plausible geometry in occluded regions. The completed shape and the two d‑BiNI halves are then fused into a single surface with Poisson surface reconstruction, effectively “stitching” them together. For particularly noisy regions such as the face and hands, ECON can optionally replace them with the corresponding SMPL‑X parts, ensuring a clean final model.

The method is evaluated on two large‑scale datasets: CAPE (which contains diverse clothing and poses) and Renderpeople (high‑quality photorealistic scans). Quantitative metrics, including Chamfer distance, point‑to‑point error, and normal consistency, show that ECON reduces reconstruction error by roughly 15–20% compared with state‑of‑the‑art IF‑only, SMPL‑only, and hybrid baselines. A user study further confirms that participants perceive ECON’s outputs as more realistic, especially in challenging scenarios involving flowing dresses, capes, or extreme limb articulation.
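For readers unfamiliar with the metrics named above, the sketch below shows one common definition of Chamfer distance and of normal consistency on point sets. Conventions vary between papers (squared versus unsquared distances, summing versus averaging the two directions, image-space versus point-space normals), so this should not be read as the exact evaluation protocol behind the reported numbers.

```python
import math


def _nearest_index(p, cloud):
    """Index of the point in `cloud` closest to `p` (brute force)."""
    return min(range(len(cloud)), key=lambda i: math.dist(p, cloud[i]))


def chamfer_distance(a, b):
    """Symmetric Chamfer distance: mean nearest-neighbour distance a->b plus b->a."""
    a_to_b = sum(math.dist(p, b[_nearest_index(p, b)]) for p in a) / len(a)
    b_to_a = sum(math.dist(p, a[_nearest_index(p, a)]) for p in b) / len(b)
    return a_to_b + b_to_a


def normal_consistency(a_pts, a_normals, b_pts, b_normals):
    """Mean dot product between each normal in a and the normal of its nearest point in b.

    Assumes unit-length normals; 1.0 means perfectly agreeing orientations.
    """
    total = 0.0
    for p, n in zip(a_pts, a_normals):
        m = b_normals[_nearest_index(p, b_pts)]
        total += sum(x * y for x, y in zip(n, m))
    return total / len(a_pts)


# Usage: a toy "reconstruction" and "ground truth" offset by one unit along z.
recon = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
scan = [(0.0, 0.0, 1.0), (10.0, 0.0, 1.0)]
up = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0)]
cd = chamfer_distance(recon, scan)
nc = normal_consistency(recon, up, scan, up)
```

In practice the point sets are sampled densely from the meshes and a spatial index (for example a k-d tree) replaces the brute-force search.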

In addition to the performance gains, the authors release code, pretrained models, and a detailed training protocol, facilitating reproducibility and future research. ECON’s core insight—that high‑resolution 2‑D normal estimation combined with a parametric body canvas can bridge the gap between free‑form garment detail and robust body regularization—opens new avenues for applications such as virtual try‑on, digital avatars for AR/VR, and realistic human digitization pipelines. The paper demonstrates that by treating the parametric model as a stitching substrate rather than a hard constraint, it is possible to capture both the nuanced surface detail of loose clothing and the structural stability required for arbitrary poses.
