Feature-Domain Adaptive Contrastive Distillation for Efficient Single Image Super-Resolution

Convolutional neural network-based single image super-resolution (SISR) involves numerous parameters and high computational expenses to ensure improved performance, limiting its applicability in resource-constrained devices such as mobile phones. Knowledge distillation (KD), which transfers useful knowledge from a teacher network to a student network, has been investigated as a method to make networks more efficient in terms of performance. To this end, feature distillation (FD) has been utilized in KD to minimize the Euclidean distance-based loss of feature maps between teacher and student networks. However, this technique does not adequately consider the effective and meaningful delivery of knowledge from the teacher to the student network to improve the latter’s performance under given network capacity constraints. In this study, we propose a feature-domain adaptive contrastive distillation (FACD) method to train lightweight student SISR networks efficiently. We highlight the limitations of existing FD methods in terms of Euclidean distance-based loss, and propose a feature-domain contrastive loss, which causes student networks to learn richer information from the teacher’s representation in the feature domain. We also implement adaptive distillation that performs distillation selectively depending on the conditions of the training patches. Experimental results demonstrated that the proposed FACD scheme improves student enhanced deep residual networks and residual channel attention networks not only in terms of the peak signal-to-noise ratio (PSNR) on all benchmark datasets and scales but also in terms of subjective image quality, compared to the conventional FD approaches. In particular, FACD achieved an average PSNR improvement of 0.07 dB over conventional FD in both networks. Code will be release at https://github.com/hcmoon0613/FACD.

💡 Research Summary

Single‑image super‑resolution (SISR) has achieved remarkable visual quality thanks to very deep convolutional neural networks, but the resulting models are often too large and computationally demanding for deployment on resource‑constrained devices such as smartphones. Knowledge distillation (KD) offers a practical remedy by transferring the “knowledge” of a high‑capacity teacher network to a lightweight student network. Most recent KD approaches for SISR rely on feature‑distillation (FD), which minimizes the Euclidean (L2) distance between intermediate feature maps of teacher and student. While straightforward, this Euclidean loss only penalizes absolute differences and ignores the relational structure of the teacher’s feature space—i.e., how features of the same semantic content cluster together and how different contents are separated. Consequently, a student with limited capacity may receive sub‑optimal guidance, limiting the achievable performance gain.

The paper introduces Feature‑domain Adaptive Contrastive Distillation (FACD), a two‑fold improvement over conventional FD. First, it replaces the L2 feature loss with a contrastive loss defined directly in the feature domain. Inspired by recent contrastive learning frameworks, the method treats a teacher’s feature at a given spatial location and channel as a “positive” sample for the student, while features extracted from other locations, other channels, or other patches serve as “negative” samples. An InfoNCE‑style loss encourages the student’s representation to be close to the teacher’s positive feature while being far from the negatives, thereby forcing the student to mimic the relational geometry of the teacher’s embedding space rather than merely copying raw values. This richer supervisory signal enables the student to capture more discriminative and semantically meaningful cues, which are especially valuable when the student’s parameter budget is tight.

Second, FACD incorporates an adaptive weighting mechanism that modulates the strength of distillation on a per‑patch basis. The authors observe that not all training patches provide equally useful teacher information; low‑texture or highly noisy patches may mislead the student if forced to follow the teacher too strictly. To address this, they compute a scalar weight α for each patch based on the teacher’s feature energy (a proxy for information richness) and the current student loss on that patch. Patches with high teacher energy and large student error receive a larger α, intensifying the contrastive guidance, whereas easy or low‑information patches receive a smaller α, allowing the student to rely more on its own reconstruction loss. The final training objective is a weighted sum of three terms: (1) the standard L1/L2 reconstruction loss on the output image, (2) the contrastive feature loss, and (3) the α‑scaled distillation term. This adaptive scheme stabilizes training, accelerates convergence, and prevents over‑regularization on uninformative samples.

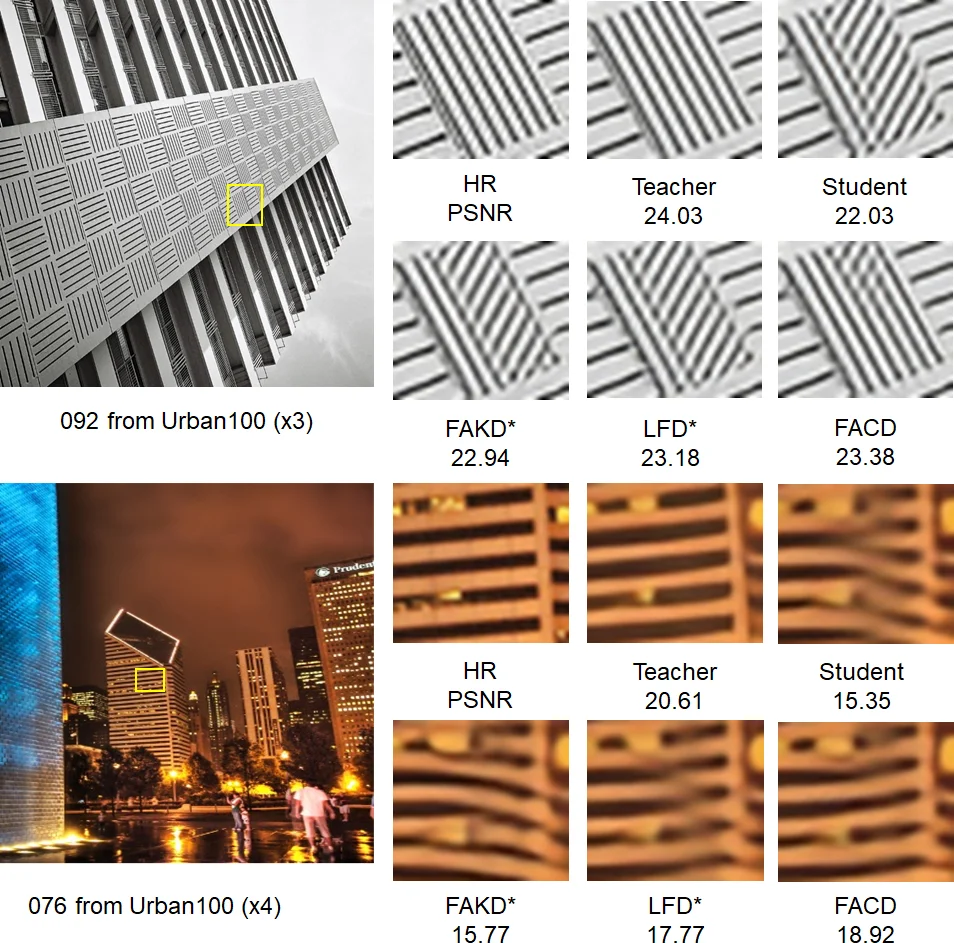

Experimental validation focuses on two popular SISR backbones: Enhanced Deep Residual Network (EDRN) and Residual Channel Attention Network (RCAN). For each backbone, a lightweight student version is constructed (EDRN‑Lite and RCAN‑Lite). The teacher models are the full‑size EDSR and RCAN trained on DIV2K. Training uses random 48‑pixel crops from DIV2K, while evaluation covers five standard benchmarks (Set5, Set14, B100, Urban100, Manga109) at scaling factors ×2, ×3, and ×4. Performance is measured by PSNR, SSIM, and subjective visual quality (MOS). FACD consistently outperforms the Euclidean‑based FD baseline across all datasets and scales. The average PSNR gain is 0.07 dB, with a peak improvement of 0.12 dB on the texture‑rich Urban100 set. SSIM shows a modest increase, and visual inspection reveals sharper edges, better texture restoration, and fewer ringing artifacts. A thorough ablation study isolates the contributions of the contrastive loss and the adaptive weighting. Using only the contrastive loss yields a modest boost, while adaptive weighting alone provides a smaller benefit; the combination yields the largest gain, confirming their synergistic effect. Varying the number of negative samples in the contrastive term shows that 128 negatives strike the best trade‑off between computational cost and performance.

The authors acknowledge a few limitations. The contrastive loss requires sampling negative features, which is currently done randomly; more sophisticated negative mining could further improve efficiency. The adaptive weighting introduces a minor overhead (≈2 % extra FLOPs), but this is negligible for most mobile inference scenarios. Moreover, the absolute PSNR improvement, while statistically significant, is modest; however, in the regime where lightweight models are already near their performance ceiling, even a 0.07 dB gain can be valuable.

Future directions suggested include (i) leveraging teacher class or semantic information to select more informative negatives, (ii) employing meta‑learning to learn the optimal α‑function end‑to‑end, and (iii) extending FACD to video super‑resolution, multi‑spectral SR, or other low‑level vision tasks where feature relationships are crucial. The authors release their code and training scripts at https://github.com/hcmoon0613/FACD, facilitating reproducibility and further research.

In summary, FACD advances SISR knowledge distillation by (a) replacing Euclidean feature matching with a feature‑domain contrastive objective that captures relational structure, and (b) adaptively modulating distillation strength based on patch‑wise information content. This dual strategy enables lightweight student networks to inherit richer teacher knowledge, leading to consistent gains in both objective metrics and perceived image quality across a broad set of benchmarks.