On the Robustness of Median Sampling in Noisy Evolutionary Optimization

Evolutionary algorithms (EAs) are a class of nature-inspired metaheuristics with wide applications in practical optimization problems. In these problems, objective evaluations are usually inaccurate because noise is almost inevitable in the real world, so weakening the negative effect of noise is a crucial issue. Sampling is a popular strategy: it evaluates the objective multiple times and uses the mean of the results as an estimate of the objective value. In this work, we introduce a novel sampling method, median sampling, into EAs, and illustrate its properties and usefulness theoretically by solving OneMax, the problem of maximizing the number of 1s in a bit string. Instead of the mean, median sampling uses the median of the evaluation results as the estimate. Through rigorous theoretical analysis on OneMax under the commonly used one-bit noise, we show that median sampling reduces the expected runtime exponentially. Next, through two special noise models, we show that when the 2-quantile (the median) of the noisy fitness increases with the true fitness, median sampling can be better than mean sampling; otherwise, it may fail and mean sampling can be better. The results may guide the proper use of median sampling in practical applications.

💡 Research Summary

The paper investigates a new sampling strategy for noisy evolutionary optimization, namely median sampling, and provides a rigorous theoretical analysis of its performance on the classic OneMax problem under various noise models. Evolutionary algorithms (EAs) often face noisy fitness evaluations in real‑world applications; the standard approach to mitigating noise is to evaluate each solution multiple times and use the arithmetic mean of the samples (mean sampling). While mean sampling reduces the variance of the estimate in inverse proportion to the sample size, it is highly sensitive to outliers and can be ineffective when the noise distribution is asymmetric or contains extreme values.
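The difference between the two estimators can be illustrated with a minimal Python sketch. The noise model here (`outlier_noisy_onemax`, with its `p_outlier` and `outlier` parameters) is a hypothetical one chosen only to show outlier sensitivity; it is not one of the noise models analysed in the paper, and the function names are illustrative.

```python
import random
import statistics


def outlier_noisy_onemax(x, rng, p_outlier=0.1, outlier=-1000):
    """Hypothetical noise model (an assumption, not from the paper):
    usually returns the true OneMax value, occasionally an extreme outlier."""
    true_value = sum(x)
    return outlier if rng.random() < p_outlier else true_value


def sampled_fitness(x, m, reducer, rng):
    """Evaluate x independently m times and combine the results with reducer."""
    samples = [outlier_noisy_onemax(x, rng) for _ in range(m)]
    return reducer(samples)


rng = random.Random(0)
x = [1, 1, 0, 1, 0, 1, 1, 1]  # true OneMax value is 6

# Mean sampling: every outlier drags the estimate away from the true value.
mean_estimate = sampled_fitness(x, 101, statistics.mean, rng)
# Median sampling: the estimate is unaffected unless most samples are outliers.
median_estimate = sampled_fitness(x, 101, statistics.median, rng)

print("mean estimate:  ", mean_estimate)
print("median estimate:", median_estimate)
```

An odd sample size such as 101 keeps the median equal to one of the observed values rather than an average of two middle values.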

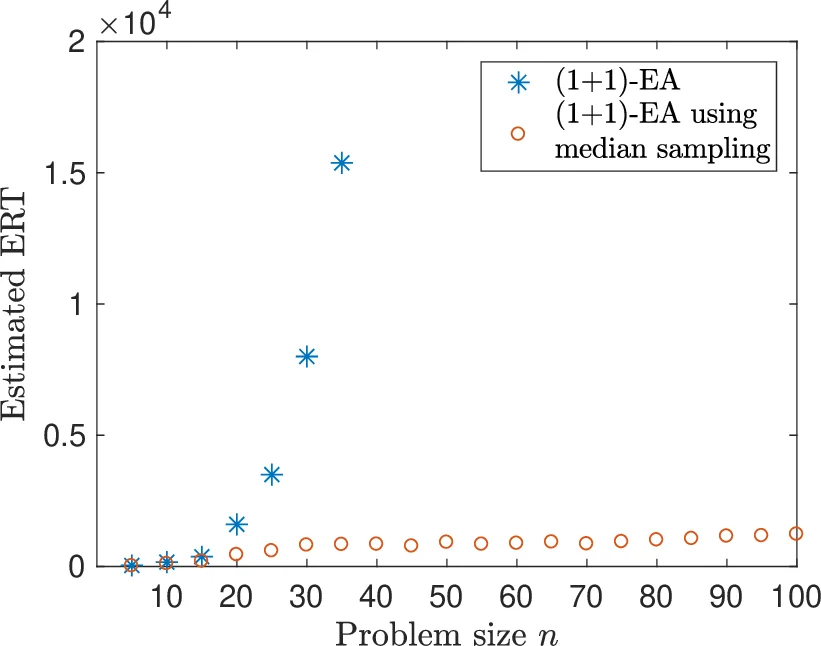

The authors focus on the (1+1)-EA, the simplest evolutionary algorithm, and study its expected runtime (ERT) when solving OneMax (maximizing the number of 1‑bits) under the widely used one‑bit noise model. In this model, with probability p a uniformly chosen bit of the solution is flipped before evaluation, so the noisy fitness value differs from the true fitness by at most 1. Prior work showed that without sampling the ERT becomes super‑polynomial when p = ω(log n / n), and that mean sampling with a large enough sample size (e.g., m = 4 n³) restores polynomial runtime only for relatively small p.
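The setting described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, the parent is re-evaluated in every iteration, offspring with an equal estimate are accepted, and the run stops as soon as the true optimum is reached. The demo uses a small sample size `m` for speed rather than the cubic sample sizes used in the analysis.

```python
import random
import statistics


def onemax(x):
    """True fitness: the number of 1-bits."""
    return sum(x)


def one_bit_noisy_onemax(x, p, rng):
    """One-bit noise: with probability p, evaluate x with one uniformly chosen bit flipped."""
    if rng.random() < p:
        i = rng.randrange(len(x))
        # Flipping a 0 adds 1 to the fitness, flipping a 1 subtracts 1.
        return onemax(x) + (1 - 2 * x[i])
    return onemax(x)


def sampled_fitness(x, p, m, reducer, rng):
    """Combine m independent noisy evaluations with reducer (mean or median)."""
    return reducer([one_bit_noisy_onemax(x, p, rng) for _ in range(m)])


def one_plus_one_ea(n, p, m, reducer, max_iters, rng):
    """(1+1)-EA with sampling; returns the iteration at which the true optimum
    is first reached, or None if max_iters is exhausted."""
    x = [rng.randint(0, 1) for _ in range(n)]
    for t in range(max_iters):
        if onemax(x) == n:
            return t
        # Standard bit-wise mutation: flip each bit independently with probability 1/n.
        y = [b ^ 1 if rng.random() < 1.0 / n else b for b in x]
        # Assumption: both parent and offspring are re-evaluated each iteration.
        if sampled_fitness(y, p, m, reducer, rng) >= sampled_fitness(x, p, m, reducer, rng):
            x = y
    return None


rng = random.Random(42)
iters = one_plus_one_ea(n=10, p=0.3, m=11, reducer=statistics.median,
                        max_iters=5000, rng=rng)
print("iterations until optimum (None if not reached):", iters)
```

Passing `statistics.mean` as `reducer` gives the mean-sampling variant, which makes it easy to compare the two strategies empirically on small instances.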

The main contribution is the introduction of median sampling, which takes the median of m independent noisy evaluations as the fitness estimate. The authors prove that with a sample size m = 2 n³ + 1, median sampling yields a polynomial ERT for any noise probability p ∈
