RTF-Based Binaural MVDR Beamformer Exploiting an External Microphone in a Diffuse Noise Field

Besides suppressing all undesired sound sources, an important objective of a binaural noise reduction algorithm for hearing devices is the preservation of the binaural cues, aiming at preserving the spatial perception of the acoustic scene. A well-kn…

Authors: N. G"o{ss}ling, S. Doclo

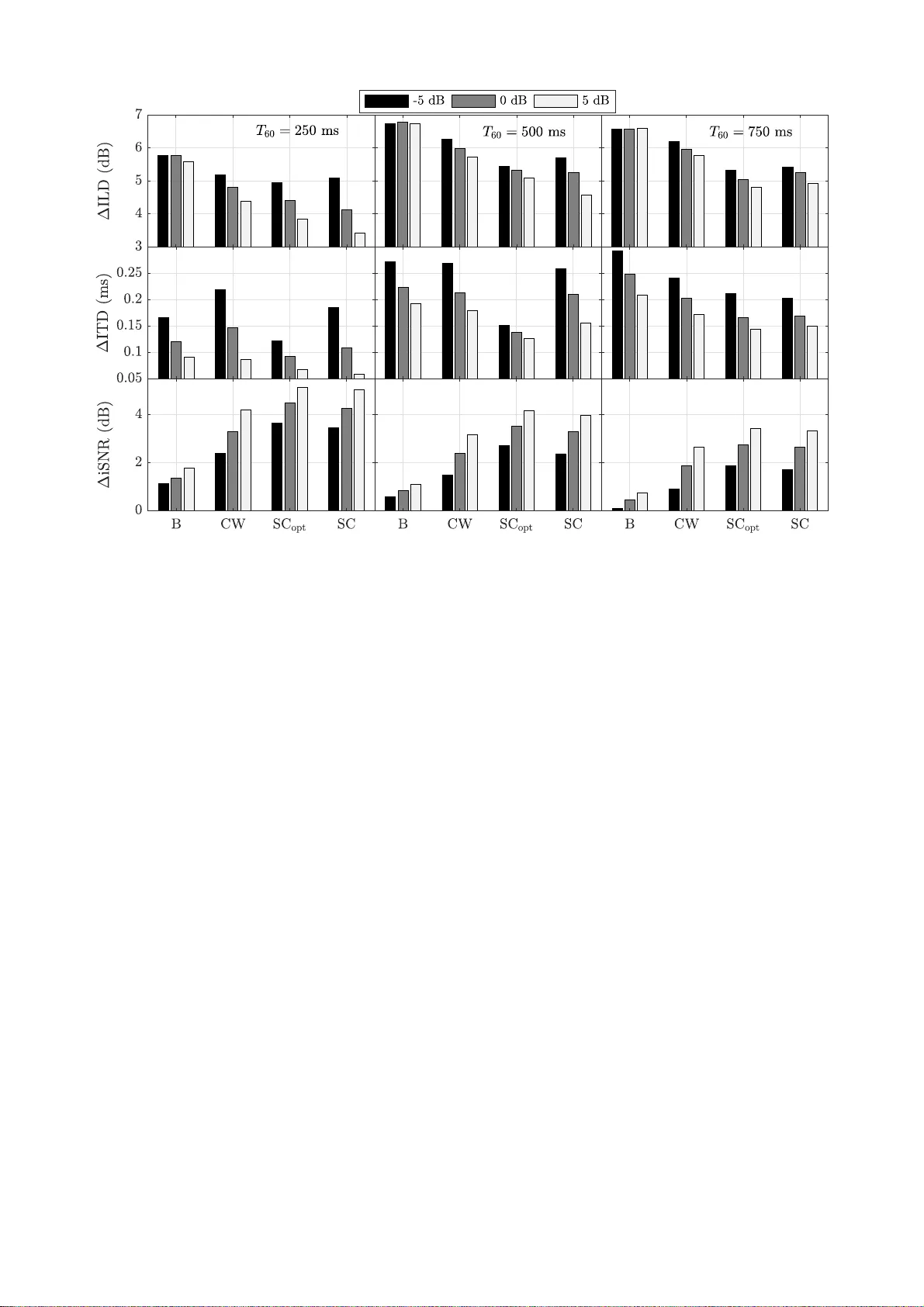

R TF-Based Binaural MVD R Beamf ormer Exploiting an External Microphone in a Diffuse Noise Field Nico Gößling, Simon Doclo Univ ersit y of Oldenb urg , Department of M edical Physics and Aco ustics and Cluster o f Excellence Hearing4 All, Oldenbu rg, German y Email: {nico. goessling, simon.doclo}@uni-old enburg.de W eb: www.sig proc.uni-olde nburg.de Abstract Besides suppressing all un desired sound sources, an important ob- jectiv e of a binaural noise reduction algorithm for hearing devices is the preserv ation of the binaural cues, aiming at preserving the spatial perception of the acoustic scene. A well-kno wn binaural noise reduction algorithm is the binaural minimum v ariance dis- tortionless response beamformer , which can be steered using the relativ e transfer function (R TF ) vector of the desired source, relat- ing t he acoustic transfer functions between the desired source and all microphones to a reference micropho ne. In this paper , we pro- pose a computationally efficient method to estimate the R TF vector in a dif fuse noise field, requiring an additional microphone that is spatially separated from the head-mounted microphones. Assum- ing that the spatial cohe rence between the noise componen ts in the head-moun ted mi crophone signals and the additional microphone signal is zero, we show that an unbiased estimate of the R TF vec- tor can be obtained. Based on real-world recordings, e xperimental results for sev eral re verb eration times show that the proposed R T F estimator outperforms the widely used R TF estimator based on co - v ariance whitening and a simple biased RTF estimator in t erms of noise reduction and binaural cue preservation performance. 1 Introd uction Noise reduction algorithms for head-mounted assistive list ening de vices (e.g., hearing aids, cochlear implants, hearables) are cru- cial to improve speech intelligibility and speech quality i n noisy en vironments. Binaural noise reduction algorithms are able to use the spatial information captured by all microphones on both sides of the head [1, 2]. Besides suppressing undesired sound sources, binaural noise reduction algorithms also aim at preserving the lis- tener’ s spatial perception of the acoustic scene to assure spatial aw areness, to redu ce confusions due to a possible mismatch be- tween acoustical and visual information, and to enable the listener to exploit the binaural hearing adv antage [3]. As shown in [1, 2 , 4], the binaural mi nimum varianc e distortion- less response beamformer (BMVDR) beamformer is able to pre- serve the binaural cues, i. e., the interaural lev el difference (ILD ) and the interaural time dif ference (ITD), of t he desired source. The BMVDR beamformer can either be implemented using the acous- tic transfer f unctions (A TF s) between t he desired source and all microphones or using the relativ e transfer functions ( R TFs), r elat- ing the A TFs to a r eference microphon e [5]. S i nce estimating the R TF s (unlike the A TFs) is feasible in practice, R TF estimation has become an important t ask in the field of multichannel speech en- hancement [6–13]. Aiming at improving the performance of (binaural) noise reduc - tion algorithms, recently the use of an external microphone in com- bination with the head-moun ted microphones has been explored [14–21]. It has, e.g., been sho wn that using an external micro- phone is able to improv e performance in terms of noise reduction [14, 16, 18–21], source l ocalisation [17 ] and binaural cue preser- v ation [16 , 18]. In this paper , we propose a computationally ef ficient method to estimate the R TF vector in a dif fuse noise field using the exter - This work was supported by the Collaborati ve Research Centre 1330 Hearing Acoustics. the Cluster of E xcelle nce 1077 Hearing4all , funded by t he German Researc h Foundation (DFG), and by the joint Lowe r Saxony -Israeli Project A THENA. Y E ( ω ) desir ed sou r ce S ( ω ) Y L , 1 ( ω ) Y L , 2 ( ω ) Y R , 1 ( ω ) Y R , 2 ( ω ) head-moun ted micr opho nes externa l micr opho ne Figure 1: T op-vie w of the conside red acoustic scenario and microphone configuration ( M = 2). nal microphone. This method requires the external microphone to be located far enough from the head-mounted microphones , such that the spatial coherence between the noise components in the head-moun ted microphone signals and the external microphone signal is low . Assuming this spatial coherence to be zero, we show ho w an unbiased R TF esti mator can be deriv ed. Using real-world recordings, we compare t he proposed RTF estimator to a simple biased R T F estimator and to the widely used R TF estimator based on cova riance whitening (CW) [7–11] for sev eral reverb eration times and si gnal-to-noise ratios (SNRs). The results sho w t hat the proposed R TF estimator yields a larger S N R improv ement and re- duced binaural cue errors compared to the existing R TF estimators. When comparing the proposed R T F estimator to an oracle R TF estimator (using the clean speech signal as external microphone signal), only a small performance differenc e can be observed. 2 Configuration and Notation W e consider an acoustic scenario wit h one desired source S ( ω ) and diffuse background noise (e.g., cylindrically or spherically isotropic noise) in a reverbe rant enclosure. Moreov er , we consider a binaural configuration, consisting of a left and a right device (each containing M microphones), and an external microphon e that is spatially separated from the head-mounted microphones, cf. Figure 1. T he m -th microphone signal of t he left hearing de vice Y L ,m ( ω ) can be written in the frequency-do main as Y L ,m ( ω ) = X L ,m ( ω ) + N L ,m ( ω ) , m ∈ { 1 ,...,M } , (1) where X L ,m ( ω ) denotes the desired speech component, N L ,m ( ω ) denotes the noise component and ω denotes the angular frequen cy . For conciseness we will omit ω in the remainder of the paper , where ver possible. The m -th microphone si gnal of the right hearing de vice Y R ,m and the externa l microphon e Y E are similarly defined by substituting R and E for L, respective ly . The mi cro- phone signals of the hearing de vices can b e stacke d in a vector , i.e., y = Y L , 1 , ..., Y L ,M , Y R , 1 , ..., Y R ,M T ∈ C 2 M , (2) with ( · ) T denoting t he t r anspose of a vector . Using (1) , the vector y can be writt en as y = x + n , (3) where the speec h vector x and the noise vector n are defined similarly as i n (2). Without loss of generality , we choose the fi rst microphone on each hearing device as reference microphone, i.e., Y L = e T L y , Y R = e T R y , (4) where e L and e R are selection vectors consisting of zeros and one element equal to 1, i . e., e L ( 1 ) = 1 and e R ( M + 1 ) = 1. In the case of a single desired source, the speech vector x is equal to x = a S , (5) where the vector a ∈ C 2 M contains the A TFs between the desired source S and all microph ones, including re verb eration, micro- phone characteristics and head-shado wing. The R TF vectors a L and a R of the desired source are defined by relating the A TF vector a to both reference microphones, i.e. , a L = a e T L a , a R = a e T R a . (6) The speech cov ariance matrix R x ∈ C 2 M × 2 M and the noise cov ariance matr i x R n ∈ C 2 M × 2 M are defined as R x = E { xx H } = φ x , L a L a H L = φ x , R a R a H R , (7) R n = E { nn H } , (8) where E {·} denotes the expectation operator , ( · ) H denotes the conjugate transpose, and φ x , L = E {| X L | 2 } and φ x , R = E {| X R | 2 } denote the power spectral density (PSD) of t he desired source in the reference micropho nes. Assuming statistical indepe n- dence between the desired speec h and noise components, the microphone signal cov ariance matrix is equal to R y = E { yy H } = R x + R n . (9) The output signals at the left and t he right hearing dev ice are obtained by filtering and summing all microphone signals using the complex -v alued filter vectors w L and w R , respectiv ely , i . e., Z L = w H L y , Z R = w H R y . (10) 3 Binaural MVDR Beamf ormer In this section, we briefly revie w the well-kno wn BMVDR beamformer [2, 22, 23]. T he BMVDR beamformer minimizes the output noise PSD while preserving the desired speech component in the reference mi crophones, hence preserving the binaural cues of the desired source. T he constrained optimization problem for the left filter vector is giv en by min w L E {| w H L n | 2 } subject to w H L a L = 1 . (11) The constrained optimization problem for the right fi lter vector is defined similarly by substituting R for L. T he solutions of these optimization problems are equal to [1, 2, 5] w L = R − 1 n a L a H L R − 1 n a L , w R = R − 1 n a R a H R R − 1 n a R . (12) Hence, to calculate the BMVDR beamformer an estimate of the noise cov ari ance matrix R n and the R TF vectors a L and a R of the desired source is required. Usually , the noise cov ariance matrix R n is either estimated by recursiv ely updating the matrix during speech pauses or approximated by using an appropriate model, e.g., assuming a spherically isotropic noise fi eld. Similarly , the R TF vectors a L and a R are either estimated from the microphone signals or approximated by using – simulated or measured – anechoic R TFs correspondin g to the assumed position of the desired source (e.g., i n front of the user). In the following sections we will consider data-dependent R T F estimation approaches to steer the BMVDR beamformer in (12). 4 R TF Estimation A pproaches In this section, we discuss dif ferent approaches to estimate the R TF vectors a L and a R of the desired source. First, we consider a biased estimator , which only requires an estimate of the mi- crophone signal cov ariance matrix R y . Second, we consider the CW estimator [8, 10 ], which requires estimates of the microphone signal cova riance matrix R y and the noise cov ariance matrix R n . Third, we present an R TF estimator that exploits the external microphone signal Y E , assuming the spatial coherence between the noise components in the head-mounted microphone signals and the external microphone signal is zero. 4.1 Bias ed Estimator (B) Using (6) and (7), it can be easily shown that the R TF vectors are equal to a L = R x e L e T L R x e L , a R = R x e R e T R R x e R , (13) i.e., a column of the speech cova riance matrix R x normalized with the element corresponding to the respectiv e reference micro- phone. When no reliable estimate of the speech cov ariance matrix R x is av ailable, a simple but biased R TF esti mate can be obtained by using the (noisy) microphone signal cov ari ance matrix R y [24] a B L = R y e L e T L R y e L , a B R = R y e R e T R R y e R . (1 4) The biased estimator in (14) obviously does not lead t o the same solution as (13), especially for low input S NRs. 4.2 Covariance Whitening (CW) A frequen tly used (unbiased ) R TF estimator is based on cov ari- ance whitening [7–11]. Using a square-root decomp osition (e.g., Cholesky decomposition), the noise cov ari ance matrix R n can be written as R n = R H/ 2 n R 1 / 2 n . (15) The pre-whitene d microphone signal cov ariance matrix is then equal to R w y = R − H/ 2 n R y R − 1 / 2 n , (16) which can be decomposed using the eigen value decomposition (EVD) as R w y = VΛV H , (17) where the matrix V ∈ C 2 M × 2 M contains the eigen ve ctors and the diagonal matrix Λ ∈ R 2 M × 2 M contains the corresponding eigen values. Using the principal eigen vector v max , i.e., the eigen vector corresponding to the largest eigen value, the R T F vectors can be estimated as [11] a CW L = R 1 / 2 n v max e T L R 1 / 2 n v max , a CW R = R 1 / 2 n v max e T R R 1 / 2 n v max . (18) Due to t he EV D , this estimator has a larger computational complex ity than the biased estimator . Additionally , an estimate of both the microphone signal cova riance matri x R y and the noise cov ariance matrix R n is required, although this estimate is required anyway for the B MVDR beamformer , cf. (12). 4.3 Spatial Coherenc e (SC) Considering a spherically isotropic noise fi el d as an example for a dif fuse noise field, the magnitude-squared coherence (MS C ) between the noise components in two different microphones (neglecting head-shado wing) is equal to [25 ] MSC = sinc ω d c 2 , (1 9) Figure 2 : Analytical inter-microph one magnitude-sq uared coherence in a spherically isotropic noise field. where d denotes the distance between t he two micropho nes and c denotes the speed of sound. Figure 2 depicts the MSC for d ∈ { 0 . 01 , 0 . 1 , 1 } m and c = 343 ms − 1 . It can be seen that for l arge distances between the microphones the MSC tends to be very small, especially for high frequencies. For now , let us assume that the external microphone i s sufficiently far away from the head-mounted microphones, such that E { n N ∗ E } = 0 , (20) i.e., the noise componen ts in the head-mounted microphone signals are spatially uncorrelated with the noise component in t he external microphone signal. Using (20) yields E { y Y ∗ E } = E { x X ∗ E } + E { n N ∗ E } = E { x X ∗ E } . (21) Using (21) and x = X L a L = X R a R , the spatial-coherence-based R TF estimator ( S C) is equal to a SC L = E { y Y ∗ E } E { Y L Y ∗ E } , a SC R = E { y Y ∗ E } E { Y R Y ∗ E } (22) Of course, in practice the assumption made in (20 ) does not perfectly hold. Hence, in the experimen tal ev aluation i n Section 5 we also consider an oracle ve rsion of the estimator in (22 ), which uses the clean speech signal S as the external microphone signal, such that (20) perfectly holds, i.e., a SC opt L = E { y S ∗ } E { Y L S ∗ } , a SC opt R = E { y S ∗ } E { Y R S ∗ } . (23) Compared t o the CW estimator , the SC estimator does not need an estimate of t he noise cov ariance matri x R n and has a lower computational complexity , b ut obviously requires an external microphone to be av ailable. 5 Experimental Results In this section, an experimental ev aluation is presented of the BMVDR beamformer in (12) using the R TF estimators discussed in Section 4. In Section 5.1 the recordin g setup is desc ribed, while detailed information about t he implementation is provide d in Section 5.2 and t he results are presented in Section 5.3. 5.1 Recording setup All si gnals were recorded in a laboratory located at the Univ ersity of Oldenb urg where the re ve rberation time can be easily changed by closing and opening absorber panels mounted to the walls and the ceili ng. The room dimensions are about ( 7 × 6 × 2 . 7 ) m, where the rev erberation t ime w as set approximately to the three differe nt values T 60 ∈ { 250 , 500 , 750 } ms. The reverb eration times were measured using the broad band energy decay curve of measured impulse responses. At the center of the room a KEMAR head-and-torso simulator (HA TS) was placed . T wo behind-the-ear hearing ai d dummies with two microphones each, i.e., M = 2, were placed on the ears of t he HA TS. The desired source was a male English speaker played back by Figure 3: Measured long-term magnitud e-squared coherenc e between the recorded noise in the left reference microphone and the external microphone. a loudspeak er placed at about 2 m from the center of the head at the same height and at an angle of 35 ◦ , i. e., on to the r i ght si de of the HA TS ( cf. Figure 1). The external microphone was placed at about 0 . 5 m from the desired source, l eading to a distance of about 1 . 5 m to the HA TS, which refers to, e.g., a table microphone or a smartphone that is connected to the binaural hearing de vice. T o generate the background noise, we used four loudspeakers facing the corners of the laboratory , playing back different multi-talker recordings. Figure 3 sho ws the long-term magnitude-squared co- herence between the recorded noise i n the r eference microphone of the left hearing aid and t he external microphone. It can be observ ed that the assumption in (20) obviously does not perfectly hold, but the coherenc e is fairly small. The desired source and the backgroun d noise were recorded separately in order to be able to mix them t ogether at differe nt input SNRs ∈ {− 5 , 0 , 5 } dB . The SNR in the external microphone signal was about 9 . 6 dB higher than in the head-mounted microphone signals. Please note, that streaming and directly using the external microphone signal would not include any binaural cues. The complete signal had a length of 20 s with 0 . 5 s of noise-only at the beginning. 5.2 I mplementation and Perf ormance Mea- sur es All signals were processed at a sampling rate of 16 kHz. W e used the sho rt-time Fourier t ransform (STFT ) with fr ame length T = 256, correspo nding to 16 ms, o verlapp ing by R = 128 samples, e. g., for t he left reference microphone signal Y L ( k,l ) = T − 1 ∑ t = 0 y L ( l · R + t ) w ( t ) e − j 2 π kt/T , (24) = X L ( k,l ) + N L ( k,l ) , (25) with k the fr equency bin index, l the time frame index, y L ( t ) the l eft reference microph one signal in the ti me-domain, w ( t ) a square-root Hann windo w of length T and j = √ − 1. T o distinguish between speech-plus-no ise and noise-only frames we used an oracle broad band voice acti vity detection (V AD), based on the energy of the speech component in the right reference microphone signal. Using this V AD, t he microphone signal cov ariance matrix ˆ R y ( k, l ) and the noise cov ariance matrix ˆ R n ( k,l ) were recursiv ely esti mated as ˆ R y ( k,l ) = α y ˆ R y ( k,l − 1 ) + ( 1 − α y ) y ( k ,l ) y H ( k,l ) , (26) ˆ R n ( k,l ) = α n ˆ R n ( k,l − 1 ) + ( 1 − α n ) y ( k ,l ) y H ( k,l ) , (27) during detected speech-plus-n oise frames and noise-only frames, respecti vely . The forgetting factors were chosen as α y = 0 . 852 1 and α n = 0 . 9841, corresponding to time constan ts of 50 ms and 500 ms, respecti v ely . As initialization the correspond ing long-term estimates of the cov ariance matrices were used. The (time-varying) estimates of the co v ariance matrices were then used in the biased RTF estimator (B) in (14), t he cov ariance- whitening-based R TF estimator (CW) in (18), the oracle spatial-coherence-ba sed R TF estimator (SC opt ) in (23) and the spatial-coherence-ba sed (SC) R TF estimator in (22). W e then Figure 4: Binaural cue errors and intelligibility-weighted SNR improveme nt for the R TF estimators for different rev erberation times (250 ms, 500 ms, 750 ms) and different input SNRs (- 5 dB, 0 dB, 5 dB). computed the (t ime-v arying) BMVDR beamformer in (12 ) using the estimated RTF v ectors and the estimated noise cov ariance matrix ˆ R n ( k, l ) . The resulting BMVDR beamformer was then applied to the head-mounted microphone signals, i.e., Z L ( k,l ) = w H L ( k,l ) y ( k ,l ) , Z R ( k,l ) = w H R ( k,l ) y ( k ,l ) . (28) The performance w as e v aluated in t erms of noise reduction and binaural cue preserv ation. As a measure for noise reduction per- formance we used the intelligibilit y-weighted SNR improvemen t ( ∆ iSNR) [26] between the right reference microphone signal and the output of the right hearing aid. As a measure for binaural cue preserv ation performance we used the reliable binaural cue errors of t he direct sound of the desired speech compon ent, i.e., ∆ ILD and ∆ I TD, based on an auditory model [27] and average d ov er frequenc y . 5.3 Results Figure 4 depicts the results for all four considered R TF estimators for different reverb eration times and input SNRs. As expected, B generally shows worst performance in t erms of binaural cue preserv ation and noise reduction performance. Considering the ILD error , it can be observed for all estimators the ILD errors gen erally increase for increasing T 60 and decrea sing input SNR. In addition it can be observ ed that the SC estimator consistently outperforms the CW esti mator, especially for large T 60 . Moreover , almost no difference can be observed between the SC esti mator and the oracle SC opt estimator , for all T 60 and input SNRs. Considering t he ITD errors, it can be observed that for all estimators the ITD errors generally increase for increasing T 60 and decreasing i nput SNRs. C ontrary to the ILD error, the SC estimator typically leads to larger ITD errors than the oracle SC opt estimator , especially for T 60 = 250 ms and 500 ms. Informal listening tests sho wed that when using S C (and SC opt ) the desired source is perceiv ed as a point source and sounded slightly less rev erberated than the input of t he reference mi crophones. For B and CW the binaural cue error sometimes showed large variations ov er frequenc y , which may lead to strange sounding artefacts, such that some frequencies are perceiv ed as coming from another direction and the desired source sounds slightly diffuse. Considering the iSNR improvement, it can be observed that for all estimators the SNR improv ement generally decreases for i ncreas- ing T 60 and decreasing input S NR. In addition, i t can be observ ed that the SC estimator consistently outperforms the CW estimator for all T 60 and input SNRs. Moreov er , almost no differen ce can be observ ed between the S C estimator and the oracle SC opt estimator . From these results it can be concluded that the SC estimator outper- forms the C W esti mator . Moreov er , for the considered scenario, i.e., the external microphone about 0 . 5 m fr om the desired source and about 1 . 5 m from the head-moun ted microphones , the overa ll performance of the (practically implementable) SC estimator is very similar to the oracle S C opt estimator , showin g that the spatial coherence assumption in (20) is v alid for the considered scenario. It can be expected that placing the external microphone closer to the desired source wo uld sli ghtly improve the performance of the SC esti mator , especially in terms of binaural cue preservation. 6 Conclusions In this paper we hav e shown how an external microphone signal can be exploited to estimate the R TF vectors of a desired source in a diffuse noise field. W e assumed the spatial coherence between the noise components in the head-mounted microphone signals and the noise componen t in the external microphone signal to be zero to derive an unbiased RTF esti mator . An experimental e v aluation using real-world signals for sev eral rev erberation times and input SNRs sho wed that a better noise reduction performance and binaural cue preservation can be obtained when using the proposed R T F estimator compared to an R TF estimator based on cov ariance w hit ening and a simple biased RTF esti mator . Refer ences [1] S. Doclo, W . Kellermann, S . Makino, and S. Nordholm, “Multichannel Signal Enhancement Algorithms for As- sisted Listening Devices: Exploiting spatial diversity using multiple microphones, ” IEEE Signal Processing Ma gazine , vol. 32, pp. 18–30, Mar . 2015. [2] S. Doclo, S. Gannot, D. Marquardt, and E. Hadad, “Binaural Speech P r ocessing w i th Application to Hearing Devices, ” in Audio Sour ce Separation and Speech Enhancement , ch. 18, W iley , 2018. [3] A. W . Bronkhorst and R. Plomp, “T he effect of head-indu ced interaural time and lev el differen ces on speech intelli gibility in noise, ” T he Journ al of the Aco ustical Society of America , vol. 83, no. 4, pp. 1508–1516, 1988. [4] B. Cornelis, S. Doclo, T . V an den Bogaert, J. W outers, and M. Mo onen, “Theoretical analysis of binaural multi- microphone noise reduction t echniques, ” IEEE T ransactions on Audio, Speec h and Lan gua ge Pro cessing , vol. 18, pp. 342–355, Feb. 201 0. [5] S. Gannot, D. Burshtein, and E. W einstein, “Signal En- hancement Using Beamforming and Non-Stationarity with Applications to Speech, ” IEE E T ransactions on Signal Pr ocessing , vol. 49, pp. 1614–1626 , Aug. 2001. [6] I. Cohen , “Relativ e transfer function identification using speech signals, ” IE EE T ran sactions on Sp eech and Audio Pr ocessing , vol. 12, pp. 451–459, Sep. 2004. [7] E. W arsitz and R. Haeb-Umbac h, “Bl i nd acoustic beamform- ing based on generalized eigen value decomposition, ” IEEE T ransactions on Audio Speech and Langua ge Pr ocessing , vol. 15, pp. 1529–1539, July 2007. [8] S. Markovich, S. Gannot, and I. Cohen, “Multichannel eigenspace beamforming in a re v erberant noisy en vironment with multi ple interfering speech signals, ” IEE E T ran sac- tions on Audio, Speech, and Languag e Pro cessing , vol. 17, pp. 1071–1086, Aug. 2009. [9] A. Krueg er , E. W arsitz, and R. Haeb-Umbach, “Speech enhanceme nt with a GSC-l i ke structure emplo ying eigen vector-based transfer function rati os estimation, ” IEEE T ransactions on Audio Speech and Langua ge Pr ocessing , vol. 19, pp. 206–219, Jan. 2011. [10] R. Serizel, M. Moonen , B. V an Dijk, an d J. W outers, “Lo w-rank approximation based multichannel Wiener filter algorithms for noise reduction with application in cochlear implants, ” IEE E/ACM T ran sactions on Audio, Speech and Langua ge Pr ocessing , vol. 22, pp. 785–799, Apr . 2014. [11] S . Marko vich-Golan and S. Gannot, “P erformance analysis of the co v ariance subtraction method for relati v e transfer function estimation and co mparison to the co variance whitening method, ” in Pr oc. IEEE International Confer ence on Acoustics, Spe ech and Signal P r ocessing (I CASSP) , (Brisbane, Australia), pp. 544–548 , Apr . 2015. [12] R. Giri, B. D. Rao, F . Mustiere, and T . Zhang, “Dyn amic relativ e impulse response estimation using structured sparse Bayesian learning, ” in Proc. IEEE International Confer ence on Acoustics, Spe ech and Signal P r ocessing (I CASSP) , (Shanghai, China), pp. 514–51 8, Mar . 2016. [13] R. V arzandeh, M. T aseska, and E. A. P . Habets, “ An iter- ativ e multichannel subspace-based cov ariance subtraction method for relativ e transfer function estimati on, ” in Pro c. J oint W orksho p on Han ds-fr ee Speech Commun ication and Micr ophone Arrays (HSCMA) , (San Francisco, USA), pp. 11–15, Mar . 2017. [14] A. Bertr and and M. Moonen, “Robust Distributed Noise Reduction in Hearing Aids with External Acoustic Sen- sor Nodes, ” EURASIP J ourna l on Advances i n Signal Pr ocessing , vol. 2009, p. 14 pages, Jan. 2009. [15] N. Cvij anovic, O. S adiq, and S. Sriniv asan, “Speech en- hancement using a remote wireless microphone, ” IEEE T ransactions on Consumer Electro nics , vol. 59, pp. 16 7– 174, F eb. 2013 . [16] J. Szurley , A. Bertrand, B. V an Dij k, and M. Moonen, “Binaural noise cue preservation in a binaural noise reduc - tion system with a remote microphone signal, ” IEE E/ACM T ransactions on Audio, Speech and Languag e Pr ocessing , vol. 24, pp. 952–966 , May 2016. [17] M. Farmani, M. S. Pedersen, Z.- H . T an, and J. Jensen, “In- formed Sound Source Localization Using Relative Transfer Functions for Hearing Aid Applications, ” IEEE/ACM Tr ans. on Audio, Sp eech, and L angua ge Pr ocessing , v ol. 25, pp. 611–623, Mar . 2017. [18] N. Gößling, D. Marquard t, and S. Doclo, “Performance analysis of the extende d binaural MVDR beamformer with partial noise estimation in a homogeneous noise field, ” in Pr oc. Joint W orkshop on Hands-fr ee Speech Communication and Micr ophone Arrays (HSCMA) , (San Francisco, USA), pp. 1–5, Mar . 2017. [19] N. Gößling, D. Marquardt, and S . Doclo, “Comparison of R TF estimation methods between a head-mounted binaural hearing de vice and an extern al microphone, ” in Pr oc. International W orksho p on Challeng es in Hearing Assistive T echnolo gy (CHAT) , (S tockholm, Sweden), pp. 101–106, Aug. 2017. [20] D. Y ee, H. Kamkar -Parsi, R. Martin, and H. Puder , “A Noise Reduction Post-Filter f or Binaurally-linked Single- Microphone Hearing Aids Utilizing a Nearby External Microphone , ” IEEE/ACM Tr ansactions on Audio Speech and Languag e Pr ocessing , vol. 26, no. 1, pp. 5–18, 2017. [21] R. Ali, T . V an W atershoot, and M. Moonen, “Genera lised sidelobe canceller for noise reduction in hearing dev ices using an external mi crophone, ” in Pr oc. IEEE Internationa l Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , (Calgary , Canada), pp. 521–525, Apr . 2018. [22] S . Doclo, S. Gannot, M. Moonen, and A. Spriet, “ Acoustic beamforming for hearing aid applications, ” in H andboo k on Array Pr ocessing and Sensor Networks , pp. 269–30 2, W iley , 2010. [23] T . Klasen, T . v an den Bogaert, M. Moonen, and J. W outers, “Binaural noise reduction algorithms f or hearing aids t hat preserve interaural time delay cues, ” IEEE Tr ansac tions on Signal Process ing , vol. 55, pp. 1579–158 5, Apr . 2007. [24] S . Braun, W . Zhou, and E. A. P . Habets, “Narrowband direction-of-arri v al estimation for binaural hearing aids using relati ve transfer f unctions, ” in Pr oc. IEEE W orkshop on Applications of Signal Proce ssing to Audio and Acoustics (W ASP AA) , pp. 1–5, Oct. 2015. [25] B. F . Cron and C. H. Sherman, “Spatial-Correlation Func- tions for V arious Noise Models, ” Journ al of the Acoustical Society of America , vol. 34, pp. 1732–173 6, Nov . 1962. [26] J. E. Green berg, P . M. Peterson, and P . M. Zurek, “Intelligibility-weighted measures of speech-to-interferenc e ratio and speech system performan ce, ” Journa l of t he Acous- tical Society of America , vol. 94, pp. 3009–30 10, Nov . 1993. [27] M. Dietz, S. D. Ewert , and V . Hohmann, “ Auditory model based direction estimation of concurren t speakers from bin- aural signals, ” Speech Communication , vol. 53, pp. 59 2–605, 2011.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment