Tiny noise, big mistakes: Adversarial perturbations induce errors in Brain-Computer Interface spellers

An electroencephalogram (EEG) based brain-computer interface (BCI) speller allows a user to input text to a computer by thought. It is particularly useful to severely disabled individuals, e.g., amyotrophic lateral sclerosis patients, who have no other effective means of communication with another person or a computer. Most studies so far have focused on making EEG-based BCI spellers faster and more reliable; however, few have considered their security. This study, for the first time, shows that P300 and steady-state visual evoked potential BCI spellers are very vulnerable, i.e., they can be severely attacked by adversarial perturbations, which are too tiny to be noticed when added to EEG signals, but can mislead the spellers to spell anything the attacker wants. The consequences could range from mere user frustration to severe misdiagnosis in clinical applications. We hope our research can attract more attention to the security of EEG-based BCI spellers, and more broadly, EEG-based BCIs, which has received little attention before.

💡 Research Summary

This paper presents the first systematic investigation of security vulnerabilities in EEG‑based brain‑computer interface (BCI) spellers, focusing on the two most widely used paradigms: the P300 event‑related potential speller and the steady‑state visual evoked potential (SSVEP) speller. While decades of research have concentrated on improving spelling speed and classification accuracy, the authors highlight that the security aspect has been largely ignored, despite the critical role these systems play for severely disabled users such as patients with amyotrophic lateral sclerosis (ALS).

Key Contributions

- Demonstration of Feasibility of Targeted Attacks – The authors show that tiny, imperceptible adversarial perturbations can force both P300 and SSVEP spellers to output any character chosen by an attacker, regardless of the user’s intended selection.

- Attack on Traditional BCI Pipelines – Rather than attacking end‑to‑end deep‑learning models, the study targets the classic BCI processing chain (feature extraction + classifier) that dominates real‑world deployments.

- Causal, Real‑Time Perturbation Templates – A novel “adversarial template” is derived offline from the training set. Because the template is fixed, it can be added to a test EEG trial as it streams, satisfying causality (no need to know the future part of the signal) and enabling realistic, on‑line attacks.
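
The causality argument in the last contribution can be illustrated with a minimal Python sketch. It assumes the EEG arrives in chunks of shape (channels, samples) and that a trigger marks the moment the template should start; `TemplateInjector`, `trigger`, and `process` are hypothetical names chosen for illustration, not the authors' implementation. The point it shows is that a fixed, precomputed template only ever needs the current chunk, never future samples.

```python
import numpy as np


class TemplateInjector:
    """Adds a fixed, precomputed template to EEG chunks as they stream in.

    Hypothetical helper for illustration; the template itself would be
    computed offline from training data.
    """

    def __init__(self, template):
        # template: array of shape (n_channels, n_template_samples)
        self.template = np.asarray(template, dtype=float)
        self.offset = None  # samples already injected; None means idle

    def trigger(self):
        """Start injecting the template from its first sample."""
        self.offset = 0

    def process(self, chunk):
        """Return the chunk with the matching template slice added."""
        chunk = np.asarray(chunk, dtype=float)
        if self.offset is None:
            return chunk
        remaining = self.template.shape[1] - self.offset
        n = min(chunk.shape[1], remaining)
        out = chunk.copy()
        out[:, :n] += self.template[:, self.offset:self.offset + n]
        self.offset += n
        if self.offset >= self.template.shape[1]:
            self.offset = None  # template fully injected
        return out
```

Feeding a trial through `process` chunk by chunk yields the same result as adding the template to the full trial offline, which is what makes the attack applicable on-line.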

Methodology – P300 Speller

- Dataset: Public 64‑channel EEG data (240 Hz) from two subjects, with 85 training and 100 test characters per subject. Each character is presented via 12 random row/column flashes repeated 15 times (180 flashes, ≈31.5 s per character).

- Victim Model: A Riemannian‑geometry‑based pipeline that won the 2015 Kaggle BCI Challenge. It uses 16 xDAWN spatial filters, projects covariance matrices onto the tangent space of a Riemannian manifold, and classifies with logistic regression. All operations are re‑implemented in TensorFlow to obtain gradients for adversarial optimization.

- Attack Construction: The authors compute the gradient of the loss with respect to the input EEG, then generate a universal perturbation template that maximally increases the probability of a chosen target row/column. The template is injected only during the flash intervals, keeping the signal‑to‑perturbation ratio (SPR) above 20 dB, a level at which the perturbed signal is visually indistinguishable from the original.

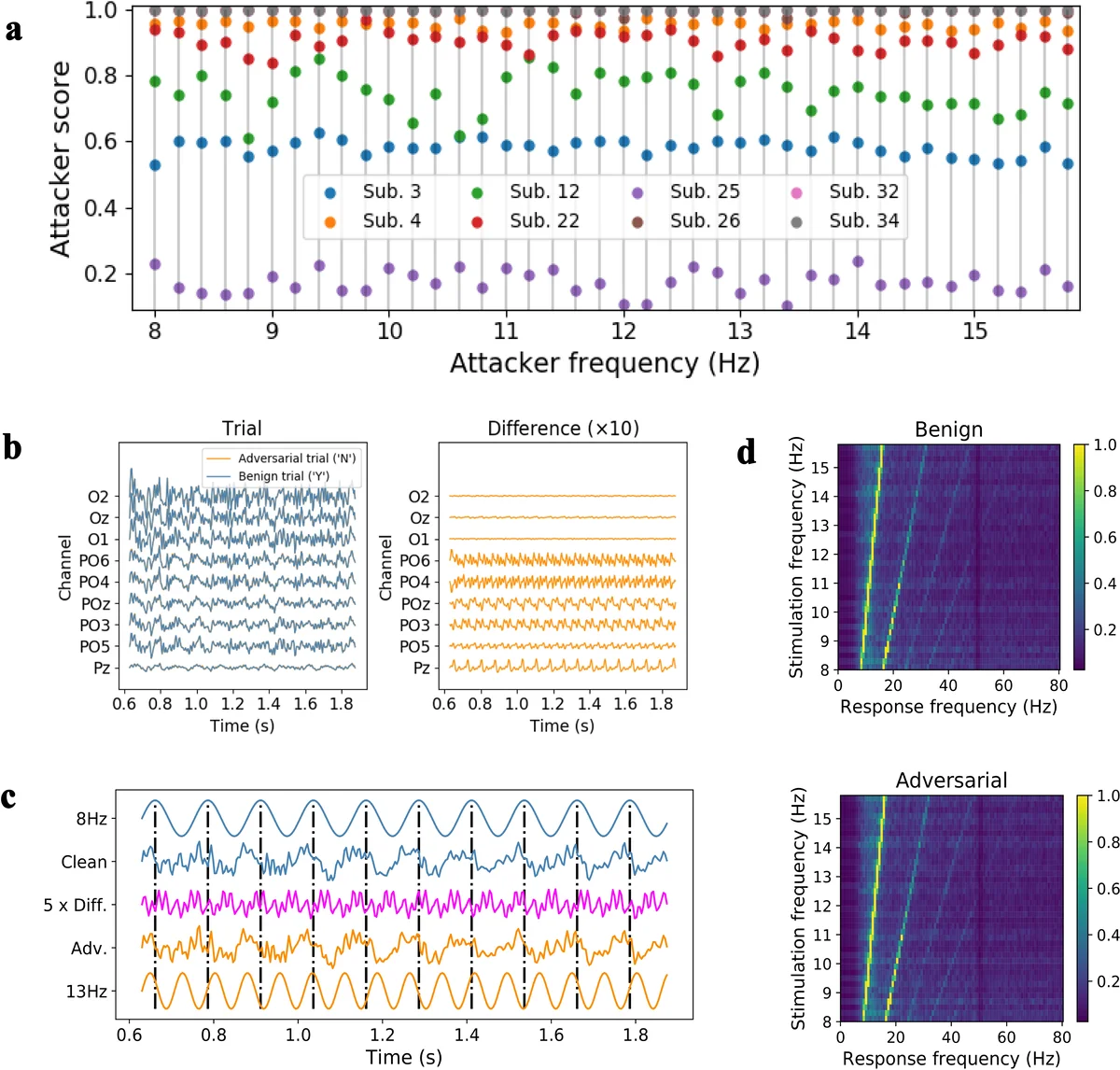

- Results: With the template added, attacker success rates exceed 90 % across all 36 possible characters. User accuracy and ITR collapse to near zero, while attacker accuracy and ITR remain high. Gaussian noise of equal energy has virtually no effect, confirming that the structured adversarial signal, not mere noise, drives the misclassification. Visual inspection of raw trials, averaged ERP waveforms, spectra, and topographic maps shows no discernible difference before and after perturbation.
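
The SPR constraint and the equal-energy Gaussian control mentioned above can be sketched in Python as follows. This assumes SPR is defined as the ratio of mean signal power to mean perturbation power in decibels, which is the usual convention but may differ in detail from the paper's definition; `spr_db`, `scale_to_spr`, and `gaussian_control` are hypothetical helper names. Under this definition, 20 dB means the perturbation carries 1 % of the signal's power, or 10 % of its RMS amplitude.

```python
import numpy as np


def spr_db(signal, perturbation):
    """Signal-to-perturbation ratio in dB (assumed power-ratio definition)."""
    p_sig = np.mean(np.square(signal))
    p_pert = np.mean(np.square(perturbation))
    return 10.0 * np.log10(p_sig / p_pert)


def scale_to_spr(signal, perturbation, target_db):
    """Rescale a perturbation so that its SPR against `signal` is target_db."""
    p_sig = np.mean(np.square(signal))
    p_pert = np.mean(np.square(perturbation))
    gain = np.sqrt(p_sig / (p_pert * 10.0 ** (target_db / 10.0)))
    return gain * perturbation


def gaussian_control(perturbation, rng):
    """White Gaussian noise with the same total energy as the perturbation."""
    noise = rng.standard_normal(perturbation.shape)
    return noise * np.sqrt(np.sum(perturbation ** 2) / np.sum(noise ** 2))
```

Comparing a classifier's output on `signal + perturbation` with its output on `signal + gaussian_control(perturbation, rng)` reproduces the logic of the control experiment: both additions have the same energy, so any difference in behavior comes from the perturbation's structure.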

Methodology – SSVEP Speller

- Dataset: 64‑channel recordings from 35 subjects (250 Hz, 6 s trials) where 40 characters flicker at frequencies from 8 Hz to 15.8 Hz in 0.2 Hz steps.

- Victim Model: A conventional pipeline that extracts canonical correlation analysis (CCA) features at the stimulus frequency and its harmonics, followed by logistic regression.

- Attack Construction: A universal perturbation template is derived from the training set and injected during the 0.13–1.38 s post‑stimulus window, the period where SSVEP responses are strongest. The same SPR constraints (≈20 dB) are applied.

- Results: Attacker scores reach 85 % or higher, while user scores drop dramatically. The perturbation subtly alters the frequency content enough to mislead the CCA matcher, yet the overall power spectrum and topographies remain visually unchanged.
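
For readers unfamiliar with CCA-based SSVEP decoding, the following Python sketch shows the standard idea: correlate the multichannel EEG with sine/cosine references at each candidate frequency and its harmonics, then compare the resulting scores. It is a simplified illustration rather than the victim model itself; it picks the best-scoring frequency directly instead of feeding the features to logistic regression, and the function names and the choice of three harmonics are assumptions.

```python
import numpy as np


def max_canonical_corr(X, Y):
    """Largest canonical correlation between the columns of X and Y.

    X, Y: arrays of shape (n_samples, n_features). Computed from the
    singular values of Qx^T Qy, where Qx and Qy are orthonormal bases
    of the centered data (assumes both have full column rank).
    """
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    Qx, _ = np.linalg.qr(X)
    Qy, _ = np.linalg.qr(Y)
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(np.clip(s[0], 0.0, 1.0))


def reference_signals(freq, n_samples, fs, n_harmonics=3):
    """Sine/cosine references at freq and its harmonics, one per column."""
    t = np.arange(n_samples) / fs
    refs = []
    for h in range(1, n_harmonics + 1):
        refs.append(np.sin(2 * np.pi * h * freq * t))
        refs.append(np.cos(2 * np.pi * h * freq * t))
    return np.column_stack(refs)


def cca_scores(eeg, freqs, fs, n_harmonics=3):
    """One CCA score per candidate frequency; eeg is (n_samples, n_channels)."""
    n_samples = eeg.shape[0]
    return np.array([
        max_canonical_corr(eeg, reference_signals(f, n_samples, fs, n_harmonics))
        for f in freqs
    ])
```

With the 40 candidate frequencies built as `8.0 + 0.2 * np.arange(40)` and `fs=250`, `np.argmax(cca_scores(eeg, freqs, 250))` gives the index of the decoded character. An adversarial template only has to shift these scores enough for the attacker's target frequency to come out on top.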

Security Implications

BCI spellers are increasingly integrated into medical diagnostics, assistive communication devices, and even military or industrial control interfaces. An adversary who can pre‑compute a perturbation template and inject it in real time could cause a user to unintentionally select harmful commands, produce erroneous clinical data, or simply frustrate communication. Because the perturbed signals are visually indistinguishable from clean EEG, neither the user nor an observer inspecting the recordings is likely to detect the attack. The Gaussian‑noise control further shows that the effect comes from the perturbation's structure rather than its energy, and the pipelines studied have no built‑in robustness to adversarial manipulation.

Future Directions Suggested

- Development of certified robustness guarantees for EEG‑based classifiers, extending recent advances in provable defenses for deep networks to the Riemannian‑geometry and CCA pipelines.

- Real‑time anomaly detection mechanisms that monitor statistical deviations in EEG power or spatial patterns to flag potential adversarial injections.

- Multimodal fusion (e.g., eye‑tracking, EMG) to cross‑validate user intent and increase resistance to EEG‑only attacks.

- Establishment of security standards for BCI devices, akin to those in medical device regulation, to mandate robustness testing before deployment.

Conclusion

The study convincingly shows that (1) state‑of‑the‑art EEG spellers are highly vulnerable to tiny, carefully crafted adversarial perturbations; (2) a universal, causally applicable perturbation template enables realistic, on‑line attacks without knowledge of the future EEG; and (3) such attacks reach attacker success rates above 90 % for the P300 speller and 85 % or higher for the SSVEP speller while remaining invisible to human observers. These findings call for an urgent shift in BCI research from solely performance optimization toward incorporating rigorous security and robustness considerations.
