Online Learning with an Almost Perfect Expert

We study the multiclass online learning problem where a forecaster makes a sequence of predictions using the advice of $n$ experts. Our main contribution is to analyze the regime where the best expert makes at most $b$ mistakes and to show that when $b = o(\log_4{n})$, the expected number of mistakes made by the optimal forecaster is at most $\log_4{n} + o(\log_4{n})$. We also describe an adversary strategy showing that this bound is tight and that the worst case is attained for binary prediction.
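For intuition about the leading $\log_4 n$ term, the sketch below computes the exact minimax value in the smallest toy case. It assumes $b = 0$ (some expert is perfect), two outcomes, and an adaptive adversary who sees the forecaster's probabilities but not its coin flip. In that case the state is just the number k of experts with no mistakes so far. The adversary splits them into k = k1 + k2 with k1, k2 ≥ 1 (a one-sided split costs the forecaster nothing and changes nothing). If the forecaster predicts the first outcome with probability p, the value satisfies V(k) = max over splits of min_p max{1 − p + V(k1), p + V(k2)}. The inner minimum has the closed form max{V(k1), V(k2), (1 + V(k1) + V(k2))/2}. The function name `minimax_values_b0` is hypothetical and is not the paper's method.

```python
def minimax_values_b0(n_max: int) -> list[float]:
    """Exact minimax expected mistakes V[k], k = 1..n_max, for b = 0 and d = 2.

    Toy model (illustration only): k experts are still perfect; the adversary
    splits them into k1 + k2 with k1, k2 >= 1 and the outcome eliminates one side.
    """
    V = [0.0] * (n_max + 1)  # V[1] = 0: a lone perfect expert is simply followed
    for k in range(2, n_max + 1):
        best = 0.0
        for k1 in range(1, k // 2 + 1):
            x, y = V[k1], V[k - k1]
            # min over p in [0, 1] of max(1 - p + x, p + y), in closed form
            best = max(best, x, y, (1.0 + x + y) / 2.0)
        V[k] = best
    return V


V = minimax_values_b0(8)
print([V[n] for n in (2, 4, 8)])  # [0.5, 1.0, 1.5]
```

For n = 2, 4, 8 the values are exactly 0.5, 1.0 and 1.5, which equal log₄ n. Intermediate n (for example V[3] = 0.75) fall slightly below log₄ n.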

💡 Research Summary

The paper investigates the multiclass online prediction problem in which a forecaster must repeatedly choose among d possible outcomes while consulting the advice of n experts. After each round the true outcome is revealed and the forecaster incurs a unit loss for each mistake. The central assumption is that the best expert in the pool makes at most b mistakes over the entire horizon, where b may depend on n. The authors aim to characterize the minimal expected loss that any forecaster can guarantee against an adaptive adversary who controls both the experts’ predictions and the true outcomes.

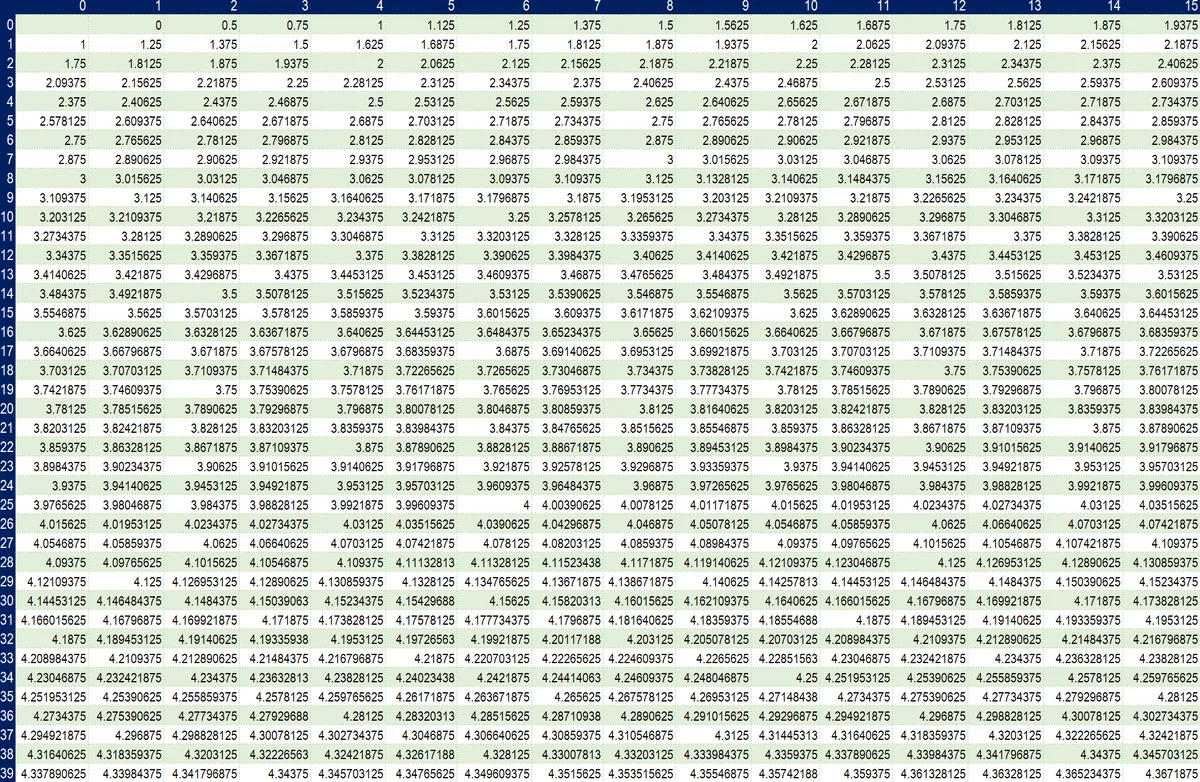

The authors introduce a compact state representation k = (k₀,…,k_b), where k_i counts the experts that have made exactly i mistakes so far. Experts with more than b mistakes cannot be the best expert and are dropped. In each round the experts are partitioned into d vectors k¹,…,k^d according to the option they vote for, so that k = k¹ + ⋯ + k^d. This partition is called a decomposition. If option j turns out to be correct, the state moves to the successor s_j(k¹,…,k^d). Its 0‑th coordinate is k^j_0, the number of zero‑mistake experts voting for j, and for i > 0 its i‑th coordinate is k^j_i + ∑_{v≠j} k^v_{i−1}. This formulation captures all the information relevant to the forecaster and the adversary, which turns the problem into a finite‑state zero‑sum game.
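The transition rule can be sketched directly on tuples. This is a minimal illustration under assumed conventions: a state is a tuple (k_0, …, k_b), a decomposition is a list of d such tuples, and options are indexed from 0 in code, so option j of the text is `parts[j - 1]`. The helper names `is_decomposition` and `successor` are hypothetical.

```python
from typing import Sequence

State = tuple[int, ...]  # (k_0, ..., k_b): k_i = number of experts with exactly i mistakes


def is_decomposition(k: State, parts: Sequence[State]) -> bool:
    """True if the per-option vectors k^1, ..., k^d add up coordinate-wise to k."""
    return all(len(p) == len(k) for p in parts) and all(
        sum(p[i] for p in parts) == k[i] for i in range(len(k))
    )


def successor(parts: Sequence[State], j: int) -> State:
    """Successor state s_j when option j (0-based index into parts) is correct.

    Coordinate 0: zero-mistake experts that voted for j.
    Coordinate i > 0: experts voting for j that already had i mistakes, plus
    experts voting for another option that had i - 1 mistakes (they just erred).
    Wrong experts that already had b mistakes are dropped.
    """
    b = len(parts[j]) - 1
    others = [p for v, p in enumerate(parts) if v != j]
    return tuple(
        parts[j][i] + (sum(p[i - 1] for p in others) if i > 0 else 0)
        for i in range(b + 1)
    )


# Usage: b = 1, k = (3, 2), two options.
parts = [(2, 0), (1, 2)]
print(is_decomposition((3, 2), parts))  # True
print(successor(parts, 0))  # (2, 1): the two 1-mistake experts on option 2 are dropped
print(successor(parts, 1))  # (1, 4): all five experts survive
```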

A key technical contribution is an abstract "potential function" f defined on non‑zero states. It must satisfy two properties: (1) the boundary condition f(0,…,0,1) ≥ 0, and (2) a composition inequality: for every state k, every decomposition of k, and every set A of a ≥ 1 options whose successors s_i = s_i(k¹,…,k^d) are non‑zero,

f(k) ≥ (a − 1)/a + (1/a) ∑_{i∈A} f(s_i).
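Checking the composition inequality does not require enumerating all 2^d subsets A. For a fixed size a, the right-hand side is largest when A holds the a largest successor potentials, so it is enough to scan sorted prefixes. The sketch below assumes the caller passes the values f(s_i) for the options with non‑zero successors under one fixed decomposition. The function names are hypothetical.

```python
def composition_bound(succ_values: list[float]) -> float:
    """Smallest f(k) meeting f(k) >= (a - 1)/a + (1/a) * sum_{i in A} f(s_i)
    for every non-empty set A drawn from the given successor potentials."""
    if not succ_values:
        raise ValueError("need at least one non-zero successor")
    best = float("-inf")
    prefix = 0.0
    # For a fixed size a, the right-hand side is largest when A holds the a largest values.
    for a, v in enumerate(sorted(succ_values, reverse=True), start=1):
        prefix += v
        best = max(best, (a - 1 + prefix) / a)
    return best


def satisfies_composition(f_k: float, succ_values: list[float], tol: float = 1e-12) -> bool:
    """Check inequality (2) for one state k and one decomposition of it."""
    return f_k >= composition_bound(succ_values) - tol


print(composition_bound([0.5, 0.5]))   # 1.0
print(composition_bound([0.75, 0.0]))  # 0.875
print(composition_bound([3.0, 0.0]))   # 3.0
```

A full feasibility check for f would repeat this over every state and every decomposition, which is only practical for small n, b and d.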

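If such an f exists, a simple randomized strategy guarantees an expected loss no larger than f(k): predict option i with probability p_i = max{0, 1 + f(s_i) − f(k)} and assign any remaining probability mass to the last option. Applied to the set A of options with p_i > 0, the composition inequality is exactly the condition ∑_i p_i ≤ 1, so these probabilities are valid. Moreover, if option j is correct, the expected loss 1 − p_j plus the new potential f(s_j) is at most f(k). This bridges minimax analysis and algorithm design: any feasible f yields both an upper bound and an explicit forecaster policy.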
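A single step of this forecaster can be sketched as follows. The sketch assumes that `succ_values[i]` holds f(s_i) for each option i under the current decomposition, with options ordered so that the last entry is the "last option". It is an illustration only; `forecaster_probs` is a hypothetical name.

```python
def forecaster_probs(f_k: float, succ_values: list[float]) -> list[float]:
    """p_i = max{0, 1 + f(s_i) - f(k)}; leftover mass goes to the last option."""
    p = [max(0.0, 1.0 + fs - f_k) for fs in succ_values]
    total = sum(p)
    if total > 1.0 + 1e-12:
        raise ValueError("f violates the composition inequality for this decomposition")
    p[-1] += max(0.0, 1.0 - total)  # extra mass only lowers the loss on that option
    return p


f_k, succ = 0.875, [0.0, 0.75]
p = forecaster_probs(f_k, succ)
print(p)  # [0.125, 0.875]
# If option j is correct: expected loss this round plus new potential stays <= f(k).
print([1 - p[j] + succ[j] for j in range(2)])  # [0.875, 0.875]
```

In this example the per-outcome totals both equal f(k) = 0.875. That happens because f(k) was chosen as the tightest value allowed by the composition inequality for these two successors.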

Using this framework the authors derive two concrete upper bounds. The first (Theorem 1) shows that for any b, the optimal expected loss is at most

log₄ n + b·
