SWIFT: Using task-based parallelism, fully asynchronous communication, and graph partition-based domain decomposition for strong scaling on more than 100,000 cores

We present a new open-source cosmological code, called SWIFT, designed to solve the equations of hydrodynamics using a particle-based approach (Smoothed Particle Hydrodynamics) on hybrid shared/distributed-memory architectures. SWIFT was designed from the bottom up to provide excellent strong scaling on both commodity clusters (Tier-2 systems) and Top100-supercomputers (Tier-0 systems), without relying on architecture-specific features or specialized accelerator hardware. This performance is due to three main computational approaches: (1) Task-based parallelism for shared-memory parallelism, which provides fine-grained load balancing and thus strong scaling on large numbers of cores. (2) Graph-based domain decomposition, which uses the task graph to decompose the simulation domain such that the work, rather than just the data as in most partitioning schemes, is distributed equally across all nodes. (3) Fully dynamic and asynchronous communication, in which communication is modelled as just another task in the task-based scheme, sending data whenever it is ready and deferring tasks that rely on data from other nodes until it arrives. To use these approaches, the code had to be rewritten from scratch and its algorithms adapted to the task-based paradigm. As a result, we can show upwards of 60% parallel efficiency for moderate-sized problems when increasing the number of cores 512-fold, on both x86-based and Power8-based architectures.

💡 Research Summary

The paper introduces SWIFT, an open‑source cosmological simulation code that solves the equations of hydrodynamics using Smoothed Particle Hydrodynamics (SPH) on hybrid shared‑/distributed‑memory systems. The authors argue that traditional parallel programming models (OpenMP for shared memory and MPI for distributed memory) rely on bulk‑synchronous steps and static workload distribution, which limits strong scaling on modern supercomputers that now contain tens of thousands of cores. To overcome these limitations, SWIFT adopts three complementary techniques:

- Task‑based shared‑memory parallelism – The entire SPH computation is decomposed into thousands to millions of fine‑grained tasks (typically a few milliseconds each). Dependencies (data flow) and conflicts (exclusive resource access) are declared explicitly to a custom scheduler (QUICKSCHED). The scheduler dynamically assigns ready tasks to any idle core, providing automatic load balancing, eliminating most locks and barriers, and improving cache locality because each task works on a well‑defined subset of data.

-

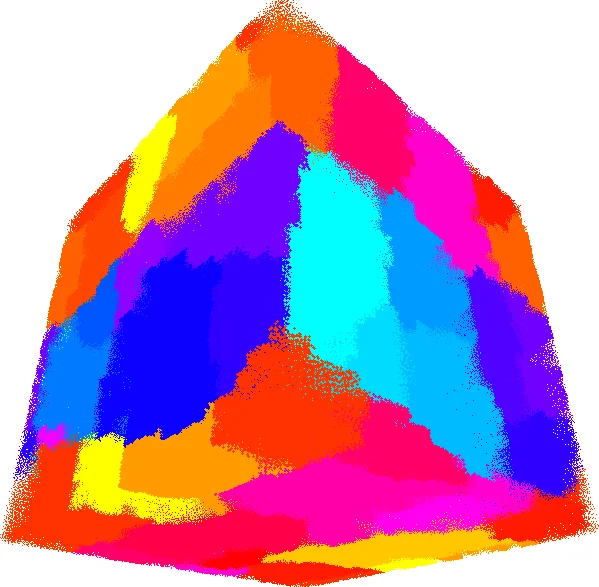

Task‑graph‑driven domain decomposition – Instead of partitioning particles or spatial cells based on geometric criteria, the authors construct a cell‑hypergraph where each node represents a cell of particles and each edge (or hyper‑edge) represents an interaction task that touches one or two cells. Edge weights are estimated from the asymptotic cost of the task type and the number of particles involved; after execution the measured cost is used to refine the weights. A standard graph partitioner (METIS) then partitions the graph so that the maximum sum of edge weights per partition is minimized. This yields a workload‑balanced distribution of computational work across MPI ranks, even when particle density is highly non‑uniform. The approach deliberately ignores data volume balance, focusing solely on equalizing compute time.

- Fully asynchronous communication modeled as tasks – Communication between ranks is also expressed as tasks (send and receive). When a cell’s particle data is required by a remote rank, a send‑task is generated; the corresponding receive‑task on the remote side depends on the arrival of that data. Because these communication tasks are part of the same dependency graph as the compute tasks, they can be overlapped with computation automatically. No global “all‑to‑all then compute” barrier is needed; instead, computation proceeds as soon as its required data is available, dramatically reducing communication‑induced idle time.
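The interplay of dependencies, conflicts, and communication tasks can be illustrated with a small simulation. The sketch below is not SWIFT's or the scheduler's actual code: the `Task` class, the `simulate` function, the task names, and all costs are hypothetical, and a receive is simplified to an ordinary task with a fixed cost. It only shows how a greedy scheduler starts any ready task whose cells are not locked by a running task, so that a pair task needing remote data waits for its receive while purely local work proceeds.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    cost: float        # simulated run time, arbitrary units
    deps: tuple = ()   # names of tasks that must finish first (dependencies)
    locks: tuple = ()  # cells needed exclusively while running (conflicts)


def simulate(tasks, n_workers):
    """Greedy scheduling on n_workers cores; returns {name: (start, end)}."""
    if n_workers < 1:
        raise ValueError("need at least one worker")
    by_name = {t.name: t for t in tasks}
    unmet = {t.name: len(t.deps) for t in tasks}
    children = {t.name: [] for t in tasks}
    for t in tasks:
        for d in t.deps:
            children[d].append(t.name)
    ready = [t.name for t in tasks if not t.deps]
    running, held, free, now, schedule = [], set(), n_workers, 0.0, {}
    while ready or running:
        # Start every ready task that has a free core and no conflicting lock.
        for name in list(ready):
            t = by_name[name]
            if free > 0 and held.isdisjoint(t.locks):
                ready.remove(name)
                held.update(t.locks)
                free -= 1
                schedule[name] = (now, now + t.cost)
                heapq.heappush(running, (now + t.cost, name))
        # Advance simulated time to the next completion and release its locks.
        now, done = heapq.heappop(running)
        free += 1
        held.difference_update(by_name[done].locks)
        for child in children[done]:
            unmet[child] -= 1
            if unmet[child] == 0:
                ready.append(child)
    if len(schedule) != len(tasks):
        raise ValueError("dependency cycle: some tasks never became ready")
    return schedule


# Hypothetical view from one rank: local cells A and B, remote cell R.
tasks = [
    Task("recv_R", 4),                                  # data arriving from another rank
    Task("density_A", 2, locks=("A",)),
    Task("density_B", 2, locks=("B",)),
    Task("density_AB", 3, locks=("A", "B")),            # pair task touching two cells
    Task("density_BR", 3, deps=("recv_R",), locks=("B",)),
    Task("ghost_A", 0, deps=("density_A", "density_AB")),
    Task("ghost_B", 0, deps=("density_B", "density_AB", "density_BR")),
    Task("force_AB", 2, deps=("ghost_A", "ghost_B"), locks=("A", "B")),
]

if __name__ == "__main__":
    for name, (start, end) in sorted(simulate(tasks, 2).items(), key=lambda kv: kv[1]):
        print(f"{name:12s} {start:5.1f} -> {end:5.1f}")
```

In this toy model the conflict sets replace explicit locks inside the tasks, and the zero-cost ghost tasks collect the dependencies of a cell so that a force task needs only one dependency per cell.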

The implementation replaces the traditional tree‑based neighbor search (k‑d trees, octrees) with a regular grid of cells whose edge length is at least the largest smoothing length. Cells initially contain about 100 particles, and are recursively split if they become too large, generating a hierarchy of interaction tasks that cover all particle pairs within range. The task hierarchy follows the SPH algorithmic steps: (i) sort particles within each cell, (ii) compute densities, (iii) compute forces/energy, and (iv) integrate positions and velocities. Ghost tasks enforce that all density calculations for a cell finish before any force task that depends on that cell can start.
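A minimal sketch of the cell grid, assuming a non-periodic cubic box and omitting the recursive splitting and the per-cell sort: the function names `build_cells` and `interaction_tasks` and the tuple-based task representation are illustrative, not taken from SWIFT. Because the cell edge is at least the largest smoothing length, every pair of particles within range lies either in the same cell (a "self" task) or in two adjacent cells (a "pair" task).

```python
import itertools
import random


def build_cells(positions, box, h_max):
    """Bin particles into a regular grid whose cell edge is >= h_max."""
    n = max(1, int(box // h_max))  # cells per dimension, so box / n >= h_max
    cells = {}
    for idx, p in enumerate(positions):
        # Clamp so that a coordinate exactly equal to `box` stays in the last cell.
        key = tuple(min(int(x / box * n), n - 1) for x in p)
        cells.setdefault(key, []).append(idx)
    return n, cells


def interaction_tasks(cells):
    """One 'self' task per non-empty cell, one 'pair' task per adjacent pair."""
    tasks = []
    for key in sorted(cells):
        tasks.append(("self", key))
        for off in itertools.product((-1, 0, 1), repeat=3):
            other = tuple(k + o for k, o in zip(key, off))
            # `other > key` skips the cell itself and lists each pair only once.
            if other > key and other in cells:
                tasks.append(("pair", key, other))
    return tasks


if __name__ == "__main__":
    random.seed(0)
    pos = [(random.random(), random.random(), random.random()) for _ in range(500)]
    n, cells = build_cells(pos, box=1.0, h_max=0.25)
    tasks = interaction_tasks(cells)
    print(n, "cells per dimension,", len(cells), "non-empty cells,", len(tasks), "tasks")
```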

Performance results are presented for two hardware families: an Intel Xeon‑based commodity cluster and an IBM Power8‑based Tier‑0 system. Scaling tests increase the core count by a factor of 512 (up to ~100 000 cores). Across both architectures, SWIFT maintains parallel efficiencies above 60 % for moderate‑size problems, demonstrating strong scaling far beyond what conventional SPH codes achieve (which typically degrade sharply beyond a few thousand cores). The task‑graph partitioning reduces the amount of data that must be exchanged compared with pure data‑based partitioning, and the asynchronous communication model keeps communication overhead to less than 5 % of total runtime even at the largest scales.
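For reference, strong-scaling parallel efficiency is conventionally the measured speedup divided by the factor by which the core count grew. The helper below is a sketch of that standard definition; the function name and the timings in the example are made up for illustration and are not measurements from the paper. An efficiency of 60 % over a 512-fold increase in cores corresponds to a speedup of roughly 0.6 × 512 ≈ 307.

```python
def strong_scaling_efficiency(t_ref, n_ref, t, n):
    """Efficiency of a run on n cores relative to a reference run on n_ref cores.

    The problem size is fixed; t_ref and t are wall-clock times.
    """
    speedup = t_ref / t
    ideal_speedup = n / n_ref
    return speedup / ideal_speedup


if __name__ == "__main__":
    # Hypothetical timings: 512 s on 256 cores, 1.6 s on 512 times as many cores.
    eff = strong_scaling_efficiency(t_ref=512.0, n_ref=256, t=1.6, n=256 * 512)
    print(f"parallel efficiency: {eff:.1%}")
```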

In the discussion, the authors note that the three techniques are not specific to cosmology; any application with fine‑grained data dependencies could benefit from a task‑based formulation, graph‑based workload partitioning, and treating communication as just another task. They acknowledge that the approach assumes the critical path of the task graph is short relative to the total work and that network latency is modest; otherwise, additional strategies would be required. Future work includes extending the model to heterogeneous architectures (GPUs, many‑core accelerators) and refining the cost model for dynamic load balancing.
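The critical-path assumption can be made concrete with the standard work/span bound from parallel-algorithm analysis (a general result, not something derived in the paper): no schedule on any number of cores can run faster than the longest dependency chain, so the speedup is at most the total work divided by the critical-path length. The sketch below computes both quantities for a toy task graph; the function name, task names, and costs are hypothetical.

```python
from graphlib import TopologicalSorter


def work_and_span(costs, deps):
    """Return (total work, critical-path length) of a task graph.

    costs: {task: cost}; deps: {task: iterable of prerequisite tasks}.
    """
    order = TopologicalSorter({t: deps.get(t, ()) for t in costs}).static_order()
    finish = {}
    for t in order:
        # Earliest possible finish time with unlimited cores.
        finish[t] = costs[t] + max((finish[d] for d in deps.get(t, ())), default=0)
    return sum(costs.values()), max(finish.values())


if __name__ == "__main__":
    costs = {"sort": 1, "density_1": 4, "density_2": 4, "density_3": 4,
             "ghost": 0, "force": 3, "integrate": 1}
    deps = {"density_1": ["sort"], "density_2": ["sort"], "density_3": ["sort"],
            "ghost": ["density_1", "density_2", "density_3"],
            "force": ["ghost"], "integrate": ["force"]}
    work, span = work_and_span(costs, deps)
    print(f"work={work}, span={span}, speedup bound={work / span:.2f}")
```

In this toy graph only the three density tasks can overlap, so the bound stays below 2 regardless of core count; a graph with many independent cells per step has a far larger work-to-span ratio, which is the regime the authors assume.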

Overall, the paper demonstrates that a clean redesign of a scientific code around task‑centric abstractions can unlock strong scaling on the next generation of exascale‑class machines, achieving high efficiency without relying on vendor‑specific optimizations or specialized hardware.
