An Algorithmic Theory of Integer Programming

We study the general integer programming problem where the number of variables $n$ is a variable part of the input. We consider two natural parameters of the constraint matrix $A$: its numeric measure $a$ and its sparsity measure $d$. We show that integer programming can be solved in time $g(a,d)\textrm{poly}(n,L)$, where $g$ is some computable function of the parameters $a$ and $d$, and $L$ is the binary encoding length of the input. In particular, integer programming is fixed-parameter tractable parameterized by $a$ and $d$, and is solvable in polynomial time for every fixed $a$ and $d$. Our results also extend to nonlinear separable convex objective functions. Moreover, for linear objectives, we derive a strongly-polynomial algorithm, that is, with running time $g(a,d)\textrm{poly}(n)$, independent of the rest of the input data. We obtain these results by developing an algorithmic framework based on the idea of iterative augmentation: starting from an initial feasible solution, we show how to quickly find augmenting steps which rapidly converge to an optimum. A central notion in this framework is the Graver basis of the matrix $A$, which constitutes a set of fundamental augmenting steps. The iterative augmentation idea is then enhanced via the use of other techniques such as new and improved bounds on the Graver basis, rapid solution of integer programs with bounded variables, proximity theorems and a new proximity-scaling algorithm, the notion of a reduced objective function, and others. As a consequence of our work, we advance the state of the art of solving block-structured integer programs. In particular, we develop near-linear time algorithms for $n$-fold, tree-fold, and $2$-stage stochastic integer programs. We also discuss some of the many applications of these classes.

💡 Research Summary

The paper presents a unified algorithmic theory for integer programming (IP) that parameterizes the problem by two natural measures of the constraint matrix A: the numeric magnitude a (the maximum absolute entry, taken to be at least 2) and a sparsity measure d, defined as the minimum of the primal and dual treedepths of A. The treedepth of a graph is the smallest height of a rooted forest in which every edge of the graph joins an ancestor to a descendant; here it is applied to the graphs describing the non-zero pattern of A. Treedepth is more restrictive than treewidth: bounded treedepth implies bounded treewidth, but not conversely.
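To make the sparsity parameter concrete, the following is a minimal sketch that computes d for a tiny matrix. It assumes the usual convention that the primal graph has one vertex per column, with two columns adjacent when some row is non-zero in both, and that the dual graph is the primal graph of the transpose. The function names `primal_graph` and `treedepth` are illustrative, and the exhaustive recursion is exponential, so it is only meant for toy inputs and is not the paper's method.

```python
from functools import lru_cache
from itertools import combinations

def primal_graph(A):
    """One vertex per column; columns i, j are adjacent if some row is non-zero in both."""
    adj = {j: set() for j in range(len(A[0]))}
    for row in A:
        support = [j for j, v in enumerate(row) if v != 0]
        for i, j in combinations(support, 2):
            adj[i].add(j)
            adj[j].add(i)
    return adj

def treedepth(adj):
    """Exact treedepth by exhaustive recursion (exponential time, tiny graphs only)."""
    def components(vertices):
        remaining, comps = set(vertices), []
        while remaining:
            start = remaining.pop()
            comp, stack = {start}, [start]
            while stack:
                v = stack.pop()
                for w in adj[v] & remaining:
                    remaining.discard(w)
                    comp.add(w)
                    stack.append(w)
            comps.append(frozenset(comp))
        return comps

    @lru_cache(maxsize=None)
    def td(vertices):
        if not vertices:
            return 0
        comps = components(vertices)
        if len(comps) > 1:
            # Disconnected: the deepest component decides.
            return max(td(c) for c in comps)
        # Connected: pick the best root, then recurse on what is left.
        return 1 + min(td(vertices - {v}) for v in vertices)

    return td(frozenset(adj))

# Toy matrix whose primal graph is a path on 4 columns.
A = [[1, 1, 0, 0],
     [0, 1, 1, 0],
     [0, 0, 1, 1]]
td_primal = treedepth(primal_graph(A))
td_dual = treedepth(primal_graph(list(zip(*A))))  # dual graph = primal graph of the transpose
d = min(td_primal, td_dual)
```

For this toy matrix the primal graph is a path on four vertices and the dual graph is a path on three vertices, so the two treedepths differ and d is the smaller of them.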

The main result (Theorem 1) shows that any IP with a separable convex objective can be solved in time g(a,d)·poly(n,L), where n is the number of variables, L is the binary encoding length of the numeric input, and g is a computable function depending only on a and d. Consequently, for any fixed a and d the problem is polynomial‑time solvable, and for linear objectives a strongly polynomial algorithm (Corollary 2) with running time g(a,d)·poly(n) exists, independent of the numeric data.
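For orientation, the problem being solved can be written in the following standard form. This is a sketch of the usual formulation rather than a quotation from the paper: the right-hand side $b$ is a symbol assumed here, while the bounds $l, u$ and the weight vector $w$ match the notation used elsewhere in this summary.

$$
\min \Big\{ f(x) \;:\; Ax = b,\ \ l \le x \le u,\ \ x \in \mathbb{Z}^n \Big\},
\qquad f(x) = \sum_{i=1}^{n} f_i(x_i), \quad \text{each } f_i \text{ convex}.
$$

The linear case corresponds to $f(x) = w^{\top} x$, that is, $f_i(x_i) = w_i x_i$.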

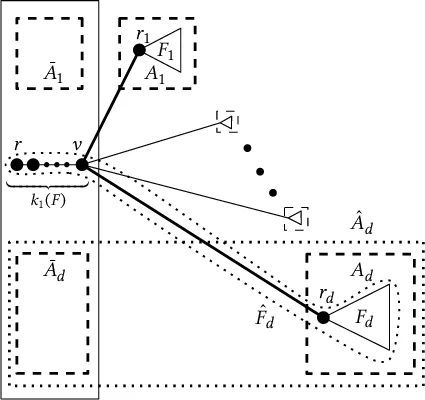

The algorithmic framework is built around the Graver basis 𝔊(A), the set of conformally minimal non-zero integer vectors in the kernel of A. The authors prove new bounds on the norms of Graver elements that depend only on a and d, and they develop an iterative augmentation scheme: starting from a feasible solution, each augmentation step adds a multiple of a Graver element that improves the objective, and the process converges rapidly to an optimum.
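The augmentation idea can be illustrated on a toy instance. The sketch below is an assumption-laden illustration, not the paper's algorithm: it enumerates Graver elements by brute force inside a box of radius `bound` (the paper instead relies on norm bounds in terms of a and d and on much faster subroutines), and it handles only a linear objective `w`. All function names are hypothetical.

```python
from itertools import product

def conformal_leq(x, y):
    """x ⊑ y: x and y lie in the same orthant and |x_i| <= |y_i| for every i."""
    return all(a * b >= 0 and abs(a) <= abs(b) for a, b in zip(x, y))

def graver_in_box(A, bound):
    """Graver elements of A with l_inf norm <= bound, by brute-force enumeration."""
    n = len(A[0])
    kernel = [
        x for x in product(range(-bound, bound + 1), repeat=n)
        if any(x) and all(sum(a * v for a, v in zip(row, x)) == 0 for row in A)
    ]
    # Keep only the conformally minimal kernel vectors.
    return [g for g in kernel
            if not any(h != g and conformal_leq(h, g) for h in kernel)]

def max_step(x, g, lower, upper):
    """Largest integer lam >= 0 with lower <= x + lam * g <= upper (g is non-zero)."""
    limits = []
    for xi, gi, lo, hi in zip(x, g, lower, upper):
        if gi > 0:
            limits.append((hi - xi) // gi)
        elif gi < 0:
            limits.append((xi - lo) // (-gi))
    return min(limits)

def augment(w, x, graver, lower, upper):
    """Apply the best improving step lam * g until no Graver element improves w.x."""
    x = tuple(x)
    while True:
        best_gain, best_x = 0, None
        for g in graver:
            slope = sum(wi * gi for wi, gi in zip(w, g))
            if slope >= 0:
                continue  # moving along g does not decrease the objective
            lam = max_step(x, g, lower, upper)
            if lam * slope < best_gain:
                best_gain = lam * slope
                best_x = tuple(xi + lam * gi for xi, gi in zip(x, g))
        if best_x is None:
            return x
        x = best_x

# Toy instance: minimize 3*x1 + x2 + 2*x3 subject to x1 + x2 + x3 = 4, 0 <= x <= 3.
A = [[1, 1, 1]]
G = graver_in_box(A, bound=2)
x_opt = augment(w=(3, 1, 2), x=(3, 1, 0), graver=G,
                lower=(0, 0, 0), upper=(3, 3, 3))
```

Every step keeps Ax unchanged because Graver elements lie in the kernel of A, so feasibility only needs to be checked against the bounds. The loop stops when no Graver element yields an improving feasible step, which is the optimality criterion the framework rests on.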

A novel proximity theorem bounds the ℓ∞ distance between an optimal fractional solution and a nearby optimal integer solution in terms of n and the norm bound on Graver elements. Using this, the authors design a proximity-scaling algorithm that reduces the original IP to O(log ‖u−l‖∞) auxiliary IPs with polynomially bounded variable ranges. Each auxiliary IP is solved by the augmentation routine, yielding the overall g(a,d)·poly(n,L) complexity.

For linear objectives, the paper uses the Frank-Tardos technique to compute an equivalent weight vector $\bar w$, a reduced objective whose encoding length does not depend on the magnitude of the original weights, while preserving the ordering of feasible solutions. This reduction enables the strongly polynomial algorithm. The same idea is generalized to separable convex objectives.
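What "preserving the ordering" means can be checked directly on small examples: since w·x ≤ w·y exactly when w·(x − y) ≤ 0, two weight vectors order all relevant solutions identically if they agree on the sign of w·z for every difference vector z in range. The sketch below is only a brute-force check of that property, under the assumption that the relevant z satisfy ‖z‖∞ ≤ N. It does not implement the Frank-Tardos construction, and `same_ordering` is a hypothetical helper name.

```python
from itertools import product

def sign(v):
    return (v > 0) - (v < 0)

def same_ordering(w, w_bar, N):
    """True if sign(w.z) == sign(w_bar.z) for every integer z with ||z||_inf <= N."""
    for z in product(range(-N, N + 1), repeat=len(w)):
        s = sign(sum(a * b for a, b in zip(w, z)))
        t = sign(sum(a * b for a, b in zip(w_bar, z)))
        if s != t:
            return False
    return True

# (1, 2) is a valid stand-in for (100, 201) on difference vectors with entries in {-1, 0, 1} ...
ok_small = same_ordering((100, 201), (1, 2), N=1)
# ... but not on a larger range: z = (2, -1) gives -1 under w and 0 under w_bar.
ok_large = same_ordering((100, 201), (1, 2), N=2)
```

The example shows why the size of the reduced weights must grow with the range of vectors being compared: a smaller weight vector can only mimic the original on a bounded set.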

The framework is applied to several important block‑structured families: n‑fold, tree‑fold, 2‑stage stochastic, and multi‑stage stochastic integer programs. In each case the authors obtain near‑linear time FPT algorithms of the form g(parameters)·n·polylog(n)·L, improving on prior results that either had super‑linear dependence on n or weaker parameter dependence.
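As a concrete picture of block structure, the sketch below assembles an n-fold constraint matrix from two blocks, assuming the standard definition: n copies of a block `A1` placed side by side in the top rows, above a block-diagonal arrangement of n copies of a block `A2`. The function name `n_fold` and the plain list-of-lists representation are choices made for this illustration.

```python
def n_fold(A1, A2, n):
    """n copies of A1 side by side, stacked on a block-diagonal matrix of n copies of A2."""
    t = len(A1[0])
    if any(len(row) != t for row in list(A1) + list(A2)):
        raise ValueError("A1 and A2 must have the same number of columns")
    top = [list(row) * n for row in A1]
    diagonal = [
        [0] * (t * i) + list(row) + [0] * (t * (n - 1 - i))
        for i in range(n)      # which diagonal block
        for row in A2          # rows inside that block
    ]
    return top + diagonal

M = n_fold([[1, 1]], [[1, -1]], 3)
# M == [[1,  1, 1,  1, 1,  1],
#       [1, -1, 0,  0, 0,  0],
#       [0,  0, 1, -1, 0,  0],
#       [0,  0, 0,  0, 1, -1]]
```

The number of columns grows linearly with n while the blocks stay fixed, which is why running times that are near-linear in n matter for this family.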

Hardness results complement the algorithmic contributions. The authors prove that replacing treedepth by treewidth makes the problem NP‑hard even for a = 2 and treewidth = 2. Moreover, parameterizing solely by a or solely by d leads to para‑NP‑hardness and W
