Adding Neural Network Controllers to Behavior Trees without Destroying Performance Guarantees

In this paper, we show how Behavior Trees that have performance guarantees, in terms of safety and goal convergence, can be extended with components that were designed using machine learning, without destroying those performance guarantees. Machine learning approaches such as reinforcement learning or learning from demonstration can be very appealing to AI designers who want efficient and realistic behaviors in their agents. However, those algorithms seldom provide guarantees for solving the given task in all situations while keeping the agent safe. Instead, such guarantees are often easier to find for manually designed model-based approaches. In this paper, we exploit the modularity of behavior trees to extend a given design with an efficient, but possibly unreliable, machine learning component in a way that preserves the guarantees. The approach is illustrated with an inverted pendulum example.

💡 Research Summary

The paper addresses a fundamental challenge in autonomous system design: how to incorporate high‑performance, data‑driven controllers—typically neural networks trained by reinforcement learning or imitation—into a behavior tree (BT) architecture that already guarantees safety and goal convergence, without sacrificing those guarantees. The authors exploit the inherent modularity of BTs, modeling each BT node as a continuous‑time controller together with a metadata function that classifies the current state as Running (R), Success (S), or Failure (F). By interpreting the entire BT as a discontinuous dynamical system (DDS), they can define operating regions Ωi that determine which leaf node is active for a given state.
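The node model described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `Status`, `Leaf`, and `go_to_origin`, the scalar toy state, and the thresholds `0.1` and `5.0` are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Sequence

State = Sequence[float]


class Status(Enum):
    RUNNING = "R"
    SUCCESS = "S"
    FAILURE = "F"


@dataclass
class Leaf:
    """A BT leaf: a controller u(x) paired with a metadata function r(x)."""
    name: str
    controller: Callable[[State], float]
    metadata: Callable[[State], Status]


def go_to_origin_status(x: State) -> Status:
    # Hypothetical regions for a 1-D toy state x = (position,).
    if abs(x[0]) < 0.1:
        return Status.SUCCESS   # close enough to the target
    if abs(x[0]) > 5.0:
        return Status.FAILURE   # too far away to recover
    return Status.RUNNING       # still working on it


go_to_origin = Leaf(
    name="go_to_origin",
    controller=lambda x: -x[0],  # simple proportional law (illustrative)
    metadata=go_to_origin_status,
)
```

The set of states where a leaf's controller is the one actually applied by the tree is what the summary calls its operating region Ωi.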

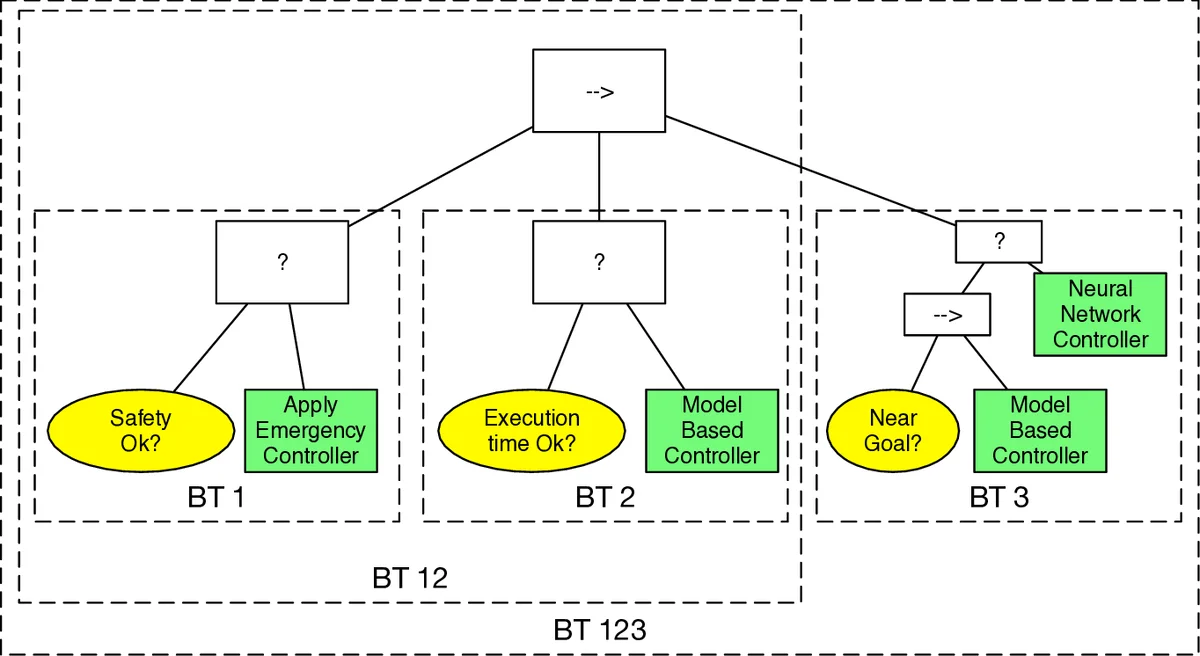

The core contribution is a specific BT structure (Fig. 1) that layers three controllers: a safety controller uS, a model‑based controller uMB with formal guarantees, and a data‑driven controller uDD that is efficient but lacks guarantees. The BT is organized as a Sequence of three children: (1) a safety action, (2) a Fallback between a high‑cost condition and the data‑driven action, and (3) the model‑based action. Metadata regions are chosen so that the failure region of the safety node coincides with an unsafe set O, the success region of the high‑cost condition corresponds to a cost‑threshold region C, and both the data‑driven and model‑based actions share the same goal region G. This design ensures that:

- If the state approaches O, the safety node preempts all other actions.

- If the state enters the high‑cost region C, meaning the accumulated cost has crossed its threshold, control switches from the data‑driven node to the model‑based node.

- Otherwise, the data‑driven node runs, and once it reaches G the model‑based node takes over to guarantee staying in G.
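The switching logic in the list above can be sketched as a small Python program. It assumes the usual BT semantics (a Sequence returns its first child that is not Success, a Fallback returns its first child that is not Failure). The toy state `x = (position, accumulated_cost)`, the region thresholds, and the three proportional control laws are hypothetical placeholders, not the controllers from the paper.

```python
from enum import Enum


class Status(Enum):
    RUNNING = "R"
    SUCCESS = "S"
    FAILURE = "F"


# Hypothetical regions on a toy state x = (position, accumulated_cost).
def in_G(x): return abs(x[0]) < 0.1      # goal region G
def in_O(x): return abs(x[0]) >= 2.0     # unsafe set O
def near_O(x): return abs(x[0]) >= 1.5   # margin where the safety node runs
def in_C(x): return x[1] >= 10.0         # high-cost region C


# Each node maps a state to (name, control input, status).
def safety(x):
    u = -2.0 * x[0]
    if in_O(x):
        return "safety", u, Status.FAILURE
    if near_O(x):
        return "safety", u, Status.RUNNING
    return "safety", u, Status.SUCCESS


def high_cost(x):
    # Conditions carry no control input and never return RUNNING.
    return "high_cost", None, Status.SUCCESS if in_C(x) else Status.FAILURE


def data_driven(x):
    u = -5.0 * x[0]  # stand-in for a learned policy
    return "data_driven", u, Status.SUCCESS if in_G(x) else Status.RUNNING


def model_based(x):
    u = -1.0 * x[0]  # stand-in for a controller with guarantees
    return "model_based", u, Status.SUCCESS if in_G(x) else Status.RUNNING


def sequence(*children):
    def tick(x):
        for child in children:
            result = child(x)
            if result[2] is not Status.SUCCESS:
                return result
        return result  # all children succeeded: report the last one
    return tick


def fallback(*children):
    def tick(x):
        for child in children:
            result = child(x)
            if result[2] is not Status.FAILURE:
                return result
        return result  # all children failed: report the last one
    return tick


tree = sequence(safety, fallback(high_cost, data_driven), model_based)
```

Ticking `tree` with a state returns the active leaf: for example, `tree((1.0, 0.0))` selects `data_driven`, while `tree((1.0, 12.0))` falls through to `model_based` because the high‑cost condition succeeds.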

The authors formalize safety and performance using three definitions: Finite‑time Success (FTS), Safe (no entry into O), and Safeguarding (FTS plus safety). Lemma 2 shows that if a controller possesses a locally exponentially stable equilibrium within a region Bi that is disjoint from O, then the controller is both FTS and safe; if the success region is strictly inside Bi, it is also safeguarding. By constructing uS and uMB to satisfy these exponential stability conditions, the overall BT inherits the guarantees regardless of the behavior of uDD.
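As a rough guide, the first two definitions could be written as follows. This is a sketch and not the paper's exact statements: the closed‑loop trajectory $x(t)$, the set of initial states $I$, the success region $S$, and the time bound $\tau$ are notation assumed here for illustration.

```latex
% Finite-time Success (sketch): every trajectory starting in I
% reaches the success region S within a uniform time bound tau.
\exists\, \tau < \infty \ \text{such that}\ \forall\, x(0) \in I,\ \exists\, t \le \tau :\ x(t) \in S

% Safe (sketch): no trajectory starting in I ever enters the unsafe set O.
\forall\, x(0) \in I,\ \forall\, t \ge 0 :\ x(t) \notin O
```

Safeguarding, as summarized above, combines the two properties.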

A detailed inverted pendulum swing‑up example illustrates the theory. The safety controller prevents the pendulum from falling beyond a safe angle, the model‑based LQR controller guarantees convergence to the upright position, and a neural‑network policy attempts fast swing‑up. Simulations demonstrate that when the neural policy respects the cost bound and stays away from the unsafe angle, it drives the pendulum efficiently; if it violates either condition, the BT automatically switches to the appropriate safe or model‑based controller, preserving safety and eventual convergence.
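The switching behavior described above can be reproduced in a toy simulation. Everything specific here is an assumption made for illustration: the unit‑normalized dynamics `theta'' = sin(theta) + u` near upright, the thresholds, the use of elapsed time as the accumulated cost, the gravity‑cancelling PD law `stabilizer` (standing in for both the safety and model‑based controllers, and not an LQR design), and the deliberately poor constant push `unreliable_policy` (standing in for a misbehaving learned policy, not a trained network).

```python
import math

DT = 0.001
THETA_UNSAFE = 0.5   # hypothetical unsafe set O = {|theta| >= 0.5}
THETA_MARGIN = 0.2   # safety node runs when |theta| >= 0.2
COST_LIMIT = 2.0     # hypothetical high-cost region C = {cost >= 2.0}


def stabilizer(theta, omega):
    # Cancels gravity and applies a critically damped PD law.
    return -math.sin(theta) - 4.0 * theta - 4.0 * omega


def unreliable_policy(theta, omega):
    # Deliberately bad: always pushes away from upright.
    return 0.5


def select(theta, omega, cost):
    """Pick the active controller in the same priority order as the BT."""
    in_goal = abs(theta) < 0.01 and abs(omega) < 0.01
    if abs(theta) >= THETA_MARGIN:
        return "safety", stabilizer(theta, omega)
    if cost >= COST_LIMIT or in_goal:
        return "model_based", stabilizer(theta, omega)
    return "data_driven", unreliable_policy(theta, omega)


def simulate(t_end=10.0):
    theta, omega, cost = 0.1, 0.0, 0.0
    max_abs_theta = 0.0
    used = set()
    for _ in range(round(t_end / DT)):
        name, u = select(theta, omega, cost)
        used.add(name)
        omega += (math.sin(theta) + u) * DT  # semi-implicit Euler step
        theta += omega * DT
        cost += DT                           # elapsed time as cost
        max_abs_theta = max(max_abs_theta, abs(theta))
    return theta, omega, max_abs_theta, used


final_theta, final_omega, max_abs_theta, used = simulate()
```

In this sketch the poor policy is interrupted by the safety law whenever the angle reaches the margin, and once the cost limit is hit the stabilizer takes over permanently, so the angle stays out of the assumed unsafe set and ends near upright.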

In summary, the paper provides a rigorous, modular framework for embedding unreliable but efficient learning‑based controllers into BTs while preserving formal safety and convergence guarantees. The approach is general in the sense that it applies to any BT‑based system for which a safety controller and a model‑based controller with the required guarantees are available, and it opens avenues for combining the adaptability of machine learning with the reliability of classical control in autonomous robotics and AI applications. Future work may extend the method to multi‑objective tasks, richer hybrid dynamics, and online learning scenarios.
