Output-sensitive Complexity of Multiobjective Combinatorial Optimization

We study output-sensitive algorithms and complexity for multiobjective combinatorial optimization (MOCO) problems. In this computational complexity framework, an algorithm for a general enumeration problem is regarded as efficient if it is output-sensitive, i.e., its running time is bounded by a polynomial in the input and the output size. We provide practical examples of MOCO problems for which such an efficient algorithm exists, as well as problems for which no efficient algorithm exists under mild complexity-theoretic assumptions.

💡 Research Summary

The paper “Output‑Sensitive Complexity of Multiobjective Combinatorial Optimization” investigates multi‑objective combinatorial optimization (MOCO) problems from the perspective of output‑sensitive complexity. Traditional complexity analyses for MOCO focus on decision versions and NP‑hardness, which capture the difficulty of deciding whether a single solution of a given quality exists but do not reflect the potentially exponential size of the Pareto front (the set of all nondominated objective vectors). The authors argue that measuring running time solely with respect to input size is misleading for enumeration tasks, where the output size may dominate the computational effort.
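To make the point about exponential Pareto fronts concrete, here is a minimal sketch that is not taken from the paper. It assumes a hypothetical chain graph with `k` stages, each offering two parallel arcs with costs `(2**i, 0)` and `(0, 2**i)`; the function names `dominates`, `pareto_front`, and `chain_path_costs` are illustrative. Every one of the `2**k` paths then has a distinct, nondominated cost vector, so any algorithm that lists the front must spend time exponential in `k`.

```python
from itertools import product


def dominates(a, b):
    """True if a is no worse than b in every objective and a != b (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and a != b


def pareto_front(vectors):
    """Nondominated vectors of a finite collection, found by pairwise filtering."""
    unique = set(vectors)
    return sorted(v for v in unique if not any(dominates(u, v) for u in unique))


def chain_path_costs(k):
    """Cost vectors of all s-t paths in the hypothetical k-stage chain graph.

    A path picks one of the two parallel arcs in each stage i:
    bit 0 adds 2**i to the first objective, bit 1 adds 2**i to the second.
    """
    costs = []
    for choice in product((0, 1), repeat=k):
        c1 = sum(2**i for i, bit in enumerate(choice) if bit == 0)
        c2 = sum(2**i for i, bit in enumerate(choice) if bit == 1)
        costs.append((c1, c2))
    return costs


if __name__ == "__main__":
    for k in range(1, 6):
        print(k, len(pareto_front(chain_path_costs(k))))
```

The graph has only `2 * k` arcs, yet the front doubles with each added stage, which is why running time measured in the input size alone says little about enumeration.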

To address this, the authors adopt the framework of output‑sensitive algorithms: an algorithm is considered efficient if its total running time is bounded by a polynomial in both the input size and the size of the output (the number of Pareto‑optimal points). They formalize this notion using the theory of enumeration problems and its classes TotalP (output‑polynomial time), IncP (incremental polynomial time), DelayP (polynomial delay), and PSDelayP (polynomial delay with polynomial space). An algorithm that satisfies the TotalP condition is called output‑sensitive. The stronger conditions additionally bound the time between consecutive outputs: for IncP by a polynomial in the input size and the number of solutions found so far, and for DelayP by a polynomial in the input size alone.
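For reference, the standard enumeration-complexity definitions behind these class names can be sketched as follows. The notation is an assumption made for this sketch, not the paper's: $x$ is the input, $|x|$ its encoding length, $k$ the number of solutions, $p$ a polynomial, $T_{\text{total}}(x)$ the total running time, and $T_i(x)$ the time between the $i$-th and $(i+1)$-th output (with $T_0$ the time to the first output and $T_k$ the time from the last output to termination).

```latex
\begin{align*}
\textbf{TotalP:}\quad & T_{\text{total}}(x) \le p(|x|, k), \\
\textbf{IncP:}\quad   & T_i(x) \le p(|x|, i) \quad \text{for } i = 0, \dots, k, \\
\textbf{DelayP:}\quad & T_i(x) \le p(|x|)    \quad \text{for } i = 0, \dots, k, \\[4pt]
& \text{DelayP} \subseteq \text{IncP} \subseteq \text{TotalP}.
\end{align*}
```

PSDelayP adds to the DelayP condition the requirement that the algorithm uses space polynomial in $|x|$.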

The paper’s contributions are twofold: (1) it identifies concrete MOCO problems that admit output‑sensitive algorithms, and (2) it proves that for other MOCO problems no such algorithm can exist unless widely believed complexity‑theoretic assumptions fail (e.g., unless P = NP).

Positive results – problems solvable in output‑sensitive time

The authors first examine the multi‑objective global minimum‑cut problem. The single‑objective minimum‑cut problem can be solved in polynomial time, and they show that the Pareto front of the multi‑objective version is of polynomial size for any fixed number of objectives d. By exploiting the structure of cuts, duality, and the fact that each cut corresponds to a point in a d‑dimensional objective space, they construct an enumeration algorithm that outputs every nondominated cut in time polynomial in the graph size and the number of Pareto points. Hence the problem belongs to TotalP (and even DelayP).

They also discuss multi‑objective linear programming. For d = 2, the set of supported (i.e., extreme) nondominated points can be enumerated with polynomial delay using classic parametric simplex or weighted‑sum techniques. This shows that, while enumerating the entire Pareto front may be hard, enumerating its extreme nondominated points can be done in an output‑sensitive way in many practical cases.
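The weighted-sum idea for d = 2 can be illustrated with the classic dichotomic search. The sketch below is an illustration under stated assumptions, not the authors' algorithm: an explicit finite list of integer objective vectors stands in for the feasible set, the inner `weighted_min` plays the role of a single-objective solver, and all names are hypothetical. Each weighted-sum call either discovers a new extreme nondominated point or certifies that none lies between two known ones, so the number of calls is linear in the number of points reported.

```python
def extreme_nondominated(points):
    """Extreme nondominated points of a finite biobjective set (minimization).

    `points` is a list of (f1, f2) integer tuples standing in for the
    objective vectors of all feasible solutions.
    """

    def weighted_min(w1, w2):
        # Weighted-sum oracle. Ties are broken by the first objective so the
        # result is an endpoint of the optimal face, i.e. an extreme point.
        return min(points, key=lambda y: (w1 * y[0] + w2 * y[1], y[0]))

    left = min(points, key=lambda y: (y[0], y[1]))   # lexicographic min of (f1, f2)
    right = min(points, key=lambda y: (y[1], y[0]))  # lexicographic min of (f2, f1)
    if left == right:
        return [left]

    def between(l, r):
        # Weights normal to the segment from l to r; both are positive here.
        w1, w2 = l[1] - r[1], r[0] - l[0]
        y = weighted_min(w1, w2)
        if w1 * y[0] + w2 * y[1] < w1 * l[0] + w2 * l[1]:
            # y lies strictly below the segment: a new extreme point.
            return between(l, y) + [y] + between(y, r)
        return []  # nothing below the segment, so no extreme point in between

    return [left] + between(left, right) + [right]


if __name__ == "__main__":
    sample = [(1, 10), (2, 7), (3, 6), (4, 3), (6, 2), (5, 5), (9, 1), (3, 9)]
    print(extreme_nondominated(sample))
```

In the sample, `(3, 6)` is nondominated but lies above the segment joining `(2, 7)` and `(4, 3)`, so it is not reported: no weighted sum can find it, which is the gap between the extreme points and the full Pareto front.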

Negative results – problems unlikely to admit output‑sensitive algorithms

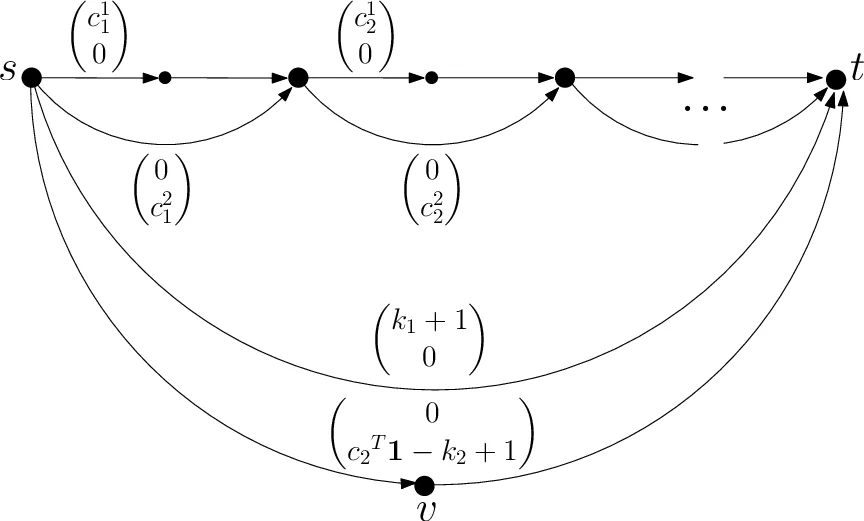

The central hardness result concerns the multi‑objective shortest‑path problem (MOSP). The authors reduce from the NP‑complete 0‑1 knapsack problem to MOSP in such a way that any output‑sensitive algorithm for MOSP would yield a polynomial‑time algorithm for knapsack, implying P = NP. The reduction ensures that the Pareto front can be forced to be polynomially bounded, yet enumerating all nondominated paths remains as hard as solving the underlying NP‑complete decision problem. Consequently, MOSP does not belong to TotalP under the standard assumption P ≠ NP.

More generally, they present a method for proving that an enumeration problem is not efficiently solvable in the output‑sensitive sense: if one can embed a known NP‑hard decision problem into the enumeration task such that a polynomial‑time output‑sensitive algorithm would solve the decision problem, then the enumeration problem cannot be in TotalP unless P = NP.
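One standard way to make this kind of argument precise is a timing argument, given here as a hedged sketch rather than as the paper's exact proof. Assume a hypothetical enumeration algorithm $A$ whose running time on an input $x$ with $k$ solutions is at most $p(|x|, k)$, where $p$ is a polynomial that is nondecreasing in $k$, and let $M$ be a candidate set of solutions for $x$.

```latex
\begin{enumerate}
  \item Run $A$ on $x$ for at most $p(|x|, |M|)$ steps.
  \item If $A$ halts within this budget, compare its output with $M$
        and answer ``complete'' if and only if the two sets coincide.
  \item If $A$ has not halted, its running time exceeds $p(|x|, |M|)$.
        Since the running time is at most $p(|x|, k)$, we get
        $p(|x|, k) > p(|x|, |M|)$ and therefore $k > |M|$:
        answer ``incomplete''.
\end{enumerate}
```

The procedure runs in time polynomial in $|x| + |M|$. So if deciding whether a given set $M$ already contains all solutions is NP-hard or coNP-hard, an output-sensitive algorithm for the enumeration problem would imply P = NP.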

Smoothed analysis of Pareto‑front size

To reconcile the theoretical possibility of exponential Pareto fronts with empirical observations that they are often modest, the paper invokes smoothed analysis. Following Brunsch and Röglin (2012), they consider a model where the first objective’s coefficients are adversarially chosen, while the remaining d − 1 objectives are drawn from bounded probability densities. Under this model the expected size of the Pareto front is O(n^{2d} φ^{d}), polynomial in the problem size n for any fixed d and density bound φ. This result justifies the practical relevance of output‑sensitive algorithms: when the number of objectives is small, the expected output size is manageable, and algorithms that scale with the output are preferable to worst‑case exponential‑time methods.

Connections to multi‑objective linear programming

The authors explore the relationship between MOCO and multi‑objective linear programming (MOLP). They show that computing all extreme nondominated points of an MOLP is output‑sensitive, while computing the full Pareto front can be exponentially harder. For d = 2 they provide new algorithms that enumerate supported solutions with polynomial delay, improving upon earlier results.

Conclusion

The paper establishes output‑sensitive complexity as a natural and powerful lens for studying MOCO problems. It demonstrates that some classic MOCO problems (minimum cut, certain linear‑programming variants) admit algorithms whose running time is polynomial in both input and output, making them tractable in practice when the Pareto front is small. Conversely, it proves that other problems (multi‑objective shortest path) are unlikely to admit such algorithms unless major complexity class collapses occur. By integrating smoothed analysis, the authors explain why, despite worst‑case exponential Pareto fronts, many real‑world instances exhibit modest output sizes, further motivating the development of output‑sensitive methods. The work thus bridges theoretical complexity, algorithm design, and practical considerations in multi‑objective combinatorial optimization.
