Randomized Constraints Consensus for Distributed Robust Mixed-Integer Programming

In this paper, we consider a network of processors aiming at cooperatively solving mixed-integer convex programs subject to uncertainty. Each node only knows a common cost function and its local uncertain constraint set. We propose a randomized, distributed algorithm working under asynchronous, unreliable and directed communication. The algorithm is based on a local computation and communication paradigm. At each communication round, nodes perform two updates: (i) a verification step in which they check, in a randomized fashion, the robust feasibility of a candidate optimal point, and (ii) an optimization step in which they exchange their candidate basis (the minimal set of constraints defining a solution) with neighbors and locally solve an optimization problem. As the main result, we show that processors can stop the algorithm after a finite number of communication rounds (either because verification has been successful for a sufficient number of rounds or because a given threshold has been reached), so that candidate optimal solutions are consensual. The common solution is proven to be, with high confidence, feasible and hence optimal for the entire set of uncertainty except a subset having an arbitrarily small probability measure. We show the effectiveness of the proposed distributed algorithm using two examples: a random, uncertain mixed-integer linear program and a distributed localization problem in wireless sensor networks. The distributed algorithm is implemented on a multi-core platform in which the nodes communicate asynchronously.

💡 Research Summary

This paper addresses the challenging problem of solving robust mixed‑integer convex programs (RMICP) in a fully distributed manner over networks that may be asynchronous, unreliable, and directed. Each processor knows only a common linear cost vector and its own local uncertain constraint set, while the global feasible set is the intersection of all local constraints over an (often infinite) uncertainty set. Traditional worst‑case robust optimization is computationally intractable for such problems, and scenario‑based methods require a central unit to collect samples. The authors propose a novel randomized consensus algorithm that eliminates the need for a central coordinator and works under minimal assumptions on the communication graph (uniform joint strong connectivity).

The theoretical foundation relies on Helly‑type theorems and the concept of an S‑Helly number $h(S)$, which for mixed‑integer spaces $S=\mathbb{Z}^{d_Z}\times\mathbb{R}^{d_R}$ equals $(d_R+1)2^{d_Z}$. This number determines the combinatorial dimension of the problem and thus the maximal size of a “basis”, a minimal subset of constraints that defines the optimal solution. Because a basis contains at most $h(S)-1$ constraints, the local subproblems remain tractable and the amount of information exchanged per communication round is bounded.
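As a quick illustration of how the basis size scales, the sketch below evaluates the mixed‑integer Helly number $(d_R+1)2^{d_Z}$ and the resulting bound $h(S)-1$ on the basis size. The function names are hypothetical and chosen only for this example.

```python
def helly_number(d_z: int, d_r: int) -> int:
    """Helly number of S = Z^{d_z} x R^{d_r}, i.e. (d_r + 1) * 2^{d_z}."""
    if d_z < 0 or d_r < 0:
        raise ValueError("dimensions must be non-negative")
    return (d_r + 1) * 2 ** d_z


def max_basis_size(d_z: int, d_r: int) -> int:
    """Upper bound h(S) - 1 on the number of constraints in a basis."""
    return helly_number(d_z, d_r) - 1


# Purely continuous case recovers Helly's theorem (d + 1),
# purely integer case recovers the 2^d bound.
print(helly_number(0, 2))    # 3
print(helly_number(2, 0))    # 4
print(max_basis_size(3, 2))  # 23
```

The bound grows exponentially in the number of integer variables but only linearly in the number of continuous ones, so the per‑round message size stays small mainly when $d_Z$ is modest.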

The algorithm proceeds in repeated rounds, each consisting of two steps:

- Randomized Verification: Each node samples a finite number of uncertainty realizations from its own local distribution and checks whether the current candidate solution satisfies its sampled constraints. If all sampled constraints are satisfied, the node increments a local success counter; otherwise it records the first violating constraint as an “active” constraint.

- Optimization with Basis Exchange: Nodes broadcast their current basis (the set of active constraints) to their out‑neighbors. Upon receiving bases from in‑neighbors, a node forms a new constraint set consisting of its own basis together with those received, solves a deterministic mixed‑integer program defined by this set, and updates both its candidate solution and its basis.
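The two steps can be sketched on a deliberately tiny toy problem; this is an illustration under stated assumptions, not the authors' implementation. Each node holds one uncertain lower bound `base + q` with `q` uniform on [0, 1), the decision variable is a single integer `x` to be minimized, and nodes communicate synchronously on a directed ring. All names (`solve`, `verify`, `node_round`, `run_ring`) are hypothetical, and resetting the success counter when the candidate changes is an assumption of this sketch.

```python
import math
import random


def solve(bounds):
    """Minimize integer x subject to x >= b for every b in bounds.
    Returns the minimizer and its basis (the single binding bound)."""
    tightest = max(bounds)
    return math.ceil(tightest), [tightest]


def verify(x, base, samples, rng):
    """Draw `samples` realizations of the uncertain bound base + q, q ~ U[0, 1).
    Return the first violated bound, or None if x satisfies all of them."""
    for _ in range(samples):
        bound = base + rng.random()
        if x < bound:
            return bound
    return None


def node_round(node, received, samples, rng):
    """One round for one node: verification, then optimization on pooled bases."""
    violated = verify(node["x"], node["base"], samples, rng)
    node["successes"] = node["successes"] + 1 if violated is None else 0
    # Pool own basis, bases received from in-neighbors, and any violated constraint.
    pool = node["basis"] + [b for basis in received for b in basis]
    if violated is not None:
        pool.append(violated)
    new_x, node["basis"] = solve(pool)
    if new_x != node["x"]:  # assumption: a changed candidate restarts the count
        node["successes"] = 0
    node["x"] = new_x


def run_ring(bases, rounds, samples, seed=0):
    """Simulate synchronous rounds on a directed ring (node i-1 sends to node i)."""
    rng = random.Random(seed)
    nodes = []
    for base in bases:
        x, basis = solve([base])  # start from the nominal constraint (q = 0)
        nodes.append({"base": base, "x": x, "basis": basis, "successes": 0})
    for _ in range(rounds):
        sent = [list(n["basis"]) for n in nodes]  # snapshot of outgoing bases
        for i, node in enumerate(nodes):
            node_round(node, [sent[i - 1]], samples, rng)
    return nodes
```

In this toy setting the basis has one element, matching $h(S)-1 = 1$ for $S=\mathbb{Z}$. Calling `run_ring([0.2, 1.5, 2.7], rounds=6, samples=20)` should leave every node at `x = 4`, the robust optimum, unless the node with the tightest bound misses a violation in all of its samples, which is extremely unlikely with these numbers.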

If a node’s verification succeeds for a pre‑specified number of consecutive rounds, or if a global iteration limit is reached, the algorithm stops. The authors prove that under the stated assumptions the algorithm terminates after a finite number of communication rounds with high probability. The resulting consensus solution is feasible for all of the uncertainty space except a subset of probability measure at most $\epsilon$, and this holds with confidence at least $1-\delta$, where $\epsilon$ and $\delta$ are user‑defined. The proof combines probabilistic bounds from Monte‑Carlo sampling with the finite‑basis property derived from Helly’s theorem, yielding a “finite‑stopping” theorem that is rare in the distributed robust optimization literature.
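To give a feel for how $\epsilon$ and $\delta$ translate into a number of samples, the sketch below uses the standard one‑shot argument: a point whose violation probability exceeds $\epsilon$ passes $N$ independent checks with probability below $(1-\epsilon)^N$, so $N \ge \ln(1/\delta)/\ln(1/(1-\epsilon))$ suffices for a single verification. This is not necessarily the bound used in the paper, which has to account for repeated verification across rounds and is not reproduced in this summary; the function name is hypothetical.

```python
import math


def samples_for_single_check(eps: float, delta: float) -> int:
    """Smallest N with (1 - eps)^N <= delta for one randomized feasibility check.

    Assumption: a single, one-shot verification; a sequential algorithm that
    verifies in every round generally needs a larger, round-dependent N.
    """
    if not (0.0 < eps < 1.0 and 0.0 < delta < 1.0):
        raise ValueError("eps and delta must lie strictly between 0 and 1")
    return math.ceil(math.log(1.0 / delta) / math.log(1.0 / (1.0 - eps)))


n = samples_for_single_check(eps=0.1, delta=0.01)
print(n)  # 44
```

The sample count grows only logarithmically in $1/\delta$ but roughly like $1/\epsilon$ for small $\epsilon$, which is why tightening the violation level is more expensive than tightening the confidence.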

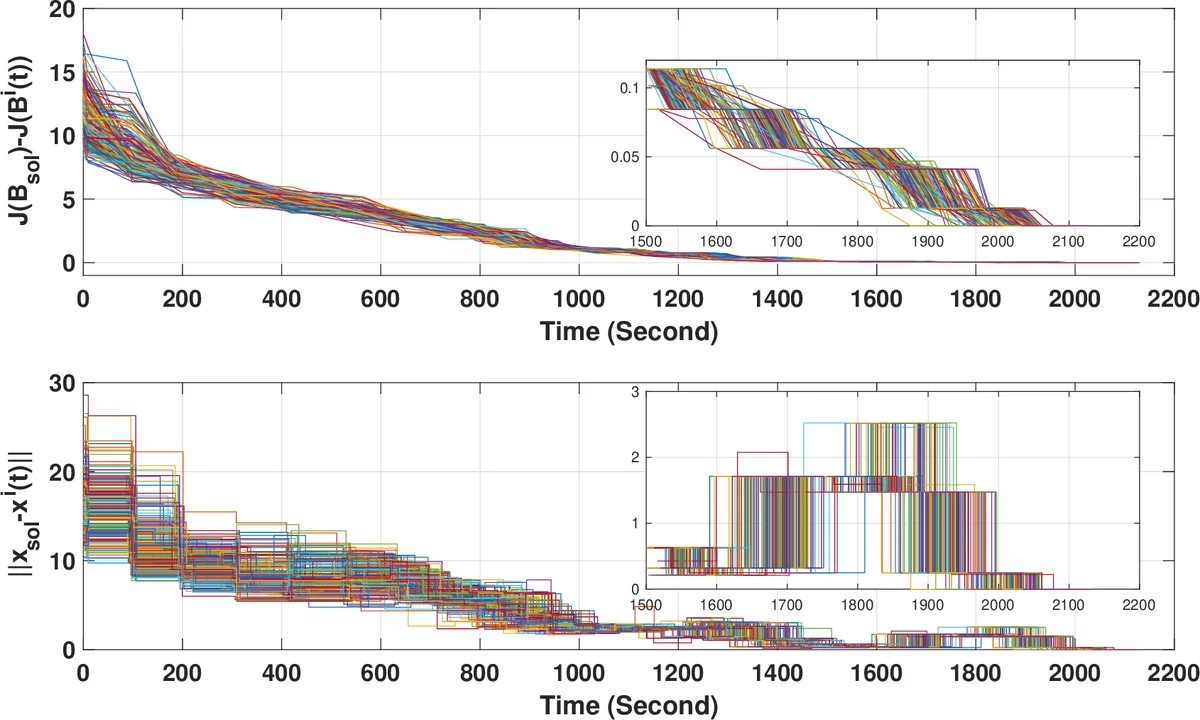

The paper includes extensive numerical experiments. The first test case solves a randomly generated uncertain mixed‑integer linear program with 20 agents, demonstrating that the proposed method achieves comparable optimal values to a centralized scenario approach while using dramatically fewer samples. The second case studies a distributed localization problem in a wireless sensor network, where each sensor’s distance measurements are corrupted by random noise. The algorithm converges to sub‑meter accuracy within 5–10 communication rounds, even when the communication graph is directed, time‑varying, and experiences up to 20 % packet loss. Implementation on a multi‑core platform shows that the per‑node computational burden is modest (solving small MILPs) and that communication overhead is limited to transmitting a basis (at most a handful of constraints) and a single candidate vector per round.

Key contributions of the work are:

- A fully decentralized algorithm for robust mixed‑integer optimization that does not require any central sampling or global knowledge of the uncertainty set.

- Exploitation of Helly‑type combinatorial geometry to bound the size of local subproblems, ensuring scalability.

- A probabilistic verification mechanism that provides explicit $(\epsilon,\delta)$ guarantees on robustness.

- Rigorous finite‑time convergence analysis under asynchronous, directed, and unreliable communication.

- Demonstration of practical viability on realistic benchmark problems and on a multi‑core implementation.

The authors suggest several avenues for future research, including adaptive sampling for time‑varying uncertainties, privacy‑preserving encrypted basis exchange, and extensions to multi‑objective or nonlinear objective functions. The presented framework has immediate relevance to emerging cyber‑physical systems such as smart grids, autonomous vehicle fleets, and large‑scale IoT deployments, where distributed decision‑making under uncertainty and mixed‑integer constraints is a fundamental requirement.
