Node Graph Optimization Using Differentiable Proxies

Graph-based procedural materials are ubiquitous in content production industries. Procedural models allow the creation of photorealistic materials with parametric control for flexible editing of appearance. However, designing a specific material is a time-consuming process in terms of building a model and fine-tuning parameters. Previous work [Hu et al. 2022; Shi et al. 2020] introduced material graph optimization frameworks for matching target material samples. However, these previous methods were limited to optimizing differentiable functions in the graphs. In this paper, we propose a fully differentiable framework which enables end-to-end gradient based optimization of material graphs, even if some functions of the graph are non-differentiable. We leverage the Differentiable Proxy, a differentiable approximator of a non-differentiable black-box function. We use our framework to match structure and appearance of an output material to a target material, through a multi-stage differentiable optimization. Differentiable Proxies offer a more general optimization solution to material appearance matching than previous work.

💡 Research Summary

The paper addresses a fundamental limitation in procedural material graph optimization: the inability to differentiate through generator nodes, which often contain discrete parameters that control the structural aspects of a material (e.g., pattern type, scale, orientation). Prior work such as Shi et al.’s MATCH system could only back‑propagate through differentiable filter nodes, leaving the structural part of the graph fixed and requiring manual tuning or separate, costly gradient‑free searches.

To overcome this, the authors introduce the concept of a Differentiable Proxy (DP). A DP is a neural network that approximates a given non‑differentiable 2‑D image generator G(θ) by learning a mapping Ĝ(θ) ≈ G(θ) for the same procedural parameters θ. The proxy is built on a modified StyleGAN2 architecture: instead of feeding a random latent vector Z and per‑layer noise, the network receives the procedural parameters θ directly, passes them through fully‑connected layers to an intermediate latent space W, and then uses deterministic AdaIN blocks (noise removed) to synthesize the image. This design guarantees a one‑to‑one correspondence between θ and the output, which is essential for gradient‑based optimization.
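The deterministic path from θ to the output can be illustrated with a minimal pure-Python sketch. It is not the StyleGAN2-based network described above: the weights `W_MAP` and `B_MAP`, the flattened single-channel `CONST_FEATURE`, and the function names are all hypothetical stand-ins, chosen only to show a fully-connected mapping followed by an AdaIN-style modulation with no random inputs.

```python
import math

def linear(x, weights, biases):
    """Fully connected layer: y_j = sum_i weights[j][i] * x[i] + biases[j]."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]

def adain(channel, scale, shift, eps=1e-8):
    """Normalize one feature channel to zero mean and unit variance, then restyle it."""
    n = len(channel)
    mean = sum(channel) / n
    var = sum((v - mean) ** 2 for v in channel) / n
    return [scale * (v - mean) / math.sqrt(var + eps) + shift for v in channel]

# Hypothetical fixed values standing in for trained weights.
W_MAP = [[0.5, -0.2], [0.1, 0.8]]      # maps theta (2 parameters) to w (2 dims)
B_MAP = [1.0, 0.0]
CONST_FEATURE = [0.0, 1.0, 2.0, 3.0]   # constant input feature, flattened

def proxy_forward(theta):
    """theta -> w -> styled feature, with no random latent and no noise inputs."""
    w = linear(theta, W_MAP, B_MAP)
    scale, shift = w[0], w[1]
    return adain(CONST_FEATURE, scale, shift)

out = proxy_forward([0.4, 0.6])
```

Because nothing in `proxy_forward` is sampled, calling it twice with the same θ returns the same values, which is the property the summary identifies as essential for gradient-based optimization.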

Training data for each proxy are generated automatically by sampling the original generator across its parameter space, producing pairs (θ, I). The loss combines an L₁ pixel term, a deep‑feature loss (L₁ on VGG‑19 activations), a style loss (L₁ on Gram matrices), and optionally an adversarial loss for highly stochastic generators (e.g., scratch patterns). The weighting (λ₀ = λ₂ = 1, λ₁ = 10, λ₃ > 0 only when needed) ensures that the network learns the deterministic mapping while the adversarial term only refines stochastic detail.
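Written out, the combined training objective plausibly takes the form below. The assignment of λ₀ through λ₃ to the four terms follows the order in which they are listed above and is an assumption, as are the symbols φ_l (VGG‑19 activations at layer l) and Gram(·) (the Gram matrix of those activations); the summary does not state which layers are used.

```latex
\mathcal{L}(\theta) =
    \lambda_0 \,\bigl\lVert \hat{G}(\theta) - G(\theta) \bigr\rVert_1
  + \lambda_1 \sum_{l} \bigl\lVert \phi_l\bigl(\hat{G}(\theta)\bigr) - \phi_l\bigl(G(\theta)\bigr) \bigr\rVert_1
  + \lambda_2 \sum_{l} \bigl\lVert \mathrm{Gram}\bigl(\phi_l(\hat{G}(\theta))\bigr) - \mathrm{Gram}\bigl(\phi_l(G(\theta))\bigr) \bigr\rVert_1
  + \lambda_3 \,\mathcal{L}_{\mathrm{adv}},
\qquad \lambda_0 = \lambda_2 = 1,\quad \lambda_1 = 10,\quad \lambda_3 \ge 0 .
```

Setting λ₃ = 0 recovers the purely deterministic objective used for generators that do not need the adversarial term.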

The optimization pipeline consists of three stages:

- Pre‑optimization (Stage I) – Mirrors the original MATCH approach, quickly aligning continuous material properties (albedo, roughness, etc.) using gradient descent on the original graph.
- Global optimization (Stage II) – Replaces each non‑differentiable generator with its DP, allowing simultaneous gradient‑based updates of both generator and filter parameters. A relatively large learning rate encourages the discovery of a good structural initialization.
- Post‑optimization (Stage II*) – Swaps the proxies back to the original generators, fixing their parameters to the values found in Stage II, and fine‑tunes only the filter nodes with a reduced learning rate. This step restores the exact physical behavior of the original generators while preserving the structural match achieved through the proxy.
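The three stages can be sketched on a toy problem. Everything here is a hypothetical stand-in rather than the authors' implementation: the "generator" draws a hard edge in a 20-pixel 1-D image, the "proxy" is a hand-written sigmoid instead of a trained network, the only "filter" is a scalar gain, and the learning rates and step counts are picked for this toy only.

```python
import math

N = 20                                     # pixels in the toy 1-D "image"
POS = [(i + 0.5) / N for i in range(N)]    # pixel centers in [0, 1]
TAU = 0.05                                 # softness of the proxy edge (hypothetical)

def generator(theta):
    """Black-box generator: hard edge at theta, zero gradient almost everywhere."""
    return [1.0 if p < theta else 0.0 for p in POS]

def proxy(theta):
    """Smooth stand-in for generator(); a trained network in the real method."""
    return [1.0 / (1.0 + math.exp(-(theta - p) / TAU)) for p in POS]

def loss(image, gain, target):
    """Mean squared error after the differentiable filter (a simple gain)."""
    return sum((gain * g - t) ** 2 for g, t in zip(image, target)) / N

def grad_gain(image, gain, target):
    """Derivative of the loss with respect to the filter gain."""
    return sum(2.0 * (gain * g - t) * g for g, t in zip(image, target)) / N

def grad_theta(theta, gain, target):
    """Gradient through the proxy, using d sigmoid / d theta = s * (1 - s) / TAU."""
    s = proxy(theta)
    return sum(2.0 * (gain * si - t) * gain * si * (1.0 - si) / TAU
               for si, t in zip(s, target)) / N

def optimize(target, theta=0.3, gain=1.0):
    # Pre-optimization: original graph, filter parameter only.
    for _ in range(500):
        gain -= 0.02 * grad_gain(generator(theta), gain, target)
    stage1_loss = loss(generator(theta), gain, target)
    # Global optimization: proxy replaces the generator, joint update.
    for _ in range(500):
        g_t = grad_theta(theta, gain, target)
        g_a = grad_gain(proxy(theta), gain, target)
        theta -= 0.02 * g_t
        gain -= 0.02 * g_a
    # Post-optimization: original generator restored, theta frozen, smaller step.
    image = generator(theta)
    for _ in range(2000):
        gain -= 0.005 * grad_gain(image, gain, target)
    return theta, gain, stage1_loss, loss(image, gain, target)

target = [2.0 * g for g in generator(0.7)]   # ground truth: edge at 0.7, gain 2
theta, gain, stage1_loss, final_loss = optimize(target)
```

In this toy, pre-optimization alone cannot move the edge, so a large residual remains. The proxy stage pulls θ toward the target edge but leaves the gain slightly off, because the sigmoid is only an approximation of the hard edge. The final stage removes that bias by fine-tuning the gain against the original generator.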

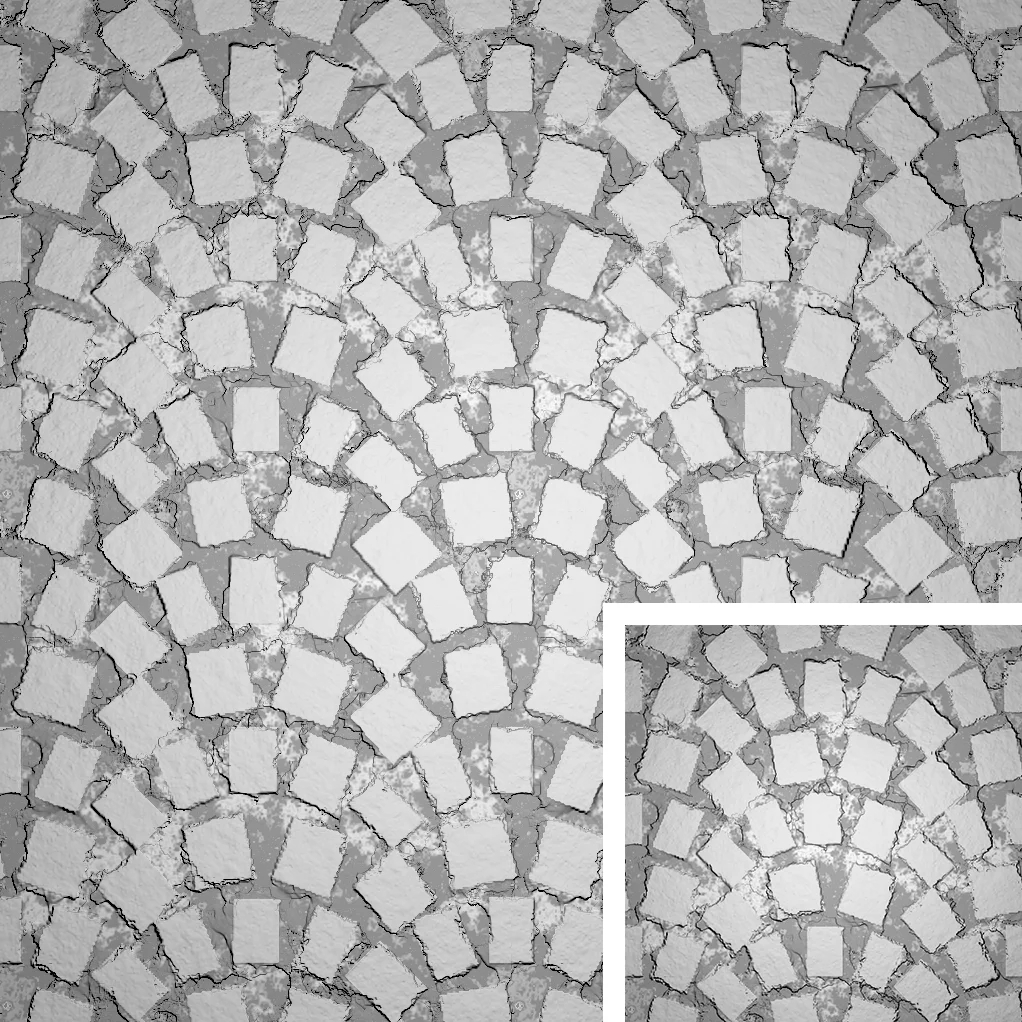

Experiments on a variety of materials (brick, leather, pavement, scratched potato skin, etc.) demonstrate that the DP‑enabled method outperforms MATCH both qualitatively and quantitatively. Metrics such as L₂ distance and SSIM improve by 15‑25 % on average, and the method succeeds on cases where MATCH fails entirely (e.g., matching stochastic scratch patterns). Moreover, the total optimization time is reduced by roughly 30 % thanks to the smoother loss landscape provided by the differentiable proxies.

The authors acknowledge limitations: the need to train a separate proxy for each generator type incurs an upfront cost, and extremely complex or physics‑based generators may be difficult to approximate accurately. Future directions include meta‑learning to reuse proxies across generators, ensemble proxies for better generalization, and integrating the approach into interactive material authoring tools for real‑time feedback.

In summary, the paper presents a novel, fully differentiable framework for procedural material graph optimization by introducing neural differentiable proxies for non‑differentiable nodes, a carefully designed loss function, and a three‑stage optimization strategy. This enables joint optimization of structure and appearance, reduces manual effort, and opens new possibilities for automated, high‑quality material creation in graphics pipelines.
