Outsourcing Computation: the Minimal Refereed Mechanism

We consider a setting where a verifier with limited computation power delegates a resource-intensive computation task, which requires a $T\times S$ computation tableau, to two provers. The provers are rational in that each prover maximizes their own payoff, taking into account losses incurred by the cost of computation. We design a mechanism called the Minimal Refereed Mechanism (MRM) such that if the verifier has $O(\log S + \log T)$ time and $O(\log S + \log T)$ space computation power, then both provers will provide an honest result without the verifier putting any effort into verifying the results. The amount of computation required for the provers (and thus the cost) is a multiplicative $\log S$-factor more than the computation itself, making this scheme efficient especially for low-space computations.

💡 Research Summary

The paper addresses the problem of outsourcing a computation that requires a T × S tableau to two rational provers while the verifier has only logarithmic resources. The authors propose the Minimal Refereed Mechanism (MRM), a game‑theoretic protocol that guarantees honest computation without the verifier performing any costly verification.

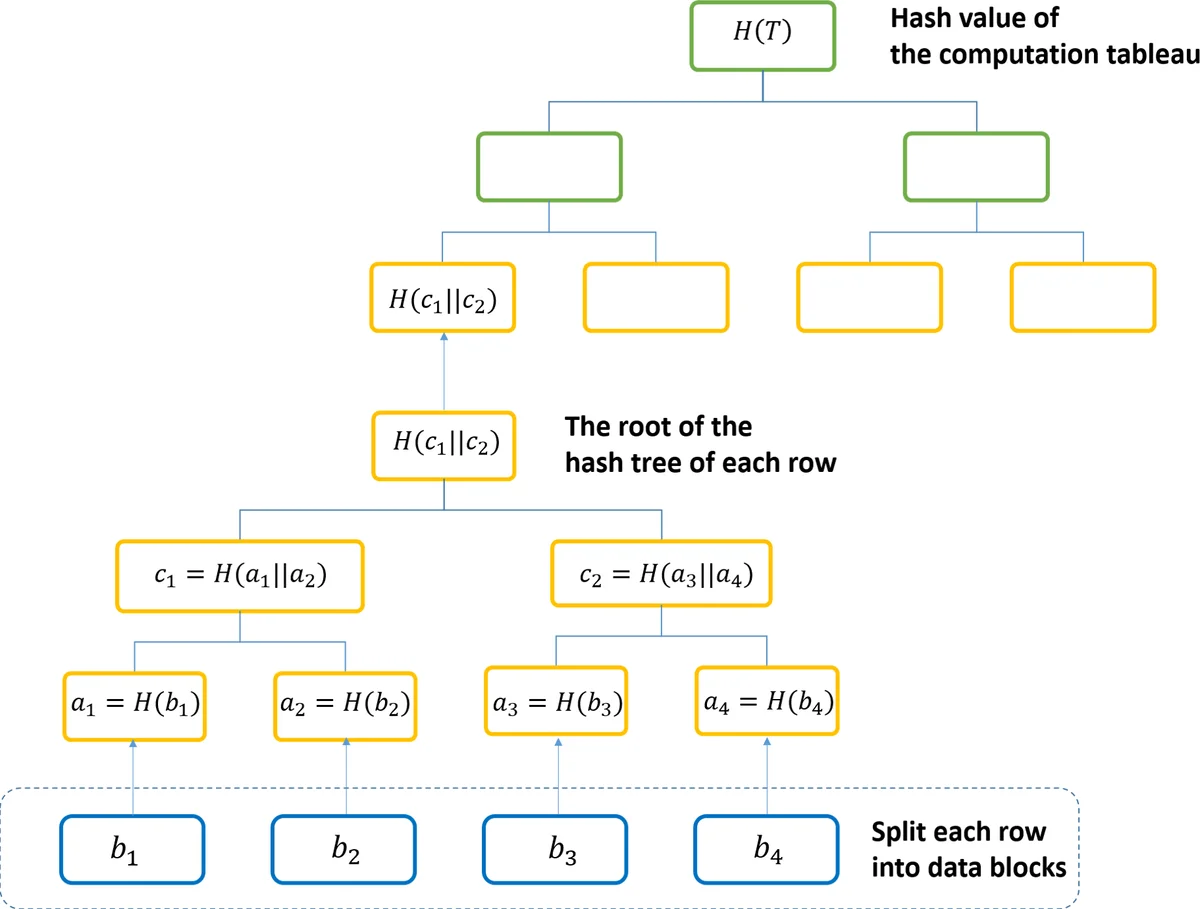

In MRM the verifier sends the program to two agents, Alice and Bob, and asks each to execute it, construct a Merkle tree over the entire computation table, and return the root hash. The verifier first checks whether the two roots match. If they do, the verifier pays both agents a modest reward and finishes; this step costs only O(log S + log T) time and space because the verifier never inspects the full table.
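The commit-and-compare step can be sketched as follows. This is a minimal illustration, not the paper's construction: SHA-256 as the hash, the row-by-row flattening of the tableau, the leaf/node prefix bytes, the duplicate-last-node padding rule, and all function names are assumptions made for the example.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 stands in for the collision-resistant hash (an assumption)."""
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a binary Merkle tree; an odd-sized level duplicates its last node."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(b"\x00" + leaf) for leaf in leaves]  # prefix separates leaves from inner nodes
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def tableau_root(tableau: list[list[bytes]]) -> bytes:
    """Flatten the T x S tableau row by row and commit to every cell."""
    return merkle_root([cell for row in tableau for cell in row])


def roots_agree(root_a: bytes, root_b: bytes) -> bool:
    """The verifier's only work when the provers agree: compare two digests."""
    return root_a == root_b


# Toy tableau with T = 4 rows and S = 2 cells per row (hypothetical data).
alice = [[bytes([t, s]) for s in range(2)] for t in range(4)]
bob = [row[:] for row in alice]
print(roots_agree(tableau_root(alice), tableau_root(bob)))  # identical tableaux

bob[3][1] = b"\xff\xff"  # Bob deviates in a single cell
print(roots_agree(tableau_root(alice), tableau_root(bob)))  # the roots now differ
```

The verifier in this sketch only ever touches two fixed-size digests, which is why the agreement path does not depend on the size of the tableau.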

If the roots differ, the verifier initiates a logarithmic‑size arbitration phase. It randomly selects a row and a block within that row and asks the corresponding prover to provide the Merkle path from the leaf (the block) to the root. Since the path length is O(log S + log T), the verifier can verify consistency in the same logarithmic time. The prover who supplies a consistent path is deemed honest and receives a large reward, while the other receives a small (or zero) payment.
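The path check at the heart of the arbitration phase might look like the sketch below. It covers only the verification of one Merkle path against a committed root, not the full dispute-resolution logic, which the summary does not spell out. The hash choice (SHA-256), the cell indexing `row * S + block`, and the helper names `build_levels`, `merkle_path`, and `verify_path` are all assumptions for illustration.

```python
import hashlib
import random


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Prover side: every level of the tree, from hashed leaves up to the root."""
    level = [h(b"\x00" + leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # pad odd levels by duplicating the last node
            levels[-1] = level
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def merkle_path(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Prover side: sibling hashes from the chosen leaf up to (not including) the root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])  # sibling sits at the neighbouring index
        index //= 2
    return path


def verify_path(leaf: bytes, index: int, path: list[bytes], root: bytes) -> bool:
    """Verifier side: recompute the root using one hash per tree level."""
    node = h(b"\x00" + leaf)
    for sibling in path:
        if index % 2 == 0:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
        index //= 2
    return node == root


# Toy tableau: T = 4 rows, S = 2 blocks per row (hypothetical data).
T, S = 4, 2
leaves = [bytes([t, s]) for t in range(T) for s in range(S)]
levels = build_levels(leaves)
root = levels[-1][0]

row, block = random.randrange(T), random.randrange(S)  # verifier's random challenge
index = row * S + block
path = merkle_path(levels, index)
print(len(path), verify_path(leaves[index], index, path, root))
```

With 8 leaves the path holds 3 sibling hashes, so the verifier's work grows with the depth of the tree rather than with the number of cells.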

The incentive structure is modeled after the Prisoner’s Dilemma: both provers would like to collude by outputting the same (possibly false) result to obtain the modest reward, but the mechanism makes betrayal highly profitable. The authors prove that, under standard cryptographic assumptions (collision‑resistant hash functions) and assuming the provers cannot make binding side‑contracts, the unique Bayesian Nash equilibrium is for both provers to compute the function correctly and submit honest Merkle roots. The equilibrium is individually rational: each prover’s expected utility (payment minus computational cost) is positive, even if the other prover behaves irrationally.
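The Prisoner's Dilemma flavour of the argument can be illustrated with a toy two-strategy game. This is not the paper's payment rule: the payoff model, the symbols `r` (modest reward on agreement), `R` (large reward for winning a dispute), and `c` (cost of computing), and the assumption that a colluding prover pays no computation cost are all hypothetical simplifications.

```python
def payoff(me: str, other: str, r: float, R: float, c: float) -> float:
    """Toy payoff for one prover; strategies are 'honest' or 'collude'.

    Assumed model (not from the paper): matching roots pay r to each prover,
    a dispute pays R to the honest prover and 0 to the other, and only
    honest computation costs c.
    """
    if me == other:
        return r - (c if me == "honest" else 0.0)
    if me == "honest":
        return R - c  # wins the dispute against a colluding prover
    return 0.0        # loses the dispute against an honest prover


def honest_is_dominant(r: float, R: float, c: float) -> bool:
    """True when 'honest' strictly beats 'collude' against either opponent strategy."""
    return all(
        payoff("honest", other, r, R, c) > payoff("collude", other, r, R, c)
        for other in ("honest", "collude")
    )


print(honest_is_dominant(r=2.0, R=10.0, c=1.0))  # large dispute reward
print(honest_is_dominant(r=2.0, R=2.5, c=1.0))   # dispute reward too small
```

In this toy model honesty is dominant exactly when `r > c` and `R - c > r`, that is, when honest work is individually rational and betraying a collusion pays more than staying in it.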

From a complexity perspective, the verifier’s work is reduced from Θ(T·S) to Θ(log S + log T). Each prover’s total work is a multiplicative log S factor more than the computation itself; the overhead comes from hashing each row and building the Merkle tree. By comparison, classical interactive proof systems often require the prover to perform heavy arithmetization or the verifier to run linear‑time checks.
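To give a sense of scale, here is a worked example with hypothetical sizes, assuming a binary Merkle tree with one leaf per tableau cell (the paper's exact tree layout may differ):

$$
T = 2^{30},\quad S = 2^{10}
\;\Longrightarrow\;
T \cdot S = 2^{40} \approx 1.1 \times 10^{12} \text{ cells},
\qquad
\log_2 (T \cdot S) = \log_2 T + \log_2 S = 30 + 10 = 40 \text{ hashes per path}.
$$

Under these assumptions the verifier checks about 40 hashes in a dispute, while each prover's total work is on the order of $T \cdot S \cdot \log_2 S = 2^{40} \cdot 10$ steps.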

The paper also discusses extensions: when the verifier has many independent tasks, it can assign the bulk of the work to one prover and use the second prover only for occasional consistency checks, cutting the provers’ overhead by roughly a factor of two.

Related work is surveyed comprehensively. Prior outsourced‑computation schemes (e.g., Belenkiy et al., Dong et al.) either require the verifier to occasionally recompute the whole task or do not exploit rationality. Canetti et al. introduced Merkle‑tree based verification but assumed at least one honest prover. Azar and Micali’s scoring‑rule mechanisms ignore the cost of producing exact answers. The authors argue that MRM uniquely combines low‑cost verification with a rational‑prover incentive model.

Limitations are acknowledged: the current design handles exactly two provers; scaling to many provers would need a more intricate reward scheme. Security relies on classical collision‑resistant hashes, so post‑quantum alternatives may be required for future deployments. Implementing the mechanism on smart‑contract platforms (e.g., Ethereum) would require careful gas‑cost analysis and automated dispute resolution.

In conclusion, the Minimal Refereed Mechanism provides a theoretically sound and practically efficient framework for verifiable outsourced computation when the verifier’s resources are severely limited. By leveraging Merkle trees for succinct integrity proofs and a game‑theoretic reward structure that makes honesty the only equilibrium, the protocol keeps verification cost logarithmic, O(log S + log T), while limiting the provers’ overhead to a multiplicative log S factor. This makes MRM especially attractive for low‑space, high‑complexity tasks such as dynamic programming on large tables, streaming algorithms, or any setting where the computation can be expressed as a T × S tableau.
