Predicting Network Controllability Robustness: A Convolutional Neural Network Approach

Network controllability measures how well a networked system can be controlled to a target state, and its robustness reflects how well the system can maintain the controllability against malicious attacks by means of node removals or edge removals. The measure of network controllability is quantified by the number of external control inputs needed to recover or to retain the controllability after the occurrence of an unexpected attack. The measure of the network controllability robustness, on the other hand, is quantified by a sequence of values that record the remaining controllability of the network after a sequence of attacks. Traditionally, the controllability robustness is determined by attack simulations, which are computationally time-consuming. In this paper, a method to predict the controllability robustness based on machine learning using a convolutional neural network is proposed, motivated by the observations that 1) there is no clear correlation between the topological features and the controllability robustness of a general network, 2) the adjacency matrix of a network can be regarded as a gray-scale image, and 3) the convolutional neural network technique has proved successful in image processing without human intervention. Under the new framework, a fairly large amount of training data generated by simulations is used to train a convolutional neural network for predicting the controllability robustness according to the input network-adjacency matrices, without performing conventional attack simulations. Extensive experimental studies were carried out, which demonstrate that the proposed framework for predicting controllability robustness of different network configurations is accurate and reliable with very low overheads.

Research Summary

The paper addresses the problem of evaluating the robustness of network controllability—a measure of how well a complex system can retain its ability to be driven to any desired state after malicious attacks such as node or edge removals. Traditional approaches rely on exhaustive attack simulations to generate a “controllability curve,” i.e., a sequence of driver‑node fractions (n_D) recorded after each removal step. While accurate, these simulations are computationally prohibitive for large‑scale networks.
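To make the cost of the conventional approach concrete, the following is a minimal sketch of a brute-force attack simulation that produces a controllability curve. It assumes the exact-controllability estimate N_D = max(1, N − rank(A)) and normalizes by the number of surviving nodes; both choices, as well as the function names `driver_node_fraction` and `controllability_curve`, are assumptions made for illustration and are not taken from the summary.

```python
import numpy as np


def driver_node_fraction(adj):
    """Fraction of driver nodes, assuming N_D = max(1, N - rank(A))."""
    adj = np.asarray(adj, dtype=np.float64)
    n = adj.shape[0]
    if n == 0:
        raise ValueError("network must contain at least one node")
    rank = int(np.linalg.matrix_rank(adj))
    return max(1, n - rank) / n


def controllability_curve(adj, removal_order):
    """Record the driver-node fraction after each node removal.

    `removal_order` lists node indices in the order they are attacked.
    """
    adj = np.asarray(adj, dtype=np.float64)
    alive = np.ones(adj.shape[0], dtype=bool)
    curve = []
    for node in removal_order:
        alive[node] = False
        # Keep only rows and columns of the surviving nodes.
        remaining = adj[np.ix_(alive, alive)]
        curve.append(float(driver_node_fraction(remaining)))
    return curve


# Directed path 0 -> 1 -> 2: removing the middle node disconnects it.
path = np.array([[0, 1, 0],
                 [0, 0, 1],
                 [0, 0, 0]])
print(controllability_curve(path, removal_order=[1, 0]))
```

Each step in this sketch needs a fresh rank computation on the surviving subgraph, which is why repeating it over many removals, networks, and attack strategies becomes expensive for large N.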

Motivated by three observations, the authors propose a machine‑learning framework that bypasses explicit simulations. First, conventional topological descriptors (average degree, clustering, modularity, etc.) show little correlation with controllability robustness for generic networks. Second, the adjacency matrix of a network can be interpreted as a gray‑scale image (binary for unweighted graphs, continuous for weighted graphs). Third, convolutional neural networks (CNNs) have demonstrated powerful feature extraction directly from raw pixel data without handcrafted features.

The methodology proceeds as follows. Each network’s adjacency matrix A (size N×N) is transformed into an image: 0‑1 entries become black/white pixels for unweighted graphs, while real‑valued entries are normalized to gray levels for weighted graphs. Because many real networks are sparse (≤5 % non‑zero entries), the authors introduce an embedding layer that multiplies A by a randomly initialized dense matrix D, yielding a dense representation A′ = A·D that is more amenable to deep learning.

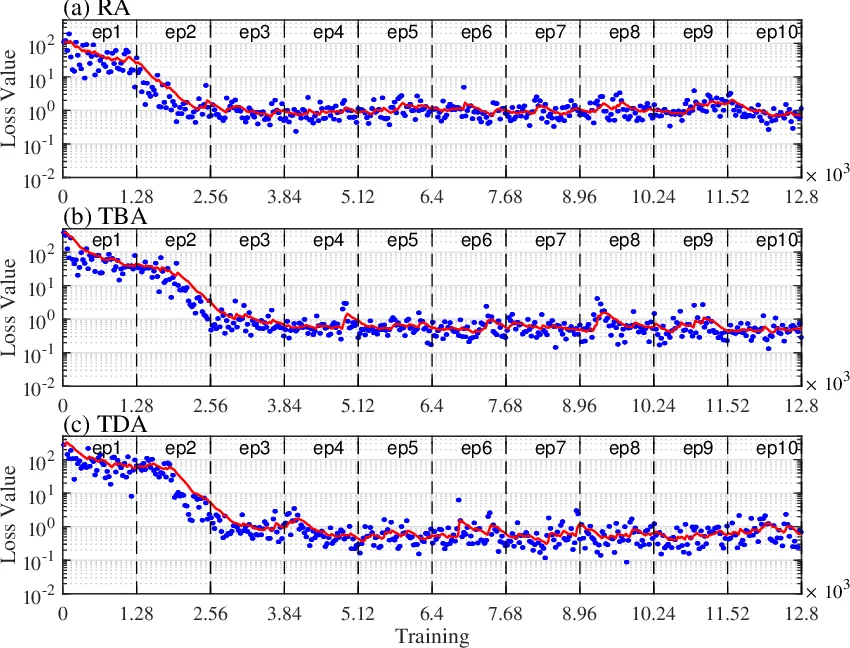
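The preprocessing described above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' code: min-max scaling is assumed for the gray-level normalization, the matrix D is drawn once from a standard normal distribution instead of being learned, and the helper names `adjacency_to_image` and `embed` are hypothetical.

```python
import numpy as np


def adjacency_to_image(adj):
    """Scale adjacency entries to gray levels in [0, 1] (min-max, assumed)."""
    adj = np.asarray(adj, dtype=np.float64)
    lo, hi = adj.min(), adj.max()
    if hi == lo:
        # A constant matrix carries no contrast; map it to all black.
        return np.zeros_like(adj)
    return (adj - lo) / (hi - lo)


def embed(image, dense):
    """Dense representation A' = A . D of a sparse adjacency image."""
    return image @ dense


rng = np.random.default_rng(0)
n = 200
# Sparse unweighted directed graph with roughly 3% non-zero entries.
adj = (rng.random((n, n)) < 0.03).astype(np.float64)
np.fill_diagonal(adj, 0.0)

image = adjacency_to_image(adj)
dense = rng.standard_normal((n, n))  # hypothetical initialization of D
embedded = embed(image, dense)

density_before = np.count_nonzero(image) / image.size
density_after = np.count_nonzero(embedded) / embedded.size
print(f"non-zero fraction: {density_before:.3f} -> {density_after:.3f}")
```

In a trained model D would be updated together with the convolutional weights; the fixed random matrix here only shows how the product fills in the mostly empty input.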

A VGG‑style CNN is then employed. The architecture consists of an embedding layer followed by seven groups of convolution‑ReLU‑max‑pooling blocks (with kernel sizes ranging from 7×7 to 3×3 and increasing channel depths up to 512), and finally two fully‑connected layers that output a vector of length N‑1, representing the predicted driver‑node fraction after each of the N‑1 possible node removals. The network is trained in a supervised regression setting using a large dataset generated by conventional simulations.
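A quick way to sanity-check such an architecture is to trace how the spatial size shrinks through the seven groups. The sketch below assumes size-preserving ("same") convolutions and 2×2 max pooling with stride 2 and floor rounding; the per-group channel counts in `CHANNELS` are hypothetical, since the summary states only that depths increase up to 512.

```python
def feature_map_sizes(n, num_groups=7, pool=2):
    """Side length of the feature map after each conv-ReLU-pool group.

    Assumes the convolutions preserve size and each pooling step
    divides the side length by `pool`, rounding down.
    """
    sizes = [n]
    for _ in range(num_groups):
        sizes.append(sizes[-1] // pool)
    return sizes


# Hypothetical channel depths per group, ending at 512.
CHANNELS = [64, 64, 128, 128, 256, 256, 512]


def flattened_features(n, channels=CHANNELS):
    """Number of values fed into the first fully connected layer."""
    side = feature_map_sizes(n, num_groups=len(channels))[-1]
    return side * side * channels[-1]


def output_length(n):
    """One predicted driver-node fraction per removal step."""
    return n - 1


print(feature_map_sizes(1000))   # side lengths for N = 1000
print(flattened_features(1000))  # inputs to the fully connected layers
print(output_length(1000))       # length of the predicted curve
```

Under these assumptions a 1000×1000 input is reduced to a 7×7 map before the fully connected layers, which then regress the 999 curve values.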

Experimental evaluation covers four canonical graph models, Erdős‑Rényi (ER), Scale‑Free (SF), Small‑World (SW), and the q‑snapback network (QSN), each instantiated with N = 1000 nodes. Five attack strategies are examined: random node removal, removal of highest‑degree nodes, upstream/downstream attacks (targeting hierarchical neighbors), and weighted‑edge removal. For each combination, 10,000 network‑attack pairs are simulated to create training data; a separate test set assesses generalization.
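As an illustration of how one network-attack pair might be set up, the sketch below generates a directed ER graph and derives a highest-degree removal order. The edge probability, the use of total (in plus out) degree, and the choice to recompute degrees after every removal are assumptions of this example, and the function names are hypothetical.

```python
import numpy as np


def random_directed_graph(n, p, rng):
    """Directed Erdos-Renyi adjacency matrix without self-loops."""
    adj = (rng.random((n, n)) < p).astype(np.int64)
    np.fill_diagonal(adj, 0)
    return adj


def degree_attack_order(adj):
    """Order in which a highest-degree attack removes N - 1 nodes.

    Degrees are recomputed after every removal (an assumption); ties
    are broken by the lowest node index.
    """
    adj = np.array(adj, dtype=np.int64)  # work on a copy
    n = adj.shape[0]
    alive = np.ones(n, dtype=bool)
    order = []
    for _ in range(n - 1):
        degree = adj.sum(axis=0) + adj.sum(axis=1)  # in-degree + out-degree
        degree[~alive] = -1  # never pick an already removed node
        target = int(np.argmax(degree))
        order.append(target)
        alive[target] = False
        adj[target, :] = 0
        adj[:, target] = 0
    return order


rng = np.random.default_rng(42)
graph = random_directed_graph(50, 0.1, rng)
order = degree_attack_order(graph)
print(order[:5])
```

A random attack would simply replace `degree_attack_order` with a shuffled list of node indices, for example via `rng.permutation`.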

Results show that the CNN predicts the controllability curve with high fidelity: mean absolute error (MAE) below 0.02 and coefficient of determination (R²) exceeding 0.95 across all scenarios. Prediction time is dramatically reduced—on a standard CPU the model produces a full curve in a few seconds, and on a GPU in a few hundred milliseconds—compared to hours required for exhaustive Monte‑Carlo simulations. The authors also demonstrate that models based solely on handcrafted topological features fail to capture the robustness patterns, confirming the advantage of image‑based deep learning.
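For reference, the two reported metrics can be computed as in the sketch below. It uses the standard definitions of MAE and R² applied to a predicted curve and a simulated one; how the paper aggregates these values across networks is not stated in the summary, so treating each curve as a flat array is an assumption.

```python
import numpy as np


def mean_absolute_error(y_true, y_pred):
    """Average absolute gap between simulated and predicted values."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.asarray(y_pred, dtype=np.float64)
    return float(np.mean(np.abs(y_true - y_pred)))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.asarray(y_pred, dtype=np.float64)
    ss_res = float(np.sum((y_true - y_pred) ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    if ss_tot == 0.0:
        raise ValueError("R^2 is undefined when y_true is constant")
    return 1.0 - ss_res / ss_tot


# Toy curves, not data from the paper.
simulated = [1.0, 2.0, 3.0]
predicted = [1.0, 2.0, 4.0]
print(mean_absolute_error(simulated, predicted))
print(r_squared(simulated, predicted))
```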

Limitations are acknowledged: the current study focuses exclusively on node‑removal attacks; extensions to edge‑removal, combined attacks, and dynamic networks are left for future work. Nonetheless, the proposed framework offers a scalable, accurate, and low‑overhead tool for assessing controllability robustness, with potential applications in power‑grid security, cyber‑physical system resilience, and real‑time network monitoring.
