Sex and Gender in the Computer Graphics Research Literature

💡 Research Summary

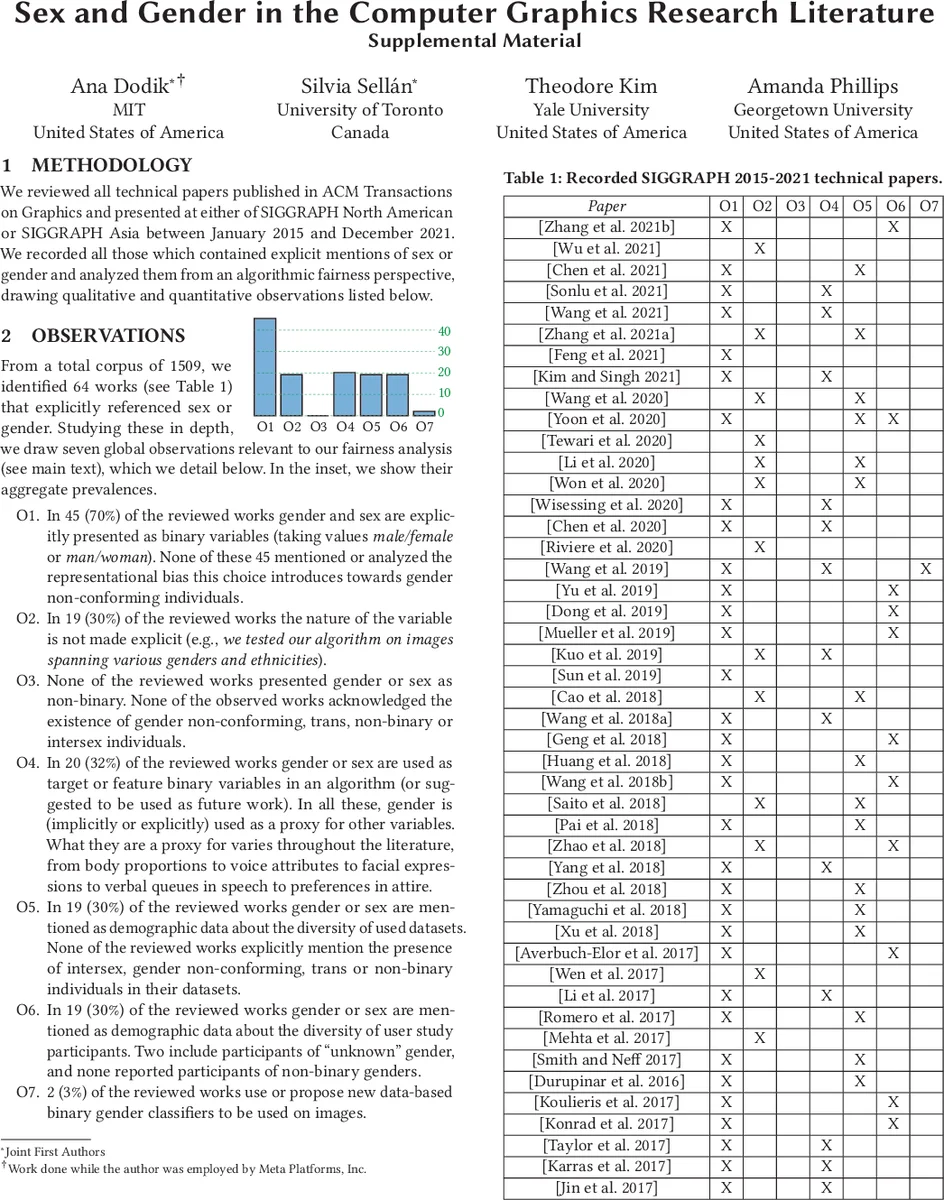

The paper “Sex and Gender in the Computer Graphics Research Literature” conducts a systematic survey of all technical papers presented at SIGGRAPH since 2015 that mention the terms “sex” or “gender.” The authors collected 64 such mentions and organized their observations into seven categories (O1‑O7). Their core finding is that the computer graphics community consistently treats sex and gender as binary variables (male/female) across datasets, user studies, and algorithmic design, completely ignoring non‑binary and gender‑nonconforming individuals.

Using the algorithmic fairness framework of Suresh and Guttag (2021), the authors map these practices onto six sources of bias across the machine-learning lifecycle: representation bias, historical bias, measurement bias, omitted-variable bias, evaluation bias, and deployment bias. Representation bias arises because datasets either exclude non-binary participants or employ uniform sampling that does not reflect real-world gender diversity. Historical bias is evident when models learn stereotypical associations (e.g., “dress = woman,” “short hair = man”) from socially constructed training data. Measurement bias occurs when sex or gender is used as a proxy for concrete attributes such as body proportions or voice pitch, leading to inaccurate or misleading feature representations. Omitted-variable bias is observed when more direct physical features (hair length, hip width, fundamental frequency) are ignored in favor of the coarse binary gender label, obscuring the true source of performance gains. Evaluation bias is perpetuated by benchmarking against binary-gender models, which cements a biased standard across the field. Finally, deployment bias manifests in real products (virtual try-on systems, voice assistants, virtual reality avatars) where binary gender assumptions exclude or misrepresent gender-nonconforming users, potentially forcing them to alter their appearance or speech to avoid misclassification.
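As a rough illustration of how representation bias could be checked in practice, the sketch below tallies self-reported gender labels in a participant list and flags expected categories that are absent or fall under a minimum share. The function names, the label vocabulary, and the 5% threshold are all hypothetical choices made for this example; none of them come from the paper.

```python
from collections import Counter


def composition(labels):
    """Return the share of each self-reported label in the dataset."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def underrepresented(labels, expected, min_share=0.05):
    """List expected categories whose share is below min_share (assumed threshold)."""
    shares = composition(labels)
    return sorted(c for c in expected if shares.get(c, 0.0) < min_share)


# Toy participant list (made up for illustration): a "balanced" binary sample.
participants = ["woman"] * 10 + ["man"] * 10
expected_categories = {"woman", "man", "nonbinary"}

shares = composition(participants)
flagged = underrepresented(participants, expected_categories)
print(shares)
print(flagged)
```

A sample that looks balanced under a binary scheme still gets flagged here, which is the point the summary makes about uniform sampling: evenly split male/female counts say nothing about who was left out.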

The paper highlights concrete real-world harms. Representational harms include the reinforcement of stereotypes and the public humiliation of gender-nonconforming passengers by airport body scanners. Allocative harms arise when biased virtual fitting rooms fail for individuals with non-normative bodies, denying them a service that other users can access. Moreover, the systematic omission of gender-nonconforming participants from research creates an exclusionary academic environment, contradicting SIGGRAPH’s stated goals of inclusion, equity, access, and diversity.

To address these issues, the authors propose six actionable recommendations. First, data collection protocols should incorporate self-reported, non-binary gender options and explicitly document gender composition in dataset metadata. Second, algorithm designers should replace binary gender variables with more precise, directly measured attributes (e.g., hair length, facial geometry, acoustic features) whenever possible. Third, researchers should compute and report fairness metrics (e.g., demographic parity, equalized odds) and discuss potential harms in the limitations section, following recent surveys on bias and fairness in machine learning. Fourth, benchmark suites should be revised to include diverse gender representations, preventing the codification of biased standards. Fifth, before deployment, products should undergo user testing that includes gender-nonconforming participants to surface unintended consequences. Finally, the community should foster policy changes, such as conference guidelines that require explicit discussion of gender representation and bias mitigation, to shift cultural norms away from binary assumptions.
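The two fairness metrics named in the third recommendation can be sketched in a few lines. The example below is a minimal, dependency-free illustration, not the paper's method: demographic parity is measured as the largest difference in positive-prediction rate between groups, and equalized odds as the larger of the true-positive-rate and false-positive-rate gaps. The helper names and the toy labels are assumptions made for this example.

```python
from collections import defaultdict


def rate(flags):
    """Fraction of True values, or None when there are no samples."""
    return sum(flags) / len(flags) if flags else None


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true-positive rate, and false-positive rate."""
    buckets = defaultdict(lambda: {"pred": [], "pos": [], "neg": []})
    for t, p, g in zip(y_true, y_pred, groups):
        b = buckets[g]
        b["pred"].append(p == 1)
        # Split predictions by the true label to get TPR and FPR later.
        (b["pos"] if t == 1 else b["neg"]).append(p == 1)
    return {
        g: {"selection": rate(b["pred"]), "tpr": rate(b["pos"]), "fpr": rate(b["neg"])}
        for g, b in buckets.items()
    }


def gap(rates, key):
    """Largest between-group difference for one rate, skipping undefined values."""
    vals = [r[key] for r in rates.values() if r[key] is not None]
    return max(vals) - min(vals) if vals else None


# Toy data (made up): binary task outcomes with self-reported group labels.
y_true = [1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 1, 0, 0, 1, 0]
groups = ["woman"] * 4 + ["man"] * 4 + ["nonbinary"] * 4

rates = group_rates(y_true, y_pred, groups)
dp_gap = gap(rates, "selection")                 # demographic parity difference
eo_gap = max(gap(rates, "tpr"), gap(rates, "fpr"))  # equalized odds difference
print(dp_gap, eo_gap)
```

A gap of zero means the groups are treated identically under that metric. Note that computing these gaps at all presupposes that group labels were collected by self-report with non-binary options, which ties this recommendation back to the first one.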

In conclusion, the study demonstrates that the prevailing binary treatment of sex and gender in computer graphics research introduces systematic algorithmic bias throughout the research lifecycle. By exposing representation, historical, measurement, omitted‑variable, evaluation, and deployment biases, the paper makes a compelling case for integrating fairness considerations from the outset of any graphics project. Implementing the suggested practices will not only improve scientific rigor but also align the field with broader societal goals of inclusivity and equity, ultimately leading to more robust, generalizable, and socially responsible graphics technologies.
