Stabilizing a linear system using phone calls: when time is information

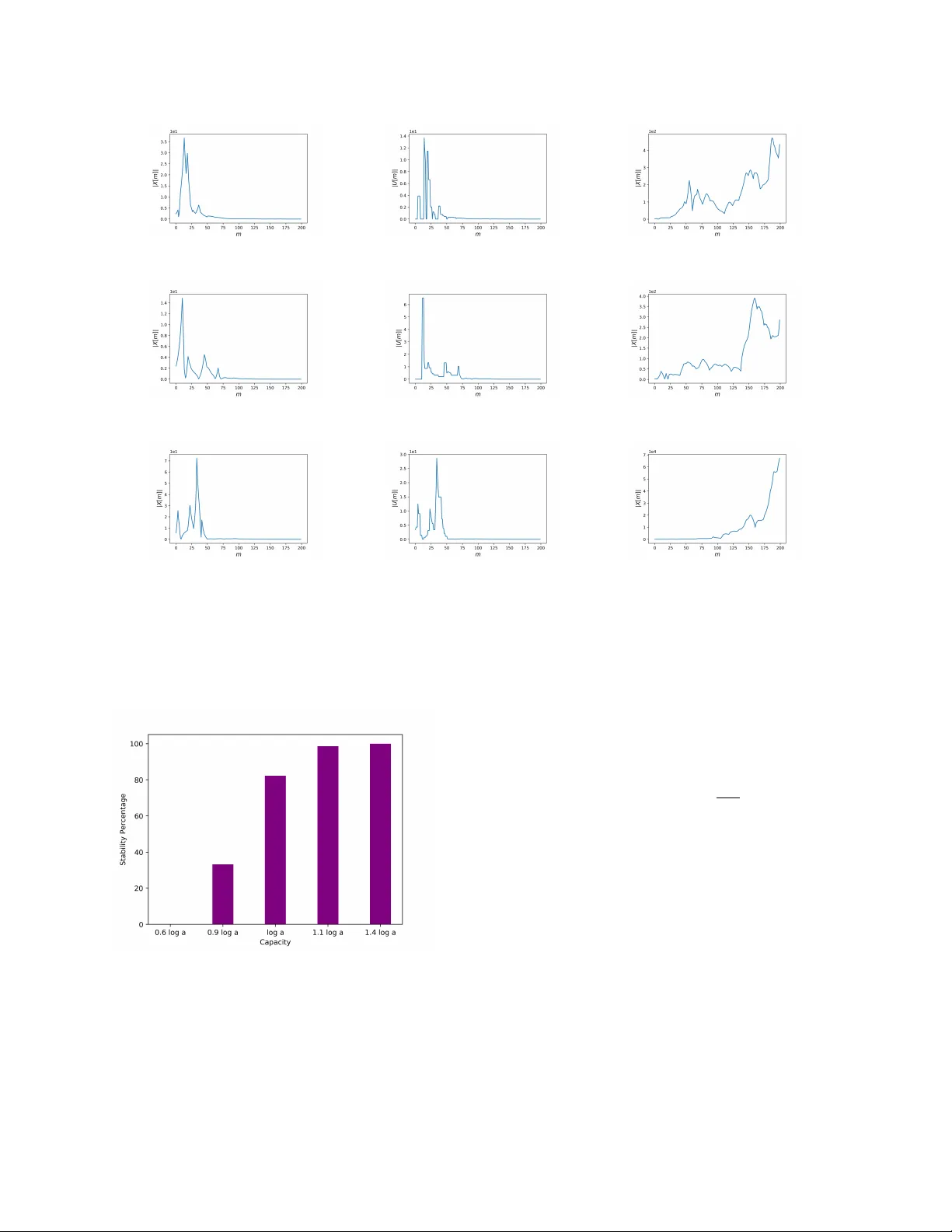

We consider the problem of stabilizing an undisturbed, scalar, linear system over a "timing" channel, namely a channel where information is communicated through the timestamps of the transmitted symbols. Each symbol transmitted from a sensor to a con…

Authors: Mohammad Javad Khojasteh, Massimo Franceschetti, Gireeja Ranade