Searching for Apparel Products from Images in the Wild

In this age of social media, people often look at what others are wearing. In particular, Instagram and Twitter influencers often provide images of themselves wearing different outfits and their followers are often inspired to buy similar clothes. We …

Authors: Son Tran, Ming Du, Sampath Ch

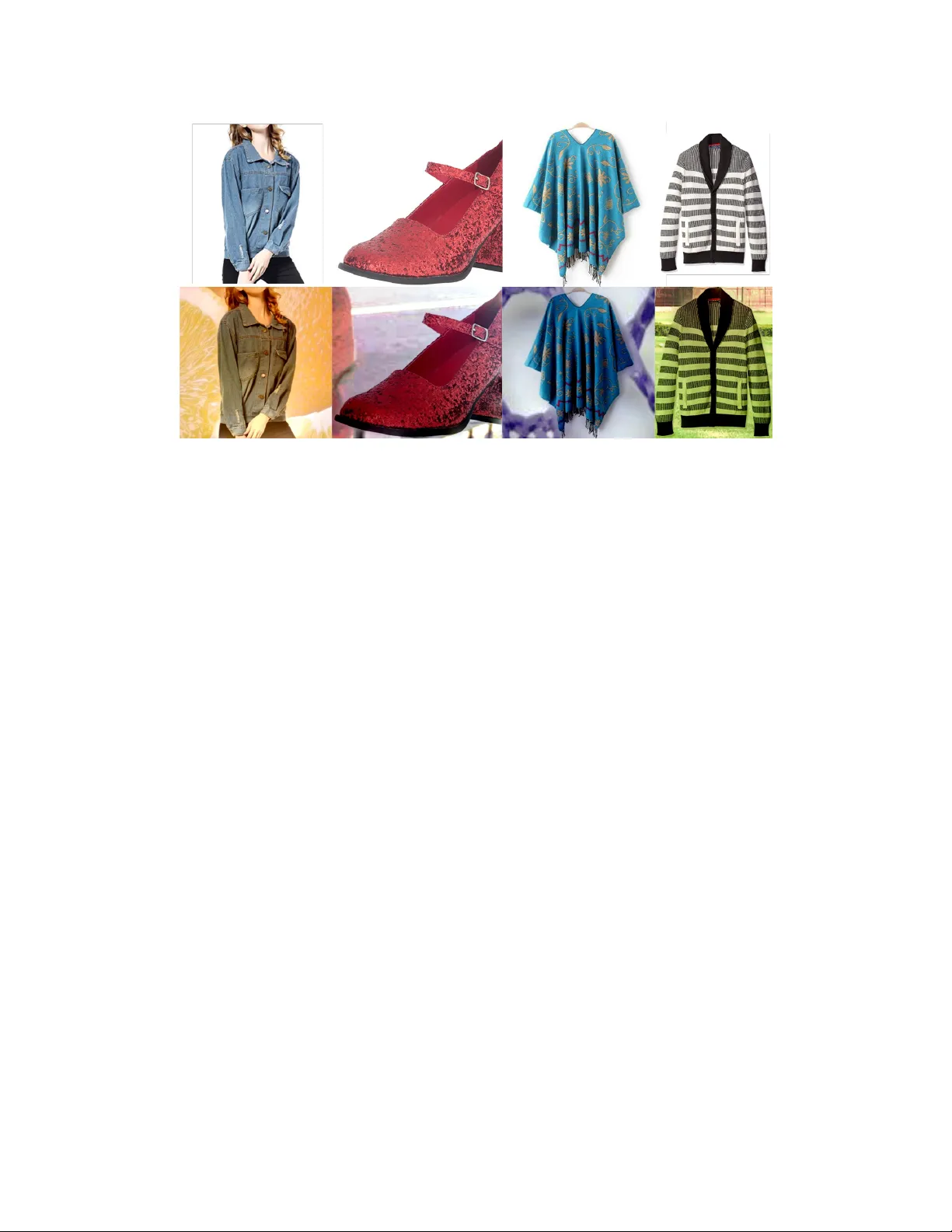

SON TRAN, MING DU, SAMPATH CHANDA, R. MANMATHA, and CJ TAYLOR, Visual Search and AR Group, Amazon

In this age of social media, people often look at what others are wearing. In particular, Instagram and Twitter influencers often provide images of themselves wearing different outfits and their followers are often inspired to buy similar clothes. We propose a system to automatically find the closest visually similar clothes in the online Catalog (street-to-shop searching). The problem is challenging since the original images are taken under different pose and lighting conditions. The system initially localizes high-level descriptive regions (top, bottom, wristwear, ...) using multiple CNN detectors such as YOLO and SSD that are trained specifically for the apparel domain. It then classifies these regions into more specific categories such as t-shirts, tunics or dresses. Finally, a feature embedding learned using a multi-task function is recovered for every item and then compared with corresponding items in the online Catalog database and ranked according to distance. We validate our approach component-wise using benchmark datasets and end-to-end using human evaluation.

Additional Key Words and Phrases: object localization, object recognition, fashion images, deep neural networks

1 INTRODUCTION

People who want to look for fashionable clothes today can look for inspiration to social media, specifically influencers on sites such as Instagram and Twitter. Influencers post images of themselves wearing different outfits in the wild (see Figure 1.a). Pose and background are unconstrained and body parts may also be occluded. Items can be layered, such as a person wearing a jacket on top of a shirt. All of these lead to challenges both in detecting what people are wearing and in searching for similar items in the online Catalog. This paper describes our proposed approach to the problem.
First, we localize regions of the image at a high level. We find tops rather than shirts or blouses or jackets, and we find bottoms rather than pants or skirts. This allows us flexibility given the large range of possible types of tops or bottoms or the other kinds of regions we find. This localization is done using a YOLOv3 ([17]) and an SSD ([12]) model trained on apparel categories. We show that the way we obtain and train on these images matters, by comparing results to those obtained using the OpenImages dataset ([11]). At this juncture, we also determine gender and coarse age (man, woman, boy or girl) to restrict the corresponding clothes when searching in the database.

Using a hierarchical classifier, we break the results into finer classes; e.g., a top may be a dress shirt or a T-shirt or a blouse. We use a CNN with a multi-task loss function that also takes into consideration other attributes, such as color, pattern, sleeve length and so on. The output of the last layer before the softmax is then used as a feature vector for the similarity computation. We perform the same feature extraction on the query image and on the catalog database images. The feature vectors are then compared and ranked. To improve the consistency of our results, the comparisons are restricted to those which are in the same finer class (e.g., T-shirt or blouse). We present results on the individual components (localization) and also on the overall ranking and compare them with baselines.

Authors' address: Son Tran, sontran@amazon.com; Ming Du, mingdu@amazon.com; Sampath Chanda, csampat@amazon.com; R. Manmatha, manmatha@amazon.com; CJ Taylor, taylorcj@amazon.com, Visual Search and AR Group, Amazon, 130 Lytton, Palo Alto, CA, 94301.

The layout of the paper is as follows: related work is reviewed in section 2. Section 3 describes the major components of our pipeline. The details of the data collection and model training process are in section 4.
Our experimental results are reported in section 5 and we provide conclusions in section 6.

2 RELATED WORK

Localization

We review here a number of main academic approaches for localizing apparel items. In [13], deep CNNs were trained to predict a set of fashion landmarks, such as shoulder points or neck points. However, landmarks for clothes are sometimes not well defined and are often sensitive to occlusions. Human pose estimation ([1]) could be used as a means to infer the locations of apparel items. One drawback is that it is not applicable if people are not present in the image. Localization could also be carried out through clothes parsing and (semantic) segmentation such as LIP ([5]). While the performance has been promising on standard datasets, it is more computationally expensive and the recall was unsatisfactory in the initial evaluation on our targeted datasets (e.g. fashion influencer images). For simplicity and scalability, in this work, we localize apparel items using bounding boxes. Top performing multi-box object detectors such as SSD ([12]) and YOLOv3 ([17]), with different network bodies and different resolutions, are used both for run-time queries and for offline index construction.

Classification

Recognizing the product type accurately for apparel is of critical importance in finding similar items from the catalog. In the literature, a number of different classifications have been adopted for clothing items. This is partially a function of what the labeled input datasets provide ([13], [25]). Most of these papers limit themselves to a small number of classes (fewer than 60 classes, see, e.g., [26]), with many high-level classes containing highly dissimilar objects (i.e., different product types). In this work, we create a fine-grained classification of 146 classes. Our fine-grained classes match well to the product-type levels in the online Catalog (for men's and women's clothing) and are typically visually distinctive.
Additionally, since we use the Catalog as the search database, matching its hierarchy also greatly facilitates the later indexing and retrieval stages.

Visual Similarity Search

Visual similarity search can be done by searching for the nearest neighbors to an embedding extracted from certain intermediate layer(s) in a deep neural network trained for surrogate tasks (see [26], [24], [21] and [23]). The deep network can be trained with cross entropy loss (classification), contrastive loss (pairs), triplet loss ([19], [24], ...) or quadruplet loss ([2]). There seems to be no clear winner between these options (see e.g. [21] and [7]). Obtaining the highest accuracy seems to depend on careful sampling and tuning strategies for the specific problem (see [23]). Our approach in this paper, in contrast to parallel efforts in our team, is to directly use the embedding feature extracted from the fine-grained classification network, thus avoiding the need for a separate CNN to find an embedding feature. This also helps simplify the engineering effort and reduce run-time latency.

Fig. 1. Searching for clothing items from images

Vol. 1, No. 1, Article . Publication date: April 2022.

3 SYSTEM AND MODEL ARCHITECTURE

In this section, the three main components of our system (localization, recognition, and visual similarity search) are first described. This is followed by a description of the overall system and the end-to-end interaction between the components.

Localization

One could design a detector that detects object/no-object and passes the detected bounding boxes to downstream classifiers for further classification ([4]). The disadvantage of separating the localization of an object and its classification in this manner is that spatial context is lost, reducing accuracy. On the other hand, one could try to identify all the fine-grained categories at the detection stage.
This would require a very large amount of detailed bounding box annotation to reasonably cover all categories, which is very expensive. (We have found that, with a division into 13 high-level classes, a well-trained annotator takes on average three minutes to complete the bounding box annotation of one image.) We strike a middle ground by grouping items of the same type that often also appear in similar locations (e.g. jacket, coat, t-shirt) into a high-level class (e.g. top). Our complete list of top-level detection classes for apparel items is as follows: headwear, eyewear, earring, belt, bottom, dress, top, suit, tie, footwear, swimsuit, bag, wristwear, scarf, necklace and one-piece.

In the same setting, different object detectors usually have different strengths with respect to object sizes and scales ([8]). To boost recall for offline processes, such as index building, we use an array of multi-box object detectors operating at different image resolutions: SSD-512 Resnet50 ([12]), and YOLOv3 Darknet53 at 300 and 416 ([17]). Their results are combined using non-maximum suppression. At inference time, we use SSD-512 with a VGG backbone for real-time response.

Fine-Grained Product Type Classification and Feature Extraction

We build one fine-grained classifier for each of the high-level classes in the previous section. For example, the top classifier will try to classify all detected top bounding boxes into one of 33 product types such as denim jacket, tunic, blouse, vest, ... Taking run-time into consideration, we chose Resnet18 ([6]) as the backbone for all fine-grained classifiers. To better exploit all available supervised signals in our training sets, we extended the network to perform multi-task classification. For example, for the top classifier, we additionally classify color, pattern, shape, shoulder type, neck type, sleeve type, ...
For each branch corresponding to one of these tasks, a fully connected layer with a 128-D output is inserted between pool5 and its softmax layer. We observe that by using joint multi-task training, product-type classification as well as search relevance are both improved. The overall loss for the network is calculated as follows: $L = \sum_{i=1}^{N} L_i \, w_i$, where $N$ is the number of tasks, and $L_i$ and $w_i$ are the classification loss and its weight for the $i$-th branch, respectively.

The 512-D feature from the pool5 layer (the output of average pooling immediately after all the convolution layers) is used for visual similarity search. With our architecture, illustrated in Figure 2, this feature has the power to represent the combined similarity across product type, color, pattern, etc. In addition, the 128-D features from each of the fc128 layers are specific to each of these aspects and could be used to re-rank the candidate list if so desired. Previous user research studies show that users typically place the importance of occasion first, followed by product type, then color, followed by pattern and other features. Motivated by this observation, the loss weight for product type is empirically set to 1.0, for color to 0.3, and for the rest to 0.1.

End-to-end System

The run-time flow of our pipeline is illustrated in Figure 3. An image is input to the detector, which generates high-level class bounding boxes (e.g. top / bottom / dress). For each of these bounding boxes, a cropped image patch is extracted and fed into the corresponding fine-grained classifier. The top-k fine-grained classes are identified and their corresponding indices are searched for nearest neighbors (Catalog items) using the embedding feature extracted from the fine-grained classifier. The resulting lists of Catalog items from the different indices are then re-sorted according to the embedding distance.

Fig. 2.
Our fine-grained multitask network for top

Fig. 3. Overall architecture of our end-to-end visual search for apparel

4 DATA COLLECTION, MODEL TRAINING AND IMPLEMENTATION DETAILS

Localization

We evaluated existing public datasets for apparel and found that they poorly matched our product needs. For example, OpenImages ([11]) contains a large amount of bounding box annotations, including ones for apparel-related categories. In particular, for shoes, the specific class breakdown and corresponding number of bounding boxes are as follows: (footwear: 744474), (boot: 3132), (high heels: 3124). However, while large in quantity, they are heavily skewed, incomplete in category, not sufficiently refined and do not match the online Catalog's partition. Therefore, instead of relying on such public datasets, we decided to download and annotate 50K images from the internet, using certain search keyword compositions to ensure a balanced distribution across all clothing classes, gender, pose and scenes. A small percentage (5%) of the resulting images do not contain persons, i.e., they show only clothing items. We still tried to annotate gender for them as best we could. Overall, a total of 320K bounding boxes were annotated, an average of 16K boxes per (high level) detection class. This annotation task was carried out by our in-house vendors, since it was observed that using general MTurkers often leads to less accurate bounding box annotation.

We trained the following multi-box detectors on this dataset: SSD-512 VGG, SSD-512 Resnet50, and YOLOv3 Darknet53 (300 and 416 image sizes). The first one is used in real-time inference. The remaining detectors were used as an ensemble in bounding box extraction from Catalog images. We used the default training parameters from the Caffe ([9]) and Gluon-CV ([15]) libraries and trained each detector for 200 epochs using the vanilla SGD optimizer.
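
The paper states that the outputs of the offline detectors are combined using non-maximum suppression but gives no further detail. The following is a minimal sketch of one common way to do this (pool all boxes, then apply greedy per-class NMS). The tuple layout, the IoU threshold of 0.5 and the names `iou` and `merge_detections` are assumptions made for illustration, not the authors' implementation.

```python
from typing import List, Tuple

# A detection is (x1, y1, x2, y2, score, label). This layout is an
# assumption for illustration; the paper does not specify one.
Box = Tuple[float, float, float, float, float, str]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def merge_detections(per_detector: List[List[Box]],
                     iou_thresh: float = 0.5) -> List[Box]:
    """Pool boxes from all detectors, then run greedy per-class NMS."""
    pooled = sorted((b for boxes in per_detector for b in boxes),
                    key=lambda b: b[4], reverse=True)
    kept: List[Box] = []
    for cand in pooled:
        # Keep a box only if no higher-scoring kept box of the same
        # class overlaps it by iou_thresh or more.
        if all(k[5] != cand[5] or iou(k, cand) < iou_thresh for k in kept):
            kept.append(cand)
    return kept


# Toy outputs standing in for the three offline detectors.
ssd = [(10, 10, 110, 210, 0.90, "top")]
yolo_300 = [(12, 8, 108, 205, 0.80, "top"), (20, 220, 100, 400, 0.70, "bottom")]
yolo_416 = [(15, 225, 105, 395, 0.85, "bottom")]
merged = merge_detections([ssd, yolo_300, yolo_416])
```

In this toy input the two overlapping `top` boxes and the two overlapping `bottom` boxes each collapse to the higher-scoring one, so `merged` holds one box per garment.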

Classification

Training accurate classifiers requires a large amount of data. Acquiring images from the wild and adding class labels for hundreds of apparel categories in a short amount of time is very challenging. Instead, we turned to two available data sources: Catalog and customer review images. The browse node associated with each image is known from the Catalog database. And since our class partition matches the Catalog's node structure, training class labels are readily available at a large scale without the need for manual annotation.

One important issue is duplication, where multiple Catalog items use the same or near-identical images. Using such images to train models would result in overfitting. We first applied a filter to eliminate low sale volume items, deduped by image names, and finally deduped using a k-NN engine (similar to the deduping for search indices described in the following section).

Another and more critical issue is that Catalog images are typically clean: without background clutter, without occlusion, and often showing clothing items not being worn by people. This domain difference is well known and there have been numerous efforts in the literature to close the gap ([3], [10], ...). In this work, we augment catalog images with random backgrounds. Specifically, background patches of random shapes and sizes were drawn from a large repository of natural images. Catalog foreground object masks were obtained by first thresholding away white pixels, followed by a morphological dilation to create some space around the object's silhouette. The foreground object is then blended with a random background patch using Poisson image editing ([16]). Figure 4 in the Appendix shows examples of our augmented data.

Customer review images are images that online customers uploaded when they reviewed a product. While they are a good source of images taken in casual settings, they are often extreme close-ups and hence not useful. In addition, they are not evenly distributed.
For example, there were more than 20K images for legging, but only 1742 for denim shorts. Nevertheless, we still sampled part of this source for our training.

Fig. 4. Augmenting catalog images with background. Note the random color blending effect across samples. Best viewed electronically.

For each fine-grained classifier, we use a Resnet18 that was modified for multi-task losses as described in the previous section. We initialized the network with an ImageNet pretrained model and trained it for 50 epochs using multiple rounds of cyclic learning rate ([20]). Using a second cycle resulted in an increase of 2% in overall classification accuracy. (We stopped at the second round, when overfitting started to appear.)

Search Indices

We indexed most of the apparel categories for men and women. The main images for top items in 242 browse nodes, which correspond to 146 fine-grained classes, were selected. They were deduped according to image ids. Each image was then run through the detector (section 3) to extract the bounding box for the underlying clothing item (e.g., jeans). The cropped image patch is then fed to the corresponding fine-grained classifier network (e.g., bottom) for embedding feature extraction. To further reduce duplication, we first placed all extracted features in a k-NN corpus, then re-queried to find near-duplicate neighbors for each of the features. Based on the duplication graph, we find connected components and retain only the top Catalog item for each component.

We built one index for all Catalog items that belong to a fine-grained class. We also split indices according to gender type. In total, there are close to 500 (sub) indices. We use the off-the-shelf hnsw library ([14]) for approximate nearest neighbor search.
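
The near-duplicate removal described in this section (build a duplication graph from nearest-neighbor queries, find connected components, keep the top item per component) can be sketched as follows. This is only an illustration under stated assumptions: a brute-force distance check stands in for the k-NN engine, and the distance threshold `dup_dist`, the ranking signal `rank` and the function names are hypothetical, since the paper does not specify them.

```python
import math
from typing import Dict, List, Sequence


def find(parent: List[int], i: int) -> int:
    """Union-find root lookup with path halving."""
    while parent[i] != i:
        parent[i] = parent[parent[i]]
        i = parent[i]
    return i


def dedupe(features: Sequence[Sequence[float]], rank: Sequence[float],
           dup_dist: float = 0.1) -> List[int]:
    """Return the indices of the items kept after near-duplicate removal.

    Brute-force search stands in for the k-NN engine; `rank` (higher is
    better) and `dup_dist` are hypothetical, not values from the paper.
    """
    n = len(features)
    parent = list(range(n))
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(features[i], features[j]) <= dup_dist:
                # Edge in the duplication graph: merge the two components.
                parent[find(parent, i)] = find(parent, j)
    best: Dict[int, int] = {}
    for i in range(n):
        root = find(parent, i)
        # Retain only the highest-ranked item of each component.
        if root not in best or rank[i] > rank[best[root]]:
            best[root] = i
    return sorted(best.values())


# Items 0-1 and 1-2 are near duplicates, so 0, 1 and 2 form one component
# even though 0 and 2 are farther apart than dup_dist; item 3 is distinct.
feats = [(0.00, 0.00), (0.05, 0.00), (0.12, 0.00), (1.00, 1.00)]
sales = [10, 50, 20, 5]
kept = dedupe(feats, sales)
```

Using connected components rather than pairwise checks means that chains of near duplicates collapse to a single representative, which is the behaviour the duplication graph in the text implies.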

5 EXPERIMENTAL RESULTS

Localization

In this section, we report a comparison between training detectors using our collected data vs. training using OpenImages ([11], only for clothing-related categories). It was necessary to roll up the classes in OpenImages to make them comparable with our data, e.g. merging jean and skirt into bottom. The numbers of bounding boxes are kept roughly the same on both sides (~300K). We used the same detector architecture for this test (YOLOv3 416). The test set consisted of 5K held-out fashion images in the wild. When trained on OpenImages, the detector had a mean Average Precision (mAP) [18] of 0.6 on the test set, while when trained on our data (section 4) the mAP is 0.71. This result shows the importance of the dataset's quality. We attribute the low accuracy w.r.t. OpenImages to skewness, incompleteness and inaccuracies in its bounding box annotation. For completeness, the mAP values for all categories using YOLOv3 416 are listed in the Appendix.

Using SSD 512 with a Resnet50 body, the overall mAP was slightly lower, at 0.70. We used a combination of detectors in offline processing: SSD 512 Resnet50, YOLOv3 300 and YOLOv3 416. Their combination led to a 2% increase in the overall mAP.

Classification

We report here the performance evaluation of the fine-grained classifiers on the Catalog validation datasets without (V1) and with noise clean-up (V2) (section 4). We also report the classification accuracy on fashion images in the wild. We first downloaded 600K images using keywords that correspond to fine-grained class labels. Then we ran these images through the detector ensemble in a high-recall mode to extract bounding boxes for clothing items. All the extracted cropped images were given to MTurkers to verify their class labels. This process resulted in around 270K positive confirmations for both bounding boxes and their class labels, which we used for evaluation.
In summary, the fine-grained accuracies for top (33 fine-grained classes), bottom (10 fine-grained classes) and dress (5 fine-grained classes) are shown in Table 1.

Table 1. Fine-grained classification accuracies for three high-level classes: top, bottom and dress. *See text for further information.

| Classifiers | V1 - Validation | V2 - Validation | Fashion Images (270K) |
| --- | --- | --- | --- |
| dress | 0.70 | 0.74 | 0.71 |
| top | 0.64 | 0.80 | 0.52* |
| bottom | 0.60 | 0.73 | 0.70 |

As can be seen from the table, cleaning up the data led to an increase of roughly 5-8% in accuracy across the classes. It also demonstrated that although we trained our fine-grained classifiers using original and augmented Catalog images, the accuracies for dress and bottom only dropped slightly when tested on fashion images in the wild. For top, there is currently an issue with the data labelling; often, the detector returns only a single bounding box even if the person wears multiple layers, such as a jacket on top of a tunic. When such a bounding box image was given to the annotator, it could be labelled as a jacket or a tunic, while our system, by design, only predicts the fine-grained class for the outerwear, jacket, in such a case. As a result, the accuracy for top is lower than expected. We are currently working to make the annotation more consistent and to re-evaluate the accuracy for top on the fashion images in the wild.

Visual Similarity Search

In this section, we show qualitative and quantitative comparisons between different versions of our feature embedding that correspond to different versions of the classification networks: (V1) single-task, product type classification only, (V2) multi-task, product type and color classification, and (V3) multi-task, product type, color, and 13 additional classification tasks. In Figure 5, the picture on the left is the query image. The top row shows the top-5 retrieved results using the V1 embedding.
Since the network only did product type classification, the resulting embedding seems to ignore other aspects such as color. The second row shows the result when a color classification task is added (V2). Initially, during training, the added task was carried out on original Catalog images only. As a result, its embedding is quite sensitive to background color, which is rarely present in Catalog images. In this case, the color of the under layer was wrongly picked up for matching. However, when background augmentation was used, as shown in the third row, the feature became more resilient to background clutter.

We used humans for quantitative A/B evaluation of the retrieval quality. Exact retrieval was not targeted; rather, the results were judged based on the following subjective matching criteria in order of importance: occasion, product type, color, pattern, followed by other clothing features. Approximately 1000 fashion images were sent out to MTurkers for evaluation. For each query image, retrieval results from two feature embeddings, A and B, were shown side by side and the MTurkers were asked to choose one of the following options: A is better than B, B is better than A, both A and B are bad, and both A and B are equally good. We aggregated the votes from 5 people. Overall, between V1 and V2, people gave preference to V2 75% of the time and to V1 25% of the time. Between V2 and V3, the preference ratios given to V2 and V3 are 33% and 67%, respectively. This clearly shows consistent progress in embedding quality from V1 to V2 and from V2 to V3, as the corresponding classification network was trained to perform more tasks.

6 CONCLUSION AND FUTURE DIRECTIONS

In this paper, we describe an approach to searching for apparel items using fashion images in the wild. We trained multi-box detectors to localize clothing items as well as to perform gender detection.
We also trained multiple classifiers to further classify detected bounding boxes into fine-grained categories. We then used the embedding features extracted from these classifiers to search for similar clothing items in the online Catalog. Our initial positive experimental outcomes validate our system design. As the next steps, we plan to train fine-grained classifiers on fashion images in the wild (i.e. instead of just on Catalog images) and to add more extensive evaluation. We also plan to use (semantic) segmentation and clothing parsing ([22]) to improve matching performance when people wear multi-layer tops, which occurs quite often in fashion images.

REFERENCES

[1] Zhe Cao, Tomas Simon, Shih-En Wei, and Yaser Sheikh. Realtime multi-person 2d pose estimation using part affinity fields. In CVPR, 2017.

[2] Weihua Chen, Xiaotang Chen, Jianguo Zhang, and Kaiqi Huang. Beyond triplet loss: a deep quadruplet network for person re-identification. In The Conference on Computer Vision and Pattern Recognition, 2017.

[3] Arnab Dhua. Apparel classification using catalog synthesized images. In Amazon Computer Vision Conference, 2016.

[4] Ross Girshick, Jeff Donahue, Trevor Darrell, and Jitendra Malik. Rich feature hierarchies for accurate object detection and semantic segmentation. In Computer Vision and Pattern Recognition, 2014.

[5] Ke Gong, Xiaodan Liang, Dongyu Zhang, Xiaohui Shen, and Liang Lin. Look into person: Self-supervised structure-sensitive learning and a new benchmark for human parsing. In The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), July 2017.

[6] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. CoRR, abs/1512.03385, 2015.

[7] Alexander Hermans*, Lucas Beyer*, and Bastian Leibe. In Defense of the Triplet Loss for Person Re-Identification. arXiv preprint, 2017.

[8] Jonathan Huang, Vivek Rathod, Chen Sun, Menglong Zhu, Anoop Korattikara Balan, Alireza Fathi, Ian Fischer, Zbigniew Wojna, Yang Song, Sergio Guadarrama, and Kevin Murphy. Speed/accuracy trade-offs for modern convolutional object detectors. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 3296–3297, 2017.

[9] Yangqing Jia, Evan Shelhamer, Jeff Donahue, Sergey Karayev, Jonathan Long, Ross Girshick, Sergio Guadarrama, and Trevor Darrell. Caffe: Convolutional architecture for fast feature embedding. arXiv preprint, 2014.

[10] M. Hadi Kiapour, Xufeng Han, Svetlana Lazebnik, Alexander C. Berg, and Tamara L. Berg. Where to buy it: matching street clothing photos in online shops. In International Conference on Computer Vision, 2015.

[11] Alina Kuznetsova, Hassan Rom, Neil Alldrin, Jasper Uijlings, Ivan Krasin, Jordi Pont-Tuset, Shahab Kamali, Stefan Popov, Matteo Malloci, Tom Duerig, and Vittorio Ferrari. The open images dataset v4: Unified image classification, object detection, and visual relationship detection at scale. 2018.

[12] Wei Liu, Dragomir Anguelov, Dumitru Erhan, Christian Szegedy, Scott Reed, Cheng-Yang Fu, and Alexander C. Berg. SSD: Single shot multibox detector. In ECCV, 2016.

[13] Z. Liu, P. Luo, S. Qiu, X. Wang, and X. Tang. Deepfashion: Powering robust clothes recognition and retrieval with rich annotations. In 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 1096–1104, June 2016.

[14] Yury A. Malkov and D. A. Yashunin. Efficient and robust approximate nearest neighbor search using hierarchical navigable small world graphs. CoRR, abs/1603.09320, 2016.

Fig. 5. Left: the query image.
Right: retrieval results. Top row: using a feature that is sensitive only to product type; middle row: color aware, but trained without background augmentation; bottom row: color aware and trained with background augmentation. Best viewed electronically.

[15] MXNet. Gluoncv: a deep learning toolkit for computer vision. https://gluon-cv.mxnet.io/index.html, 2018–2019.

[16] Patrick Pérez, Michel Gangnet, and Andrew Blake. Poisson image editing. In ACM SIGGRAPH 2003 Papers, SIGGRAPH '03, pages 313–318, New York, NY, USA, 2003. ACM.

[17] Joseph Redmon and Ali Farhadi. Yolov3: An incremental improvement. CoRR, abs/1804.02767, 2018.

[18] Gerard Salton and Michael J. McGill. Introduction to Modern Information Retrieval. McGraw-Hill, Inc., New York, NY, USA, 1986.

[19] Devashish Shankar, Sujay Narumanchi, H. A. Ananya, Pramod Kompalli, and Krishnendu Chaudhury. Deep learning based large scale visual recommendation and search for e-commerce. CoRR, abs/1703.02344, 2017.

[20] Leslie N. Smith. No more pesky learning rate guessing games. CoRR, abs/1506.01186, 2015.

[21] Yi Sun, Yuheng Chen, Xiaogang Wang, and Xiaoou Tang. Deep learning face representation by joint identification-verification. In Proceedings of the 27th International Conference on Neural Information Processing Systems - Volume 2, NIPS'14, pages 1988–1996, Cambridge, MA, USA, 2014. MIT Press.

[22] Pongsate Tangseng, Zhipeng Wu, and Kota Yamaguchi. Looking at outfit to parse clothing. CoRR, abs/1703.01386, 2017.

[23] Evgeniya Ustinova and Victor Lempitsky. Learning deep embeddings with histogram loss. In D. D. Lee, M. Sugiyama, U. V. Luxburg, I. Guyon, and R. Garnett, editors, Advances in Neural Information Processing Systems 29, pages 4170–4178. Curran Associates, Inc., 2016.

[24] Jiang Wang, Yang Song, Thomas Leung, Chuck Rosenberg, Jingbin Wang, James Philbin, Bo Chen, and Ying Wu. Learning fine-grained image similarity with deep ranking.
In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, CVPR '14, pages 1386–1393, Washington, DC, USA, 2014. IEEE Computer Society.

[25] K. Yamaguchi, M. H. Kiapour, and T. L. Berg. Paper doll parsing: Retrieving similar styles to parse clothing items. In 2013 IEEE International Conference on Computer Vision, pages 3519–3526, Dec 2013.

[26] Shuai Zheng, Fan Yang, M Hadi Kiapour, and Robinson Piramuthu. Modanet: A large-scale street fashion dataset with polygon annotations. arXiv preprint arXiv:1807.01394, 2018.

A DETECTOR PERFORMANCE

See Table 2.

Table 2. Detection mAP for one of our detectors (YOLOv3/416) on the fashion images in the wild dataset

| High Level Classes | mAP |
| --- | --- |
| Head-wear | 0.80 |
| Eye-wear | 0.81 |
| Earring | 0.49 |
| Belt | 0.53 |
| Bottom | 0.78 |
| Dress | 0.81 |
| Top | 0.86 |
| Suit | 0.67 |
| Tie | 0.67 |
| Footwear | 0.87 |
| Swimsuit | 0.54 |
| Bag | 0.79 |
| Wristwear | 0.62 |
| Necklace | 0.65 |
| One-piece | 0.60 |
| Scarf | 0.52 |
| Boy | 0.82 |
| Girl | 0.77 |
| Woman | 0.90 |
| Man | 0.81 |
| Overall | 0.72 |
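
As a quick arithmetic check on Table 2, the snippet below averages the 20 per-class values. It assumes the "Overall" row is the unweighted mean over classes; the paper does not state how that row was computed, so this is a reading of the table rather than the authors' procedure. The variable names are illustrative.

```python
# Per-class mAP values copied from Table 2 (YOLOv3/416).
per_class_map = {
    "Head-wear": 0.80, "Eye-wear": 0.81, "Earring": 0.49, "Belt": 0.53,
    "Bottom": 0.78, "Dress": 0.81, "Top": 0.86, "Suit": 0.67,
    "Tie": 0.67, "Footwear": 0.87, "Swimsuit": 0.54, "Bag": 0.79,
    "Wristwear": 0.62, "Necklace": 0.65, "One-piece": 0.60, "Scarf": 0.52,
    "Boy": 0.82, "Girl": 0.77, "Woman": 0.90, "Man": 0.81,
}

# Unweighted (macro) mean over the 20 detection classes.
overall = sum(per_class_map.values()) / len(per_class_map)
print(f"{len(per_class_map)} classes, macro mAP = {overall:.4f}")
```

Under that assumption the mean is about 0.7155, which rounds to the 0.72 reported in the "Overall" row.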
