Forecast Aggregation via Peer Prediction

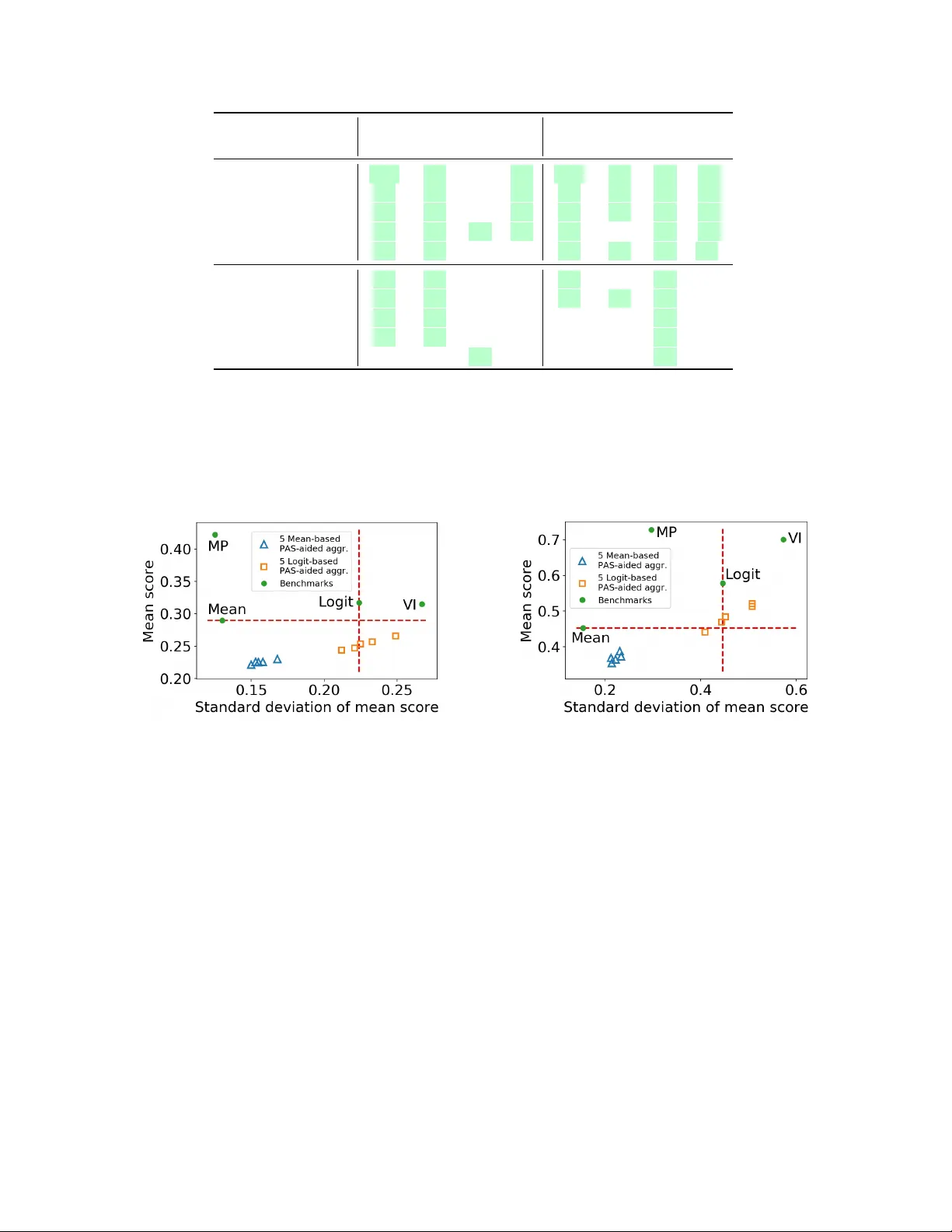

Crowdsourcing enables the solicitation of forecasts on a variety of prediction tasks from distributed groups of people. How to aggregate the solicited forecasts, which may vary in quality, into an accurate final prediction remains a challenging yet c…

Authors: Juntao Wang, Yang Liu, Yiling Chen