BiRA-Net: Bilinear Attention Net for Diabetic Retinopathy Grading

Diabetic retinopathy (DR) is a common retinal disease that leads to blindness. For diagnosis purposes, DR image grading aims to provide automatic DR grade classification, which is not addressed in conventional research methods of binary DR image clas…

Authors: Ziyuan Zhao, Kerui Zhang, Xuejie Hao

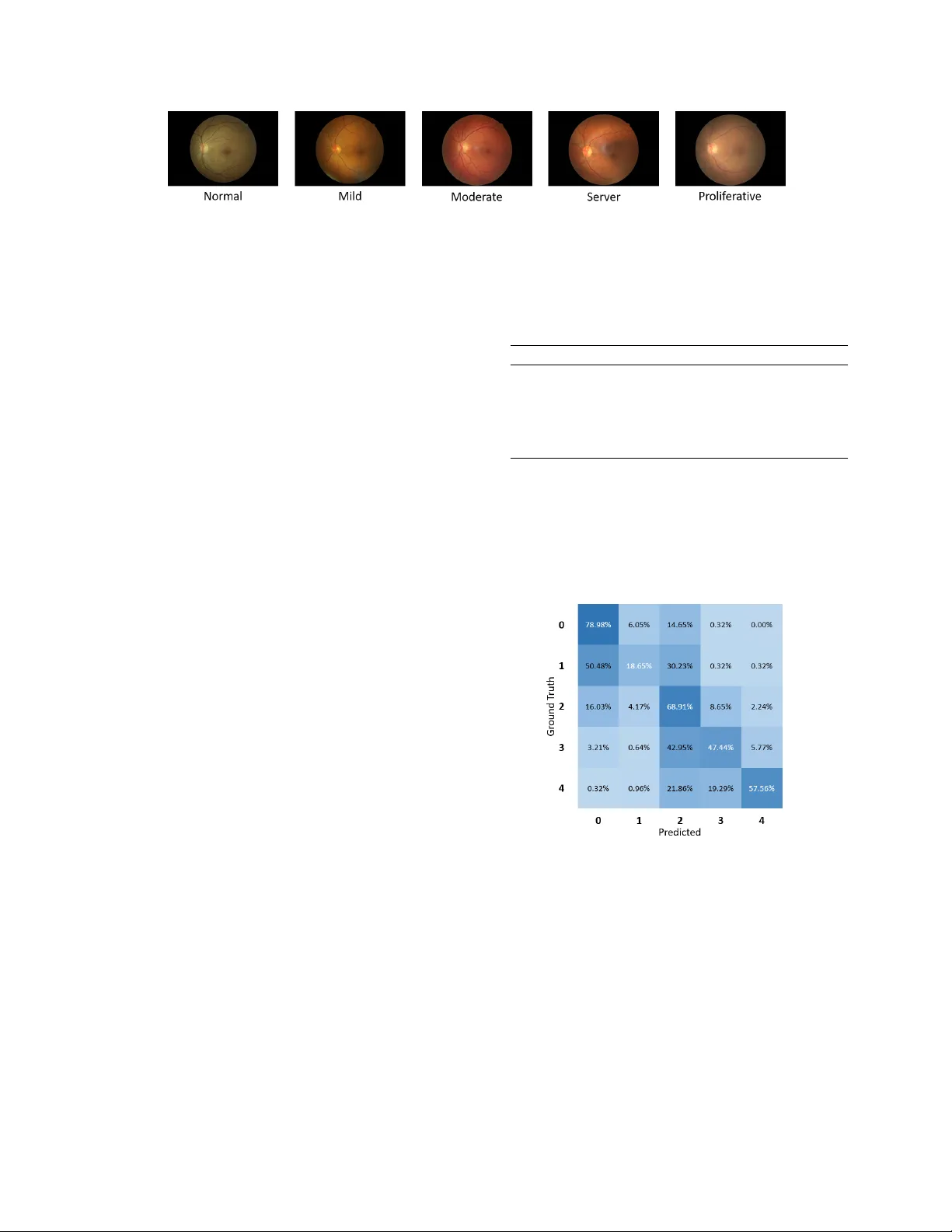

BIRA-NET : BILINEAR A TTENTION NET FOR DIABETIC RETINOP A THY GRADING Ziyuan Zhao ∗† , K erui Zhang ∗† , Xuejie Hao ‡ ,Jing T ian † , Matthew Chin Heng Chua † , Li Chen ], § , Xin Xu ], § † Institute of Systems Science, National Uni versity of Singapore, Singapore ‡ Uni versity of Electronic Science and T echnology of China, China ] School of Computer Science and T echnology , W uhan Uni versity of Science and T echnology , China § Hubei Province K ey Laboratory of Intelligent Information Processing and Real-time Industrial System, W uhan Uni versity of Science and T echnology , W uhan, China ABSTRA CT Diabetic r etinopathy (DR) is a common retinal disease that leads to blindness. For diagnosis purposes, DR image grad- ing aims to provide automatic DR grade classification, which is not addressed in con ventional research methods of binary DR image classification. Small objects in the eye images, like lesions and microaneurysms, are essential to DR grading in medical imaging, but they could easily be influenced by other objects. T o address these challenges, we propose a new deep learning architecture, called BiRA-Net , which combines the attention model for feature e xtraction and bilinear model for fine-grained classification. Furthermore, in considering the distance between dif ferent grades of dif ferent DR cate gories, we propose a new loss function, called grading loss , which leads to improv ed training conv ergence of the proposed ap- proach. Experimental results are pro vided to demonstrate the superior performance of the proposed approach. Index T erms — Diabetic retinopathy grading; Attention mechanism; Bilinear model; Con volutional neural netw ork 1. INTR ODUCTION Diabetic r etinopathy (DR) is one of the most common retinal diseases and it is a primary cause of blindness in humans [1]. It augments the blood pressure in small vessels and conse- quently influences the circulatory system of the retina and the light-sensitiv e tissue of the eye. Singapore is one of the coun- tries with the highest prev alence of diabetes mellitus. In a recent screening exercise for DR in Singapore, 28 . 2% of pa- tients with diabetes ha ve been found to ha ve DR, indicating a prev alent condition in the nation [2]. The major challenge for DR diagnosis is that DR is a silent disease with no early w arning sign, which makes it dif- ficult for a timely diagnosis to take place. The traditional solution is inefficient, in which, well-trained clinicians can manually examine and ev aluate the diagnostic images from the Digital Fondus Photography . This type of diagnosis can * Both authors contrib ute equally to this work. This work was supported by National Natural Science Foundation of China (No. 61602349, 61773297) take several days depending on the number of doctors av ail- able and patients to be seen. Besides this, the result of such a diagnosis varies from doctor to doctor and its accuracy relies greatly on the e xpertise of practitioners. Moreov er, the exper - tise and equipment required may be lacking in many high rate DR areas. The aforementioned challenges have raised the need for the dev elopment of an automatic DR detection system. In recent years, many research works have been conducted to detect DR automatically with the focus on feature extraction and two-class prediction [3 – 6]. These works are effecti ve to some e xtent but also ha ve se veral shortcomings. First, the e x- tracted features from photos are hand-crafted features which are sensitive to many conditions like noise, exposedness and artifacts. Second, feature location and segmentation cannot be well embedded into the whole DR detection framew ork. Moreov er , only diagnosing to determine whether or not DR is present rather than diagnosing the sev erity of DR could not well address practical problems and neither can it pro vide helpful information to doctors. Recently , con volutional neural networks (CNN) has demonstrated attracti ve performance in various computer vision tasks [7 – 9]. In this paper , we adopt the CNN-based ar - chitecture to dev elop a fiv e-class DR image grading approach. In the proposed architecture, we design an attention mecha- nism for better feature extraction and a loss function, called grading loss , for fast con vergence. In addition, the bilinear strategy is used here for better prediction in this fine-grained image task. Compared to other state-of-the-art research works in five-class classification, the proposed approach is able to achiev e superior classification accuracy performance. The contributions of this paper are summarized as belo w: • A new deep learning architecture BiRA-Net is pro- posed to tackle the DR grading challenge. It contains an attention mechanism that is designed for better fea- ture learning. Moreov er , a bilinear training strategy is used to help the classification of fine-grained retina images. • A new loss function based on log softmax is proposed Copyright 2019 IEEE. Published in the IEEE 2019 International Conference on Image Processing (ICIP 2019), scheduled for 22-25 September 2019 in T aipei, T aiwan. Personal use of this material is permitted. Howe ver , permission to reprint/republish this material for advertising or promotional purposes or for creating new collecti ve works for resale or redistrib ution to servers or lists, or to reuse any copyrighted component of this w ork in other works, must be obtained from the IEEE. Contact: Manager , Copyrights and Permissions / IEEE Service Center / 445 Hoes Lane / P .O. Box 1331 / Piscataway , NJ 08855-1331, USA. T elephone: + Intl. 908-562-3966. to measure the model classification accuracy for the fine-grained DR grading issue, and it is verified in the experiments to ef fecti vely improv e the training con ver- gence of the proposed approach. The rest of this paper is organized as follows. First, a brief revie w of the related work is provided in Section 2. Then the proposed BiRA-Net is presented in Section 3 and compared with the state-of-the-art approaches in Section 4. Finally , Sec- tion 5 concludes this paper . 2. RELA TED WORK Con ventionally , most diabetic retinopathy detection methods hav e focused on extracting the regions of interest such as mac- ula, blood vessels, exudates [3, 6], and these approaches ha ve dominated the field of DR detection for years. In recent years, CNN has been used in DR detection [10–13] and achieved satisfying results in the binary classification of DR. Compared to the binary classification, the classification on DR severity is more important, since it can provide more information that can better help doctors diagnose and make decisions. Howe ver , even for experienced doctors, it is still a challenge to diagnose the severity of DR based on complex factors of dif ferent characteristics of eyes. Ever since the California Healthcare Foundation put for- ward a challenge with an av ailable dataset in Kaggle [14], more and more research has been put into in vestigating a multi-class prediction of DR [15 – 17]. Bra vo et al. [16] ex- plored the influence of different pre-processing methods and combined them using VGG16-based architecture to achieve good performance in diabetic retinopathy grading. Howe ver , most research utilizes CNN like a black box which lacks intu- itiv e e xplanation. It is notable that a Zoom-in-Net is proposed to use attention mechanism to simulate a zoom-in process of a clinician diagnosing DR and achie ve state-of-the-art performance in binary classification [18]. 3. PR OPOSED BIRA-NET : BILINEAR A TTENTION NET FOR DR GRADING The proposed BiRA-Net is presented in this Section for DR prediction. The proposed BiRA-Net architecture is shown in Fig. 1, which consists of three key components: (i) ResNet, (ii) Attention Net and (iii) Bilinear Net. First, the processed images are put into the ResNet for feature extraction; then the Attention Net is applied to concentrate on the suspected area. For more fine-grained classification in this task, a bilin- ear strategy is adopted, where two RA-Net are trained simul- taneously to improv e the performance of classification. It is for this reason that our architecture is named “ BiRA-Net ”. 3.1. Con ventional ResNet Residual Neural Network (ResNet) [19] uses shortcut connec- tion to let some input skip the layer indiscriminately , which would av oid adding on new parameters and having too much calculation on the network. Simultaneously av oiding the loss Fig. 1 . The overvie w of the proposed BiRA-Net architecture. (A color version of this Figure is available in the electronic copy .) of information and degradation problem, ResNet can saliently increase the training speed and effects. Therefore, the pre- trained ResNet-50 which is 50 layers deep is applied for fea- ture extraction in the proposed network architecture. 3.2. Proposed Attention mechanism Medical images always contain much irrelev ant information which may disturb decision-making. For our task, micro- scopic features like lesions and microaneurysms are critical for doctors to classify DR grading. Therefore, the proposed BiRA-Net utilizes the attention mechanism, which mimics the clinician’ s behavior of focusing on the key features for DR prediction. The Attention Net in BiRA-Net firstly takes the feature maps F ∈ R 100 × 20 × 20 from ResNet as input, and then puts them into Net-A which is a CNN network of 3 con volution layers with 1 × 1 kernels, which add more nonlinearity and en- hance the representation of the network, as shown in Fig. 2, so as to generate attention maps A ∈ R 100 × 20 × 20 . Specifi- cally , it produces 20 attention maps for each disease lev el by a sigmoid operator . T o create the masks M of images, the multiplication between the feature maps F and the attention maps A is applied. And then, we perform global avera ge pooling (GAP) on both the masks M and the attention maps A respectiv ely to reduce the parameters and av oid o verfitting. Finally , to acquire the weight of images and to filter unrelated information, a division is used. T o summarize, the final output Fig. 2 . The ov erview of the attention net (Net-A) used in the proposed BiRA-Net. (A color v ersion of this Figure is a vailable in the electronic copy .) for the Attention Net is calculated as Output = GAP ( A l ) GAP ( A l ⊗ F l ) (1) where A l and F l are l -th attention map and l -th feature map, respectiv ely; ⊗ and denote element-wise multiplication and element-wise division respecti vely . 3.3. Proposed bilinear model The proposed BiRA-Net utilizes a bilinear strategy to im- prov e the performance of classification. T o speed up the train- ing process and reduce the parameters, two same streams of RA-Net are trained simultaneously , which is inspired by [20]. More specifically , only one stream needs to be trained, such symmetric bilinear learning strategy has been pro ved in [21]. The Bilinear Net used in the proposed network is illus- trated in Fig. 1, which takes the output of Attention Net and the output of the ResNet as inputs. The output of ResNet will be first put into Net-B which is made up of one con volution layer ( 100 , 20 × 20 ) and one ReLU activ ation layer to e xtract the features and reshape them to be the same as the output of the Attention Net. Then they will be computed by M operator (element-wise mean) as Z l = ( X l ⊕ Y l ) 2 (2) where X l and Y l are the output of Attention Net and the output of Net-B, Z l is the output of M operator, ⊕ denotes element-wise addition. Next, we use outer product on the output of M operator term as Z to obtain an image descriptor, and then the result- ing bilinear vector B ( B = Z Z T ) is passed through signed square-root step ( Y ← sig n ( B ) p | B | ) and L 2 normalization ( Z ← Y / k Y k 2 ) to improv e the performance. 3.4. Proposed grading loss Con ventional loss functions are restricted to reducing the multi-class classification to multiple binary classifications. The distance between different classes is not considered in these conv entional loss functions. T o reduce the loss-accuracy discrepancy and get an improv ed con ver gence, we propose a new loss which adds weights to the softmax function, called “ grading loss ”. The proposed grading loss function is a weighted softmax with the distance-based weight function and is defined by L seq ( x, y ) = weight y ( − log ( L softmax ( x, y ))) (3) where weight y = | argmax ( x ) − y | + 1 M (4) which denotes the softmax function as L softmax ( x, y ) = exp ( x [ y ]) P j exp ( x [ j ]) (5) where y ∈ [0 , C − 1] , x = ( x 0 , x 1 , x 2 , ...., x C − 1 ) , M = P C − 1 i =0 ( | y − i | + 1) . It defines the gap between class com- puted by the maximum difference between predicted class x and the real class y , and C is the number of class. The weights are normalized by dividing the accumulating of all circum- stances as (4). A toy example is provided to clarify the concept of the proposed grading loss function and it is as follows. F or ex- ample, in our DR classification task, there are 5 classes. W ith the proposed grading loss function, a more substantial price will be paid if it classifies the category with grade 0 as the cat- egory with grade 4 than that to be the category with the grade 1 , and the weights of these two scenarios are 5 / 15 and 1 / 15 , respectiv ely . This is in contrast to that the conv entional loss function imposes a same loss on these two scenarios. 4. EXPERIMENT AL RESUL TS 4.1. Dataset and implementation Experiments are conducted in this paper using a dataset from Kaggle [14]. The retinal images are provided by EyeP ACS consisting of 35126 images. And each image is labeled as { 0 , 1 , 2 , 3 , 4 } , depending on the disease’ s severity . Examples of each class are shown in Fig. 3. The dataset is highly un- balanced with 25810 level 0 images (Normal), 2443 lev el 1 (Mild), 5292 lev el 2 (Moderate), 873 lev el 3 (Server) and 708 lev el 4 (Proliferativ e). For better generalization and compari- son with the latest method of fiv e-class classification, the data distribution from [16] is adopted, and then we reserve a bal- anced set of 1560 images for validation, and the rest are used as training data. The original images hav e a black rectangle background. They are cropped to keep the whole retina regions in the square areas. Then the images are resized to 610 × 610 pix- els and are standardized by subtracting mean and dividing by standard deviation that is computed over all pixels in all training images. The histogram equalization is used for con- trast enhancement. T o balance the training data, weighted random sampling is adopted. During training, the images are randomly rotated by ± 10 degrees, flipped vertically or horizontally in data augmentation process. Fig. 3 . Examples of retinal images [14] used in the experiment. The proposed model is implemented using Pytorch and trained on a single GTX1080 Ti GPU, using stochastic gradi- ent descent (SGD) optimizer with the momentum of 0 . 9 . The l 2 regularization is performed on weights with weight decay factor of 5 × e − 7 , and the initial learning rate is 0 . 01 . 4.2. Perf ormance metrics A confusion matrix is used to count how many images of each class are classified in each class, and the average of classifica- tion accuracy (A CA) is calculated by the mean of the diagonal of the normalized confusion matrix. Note that an A CA of 0 . 2 is the score of a random guess since there are 5 classes in the experiments. In addition, the micro-averaged and macro-av eraged ver - sions of F1, denoted as Micro F1 and Macro F1 are used to ev aluate the results of multi-class classification. F1 is de- fined as the harmonic mean between precision and recall. The Macro F1 is the mean of the F1-scores of all the classes. In the micro-av eraged method, the individual true positiv es, false positiv es, and false negati ves of dif ferent classes are summed up and then applied to get the statistics. 4.3. Baseline methods W e compare our model with the work by Brav o et al. [16], which has achiev ed the best A CA using the fusion of VGG- based classifiers with different image preprocessing (circular RGB, grayscale and color centered sets). T o explore the ef- fectiv eness of various modules in the proposed BiRA-Net, ab- lation studies are implemented to e valuate the performance of different combinations among dif ferent parts as follo ws. • Bi-ResNet: A pre-trained ResNet-50 [19] using the proposed bilinear strategy . • RA-Net: Only one single stream of the proposed BiRA-Net is used. • BiRA-Net: The proposed architecture with the pro- posed grading loss function. 4.4. Results T able 1 summarizes the results of all methods on the test dataset. BiRA-Net outperforms all other methods in ACA, Marco F1 and Micro F1. W e also implemented BiRA-Net us- ing cross-entrop y loss and it has achie ved competitive results. The ACA is 0 . 5424 which is close to our proposed BiRA-Net. Howe ver , using the proposed loss, we observe an improv ed con ver gence in speed. T able 1 . The objecti ve performance ev aluation of various ap- proaches, which are described in Section 4.3. A CA Marco F1 Micro F1 Brav o et al. [16] 0.5051 0.5081 0.5052 ResNet-50 [19] 0.4689 0.4753 0.4689 Bi-ResNet 0.4889 0.5503 0.4897 RA-Net 0.4717 0.5268 0.4724 BiRA-Net 0.5431 0.5725 0.5436 Fig. 4 sho ws the confusion matrix for the proposed BiRA- Net. In the confusion matrix, each class is most likely to be predicted into the right class, except class 1 , which is mostly classified into class 0 . It is clear that class 1 is the most diffi- cult to differentiate and normal (class 0 ) is the easiest to de- tect. Fig. 4 . The classification confusion matrix of the proposed BiRA-Net. Horizontal axis indicates the predicted classes and vertical axis indicates the ground truth classes. 5. CONCLUSIONS This paper has proposed an attention-dri ven deep learning ar - chitecture for diabetic retinopathy grading, where the bilin- ear strategy is implemented for fine-grained grading tasks. In addition, the proposed grading loss function helps to attain much improv ed conv ergence of the proposed approach. The ablation analyses show that these proposed components effec- tiv ely improv e the classification performance. The proposed BiRA-Net is competitiv e with the state-of-the-art methods, as verified in the e xperimental results. 6. REFERENCES [1] Sneha Das and C. Malathy , “Survey on diagnosis of diseases from retinal images, ” Journal of Physics: Con- fer ence Series , vol. 1000, pp. 012053, Apr . 2018. [2] “Updates in detection and treatment of diabetic retinopathy in Singapore, ” https://www .singhealth.com.sg/news/medical-ne ws- singhealth/updates-in-detection-and-treatment-of- diabetic-retinopathy , [Online; accessed 01-May-2019]. [3] Axel Pinz, Stefan Bernogger , Peter Datlinger , and An- dreas Kruger , “Mapping the human retina, ” IEEE T rans. on medical imaging , v ol. 17, no. 4, pp. 606–619, 1998. [4] Nathan Silberman, Kristy Ahrlich, Rob Fergus, and Lakshminarayanan Subramanian, “Case for automated detection of diabetic retinopathy ., ” in AAAI Spring Sym- posium: Artificial Intelligence for Development , 2010. [5] Akara Sopharak, Bunyarit Uyyanon vara, and Sarah Bar- man, “ Automatic exudate detection from non-dilated di- abetic retinopathy retinal images using fuzzy C-means clustering, ” Sensors , v ol. 9, no. 3, pp. 2148–2161, 2009. [6] Di W u, Ming Zhang, Jyh-Charn Liu, and W endall Bau- man, “On the adaptiv e detection of blood vessels in reti- nal images, ” IEEE T rans. on Biomedical Engineering , vol. 53, no. 2, pp. 341–343, 2006. [7] Geert Litjens, Thijs K ooi, Babak Ehteshami Bejnordi, Arnaud Arindra Adiyoso Setio, Francesco Ciompi, Mohsen Ghafoorian, Jeroen A.W .M. v an der Laak, Bram van Ginneken, and Clara I. Snchez, “ A survey on deep learning in medical image analysis, ” Medical Image Analysis , v ol. 42, pp. 60 – 88, 2017. [8] Zenglin Shi, Guodong Zeng, Le Zhang, Xiahai Zhuang, Lei Li, Guang Y ang, and Guoyan Zheng, “Bayesian voxdrn: A probabilistic deep vox elwise dilated resid- ual network for whole heart segmentation from 3d mr images, ” in International Confer ence on Medical Im- age Computing and Computer -Assisted Intervention . Springer , 2018, pp. 569–577. [9] Ziyuan Zhao, Xiaoman Zhang, Cen Chen, W ei Li, Songyou Peng, Jie W ang, Xulei Y ang, Le Zhang, and Zeng Zeng, “Semi-supervised self-taught deep learn- ing for finger bones segmentation, ” arXiv pr eprint arXiv:1903.04778 , 2019. [10] Gilbert Lim, Mong-Li Lee, W ynne Hsu, and T ien Y in W ong, “T ransformed representations for conv olutional neural networks in diabetic retinopathy screening., ” in AAAI W orkshop: Modern Artificial Intelligence for Health Analytics , 2014. [11] Shuangling W ang, Y ilong Y in, Guibao Cao, Benzheng W ei, Y uanjie Zheng, and Gongping Y ang, “Hierarchi- cal retinal blood vessel segmentation based on feature and ensemble learning, ” Neur ocomputing , vol. 149, pp. 708–717, 2015. [12] V arun Gulshan, Lily Peng, Marc Coram, Martin C Stumpe, Derek W u, Arunachalam Narayanasw amy , Subhashini V enugopalan, Kasumi W idner , T om Madams, and Jorge Cuadros, “Dev elopment and validation of a deep learning algorithm for detection of diabetic retinopathy in retinal fundus photographs, ” J ama , vol. 316, no. 22, pp. 2402–2410, 2016. [13] Ratul Ghosh, Kuntal Ghosh, and Sanjit Maitra, “ Auto- matic detection and classification of diabetic retinopathy stages using CNN, ” in Int. Conf. on Signal Pr ocessing and Inte grated Networks . IEEE, 2017, pp. 550–554. [14] “Kaggle: Diabetic Retinopathy Detection, ” https://www .kaggle.com/c/diabetic-retinopathy- detection, [Online; accessed 01-May-2019]. [15] Harry Pratt, Frans Coenen, Deborah M Broadbent, Si- mon P . Harding, and Y alin Zheng, “Conv olutional neu- ral networks for diabetic retinopathy , ” Pr ocedia Com- puter Science , vol. 90, pp. 200–205, 2016. [16] Mar ´ ıa A Brav o and Pablo A Arbel ´ aez, “ Automatic di- abetic retinopathy classification, ” in Int. Conf. on Med- ical Information Pr ocessing and Analysis , 2017, vol. 10572, p. 105721E. [17] Kang Zhou, Zaiwang Gu, W en Liu, W eixin Luo, Jun Cheng, Shenghua Gao, and Jiang Liu, “Multi-cell multi- task con volutional neural networks for diabetic retinopa- thy grading, ” in IEEE Int. Conf. on Engineering in Medicine and Biology Society , 2018, pp. 2724–2727. [18] Zhe W ang, Y anxin Y in, Jianping Shi, W ei Fang, Hong- sheng Li, and Xiaogang W ang, “Zoom-in-net: Deep mining lesions for diabetic retinopathy detection, ” in Int. Conf. on Medical Ima ge Computing and Computer- Assisted Intervention , 2017, pp. 267–275. [19] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun, “Deep residual learning for image recognition, ” in IEEE Int. Conf. on Computer V ision and P attern Recog- nition , 2016, pp. 770–778. [20] Tsung-Y u Lin, Aruni RoyChowdhury , and Subhransu Maji, “Bilinear cnn models for fine-grained visual recognition, ” in IEEE Int. Conf. on Computer V ision and P attern Recognition , 2015, pp. 1449–1457. [21] Shu Kong and Charless Fo wlkes, “Lo w-rank bilin- ear pooling for fine-grained classification, ” in IEEE Int. Conf. on Computer V ision and P attern Recognition , 2017, pp. 7025–7034.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment