Shannon-Kotel'nikov Mappings for Analog Point-to-Point Communications

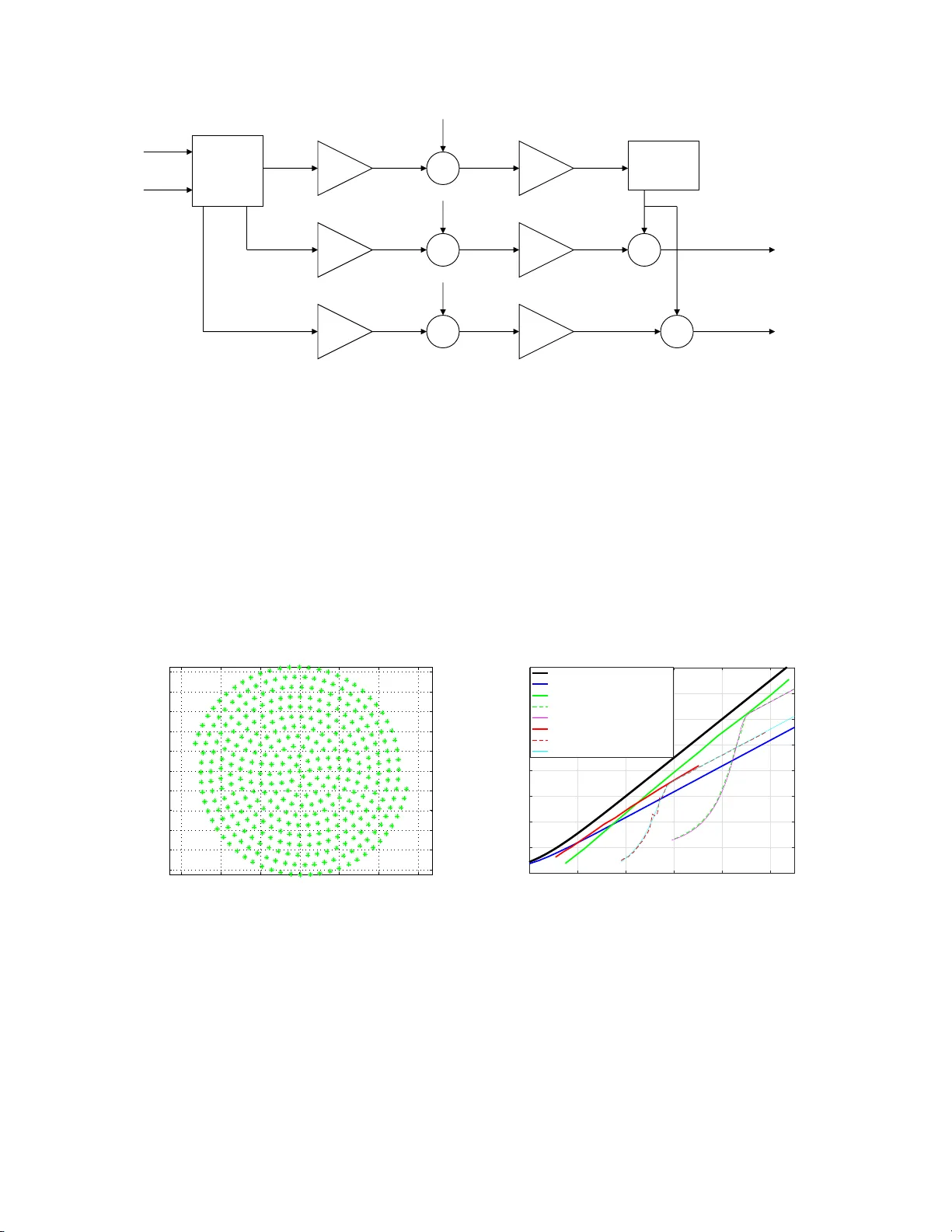

This paper presents an approach to joint source-channel coding (JSCC) named Shannon-Kotel'nikov mappings (S-K mappings). S-K mappings are continuous or piecewise continuous source-to-channel mappings operating directly on amplitude con…

Authors: Pål Anders Floor, Tor A. Ramstad