Augmented Reality and Robotics: A Survey and Taxonomy for AR-enhanced Human-Robot Interaction and Robotic Interfaces

This paper contributes a taxonomy of augmented reality and robotics based on a survey of 460 research papers. Augmented and mixed reality (AR/MR) have emerged as a new way to enhance human-robot interaction (HRI) and robotic interfaces (e.g., actuated and shape-changing interfaces). Recently, an increasing number of studies in HCI, HRI, and robotics have demonstrated how AR enables better interactions between people and robots. However, research often remains focused on individual explorations, and key design strategies and research questions are rarely analyzed systematically. In this paper, we synthesize and categorize this research field in the following dimensions: 1) approaches to augmenting reality; 2) characteristics of robots; 3) purposes and benefits; 4) classification of presented information; 5) design components and strategies for visual augmentation; 6) interaction techniques and modalities; 7) application domains; and 8) evaluation strategies. We formulate key challenges and opportunities to guide and inform future research in AR and robotics.

💡 Research Summary

The paper presents a comprehensive taxonomy of augmented reality (AR)-enhanced human-robot interaction (HRI) and robotic interfaces, derived from a systematic review of 460 peer-reviewed publications spanning two decades. The authors first define the scope as "robotic systems that utilize AR for interaction," explicitly excluding pure virtual-reality simulations, externally actuated passive objects, and concept-only papers. Using a two-stage coding process (open coding, followed by iterative refinement and cross-author validation), they identify eight design dimensions that together capture the breadth of the field.

Approaches to augmenting reality – The taxonomy distinguishes where the AR hardware is placed (on-body, on-environment, or on-robot) and what is being augmented (the robot itself or its surroundings). Crossing these two axes yields a 3 × 2 matrix that maps existing work into six distinct sub-spaces, each characterized by specific display technologies (head-mounted displays, handheld devices, spatial projectors, robot-mounted projectors, etc.) and target visualizations.
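
To make the arithmetic of this design space concrete, here is a minimal Python sketch that enumerates every placement-target pair. The enum names and string labels are hypothetical stand-ins chosen for illustration, not identifiers from the paper. The display technologies in the comments follow the ones named in the paragraph above.

```python
from enum import Enum
from itertools import product


class Placement(Enum):
    """Where the AR display hardware sits (hypothetical labels)."""
    ON_BODY = "on-body"                # e.g., head-mounted displays, handheld devices
    ON_ENVIRONMENT = "on-environment"  # e.g., spatial projectors
    ON_ROBOT = "on-robot"              # e.g., robot-mounted projectors


class Target(Enum):
    """What the AR content augments (hypothetical labels)."""
    ROBOT = "robot"
    SURROUNDINGS = "surroundings"


# Each (placement, target) pair is one cell of the design space.
CELLS = list(product(Placement, Target))

if __name__ == "__main__":
    for placement, target in CELLS:
        print(f"{placement.value} / augments {target.value}")
    # 3 placements x 2 targets = 6 cells
    print(f"{len(CELLS)} cells")
```

Listing the cells explicitly is a quick way to check a cross-product taxonomy. Any cell with no surveyed work shows up as an empty row once papers are assigned to it.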

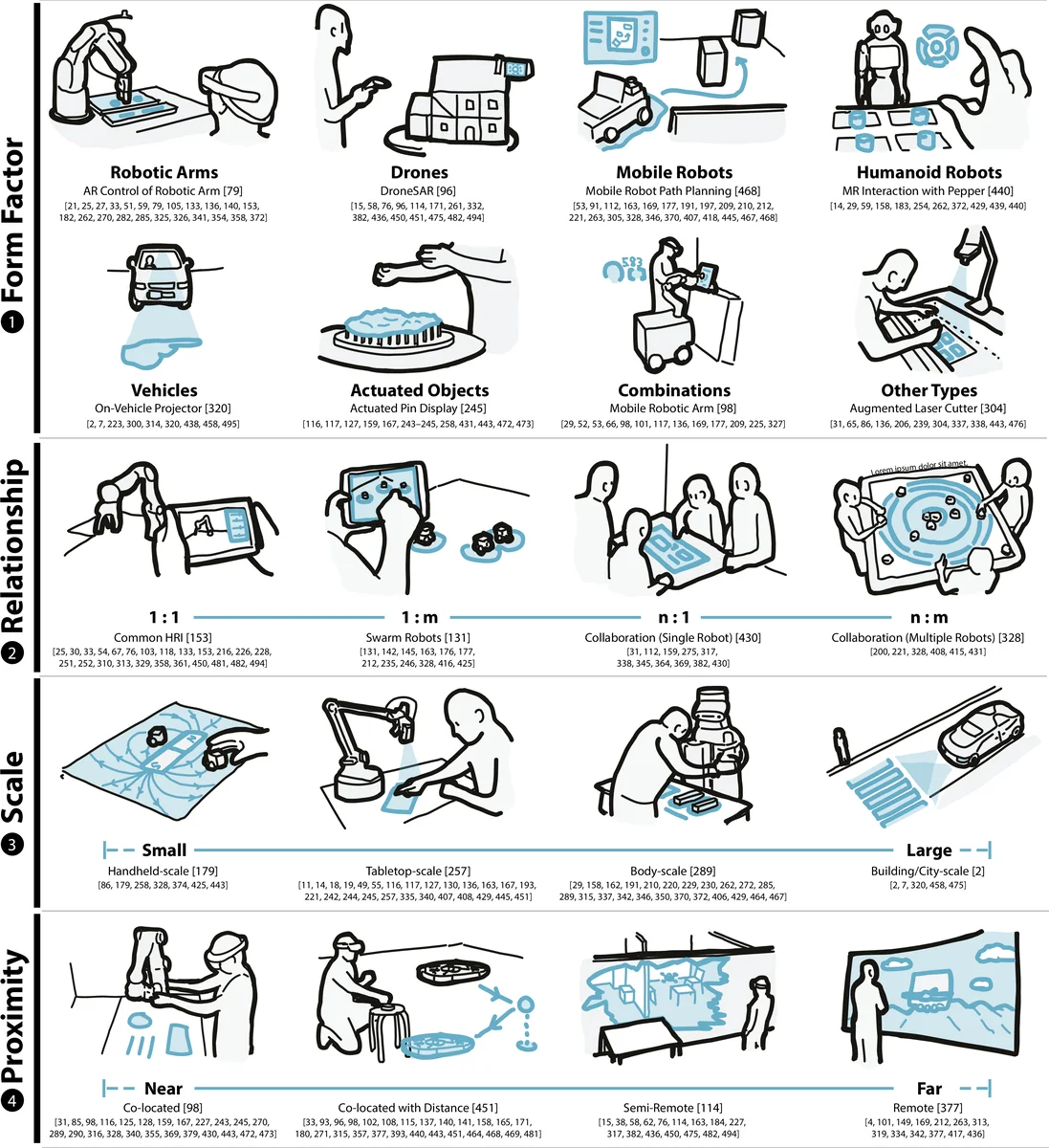

Characteristics of augmented robots – Robots are described along four axes: form factor, relational context (human‑robot, robot‑robot), scale, and proximity. The analysis shows how AR can compensate for physical constraints (e.g., limited screen size on small robots) by projecting information onto the workspace or by attaching displays to the robot’s body.

Purposes and benefits – Five primary goals emerge: (a) programming and control assistance, (b) real-time control feedback, (c) safety enhancement, (d) communication and interpretability, and (e) expressive augmentation. Safety is a recurring theme, with AR used to mark hazardous zones, render virtual boundaries, or overlay intent cues that reduce operator error.

Classification of presented information – AR content is classified by what it conveys: internal information (the robot's own state, such as sensor data), external information (information about the robot's surroundings), plan/activity (what the robot intends to do, such as planned paths or upcoming actions), and supplemental content (e.g., tutorials and guides).

Design components and visual strategies – The visual elements fall into three groups: UI widgets (menus, labels/annotations, controls/handles, monitors/displays), spatial references (points/locations, paths/trajectories, areas/boundaries, other visualizations), and embedded visual effects (anthropomorphic effects, virtual replicas/ghosts, texture-mapping effects). Each component is linked to specific interaction goals (e.g., path visualizations for trajectory planning, ghost avatars for telepresence).

Interaction techniques and modalities – Six interaction modalities are catalogued: tangible (touch), gesture, voice, proximity, gaze, and controller-based input. The authors note a trend toward multimodal combinations that improve robustness and user satisfaction.

Application domains – The taxonomy spans twelve domains: domestic/everyday use, industry, entertainment, education/training, social interaction, design/creative tasks, medical/health, remote collaboration, mobility/transportation, search and rescue, workspace/knowledge work, and data physicalization. Industrial robotics accounts for the largest share of studies, while healthcare and remote collaboration are growing areas.

Evaluation strategies – By evaluation strategy, the surveyed papers are grouped into demonstrations (97), technical evaluations (83), and user studies (122). The prevalence of user studies indicates an established emphasis on empirical validation. Studies typically combine quantitative performance metrics (latency, accuracy) with subjective user-experience measures (NASA-TLX, SUS, interviews).

The authors also discuss methodological limitations (potential bias in keyword‑based selection, exclusion of borderline papers) and mitigate them by releasing the full coding dataset and an interactive web portal for community extension. Finally, they outline key challenges and opportunities: (1) improving real‑time tracking and registration accuracy, (2) designing for multi‑user and multi‑robot collaboration, (3) conducting longitudinal studies on fatigue, safety, and trust, (4) addressing privacy, security, and regulatory concerns, and (5) democratizing low‑cost, lightweight AR hardware.
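
The released coding dataset is intended for community extension. As a rough illustration, the following minimal Python sketch shows how one coded paper might be stored and how codes could be tallied along a dimension. The `CodedPaper` class, its field names, the `tally` helper, and the placeholder paper titles are all hypothetical. They do not reflect the actual format of the authors' dataset or web portal. The sketch assumes multi-label coding, so each dimension holds a set of codes rather than a single value.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CodedPaper:
    """One surveyed paper coded along the eight dimensions (hypothetical schema).

    Each dimension is a set because this sketch assumes multi-label coding:
    a paper may, e.g., report both a technical evaluation and a user study.
    """
    title: str
    approach: set[str] = field(default_factory=set)
    robot: set[str] = field(default_factory=set)
    purpose: set[str] = field(default_factory=set)
    information: set[str] = field(default_factory=set)
    design_components: set[str] = field(default_factory=set)
    interaction: set[str] = field(default_factory=set)
    domain: set[str] = field(default_factory=set)
    evaluation: set[str] = field(default_factory=set)


def tally(papers: list[CodedPaper], dimension: str) -> Counter:
    """Count how many papers carry each code in one dimension.

    Raises AttributeError if `dimension` is not a field of CodedPaper.
    """
    counts: Counter = Counter()
    for paper in papers:
        counts.update(getattr(paper, dimension))
    return counts


if __name__ == "__main__":
    # Placeholder entries, not real surveyed papers.
    papers = [
        CodedPaper("Paper A", evaluation={"technical evaluation", "user study"}),
        CodedPaper("Paper B", evaluation={"demonstration"}),
        CodedPaper("Paper C", evaluation={"user study"}, domain={"industry"}),
    ]
    print(tally(papers, "evaluation").most_common())
```

With multi-label sets, the per-code tallies along one dimension need not sum to the number of papers. Keep this in mind when comparing category counts against the size of a coded corpus.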

In sum, this work offers a unified, interaction‑focused taxonomy that clarifies design choices, highlights prevalent research trends, and maps open research questions, thereby serving as a valuable reference for scholars and practitioners seeking to develop the next generation of AR‑enhanced robotic systems.
