Convergence of a Grassmannian Gradient Descent Algorithm for Subspace Estimation From Undersampled Data

Subspace learning and matrix factorization problems have a great many applications in science and engineering, and efficient algorithms are critical as dataset sizes continue to grow. Many relevant problem formulations are non-convex, and in a variety of contexts it has been observed that solving the non-convex problem directly is not only efficient but reliably accurate. We discuss convergence theory for a particular method: first-order incremental gradient descent constrained to the Grassmannian. The output of the algorithm is an orthonormal basis for a $d$-dimensional subspace spanned by an input streaming data matrix. We study two sampling cases: each data vector of the streaming matrix is either fully sampled or undersampled by a sampling matrix $A_t\in \mathbb{R}^{m\times n}$ with $m\ll n$. For the undersampled case, our results cover two choices of $A_t$: a Gaussian matrix or a subset of rows of the identity matrix. We propose an adaptive stepsize scheme that depends only on the sampled data and algorithm outputs. We prove that with fully sampled data, the stepsize scheme maximizes the improvement of our convergence metric at each iteration, and that the method converges from any random initialization to the true subspace, despite the non-convex formulation and orthogonality constraints. For the case of undersampled data, we establish monotonic expected improvement on the defined convergence metric for each iteration with high probability.

💡 Research Summary

The paper investigates the convergence properties of the GROUSE algorithm, a first-order incremental gradient descent method on the Grassmannian manifold for subspace estimation from streaming data. The authors consider three sampling regimes: (1) fully sampled data ($A_t = I_n$); (2) compressively sampled data, where $A_t \in \mathbb{R}^{m\times n}$ is a random Gaussian matrix with $m \ll n$; and (3) missing data, where each $A_t$ consists of a random subset of rows of the identity matrix. The problem is formulated as a non-convex optimization: over $U \in \mathbb{R}^{n\times d}$ with orthonormal columns, minimize the sum of least-squares residuals $\sum_t \min_{w} \|A_t U w - x_t\|_2^2$, where $x_t = A_t v_t$ and $v_t$ lies in the true subspace $\mathrm{span}(\bar U)$.
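The three sampling regimes and the per-sample cost can be sketched in NumPy as follows. This is a minimal illustration, not the authors' code: the helper names `sampling_matrix`, `sample_cost` and `total_cost` are hypothetical, and the $\mathcal{N}(0, 1/m)$ scaling of the Gaussian entries is an assumption, since the summary does not specify a normalization. The demo checks that the cost vanishes at the true basis $\bar U$ and is positive at a random orthonormal $U$.

```python
import numpy as np


def sampling_matrix(kind, m, n, rng):
    """Build A_t for one sampling regime (hypothetical helper)."""
    if kind == "full":
        return np.eye(n)  # A_t = I_n
    if kind == "gaussian":
        # i.i.d. N(0, 1/m) entries; the 1/m variance is an assumed normalization
        return rng.standard_normal((m, n)) / np.sqrt(m)
    if kind == "missing":
        # keep a random subset of m rows of the identity, i.e. observe m coordinates
        rows = np.sort(rng.choice(n, size=m, replace=False))
        return np.eye(n)[rows]
    raise ValueError(f"unknown sampling regime: {kind}")


def sample_cost(U, A, x):
    """min_w ||A U w - x||^2, solved by least squares."""
    w, *_ = np.linalg.lstsq(A @ U, x, rcond=None)
    r = x - A @ (U @ w)
    return float(r @ r)


def total_cost(U, As, vs):
    """Sum of per-sample residuals with x_t = A_t v_t."""
    return sum(sample_cost(U, A, A @ v) for A, v in zip(As, vs))


rng = np.random.default_rng(0)
n, d, m, T = 50, 3, 10, 20
U_bar, _ = np.linalg.qr(rng.standard_normal((n, d)))   # true subspace basis
U_rand, _ = np.linalg.qr(rng.standard_normal((n, d)))  # random orthonormal estimate
vs = [U_bar @ rng.standard_normal(d) for _ in range(T)]  # v_t in span(U_bar)

results = {}
for kind in ("full", "gaussian", "missing"):
    As = [sampling_matrix(kind, m, n, rng) for _ in range(T)]
    results[kind] = (total_cost(U_bar, As, vs), total_cost(U_rand, As, vs))
    print(f"{kind:>8}: cost at U_bar = {results[kind][0]:.2e}, at random U = {results[kind][1]:.2f}")
```

Least squares via `np.linalg.lstsq` handles all three regimes uniformly; with $m \ge d$ the matrix $A_t \bar U$ generically has full column rank, so the minimizer is unique.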

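At each iteration the algorithm computes the least-squares coefficient $w_t = \arg\min_w \|A_t U_t w - x_t\|_2^2$, then forms the projected vector $p_t = U_t w_t$, the residual $r_t = x_t - A_t p_t$, and its back-projection $\tilde r_t = A_t^\top r_t$. An adaptive step $\theta_t = \arctan(\|r_t\|/\|p_t\|)$ is chosen, which corresponds to a gradient step size $\eta_t$ with $\eta_t\sigma_t = \theta_t$, where $\sigma_t = \|\tilde r_t\|\,\|p_t\|$. The update

$$U_{t+1} = U_t + (\cos\theta_t - 1)\,\frac{p_t}{\|p_t\|}\frac{w_t^\top}{\|w_t\|} + \sin\theta_t\,\frac{\tilde r_t}{\|\tilde r_t\|}\frac{w_t^\top}{\|w_t\|}$$

is a rank-one move along a geodesic of the Grassmannian. It preserves orthonormality because the least-squares normal equations give $U_t^\top \tilde r_t = (A_t U_t)^\top r_t = 0$, so $\tilde r_t$ is orthogonal to the current subspace.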
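A single update can be sketched by transcribing these formulas into NumPy. It is an illustrative sketch, not the authors' implementation: the function name `grouse_step`, the early exit when the back-projected residual is numerically zero, and the tolerance `tol` are our own choices. The demo checks two consequences of the update: the columns stay orthonormal under both full and missing-data sampling, and with full sampling the step $\theta_t = \arctan(\|r_t\|/\|p_t\|)$ rotates $p_t/\|p_t\|$ exactly onto $x_t/\|x_t\|$ (since then $\cos\theta_t = \|p_t\|/\|x_t\|$ and $\sin\theta_t = \|r_t\|/\|x_t\|$), so $x_t$ lies in the span of $U_{t+1}$.

```python
import numpy as np


def grouse_step(U, A, x, tol=1e-12):
    """One GROUSE update U_t -> U_{t+1} with theta_t = arctan(||r_t|| / ||p_t||)."""
    w, *_ = np.linalg.lstsq(A @ U, x, rcond=None)  # w_t = argmin_w ||A_t U_t w - x_t||
    p = U @ w                                      # p_t = U_t w_t
    r = x - A @ p                                  # r_t = x_t - A_t p_t
    r_tilde = A.T @ r                              # back-projection A_t^T r_t
    norm_p, norm_r = np.linalg.norm(p), np.linalg.norm(r)
    norm_rt = np.linalg.norm(r_tilde)
    if norm_p == 0.0 or norm_rt <= tol * norm_p:
        return U                                   # nothing to fit, or x_t already explained
    theta = np.arctan(norm_r / norm_p)
    w_hat = w / np.linalg.norm(w)
    direction = (np.cos(theta) - 1.0) * p / norm_p + np.sin(theta) * r_tilde / norm_rt
    return U + np.outer(direction, w_hat)          # rank-one update


rng = np.random.default_rng(1)
n, d, m = 30, 4, 12
U_bar, _ = np.linalg.qr(rng.standard_normal((n, d)))  # true subspace basis
U, _ = np.linalg.qr(rng.standard_normal((n, d)))      # current estimate U_t
v = U_bar @ rng.standard_normal(d)                    # v_t in span(U_bar)

# Fully sampled: A_t = I_n, so x_t = v_t
U_full = grouse_step(U, np.eye(n), v)
orth_err_full = np.linalg.norm(U_full.T @ U_full - np.eye(d))
span_err_full = np.linalg.norm(v - U_full @ (U_full.T @ v))  # distance of x_t from span(U_{t+1})

# Missing data: observe m random coordinates of v_t
A = np.eye(n)[np.sort(rng.choice(n, size=m, replace=False))]
U_miss = grouse_step(U, A, A @ v)
orth_err_miss = np.linalg.norm(U_miss.T @ U_miss - np.eye(d))

print(orth_err_full, span_err_full, orth_err_miss)
```

The update only touches the direction $U_t\hat w_t$; the other $d-1$ directions of the current subspace are left unchanged, which is why the move is rank one.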

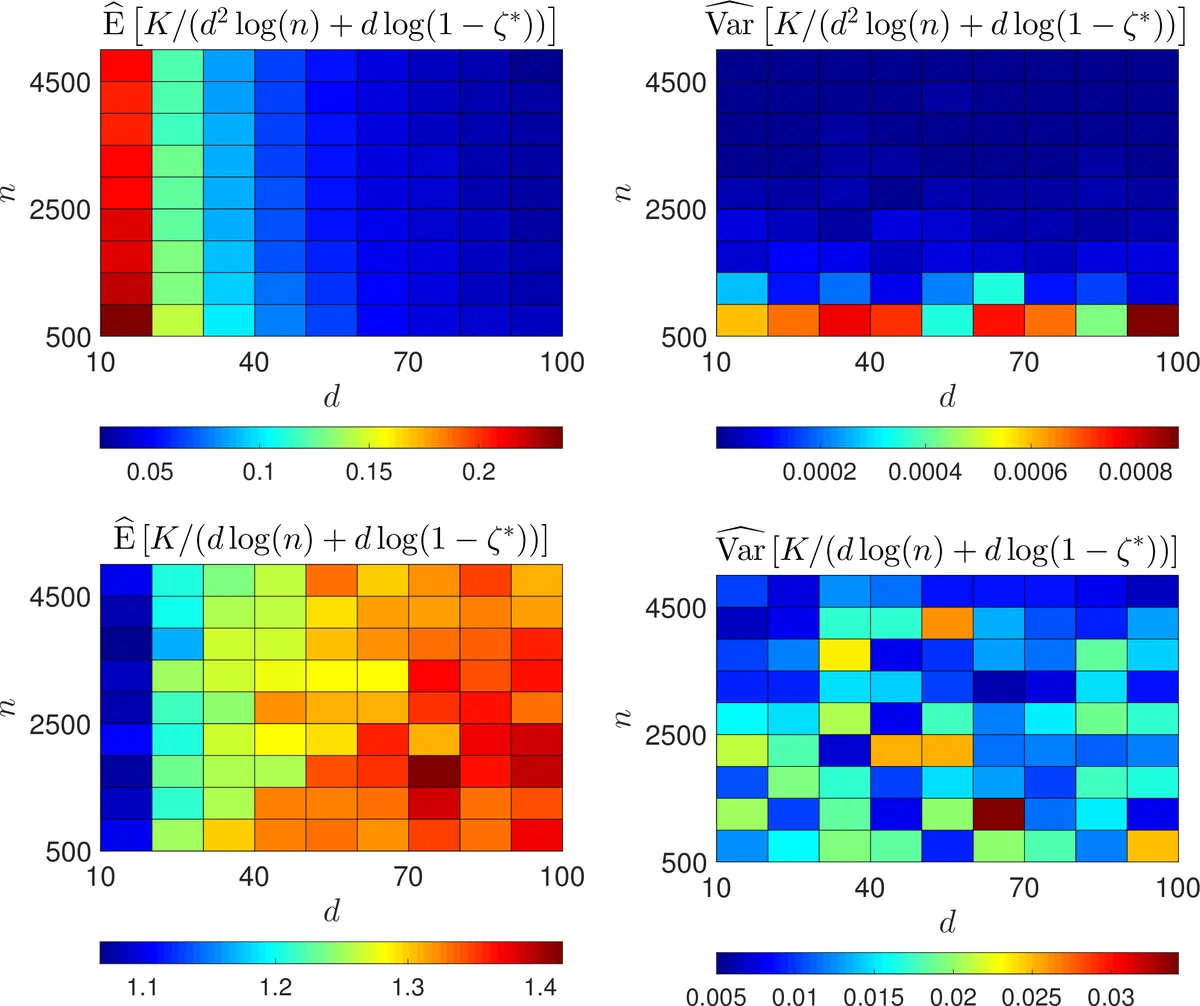

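The convergence metric is the determinant similarity $\zeta = \det(\bar U^\top U U^\top \bar U) = \prod_{k=1}^d \cos^2\phi_k$, where $\phi_k$ are the principal angles between the estimated and true subspaces. Hence $\zeta \in [0,1]$, with $\zeta = 1$ exactly when the two subspaces coincide.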
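The metric can be computed either from the determinant or from the principal angles, since the $\cos\phi_k$ are the singular values of $\bar U^\top U$. The sketch below uses hypothetical helper names and is not the authors' code. It checks that the two formulas agree, then runs a fully sampled, noiseless instance from a random start to illustrate the abstract's claim that $\zeta$ improves at every iteration. The per-step ratio it verifies, $\zeta_{t+1}/\zeta_t = \|x_t\|^2/\|p_t\|^2 = 1 + \|r_t\|^2/\|p_t\|^2$, is our own derivation for this case (the matrix determinant lemma applied to the rank-one update, using that the full-data step maps $p_t/\|p_t\|$ to $x_t/\|x_t\|$), not a formula quoted from the paper. The step is specialized to $A_t = I_n$ so the block runs on its own.

```python
import numpy as np


def zeta(U_bar, U):
    """Determinant similarity det(U_bar^T U U^T U_bar); both bases orthonormal."""
    M = U_bar.T @ U
    return float(np.linalg.det(M @ M.T))


def zeta_from_angles(U_bar, U):
    """prod_k cos^2(phi_k), with cos(phi_k) the singular values of U_bar^T U."""
    cosines = np.clip(np.linalg.svd(U_bar.T @ U, compute_uv=False), 0.0, 1.0)
    return float(np.prod(cosines ** 2))


def grouse_full_step(U, x):
    """GROUSE step for A_t = I_n with theta_t = arctan(||r_t|| / ||p_t||)."""
    w = U.T @ x                      # least-squares weights when A_t = I_n
    p = U @ w
    r = x - p
    norm_p, norm_r = np.linalg.norm(p), np.linalg.norm(r)
    if norm_p == 0.0 or norm_r <= 1e-12 * norm_p:
        return U                     # x_t already (numerically) in span(U_t)
    theta = np.arctan(norm_r / norm_p)
    w_hat = w / np.linalg.norm(w)
    direction = (np.cos(theta) - 1.0) * p / norm_p + np.sin(theta) * r / norm_r
    return U + np.outer(direction, w_hat)


rng = np.random.default_rng(2)
n, d, T = 40, 3, 400
U_bar, _ = np.linalg.qr(rng.standard_normal((n, d)))  # true subspace basis
U, _ = np.linalg.qr(rng.standard_normal((n, d)))      # random initialization

angle_gap = abs(zeta(U_bar, U) - zeta_from_angles(U_bar, U))
history = [zeta(U_bar, U)]
max_ratio_err = 0.0
for _ in range(T):
    x = U_bar @ rng.standard_normal(d)        # noiseless, fully sampled x_t = v_t
    p = U @ (U.T @ x)
    predicted = (x @ x) / (p @ p)             # ||x_t||^2 / ||p_t||^2
    U = grouse_full_step(U, x)
    history.append(zeta(U_bar, U))
    ratio = history[-1] / history[-2]
    max_ratio_err = max(max_ratio_err, abs(ratio - predicted) / predicted)

print(f"zeta_0 = {history[0]:.3e}, zeta_T = {history[-1]:.6f}")
```

Because the ratio is always at least 1, $\zeta$ never decreases in this noiseless full-data setting; the undersampled guarantees stated in the abstract are only in expectation and with high probability, which this sketch does not test.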
