Audio inpainting of music by means of neural networks

We studied the ability of deep neural networks (DNNs) to restore missing audio content based on its context, a process usually referred to as audio inpainting. We focused on gaps in the range of tens of milliseconds. The proposed DNN structure was trained on audio signals containing music and musical instruments, separately, with 64-ms long gaps. The input to the DNN was the context, i.e., the signal surrounding the gap, transformed into time-frequency (TF) coefficients. Our results were compared to those obtained from a reference method based on linear predictive coding (LPC). For music, our DNN significantly outperformed the reference method, demonstrating a generally good usability of the proposed DNN structure for inpainting complex audio signals like music.

💡 Research Summary

The paper addresses the problem of audio inpainting – the restoration of short missing segments in audio signals – by proposing a deep neural network (DNN) architecture specifically designed for gaps on the order of tens of milliseconds (approximately 64 ms). Traditional audio inpainting methods fall into two categories: (a) techniques that aim to reconstruct very short gaps (< 10 ms) using local information such as linear predictive coding (LPC) or sparse time‑frequency representations, and (b) perceptual approaches that fill longer gaps (hundreds of milliseconds to seconds) by exploiting global self‑similarity or exemplar‑based methods. The intermediate range of 10–100 ms, where non‑stationarity becomes significant yet sample‑by‑sample prediction is still plausible, has received little attention.
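To make the idea of sample-by-sample prediction from local information concrete, here is a minimal sketch of LPC-style forward extrapolation into a gap. It is not the reference implementation evaluated in the paper: the function names, the least-squares (covariance-method) fit, and the model order are all illustrative assumptions.

```python
import numpy as np

def lpc_coefficients(x, order):
    """Least-squares fit of x[n] ~ sum_k a[k] * x[n-1-k] (covariance method)."""
    # Each row holds the `order` samples preceding x[n], most recent first.
    rows = np.stack([x[n - order:n][::-1] for n in range(order, len(x))])
    targets = x[order:]
    a, *_ = np.linalg.lstsq(rows, targets, rcond=None)
    return a

def lpc_extrapolate(context, n_missing, order=2):
    """Predict `n_missing` samples following `context`, one sample at a time."""
    a = lpc_coefficients(context, order)
    history = list(context[-order:])
    predicted = []
    for _ in range(n_missing):
        past = np.array(history[-order:][::-1])  # most recent sample first
        nxt = float(np.dot(a, past))
        predicted.append(nxt)
        history.append(nxt)  # feed the prediction back in
    return np.array(predicted)

# Usage: a pure sinusoid obeys a 2nd-order linear recurrence,
# so an order-2 predictor can continue it into the "gap".
t = np.arange(600)
x = np.sin(0.1 * t)
filled = lpc_extrapolate(x[:500], n_missing=100, order=2)
```

A stationary tone is the easy case. Once the signal changes character inside the gap, a predictor fitted to the preceding context has no way to anticipate it, which is the difficulty the intermediate gap range poses.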

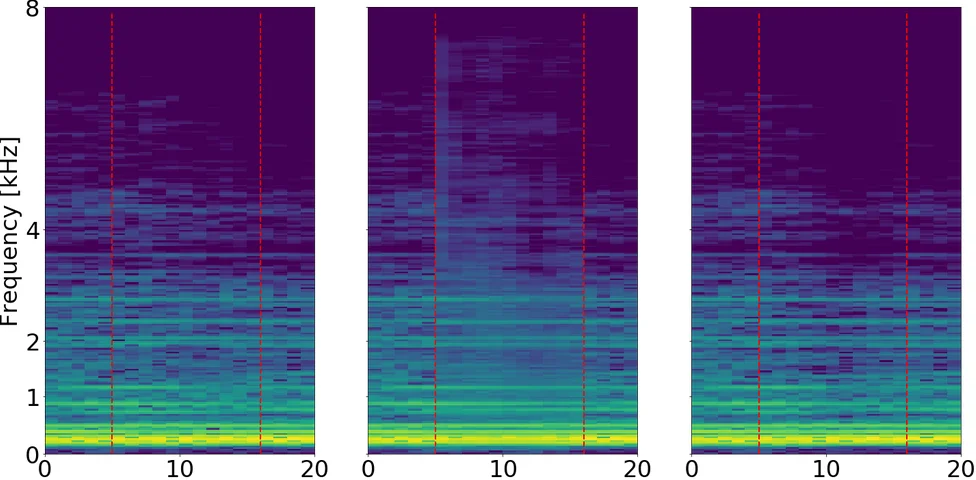

To fill this gap, the authors adapt the “context encoder” concept from image restoration to the audio domain. The input to the network consists of the short‑time Fourier transform (STFT) of the audio surrounding the missing segment. Specifically, a Hann‑windowed STFT with a window length of M = 512 samples (257 non‑redundant frequency bins) and a hop size of 128 samples (M/4) is computed for the left and right contexts; the real and imaginary parts of the two contexts are treated as four separate channels. Zero‑padding is applied to the context signals to avoid edge artifacts.
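The input construction described above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' code: the function names and the context length of 2048 samples are hypothetical, the window length of 512 samples is inferred from the hop size of M/4 = 128, and the zero-padding step is omitted for brevity.

```python
import numpy as np

def stft(signal, win_len=512, hop=128):
    """Hann-windowed STFT; returns a (win_len // 2 + 1, n_frames) complex array."""
    # Periodic Hann window.
    window = 0.5 - 0.5 * np.cos(2.0 * np.pi * np.arange(win_len) / win_len)
    n_frames = 1 + (len(signal) - win_len) // hop
    frames = np.stack(
        [signal[i * hop:i * hop + win_len] * window for i in range(n_frames)],
        axis=1,
    )
    # rfft keeps only the non-redundant bins of a real signal.
    return np.fft.rfft(frames, axis=0)

def context_features(left, right, win_len=512, hop=128):
    """Stack real/imag parts of both contexts into four channels."""
    left_tf = stft(left, win_len, hop)
    right_tf = stft(right, win_len, hop)
    return np.stack(
        [left_tf.real, left_tf.imag, right_tf.real, right_tf.imag], axis=-1
    )

# Usage with random stand-in audio (context length is an assumption).
rng = np.random.default_rng(0)
left = rng.standard_normal(2048)
right = rng.standard_normal(2048)
features = context_features(left, right)  # (freq_bins, frames, channels)
```

With a 512-sample window the real FFT yields 257 bins per frame, which matches the 257-row spectrogram the decoder produces.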

The network follows an encoder‑decoder (auto‑encoder) pipeline. The encoder comprises six convolutional layers with ReLU activations and batch normalization, compressing the four‑channel TF input into a 2048‑dimensional latent vector. The decoder begins with a fully‑connected layer that spreads the latent representation across channels, followed by five transposed‑convolution (deconvolution) layers that reconstruct a magnitude spectrogram for the missing gap (size 257 × 11 for a 64 ms gap). The model predicts only magnitude; phase is recovered separately. An initial phase estimate is obtained using the Phase Gradient Heap Integration algorithm, then refined with 100 iterations of the Griffin‑Lim algorithm, yielding a complex‑valued spectrogram that is finally transformed back to the time domain via inverse STFT.
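The phase-recovery step can be illustrated with a bare-bones Griffin-Lim loop. This sketch makes several assumptions and should not be read as the paper's pipeline: it starts from random phase instead of a Phase Gradient Heap Integration estimate, it uses a 512-sample Hann window with hop 128, and all function names are hypothetical.

```python
import numpy as np

WIN_LEN, HOP = 512, 128
# Periodic Hann window.
WINDOW = 0.5 - 0.5 * np.cos(2.0 * np.pi * np.arange(WIN_LEN) / WIN_LEN)

def stft(x):
    n_frames = 1 + (len(x) - WIN_LEN) // HOP
    frames = np.stack(
        [x[i * HOP:i * HOP + WIN_LEN] * WINDOW for i in range(n_frames)], axis=1
    )
    return np.fft.rfft(frames, axis=0)

def istft(spec):
    """Inverse STFT by windowed overlap-add, normalized by the summed squared window."""
    n_frames = spec.shape[1]
    length = WIN_LEN + HOP * (n_frames - 1)
    frames = np.fft.irfft(spec, n=WIN_LEN, axis=0)
    out = np.zeros(length)
    norm = np.zeros(length)
    for i in range(n_frames):
        sl = slice(i * HOP, i * HOP + WIN_LEN)
        out[sl] += frames[:, i] * WINDOW
        norm[sl] += WINDOW ** 2
    # Edge samples are covered by few frames and are poorly constrained;
    # practical implementations pad the signal and trim the edges.
    return out / np.maximum(norm, 1e-8)

def griffin_lim(magnitude, n_iter=100, seed=0):
    """Alternate between imposing the target magnitude and STFT consistency."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(magnitude.shape))  # random start (assumption)
    errors = []
    for _ in range(n_iter):
        signal = istft(magnitude * phase)
        spec = stft(signal)
        # Relative mismatch between the achieved and the target magnitude.
        errors.append(np.linalg.norm(np.abs(spec) - magnitude) / np.linalg.norm(magnitude))
        phase = np.exp(1j * np.angle(spec))  # keep the phase, discard the magnitude
    return istft(magnitude * phase), errors

# Usage: recover a signal from the magnitude of a two-tone test signal.
t = np.arange(WIN_LEN + HOP * 20)
target_mag = np.abs(stft(np.sin(0.2 * t) + 0.5 * np.sin(0.9 * t)))
signal, errors = griffin_lim(target_mag, n_iter=30)
```

A better initial phase estimate mainly changes where the iteration starts, so fewer refinement steps are typically needed to reach a given magnitude mismatch.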

Training minimizes a hybrid loss that combines a standard mean‑squared error (MSE) term with a normalized MSE (NMSE) term; a constant c = 5 controls the balance between the two and limits the loss's sensitivity to low‑energy signals. An L2 regularization term on the network weights (λ = 0.01) is also added. The model is implemented in TensorFlow and trained with the Adam optimizer; dropout and skip connections were tested but discarded because they did not improve performance.
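The summary does not spell out the loss formula, so the following is only one plausible way to blend MSE and NMSE with a single constant, written in NumPy for clarity. The exact functional form, the function name, and the way the L2 penalty is scaled are assumptions; only c = 5 and λ = 0.01 come from the text.

```python
import numpy as np

def hybrid_loss(target, prediction, weights=(), c=5.0, lam=0.01):
    """Assumed form: ||t - p||^2 / (1/c + ||t||^2) + lam * sum ||w||^2."""
    err = np.sum((target - prediction) ** 2)
    energy = np.sum(target ** 2)
    # High-energy targets: the 1/c term is negligible, so this behaves like NMSE.
    # Low-energy targets: the denominator approaches 1/c, so this behaves like
    # c times the squared error and the normalization cannot blow up.
    data_term = err / (1.0 / c + energy)
    # L2 penalty over all weight arrays (scaling convention is an assumption).
    reg_term = lam * sum(np.sum(w ** 2) for w in weights)
    return data_term + reg_term

# Usage with toy values.
target = np.array([3.0, 4.0])
loss = hybrid_loss(target, np.zeros(2))
```

The design motive for such a blend is that a pure NMSE divides by the target energy and therefore over-weights near-silent examples, while a pure MSE is dominated by loud ones.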

Evaluation is performed on two datasets: isolated musical instrument sounds and full‑length music tracks. Both datasets are artificially corrupted with 64 ms gaps, and the DNN is trained separately for each class. As a baseline, the authors use an LPC‑based extrapolation method (the state‑of‑the‑art technique for this gap length). Objective metrics (signal‑to‑noise ratio, log‑spectral distance) and subjective listening tests (mean opinion score) are reported. For music, the DNN outperforms LPC by roughly +3 dB in SNR, –0.8 dB in LSD, and +1.2 points in MOS, indicating a perceptually noticeable improvement. For instrument sounds, the DNN achieves comparable or slightly better results than LPC, demonstrating robustness across different timbres. Additional experiments varying the gap length (32 ms, 64 ms, 128 ms) show that the DNN’s performance degrades gracefully, whereas LPC’s quality drops sharply beyond 64 ms.
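For readers unfamiliar with the objective metrics, the sketch below shows common textbook definitions of SNR and log-spectral distance in NumPy. Definitions of LSD vary between papers (log base, power versus magnitude, averaging order), so the formulas and function names here are assumptions and may differ from those used in the evaluation.

```python
import numpy as np

def snr_db(reference, estimate):
    """Signal-to-noise ratio in dB between a reference and its reconstruction."""
    noise = reference - estimate
    return 10.0 * np.log10(np.sum(reference ** 2) / np.sum(noise ** 2))

def log_spectral_distance(ref_mag, est_mag, eps=1e-10):
    """LSD in dB for magnitude spectrograms shaped (freq_bins, frames)."""
    # Per-bin log power ratio; eps guards against log(0).
    log_ratio = 10.0 * np.log10((ref_mag ** 2 + eps) / (est_mag ** 2 + eps))
    # RMS over frequency for each frame, then mean over frames.
    per_frame = np.sqrt(np.mean(log_ratio ** 2, axis=0))
    return float(np.mean(per_frame))

# Usage: a reconstruction that is 10 % too quiet everywhere.
x = np.sin(0.1 * np.arange(1000))
print(snr_db(x, 0.9 * x))
```

Higher SNR is better, whereas lower LSD is better, which is why an improvement shows up as a positive SNR difference and a negative LSD difference.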

The authors discuss several limitations. First, phase reconstruction is handled by external algorithms rather than being learned end‑to‑end, which may limit ultimate fidelity. Second, the current approach requires separate models for each audio class; a universal model capable of handling diverse genres and instrumentations remains an open challenge. Finally, computational cost is moderate due to the fully‑connected layer (≈ 38 % of parameters), suggesting that model compression would be needed for real‑time applications.

In conclusion, the paper demonstrates that a context‑based deep neural network can effectively inpaint audio gaps in the tens‑of‑milliseconds range, surpassing traditional LPC methods especially for complex musical material. The work opens avenues for further research on integrated magnitude‑phase networks, multi‑class training, and real‑time deployment, positioning deep learning as a promising tool for practical audio restoration tasks.
