Block Factor-width-two Matrices and Their Applications to Semidefinite and Sum-of-squares Optimization

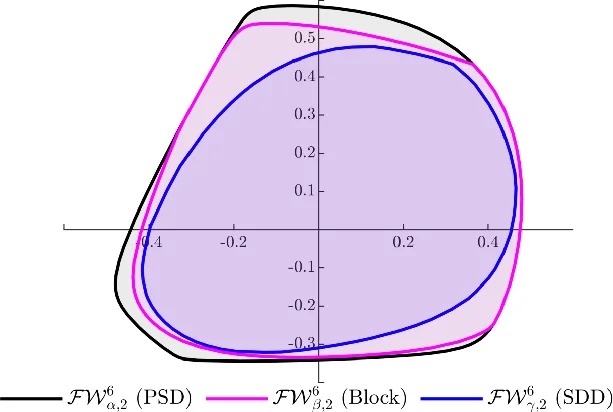

Semidefinite and sum-of-squares (SOS) optimization are fundamental computational tools in many areas, including linear and nonlinear systems theory. However, the scale of problems that can be addressed reliably and efficiently is still limited. In this paper, we introduce a new notion of block factor-width-two matrices and build a new hierarchy of inner and outer approximations of the cone of positive semidefinite (PSD) matrices. This notion is a block extension of the standard factor-width-two matrices, and allows for an improved inner-approximation of the PSD cone. In the context of SOS optimization, this leads to a block extension of the scaled diagonally dominant sum-of-squares (SDSOS) polynomials. By varying a matrix partition, the notion of block factor-width-two matrices can balance a trade-off between computational scalability and solution quality when solving semidefinite and SOS optimization problems. Numerical experiments on a range of large-scale instances confirm our theoretical findings.

💡 Research Summary

The paper introduces a novel class of matrices called block factor‑width‑two (BFW‑2) matrices, extending the well‑known factor‑width‑two (FW‑2) matrices, also known as scaled diagonally dominant (SDD) matrices, to a block‑structured setting. By partitioning an n‑by‑n matrix into non‑overlapping blocks according to a user‑chosen partition α = {k₁,…,k_p} with k₁ + … + k_p = n, the authors impose the factor‑width‑two decomposition on pairs of blocks rather than on pairs of individual entries. This yields a cone that strictly contains the traditional FW‑2 cone, providing a tighter inner approximation of the positive semidefinite (PSD) cone while still being representable by a modest number of small PSD constraints, one of size k_i + k_j per pair of blocks; these reduce to second‑order cone constraints when every block is a scalar. The paper proves that coarsening the partition (i.e., using larger blocks) improves the quality of the inner approximation, whereas finer partitions move back toward the conservatism of FW‑2 but keep each individual constraint small. Quantitative bounds on the distance between the BFW‑2 cone and the PSD cone are derived, showing that the approximation error decreases as the number of blocks is reduced.
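To make the role of the partition concrete, here is a minimal Python sketch. It assumes the decomposition used in the underlying paper, which this summary does not restate: a BFW‑2 matrix is a sum of PSD terms, each supported on the rows and columns of one pair of blocks. The function names (`block_ranges`, `pair_supports`, `cone_summary`) are hypothetical and chosen for illustration only. The code builds no optimization model; it only counts how many small PSD constraints a partition induces and how large they are.

```python
from itertools import combinations


def block_ranges(alpha):
    """Return the index range of each block for a partition alpha = [k1, ..., kp]."""
    ranges, start = [], 0
    for k in alpha:
        ranges.append(range(start, start + k))
        start += k
    return ranges


def pair_supports(alpha):
    """Index sets supporting each small PSD term: one per block pair (i, j) with i < j."""
    ranges = block_ranges(alpha)
    return [
        list(ranges[i]) + list(ranges[j])
        for i, j in combinations(range(len(alpha)), 2)
    ]


def cone_summary(alpha):
    """Count the small PSD constraints induced by alpha and report the largest one."""
    if len(alpha) < 2:
        raise ValueError("the partition needs at least two blocks")
    sizes = [len(s) for s in pair_supports(alpha)]
    return {"num_cones": len(sizes), "max_cone_size": max(sizes)}


if __name__ == "__main__":
    # Three partitions of a 6-by-6 matrix, from finest to coarsest.
    for alpha in ([1] * 6, [2, 2, 2], [3, 3]):
        print(alpha, cone_summary(alpha))
```

For a 6‑by‑6 matrix, the entrywise partition gives 15 constraints of size 2 (the FW‑2 case), three blocks of size 2 give 3 constraints of size 4, and two blocks of size 3 give a single constraint of size 6, which is the full PSD cone. Coarser partitions therefore trade many tiny cones for a few larger ones.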

In the context of sum‑of‑squares (SOS) optimization, the authors carry the block‑wise idea over to the Gram‑matrix representation of polynomials, defining block‑SDSOS (B‑SDSOS) polynomials. B‑SDSOS polynomials form a hierarchy that strictly contains the classical SDSOS class. Optimizing over them requires only small semidefinite constraints whose size is set by the partition (second‑order cone constraints in the scalar‑block case), which preserves much of the computational advantage of SDSOS while enlarging the feasible polynomial set.
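The relationship between the matrix cone and the polynomial class can be written out as follows. This is a sketch under assumed notation, none of which appears in the summary itself: $E^\alpha_i$ is the matrix selecting the rows of the $i$-th block of the partition, $m(x)$ is a vector of $N$ monomials, and $\mathcal{FW}^n_{\alpha,2}$ denotes the BFW‑2 cone. The "B‑SDSOS" label follows this summary's terminology.

```latex
% Block factor-width-two cone for a partition \alpha = \{k_1, \dots, k_p\} of n
% (assumed notation; one small PSD variable X_{ij} per pair of blocks).
\mathcal{FW}^n_{\alpha,2}
  = \Bigl\{ \sum_{1 \le i < j \le p}
        (E^\alpha_{ij})^{\mathsf T} X_{ij}\, E^\alpha_{ij}
      \;:\; X_{ij} \in \mathbb{S}_+^{k_i + k_j} \Bigr\},
\qquad
E^\alpha_{ij} = \begin{bmatrix} E^\alpha_i \\ E^\alpha_j \end{bmatrix}.

% Gram-matrix view: the cone that Q is restricted to determines the polynomial class.
p(x) = m(x)^{\mathsf T} Q\, m(x), \qquad
\begin{cases}
  Q \in \mathbb{S}_+^{N} & \Rightarrow\ p \text{ is SOS}, \\
  Q \in \mathcal{FW}^{N}_{\alpha,2} & \Rightarrow\ p \text{ is B-SDSOS}, \\
  Q \in \mathcal{FW}^{N}_{2} & \Rightarrow\ p \text{ is SDSOS}.
\end{cases}
```

With every $k_i = 1$ each $X_{ij}$ is a 2‑by‑2 PSD matrix, which recovers the scalar FW‑2 (SDD) cone and hence SDSOS.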

Extensive numerical experiments are conducted on large‑scale semidefinite programs (of dimension up to 2000) and SOS problems involving polynomials of degree 6 to 8. Various partitions are tested, such as uniform blocks of size 4, 8, and 16. The results demonstrate that BFW‑2‑based models solve up to five times faster than standard SDSOS formulations, use significantly less memory, and achieve objective‑value errors typically between 1 % and 3 %, markedly better than the errors observed with plain FW‑2 approximations. Moreover, the experiments confirm the theoretical trade‑off: larger blocks improve solution quality at a modest increase in computational effort, while finer partitions keep the problem size small but are more conservative.

The paper concludes with several promising research directions: (i) adaptive or data‑driven selection of the block partition, (ii) extension to asymmetric or overlapping block structures, and (iii) integration of the BFW‑2 framework with advanced SDP solvers, including first‑order methods and learning‑based algorithms. Overall, the work provides a powerful, scalable tool for tackling high‑dimensional SDP and SOS problems, bridging the gap between tractable SOCP‑based approximations and the expressive power of full semidefinite programming.
