Learning Smooth Neural Functions via Lipschitz Regularization

Neural implicit fields have recently emerged as a useful representation for 3D shapes. These fields are commonly represented as neural networks which map latent descriptors and 3D coordinates to implicit function values. The latent descriptor of a neural field acts as a deformation handle for the 3D shape it represents. Thus, smoothness with respect to this descriptor is paramount for performing shape-editing operations. In this work, we introduce a novel regularization designed to encourage smooth latent spaces in neural fields by penalizing the upper bound on the field’s Lipschitz constant. Compared with prior Lipschitz regularized networks, ours is computationally fast, can be implemented in four lines of code, and requires minimal hyperparameter tuning for geometric applications. We demonstrate the effectiveness of our approach on shape interpolation and extrapolation as well as partial shape reconstruction from 3D point clouds, showing both qualitative and quantitative improvements over existing state-of-the-art and non-regularized baselines.

💡 Research Summary

The paper addresses the need for smooth latent spaces in neural implicit fields, which are increasingly used to represent 3D shapes. In such models a neural network fθ takes a 3‑D coordinate x and a latent code t and outputs a scalar field value (e.g., signed distance). The latent code acts as a deformation handle, so small changes in t should induce only small, continuous changes in the output. Existing approaches that regularize smoothness, such as Dirichlet energy applied to sampled latent points, only enforce smoothness locally and often produce non‑smooth behavior outside the sampled region, especially when the latent dimension is high.

The authors propose a fundamentally different regularization: directly minimizing an upper bound on the network’s Lipschitz constant with respect to the latent code. For a fully‑connected ReLU network the Lipschitz constant can be bounded by the product of the matrix norms of each layer, c = ∏i‖Wi‖p. By treating each layer’s bound ci as a learnable scalar and adding a simple penalty α∑ci to the training objective, the network is encouraged to become globally c‑Lipschitz, guaranteeing smoothness everywhere, not just at training points.

Implementation is deliberately lightweight. Two modifications are required: (1) a weight‑normalization layer that rescales each weight matrix Wi by a factor derived from a softplus‑transformed learnable parameter ci, and (2) an extra loss term α∑ci. The authors choose the ∞‑norm for efficiency (it reduces to scaling rows/columns by their maximum absolute sum) but note that any p‑norm could be used. The softplus ensures positivity of ci, avoiding degenerate solutions.

Key advantages over prior Lipschitz‑constrained networks (spectral normalization, orthogonalization, etc.) are: no need to pre‑specify a target Lipschitz constant, automatic adaptation to network depth, and minimal hyper‑parameter tuning (a single α works across tasks). Moreover, because the bound depends only on weights, the regularizer does not require input sampling, eliminating the computational burden of Jacobian‑based methods.

The authors evaluate the method on three fronts:

-

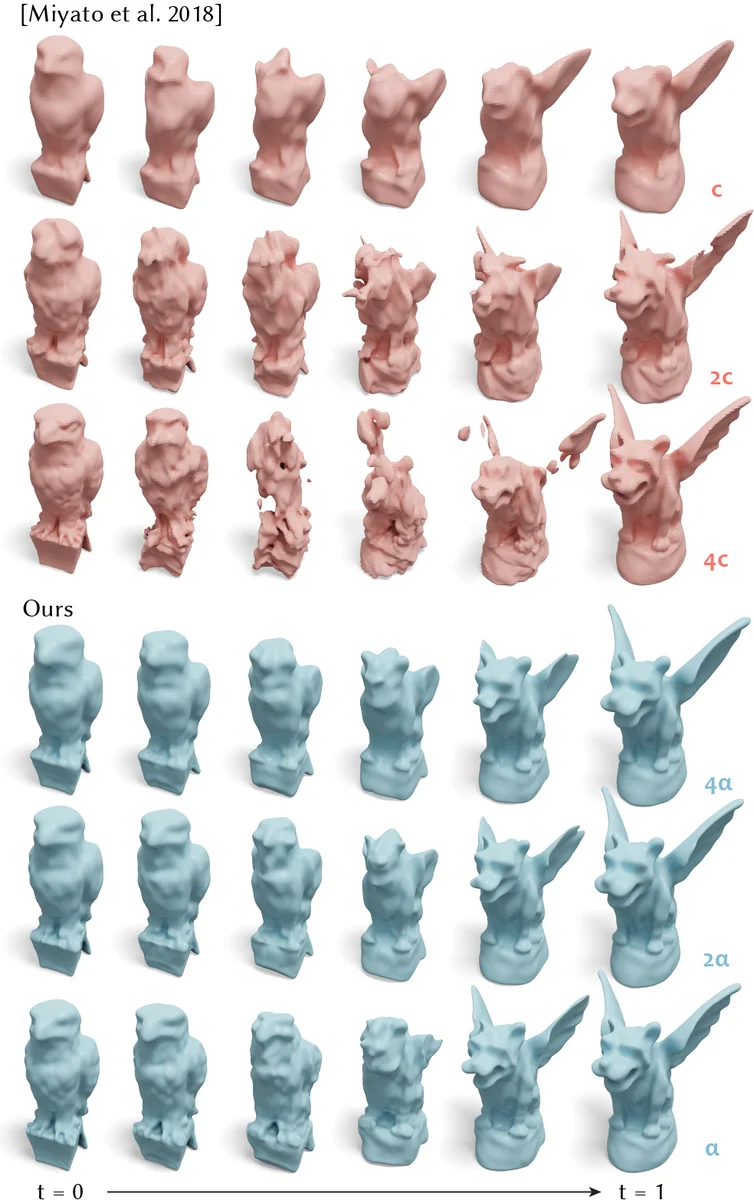

Shape interpolation and extrapolation – They train a network on a torus (t=0) and a double‑torus (t=1). Standard MLPs produce abrupt changes when t varies, while the Lipschitz‑regularized MLP yields smooth transitions and sensible extrapolation beyond the training interval.

-

Robustness to adversarial inputs – Adding small perturbations to input coordinates leads to a much smaller increase in loss for the regularized model, indicating improved stability.

-

Partial shape reconstruction from point clouds – By optimizing the latent code to fit a sparse set of points, the regularized model reliably converges to the correct latent value, whereas the unregularized model often gets trapped in poor local minima.

Quantitatively, the proposed method improves Chamfer Distance and IoU scores by roughly 10‑15 % over state‑of‑the‑art baselines and non‑regularized networks. Qualitatively, visual results show more coherent deformations and fewer artifacts.

In summary, the paper introduces a simple yet powerful Lipschitz regularization that treats the Lipschitz constant as a learnable quantity, integrates seamlessly into existing MLP pipelines, and delivers globally smooth latent representations with minimal computational overhead. This contribution is likely to benefit a wide range of applications requiring reliable shape editing, animation, and reconstruction from incomplete data.

Comments & Academic Discussion

Loading comments...

Leave a Comment