Biologically Plausible Sequence Learning with Spiking Neural Networks

Motivated by the celebrated discrete-time model of nervous activity outlined by McCulloch and Pitts in 1943, we propose a novel continuous-time model, the McCulloch-Pitts network (MPN), for sequence learning in spiking neural networks. Our model has a local learning rule, such that the synaptic weight updates depend only on the information directly accessible by the synapse. By exploiting asymmetry in the connections between binary neurons, we show that MPN can be trained to robustly memorize multiple spatiotemporal patterns of binary vectors, generalizing the ability of the symmetric Hopfield network to memorize static spatial patterns. In addition, we demonstrate that the model can efficiently learn sequences of binary pictures as well as generative models for experimental neural spike-train data. Our learning rule is consistent with spike-timing-dependent plasticity (STDP), thus providing a theoretical ground for the systematic design of biologically inspired networks with large and robust long-range sequence storage capacity.

💡 Research Summary

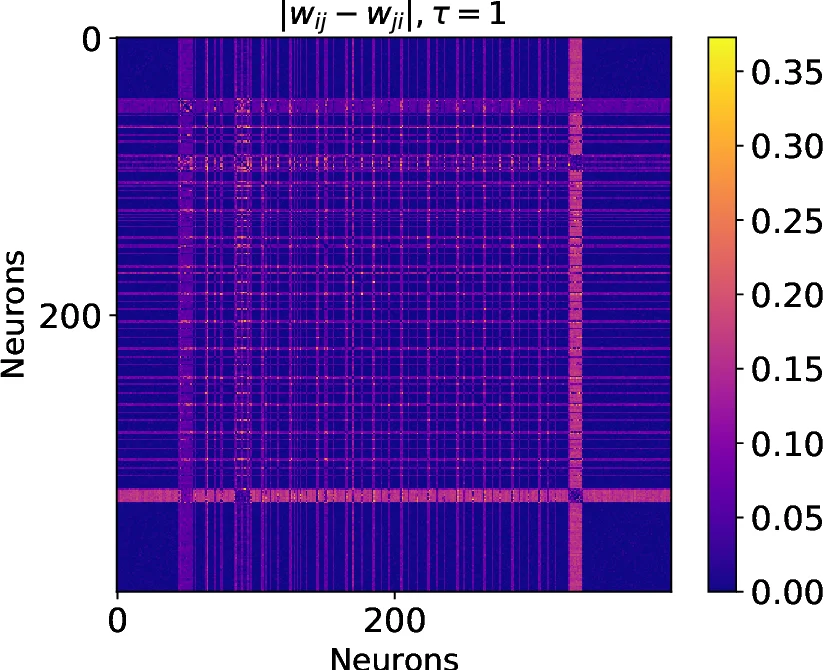

The paper introduces a continuous‑time spiking neural network model called the McCulloch‑Pitts Network (MPN), which extends the classic 1943 binary neuron model to a stochastic process suitable for sequence learning. An MPN is defined on a directed weighted graph where each node represents a binary unit that can be either “armed” (0) or “refractory” (1). The firing rate of unit i is λ_i = exp(σ_i z_i / τ), where σ_i = 1 − 2x_i encodes the current state, z_i = ∑_{j→i} w_{ji} x_j + b_i is a linear combination of presynaptic activities (the unsigned activity), and τ is a temperature parameter controlling stochasticity. Holding times are exponentially distributed, making the refractory period itself stochastic.
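
The dynamics described above can be sketched as a Gillespie-style simulation of a continuous-time Markov chain. This is a minimal illustration, not the authors' code: it assumes that each event flips exactly one unit between armed and refractory, that unit i flips at rate λ_i, and that the weights are stored as a dense matrix with `W[j, i] = w_ji`. The function names `firing_rates` and `simulate` are hypothetical.

```python
import numpy as np

def firing_rates(x, W, b, tau):
    """Rates lambda_i = exp(sigma_i * z_i / tau) for a binary state vector x."""
    sigma = 1.0 - 2.0 * x      # +1 when armed (x=0), -1 when refractory (x=1)
    z = W.T @ x + b            # z_i = sum_j w_ji x_j + b_i, with W[j, i] = w_ji
    return np.exp(sigma * z / tau)

def simulate(W, b, tau, x0, t_max, rng):
    """Return a list of (time, state) pairs up to time t_max.

    ASSUMPTION: every event flips exactly one unit, chosen with
    probability proportional to its rate (standard Gillespie scheme).
    """
    x = np.array(x0, dtype=float)
    t = 0.0
    path = [(t, x.copy())]
    while True:
        lam = firing_rates(x, W, b, tau)
        total = lam.sum()
        t += rng.exponential(1.0 / total)      # exponential holding time
        if t >= t_max:
            break
        i = rng.choice(len(x), p=lam / total)  # unit that transitions
        x[i] = 1.0 - x[i]                      # armed <-> refractory
        path.append((t, x.copy()))
    return path

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n = 4
    W = rng.normal(scale=0.5, size=(n, n))
    b = np.zeros(n)
    for t, state in simulate(W, b, tau=1.0, x0=np.zeros(n), t_max=2.0, rng=rng):
        print(f"{t:.3f}", state.astype(int))
```

Because the total exit rate is the sum of the individual rates, the time to the next event is exponential, which matches the stochastic refractory period mentioned in the summary.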

Training maximizes the log‑conditional likelihood of a given binary time series D(t). The likelihood decomposes into a transition term T_n(θ) and a holding term H_n(θ). Differentiating yields local update rules: when a spike occurs, Δw_{jk} = η τ x_j δ_k and Δb_k = η τ δ_k; when no spikes occur for a duration Δt, Δw_{jk} = −η τ x_j σ_k λ_k Δt and Δb_k = −η τ σ_k λ_k Δt. These updates depend only on the presynaptic state, the postsynaptic transition, and the postsynaptic firing rate, satisfying the biological constraint that synaptic changes are driven by locally available information.
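
The two update rules can be sketched in NumPy as follows. This is an illustrative reading of the formulas in the summary rather than the authors' implementation. The summary does not define δ_k, so the sketch assumes δ_k = σ_k evaluated just before unit k transitions; under that assumption both rules are proportional to the gradient of log λ_k − λ_k Δt with the same constant. The function names and the layout `W[j, k] = w_jk` are hypothetical.

```python
import numpy as np

def rates(x, W, b, tau):
    """Return (sigma, lambda) for binary state x, with W[j, k] = w_jk."""
    sigma = 1.0 - 2.0 * x
    z = W.T @ x + b
    return sigma, np.exp(sigma * z / tau)

def holding_update(x, W, b, tau, eta, dt):
    """No spikes for duration dt while the network sits in state x.

    dw_jk = -eta * tau * x_j * sigma_k * lambda_k * dt
    db_k  = -eta * tau * sigma_k * lambda_k * dt
    Updates W and b in place (both must be float arrays).
    """
    sigma, lam = rates(x, W, b, tau)
    g = sigma * lam * dt
    W -= eta * tau * np.outer(x, g)
    b -= eta * tau * g

def spike_update(x, k, W, b, tau, eta):
    """Unit k transitions; x is the state just before the transition.

    dw_jk = eta * tau * x_j * delta_k,  db_k = eta * tau * delta_k
    ASSUMPTION: delta_k = sigma_k = 1 - 2 * x_k (pre-transition sign).
    """
    delta_k = 1.0 - 2.0 * x[k]
    W[:, k] += eta * tau * x * delta_k
    b[k] += eta * tau * delta_k
```

For one observed segment, a caller would apply `holding_update` for the time spent in the current state, then `spike_update` for the unit that changed, then flip that unit in `x`. Both functions read only the presynaptic state, the postsynaptic sign, and the postsynaptic rate, which is the locality property the summary describes.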

The authors analytically connect these updates to spike‑timing‑dependent plasticity (STDP). Under simplifying assumptions (two neurons, constant background rates, small timing differences ε), they prove that the expected weight change follows an exponential STDP curve: E
