Self-Directed Online Machine Learning for Topology Optimization

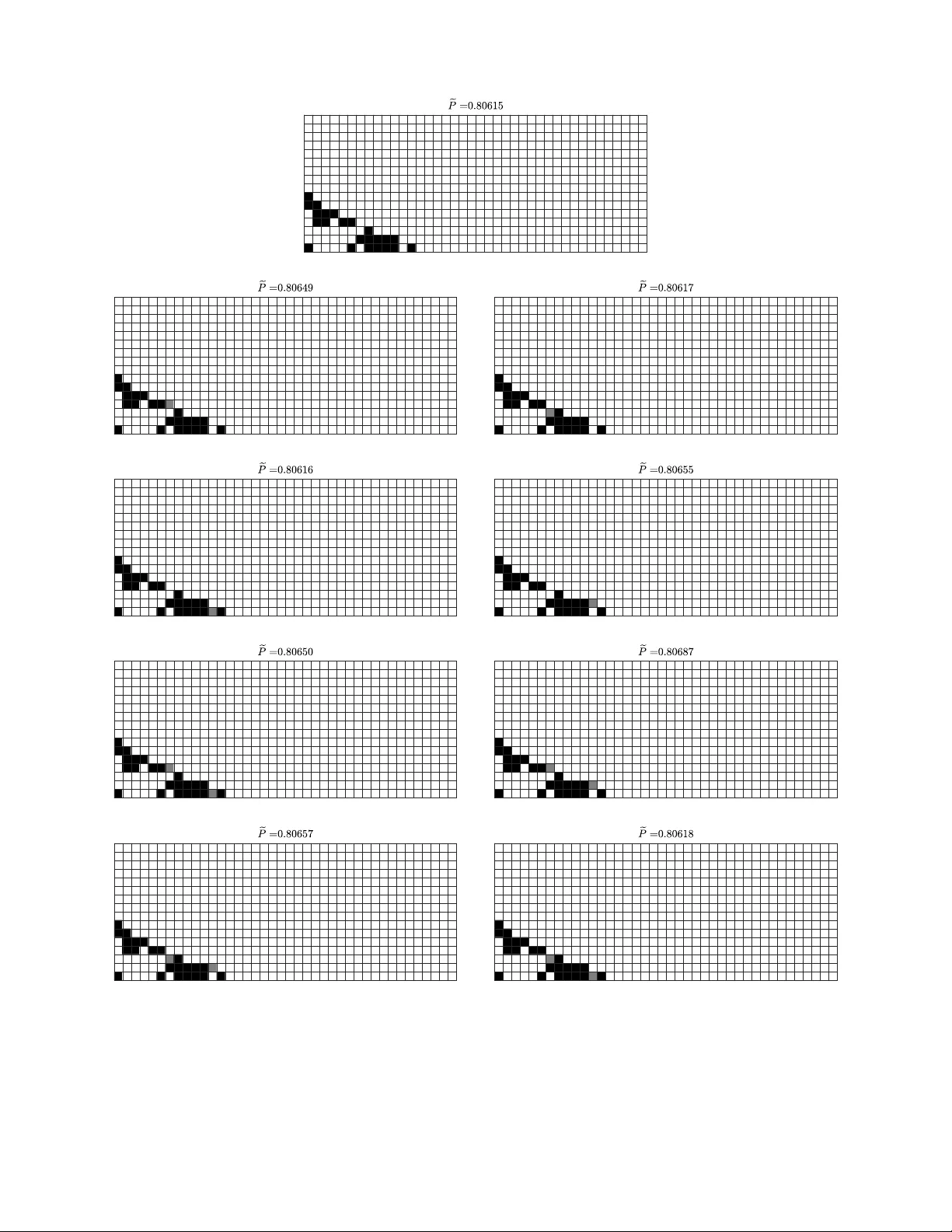

Topology optimization, which optimally distributes materials in a given domain, requires non-gradient optimizers to solve highly complicated problems. However, with hundreds of design variables or more involved, solving such problems would require millio…

Authors: Changyu Deng, Yizhou Wang, Can Qin