Speaker Diarization with LSTM

For many years, i-vector based audio embedding techniques were the dominant approach for speaker verification and speaker diarization applications. However, mirroring the rise of deep learning in various domains, neural network based audio embeddings…

Authors: Quan Wang, Carlton Downey, Li Wan

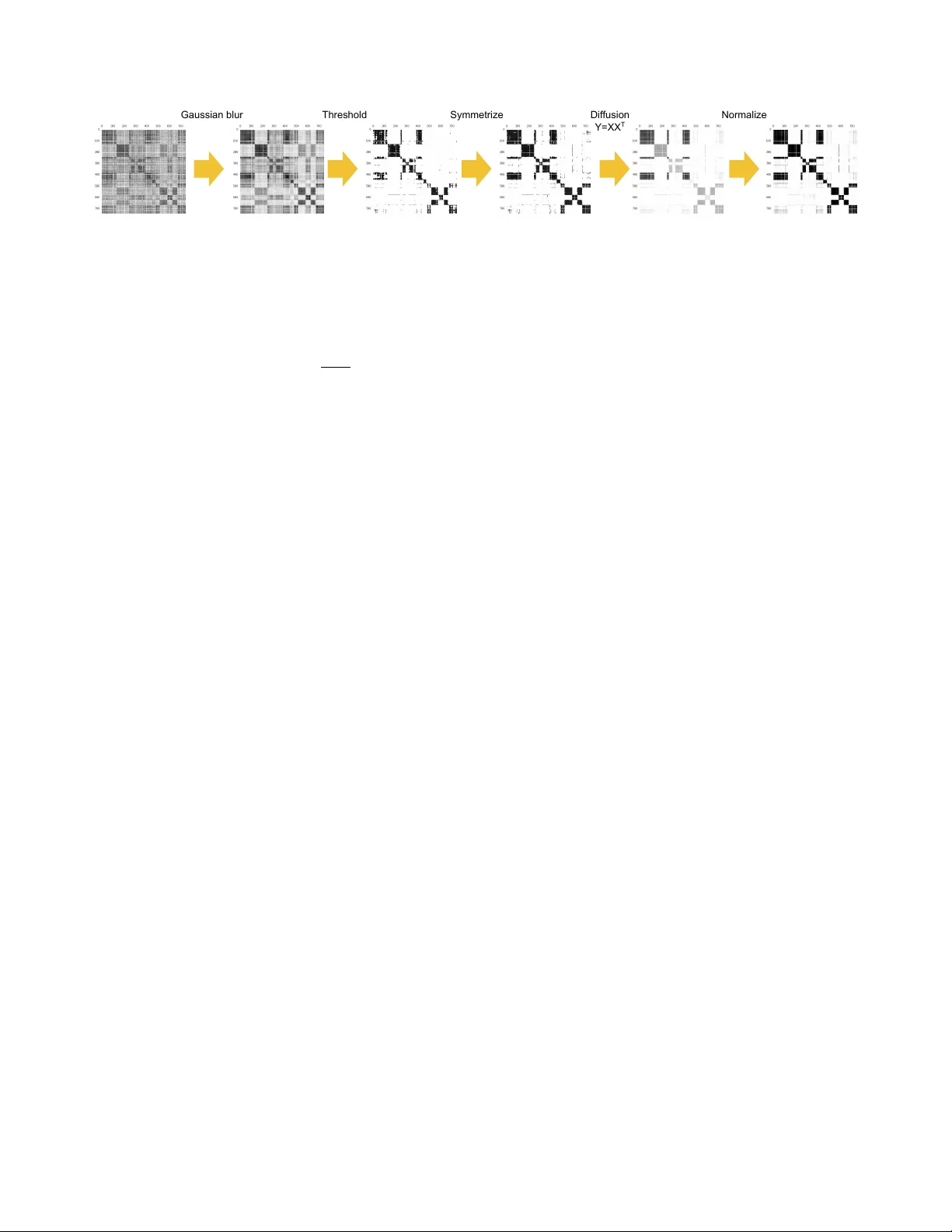

SPEAKER DIARIZA TION WITH LSTM Quan W ang 1 Carlton Downe y 2 Li W an 1 Philip Andr e w Mansfield 1 Ignacio Lopez Mor eno 1 1 Google Inc., USA 2 Carnegie Mellon Uni v ersity , USA 1 { quanw , liwan , memes , elnota } @google.com 2 cmdowney@cs.cmu.edu ABSTRA CT For many years, i-vector based audio embedding techniques were the dominant approach for speaker verification and speak er diarization applications. Howe ver , mirroring the rise of deep learning in vari- ous domains, neural network based audio embeddings, also known as d-vectors , have consistently demonstrated superior speaker veri- fication performance. In this paper, we build on the success of d- vector based speaker verification systems to dev elop a ne w d-vector based approach to speaker diarization. Specifically , we combine LSTM-based d-vector audio embeddings with recent work in non- parametric clustering to obtain a state-of-the-art speaker diarization system. Our system is ev aluated on three standard public datasets, suggesting that d-vector based diarization systems of fer significant advantages over traditional i-vector based systems. W e achiev ed a 12.0% diarization error rate on NIST SRE 2000 CALLHOME, while our model is trained with out-of-domain data from voice search logs. Index T erms — Speaker diarization, deep learning, audio em- bedding, LSTM, spectral clustering 1. INTR ODUCTION Speaker diarization is the process of partitioning an input audio stream into homogeneous segments according to the speaker iden- tity . It answers the question “ who spoke when ” in a multi-speaker en vironment. It has a wide variety of applications including multi- media information retrieval, speaker turn analysis, and audio pro- cessing. In particular , the speaker boundaries produced by diariza- tion systems hav e the potential to significantly improve acoustic speech recognition (ASR) accuracy . A typical speaker diarization system usually consists of four components: (1) Speech segmentation, where the input audio is se g- mented into short sections that are assumed to ha ve a single speak er , and the non-speech sections are filtered out; (2) Audio embedding extraction, where specific features such as MFCCs [ 1 ], speaker fac- tors [ 2 ], or i-vectors [ 3 , 4 , 5 ] are extracted from the segmented sec- tions; (3) Clustering, where the number of speakers is determined, and the extracted audio embeddings are clustered into these speak- ers; and optionally (4) Resegmentation [ 6 ], where the clustering re- sults are further refined to produce the final diarization results. In recent years, neural network based audio embeddings (d- vectors) have seen wide-spread use in speaker verification applica- tions [ 7 , 8 , 9 , 10 , 11 ], often significantly outperforming previously state-of-the-art techniques based on i-vectors. Howe ver , most of these applications belong to text-dependent speaker verification, where the speaker embeddings are extracted from specific detected More information of this work can be found at: https://google. github.io/speaker- id/publications/LstmDiarization keyw ords [ 12 , 13 ]. In contrast, speaker diarization requires text- independent embeddings which work on arbitrary speech. In this paper, we explore a text-independent d-vector based ap- proach to speaker diarization. W e leverage the work of [ 11 ] to train an LSTM-based text-independent speaker verification model, then combine this model with recent work in non-parametric spectral clustering algorithm to obtain a state-of-the-art speaker diarization system. While sev eral authors ha ve had explored using neural network embeddings for diarization tasks, their work has largely focused on using feed-forward DNNs to directly perform diarization. For exam- ple, [ 14 ] uses DNN embeddings trained on PLD A-inspired loss. In contrast, our work uses RNNs (specifically LSTMs [ 15 ]), which bet- ter capture the sequential nature of audio signals, and our generalized end-to-end training architecture directly simulates the enroll-verify run-time logic. There have been sev eral attempts to apply spectral clustering [ 16 ] to the speaker diarization problem [ 17 , 3 ]. Howe ver , to the authors’ knowledge, our work is the first to combine LSTM-based d-vector embeddings with spectral clustering. Furthermore, as part of our spectral clustering algorithm, we present a nov el sequence of affinity matrix refinement steps which act to de-noise the affinity matrix, and are crucial to the success of our system. The remainder of this paper is organized as follows: In Sec. 2 , we describe how the LSTM-based text-independent speaker verifi- cation model trained with the framework in [ 11 ] can be adapted to featurize raw audio data and prepare it for clustering. In Sec. 3 , we describe four dif ferent clustering algorithms and discuss the pros and cons of each in the context of speaker diarization, culminating with a modified spectral clustering algorithm. Experimental results and discussions are presented in Sec. 4 , and conclusions are in Sec. 5 . 2. DIARIZA TION WITH D-VECTORS W an et al. recently introduced an LSTM-based [ 15 ] speaker embed- ding network for both text-dependent and text-independent speaker verification [ 11 ]. Their model is trained on fixed-length segments extracted from a large corpus of arbitrary speech. They sho wed that the d-v ector embeddings produced by such networks usually signifi- cantly outperform i-v ectors in an enrollment-verification 2-stage ap- plication. W e now describe ho w this model can be modified for pur - poses of speaker diarization. The flo wchart of our diarization system is provided in Fig. 1 . In this system, audio signals are first transformed into frames of width 25ms and step 10ms, and log-mel-filterbank energies of dimension 40 are extracted from each frame as the network input. W e build sliding windows of a fixed length on these frames, and run the LSTM network on each window . The last-frame output of the LSTM is then used as the d-vector representation of this sliding windo w . …… sliding windows window step window size …… d-vectors …… segments …… diarization results Run LSTM Aggregate Cluster Process Fig. 1 : A flowchart of our d-v ector based diarization system. W e use a V oice Activity Detector (V AD) to determine speech segments from the audio, which are further divided into smaller non- overlapping segments using a maximal segment-length limit ( e.g. 400ms in our experiments), which determines the temporal resolu- tion of the diarization results. For each segment, the corresponding d-vectors are first L2 normalized, then averaged to form an embed- ding of the segment. The above process serves to reduce arbitrary length audio input into a sequence of fixed-length embeddings. W e can now apply a clustering algorithm to these embeddings in order to determine the number of unique speakers, and assign each part of the audio to a specific speaker . 3. CLUSTERING In this section, we introduce the four clustering algorithms that we integrated into our diarization system. W e place particular focus on the spectral offline clustering algorithm, which significantly outper- formed the alternativ e approaches across experiments. W e note that clustering algorithms can be separated into two cat- egories according to the run-time latenc y: • Online clustering : A speaker label is immediately emitted once a segment is a vailable, without seeing future se gments. • Offline clustering : Speaker labels are produced after the em- beddings of all segments are a vailable. Offline clustering algorithms typically outperform Online clustering algorithms due to the additional contextual information av ailable in the of fline setting. Furthermore, a final rese gmentation step can only be applied in the of fline setting. Nonetheless, the choice between on- line and offline depends primarily on the nature of the application — where the system is intended to be deployed. For example, latency- sensitiv e applications such as live video analysis typically restrict the system to online clustering algorithms. 3.1. Nai ve online clustering This is a prototypical online clustering algorithm. W e apply a thresh- old on the similarities between embeddings of se gments. T o be con- sistent with the generalized end-to-end training architecture [ 11 ], co- sine similarity is used as our similarity metric. In this clustering algorithm, each cluster is represented by the centroid of all its corresponding embeddings. When a new segment embedding is available, we compute its similarities to centroids of all existing clusters. If they are all smaller than the threshold, then create a new cluster containing only this embedding; otherwise, add this embedding to the most similar cluster and update the centroid. 3.2. Links online clustering Links is an online clustering method we dev eloped to improv e upon the naiv e approach. It estimates cluster probability distributions and models their substructure based on the embedding vectors received so far . The technical details are described in a separate paper [ 18 ]. 3.3. K-Means offline clustering Like in many diarization systems [ 19 , 3 , 20 ], we integrated the K- Means clustering algorithm with our system. Specifically , we use K- Means++ for initialization [ 21 ]. T o determine the number of speak- ers e k , we use the “elbow” of the deriv ati ves of conditional Mean Squared Cosine Distances 1 (MSCD) between each embedding to its cluster centroid: e k = arg max k ≥ 1 | MSCD 0 ( k ) | . (1) 3.4. Spectral offline clustering Our spectral clustering algorithm consists of the following steps: 1. Construct the affinity matrix A , where A ij is the cosine sim- ilarity between i th and j th segment embedding when i 6 = j , and the diaginal elements are set to the maximal value in each row: A ii = max j 6 = i A ij . 2. Apply the following sequence of refinement operations on the affinity matrix A : (a) Gaussian Blur with standard deviation σ ; (b) Row-wise Thresholding: For each row , set elements smaller than this row’ s p -percentile to 0; 2 (c) Symmetrization: Y ij = max( X ij , X j i ) ; (d) Diffusion: Y = X X T ; (e) Row-wise Max Normalization: Y ij = X ij / max k X ik . These refinements act to both smooth and denoise the data in the similarity space as shown in Fig. 2 , and are crucial to the success of the algorithm. The refinements are based on the temporal locality of speech data — contiguous speech segments should ha ve similar embeddings, and hence similar values in the af finity matrix. W e now provide the intuition behind each of these opera- tions: The Gaussian blur acts to smooth the data, and re- duce the effect of outliers. Row-wise thresholding serves to zero-out af finities between embeddings belonging to two dif- ferent speakers. Symmetrization restores matrix symmetry which is crucial to the spectral clustering algorithm. The dif- fusion steps draws inspiration from the Dif fusion Maps algo- rithm [ 22 ], and serves to sharpen the image resulting in clear boundaries between sections of the af finity matrix belonging to distinct speakers. Finally , the row-wise max normalization serves to rescale the spectrum of the matrix to ensure undesir- able scale ef fects do not occur during the subsequent spectral clustering step. 1 W e define cosine distance as d ( x, y ) = 1 − cos( x, y ) / 2 . 2 In practice, it’ s better to use soft thresholding: scale these elements by a small multiplier such as 0 . 01 . Gaussian blur Threshold Symmetrize Diffusion Y=XX T Normalize Fig. 2 : Refinement operations on the affinity matrix. 3. After all refinement operations have been applied, perform eigen-decomposition on the refined affinity matrix. Let the n eigen-values be: λ 1 > λ 2 > · · · > λ n . W e use the maximal eigen-gap to determine the number of clusters e k : e k = arg max 1 ≤ k ≤ n λ k λ k +1 . (2) 4. Let the eigen-vectors corresponding to the largest e k eigen- values be v 1 , v 2 , · · · , v e k . W e replace the i th se gment embed- ding by the corresponding dimension in these eigen-vectors: e i = [ v 1 i , v 2 i , · · · , v e ki ] . Then we use the same K-Means algorithm in Sec. 3.3 to cluster these new embeddings, and produce speaker labels. 3.5. Discussion Speech data analysis is an extremely challenging problem domain, and con ventional clustering algorithms such as K-Means often per- form poorly . This is due to a number of unfortunate properties in- herent to speech data, which include: (i) Non-Gaussian Distributions : Speech data are often Non- Gaussion. In this setting, the centroid of a cluster (central to K-Means clustering) is not a sufficient representation. (ii) Cluster Imbalance : In speech data, it is often the case that one speaker will speak often, while other speakers will speak rarely . In this setting, K-Means may incorrectly split large clusters into sev eral smaller clusters. (iii) Hierarchical Structure : Speakers fall into various groups according to gender , age, accent, etc. This structure is prob- lematic since the difference between a male and a female speaker is much larger than the difference between two fe- male speakers. This makes it difficult for K-Means to dis- tinguish between clusters corresponding to groups, and clus- ters corresponding to distinct speakers. In practice, this often causes K-Means to incorrectly cluster all embeddings corre- sponding to male speakers into one cluster , and all embed- dings corresponding to female speakers into another . The problems caused by these properties are not limited to K- Means clustering, but are endemic to most parametric clustering al- gorithms. Fortunately , these problems can be mitigated by employ- ing a non-parametric connection-based clustering algorithm such as spectral clustering. 4. EXPERIMENTS 4.1. Models W e run experiments with all combinations of both i-vector and d- vector models, with the four clustering algorithms discussed in Sec. 3 . Both models are trained on an anonymized collection of voice searches, which has around 36M utterances and 18K speakers. The i-vector model is trained using 13 PLP coefficients with delta and delta-delta coefficients. The GMM-UBM includes 512 Gaussians, and the total variability matrix includes 100 eigen- vectors. The final i-vectors are reduced to 50-dimensional using LD A. The d-vector model is a 3-layer LSTM network with a final lin- ear layer . Each LSTM layer has 768 nodes, with projection [ 23 ] of 256 nodes. Our V oice Activity Detection (V AD) model is a very small GMM model using the same PLP features as i-vector . It only has two full covariance Gaussians: one for speech, and one for non-speech. W e found this simple V AD generalizes better across domains (from queries to telephone) for diarization than CLDNN [ 24 ] V AD models. 4.2. Datasets W e report Diarization Error Rates (DER) on three standard public datasets: (1) CALLHOME American English [ 25 ] (LDC97S42 + LDC97T14); (2) 2003 NIST Rich T ranscription (LDC2007S10), the English conv ersational telephone speech (CTS) part; and (3) 2000 NIST Speaker Recognition Ev aluation (LDC2001S97), Disk-8. The first two datasets are English only , and are relativ ely smaller . Thus we use these two datasets to compare dif ferent algorithms. The third dataset is used by most diarization papers, and is usu- ally directly referred to as “CALLHOME” in literature. It contains 500 utterances distributed across six languages: Arabic, English, German, Japanese, Mandarin, and Spanish. 4.3. Experiment setup Our diarization ev aluation system is based on the pyannote.metrics library [ 26 ]. The CALLHOME American English dataset has a default 20- vs-20 utterances division for Dev-vs-Ev al. For NIST R T -03 CTS, we randomly divide the 72 utterances into 14-vs-58 Dev and Ev al sets. F or each diarization system, we tune the parameters such as V oice Activity Detector (V AD) threshold, LSTM window size/step (Fig. 1 ), and clustering parameters on the Dev set, and report the DER on the Eval set. For NIST R T -03 CTS, we only report DERs based on those pro- vided un-partitioned ev aluation map (UEM) files. For the other two datasets, as is the standard con vention in literature [ 2 , 3 , 4 , 6 , 14 , 27 ], we tolerate errors less than 250ms in locating segment boundaries. As is typical, for each audio file, multiple channels are merged into a single channel [ 3 , 6 , 20 ], and we do not process the parts that are before the first annotation or after the last annotation. Addi- tionally , as is standard in literature, we exclude overlapped speech (multiple speakers speaking at the same time) from our ev aluation. For offline clustering algorithms, we constrain the system to produce at least 2 speakers. T able 1 : DER (%) on two English-only datasets for different embeddings and clustering algorithms. Embedding Clustering CALLHOME American English Eval NIST R T -03 English CTS Eval Confusion F A Miss T otal Confusion F A Miss T otal i-vector Naiv e 26.41 2.40 3.55 32.36 35.35 4.66 2.62 42.63 Links 25.40 31.36 33.56 40.48 K-Means 22.86 28.81 24.38 31.66 Spectral 14.59 20.54 13.84 21.12 d-vector Naiv e 12.41 1.94 4.51 18.87 18.76 4.09 4.45 27.30 Links 11.02 17.47 18.56 27.10 K-Means 7.29 13.75 7.80 16.34 Spectral 6.03 12.48 3.76 12.30 T able 2 : DER (%) on NIST SRE 2000 CALLHOME. Since we didn’t do rese gmentation, we report others’ work by listing both with & without V ariational Bayesian (VB) resegmentation [ 6 ]. Note that unlike others’ work, our model is trained with out-of-domain data (English voice search vs. multilingual telephone speech). Method Confusion F A Miss T otal d-vector + spectral 12.0 2.2 4.6 18.8 Castaldo et al. [ 2 ] 13.7 — — — Shum et al. [ 3 ] 14.5 — — — Senoussaoui et al. [ 4 ] 12.1 — — — Sell et al. [ 6 ] (+VB) 13.7 (11.5) — — — Romero et al. [ 14 ] (+VB) 12.8 (9.9) — — — 4.4. Results Our experimental results are shown in T able 1 , 2 and 3 . W e report the total DER together with its three components: False Alarm (F A), Miss, and Confusion. F A and Miss are mostly from V oice Activity Detection errors, and partly from the aggregation from frame-level i-vectors or window-le vel d-vectors to segments. The F A and Miss differences between i-vector and d-vector are due to their different window sizes/steps and aggre gation logics. In T able 1 , we can see that d-vector based diarization systems significantly outperform i-vector based systems. For d-vector sys- tems, the optimal sliding window size and step are 240ms and 120ms, respectiv ely . W e also observe that as expected, offline diarization produces significantly better results than online diarization. Specifically , on- line diarization predicts the incorrect number of speakers much more frequently than offline diarization. This problem could potentially be mitigated by the addition of a “burn-in” stage before entering the online mode. In T able 2 , we compare our d-vector + spectral clustering system with others’ work on the same dataset. Though our LSTM model is completely trained on out-of-domain and English-only data, we can still achiev e state-of-the-art performance on this multilingual dataset. The performance could potentially be further improved by using in-domain training data and adding a final resegmentation step. Additionally , in T able 3 , we followed the same practice in [ 27 ] to evaluate our system on a subset of 109 utterances from CALL- HOME American English that hav e 2 speakers (called CH-109 in [ 20 ]). Number of speakers is fixed to 2 for this ev aluation. 4.5. Discussion Though we listed DER metrics from different papers in T able 2 and 3 , we find that it is difficult to fully align these numbers, an unfor- T able 3 : DER (%) on CALLHOME American English 2-speaker subset (CH-109). Method Confusion F A Miss T otal d-vector + spectral 5.97 2.51 4.06 12.54 Zaj ´ ıc et al. [ 27 ] 7.84 — — — tunately common problem in the diarization community . This is due primarily to the large number of moving parts required for a func- tional diarization pipeline. For example, different teams use differ - ent V oice Acti vity Detection marks (not publicly available), different training datasets, and different De v sets for parameter tuning. The e valuation protocols and software also differ from paper to paper . Most teams exclude F A and Miss from their ev aluations, and directly refer to Confusion as their DER. Ho we ver , we observed that a poor V AD with high Miss usually filters out the difficult parts in the speech, and makes the clustering problem much easier . Some papers like [ 20 ] use the non-standard Speaker Clustering Errors in frame percentage as their metric, and also exclude F A and Miss from this error . Additionally , it’ s unclear ho w o verlapped speech is handled in some papers. In our experiments, we do our best to ensure the comparisons are as fair as possible, and a void tuning parameters on Ev al sets. 5. CONCLUSIONS In this paper , we built on the success of d-vector based speaker verifi- cation systems to dev elop a new d-v ector based approach to speaker diarization. Specifically , we combined LSTM-based d-vector audio embeddings with recent work in non-parametric clustering to obtain a state-of-the-art speaker diarization system. W e conducted exper - iments on four clustering algorithms combined with both i-vectors and d-vectors, and reported the performance on three standard pub- lic datasets: CALLHOME American English, NIST R T -03 English CTS, and NIST SRE 2000. In general, we observed that d-vector based systems achiev e significantly lower DER than i-vector based systems. 6. A CKNO WLEDGEMENTS W e would like to thank Dr . Herv ´ e Bredin for the continuous support with the pyannote.metrics library . W e would like to thank Dr . Gregory Sell and Prof. Pietro Laface for helping us understand the ev aluation datasets. W e would like to thank Y ash Sheth and Richard Rose for the helpful discussions. 7. REFERENCES [1] Patrick Kenny , Douglas Reynolds, and Fabio Castaldo, “Di- arization of telephone conv ersations using factor analysis, ” IEEE Journal of Selected T opics in Signal Processing , vol. 4, no. 6, pp. 1059–1070, 2010. [2] Fabio Castaldo, Daniele Colibro, Emanuele Dalmasso, Pietro Laface, and Claudio V air , “Stream-based speaker segmen- tation using speaker factors and eigen voices, ” in Acoustics, Speech and Signal Processing , 2008. ICASSP 2008. IEEE In- ternational Confer ence on . IEEE, 2008, pp. 4133–4136. [3] Stephen H Shum, Najim Dehak, R ´ eda Dehak, and James R Glass, “Unsupervised methods for speaker diarization: An in- tegrated and iterati ve approach, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 21, no. 10, pp. 2015– 2028, 2013. [4] Mohammed Senoussaoui, Patrick K enny , Themos Stafylakis, and Pierre Dumouchel, “ A study of the cosine distance- based mean shift for telephone speech diarization, ” IEEE/A CM T ransactions on Audio, Speech and Language Processing (T ASLP) , v ol. 22, no. 1, pp. 217–227, 2014. [5] Gregory Sell and Daniel Garcia-Romero, “Speaker diariza- tion with plda i-vector scoring and unsupervised calibration, ” in Spoken Language T ec hnology W orkshop (SLT), 2014 IEEE . IEEE, 2014, pp. 413–417. [6] Gregory Sell and Daniel Garcia-Romero, “Diarization re- segmentation in the factor analysis subspace, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2015 IEEE Interna- tional Confer ence on . IEEE, 2015, pp. 4794–4798. [7] Ehsan V ariani, Xin Lei, Erik McDermott, Ignacio Lopez Moreno, and Javier Gonzalez-Dominguez, “Deep neural net- works for small footprint text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2014 IEEE International Conference on . IEEE, 2014, pp. 4052– 4056. [8] Y u-hsin Chen, Ignacio Lopez-Moreno, T ara N Sainath, Mirk ´ o V isontai, Raziel Alvarez, and Carolina Parada, “Locally- connected and conv olutional neural networks for small foot- print speaker recognition, ” in Sixteenth Annual Conference of the International Speech Communication Association , 2015. [9] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer , “End-to-end text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Conference on . IEEE, 2016, pp. 5115– 5119. [10] F A Rezaur Rahman Chowdhury , Quan W ang, Li W an, and Ignacio Lopez Moreno, “ Attention-based models for text-dependent speaker verification, ” arXiv pr eprint arXiv:1710.10470 , 2017. [11] Li W an, Quan W ang, Alan Papir , and Ignacio Lopez Moreno, “Generalized end-to-end loss for speaker verification, ” arXiv pr eprint arXiv:1710.10467 , 2017. [12] Guoguo Chen, Carolina Parada, and Georg Heigold, “Small- footprint ke yword spotting using deep neural networks, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2014 IEEE International Conference on . IEEE, 2014, pp. 4087– 4091. [13] Rohit Prabhav alkar , Raziel Alvarez, Carolina Parada, Preetum Nakkiran, and T ara N Sainath, “ Automatic gain control and multi-style training for robust small-footprint ke yword spotting with deep neural networks, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2015 IEEE International Conference on . IEEE, 2015, pp. 4704–4708. [14] Daniel Garcia-Romero, David Snyder , Gregory Sell, Daniel Pov ey , and Alan McCree, “Speaker diarization using deep neu- ral network embeddings, ” in 2017 IEEE International Confer- ence on Acoustics, Speech and Signal Pr ocessing (ICASSP). IEEE , 2017, pp. 4930–4934. [15] Sepp Hochreiter and J ¨ urgen Schmidhuber , “Long short-term memory , ” Neural computation , vol. 9, no. 8, pp. 1735–1780, 1997. [16] Ulrike V on Luxb urg, “ A tutorial on spectral clustering, ” Statis- tics and computing , vol. 17, no. 4, pp. 395–416, 2007. [17] Huazhong Ning, Ming Liu, Hao T ang, and Thomas S Huang, “ A spectral clustering approach to speak er diarization., ” in IN- TERSPEECH , 2006. [18] Philip Andrew Mansfield, Quan W ang, Carlton Downey , Li W an, and Ignacio Lopez Moreno, “Links: A high- dimensional online clustering method, ” arXiv pr eprint arXiv:1801.10123 , 2018. [19] Oshry Ben-Harush, Ortal Ben-Harush, Itshak Lapidot, and Hugo Guterman, “Initialization of iterativ e-based speaker di- arization systems for telephone con versations, ” IEEE T ransac- tions on A udio, Speech, and Langua ge Pr ocessing , vol. 20, no. 2, pp. 414–425, 2012. [20] Dimitrios Dimitriadis and Petr Fousek, “Developing on-line speaker diarization system, ” in INTERSPEECH , 2017. [21] David Arthur and Sergei V assilvitskii, “k-means++: The ad- vantages of careful seeding, ” in Pr oceedings of the eighteenth annual ACM-SIAM symposium on Discr ete algorithms . Soci- ety for Industrial and Applied Mathematics, 2007, pp. 1027– 1035. [22] Ronald R Coifman and St ´ ephane Lafon, “Dif fusion maps, ” Applied and computational harmonic analysis , vol. 21, no. 1, pp. 5–30, 2006. [23] Has ¸ im Sak, Andrew Senior, and Franc ¸ oise Beaufays, “Long short-term memory recurrent neural network architectures for large scale acoustic modeling, ” in F ifteenth Annual Confer ence of the International Speech Communication Association , 2014. [24] Rub ´ en Zazo Candil, T ara N Sainath, Gabor Simko, and Car- olina Parada, “Feature learning with raw-wav eform cldnns for voice activity detection, ” in INTERSPEECH . 2016, Interna- tional Speech and Communication Association. [25] A Canav an, D Graf f, and G Zipperlen, “Callhome american english speech ldc97s42, ” LDC Catalog. Philadelphia: Lin- guistic Data Consortium , 1997. [26] Herv ´ e Bredin, “ pyannote.metrics : a toolkit for repro- ducible ev aluation, diagnostic, and error analysis of speaker diarization systems, ” hypothesis , vol. 100, no. 60, pp. 90, 2017. [27] Zbyn ˘ ek Zaj ´ ıc, Marek Hr ´ uz, and Lud ˘ ek M ¨ uller , “Speaker di- arization using con volutional neural network for statistics ac- cumulation refinement, ” in INTERSPEECH , 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment