A knowledge graph embeddings based approach for author name disambiguation using literals

Scholarly data is growing continuously containing information about the articles from a plethora of venues including conferences, journals, etc. Many initiatives have been taken to make scholarly data available in the form of Knowledge Graphs (KGs). These efforts to standardize these data and make them accessible have also led to many challenges such as exploration of scholarly articles, ambiguous authors, etc. This study more specifically targets the problem of Author Name Disambiguation (AND) on Scholarly KGs and presents a novel framework, Literally Author Name Disambiguation (LAND), which utilizes Knowledge Graph Embeddings (KGEs) using multimodal literal information generated from these KGs. This framework is based on three components: (1) multimodal KGEs, (2) a blocking procedure, and finally, (3) hierarchical Agglomerative Clustering. Extensive experiments have been conducted on two newly created KGs: (i) KG containing information from Scientometrics Journal from 1978 onwards (OC-782K), and (ii) a KG extracted from a well-known benchmark for AND provided by AMiner (AMiner-534K). The results show that our proposed architecture outperforms our baselines of 8–14% in terms of F1 score and shows competitive performances on a challenging benchmark such as AMiner. The code and the datasets are publicly available through Github (https://github.com/sntcristian/and-kge) and Zenodo (https://doi.org/10.5281/zenodo.6309855) respectively.

💡 Research Summary

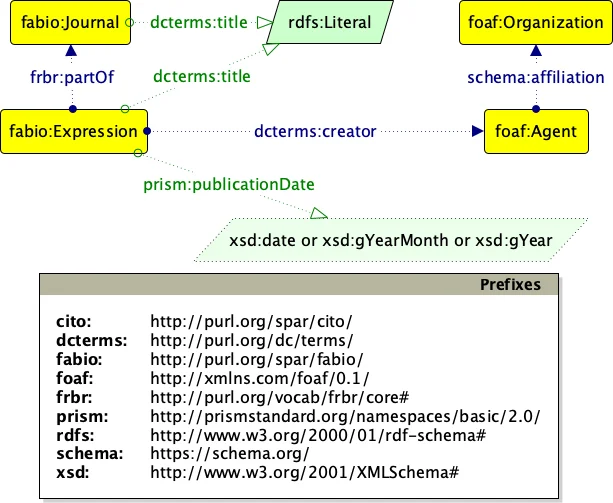

The paper introduces LAND (Literally Author Name Disambiguation), a novel framework for author name disambiguation (AND) that operates directly on scholarly knowledge graphs (KGs). Unlike most existing approaches that rely heavily on rich textual metadata (titles, abstracts, keywords) and often require supervised training data, LAND leverages multimodal knowledge graph embeddings that incorporate both structural relations and literal attributes (e.g., author given name, family name, publication title, venue, year, affiliation identifiers). The core embedding model is LiteralE, which extends traditional KG embedding techniques by jointly learning vector representations for entities, relations, and associated literals, thereby capturing semantic similarity even when textual fields are sparse or missing.

LAND’s pipeline consists of three stages. First, the entire KG is processed with LiteralE to obtain dense embeddings for all relevant entities. Second, a blocking step groups records that share the same last name and first initial (LN‑FI), dramatically reducing the number of pairwise comparisons required for clustering. Third, within each block, pairwise distances between embeddings are computed (typically cosine similarity) and hierarchical agglomerative clustering (HAC) is applied to produce the final author clusters. This unsupervised pipeline eliminates the need for labeled training pairs and can adapt to any KG that provides at least minimal literal information.

The authors evaluate LAND on two newly constructed datasets: OC‑782K, derived from OpenCitations data for the Scientometrics journal (1978‑present), and AMiner‑534K, a well‑known benchmark extracted from the AMiner scholarly network. Both datasets exhibit substantial metadata sparsity, making them ideal testbeds for assessing the benefit of literal‑enhanced embeddings. Compared against a range of baselines—including co‑authorship‑based clustering, TF‑IDF similarity, rule‑based graph methods such as GHOST, and recent heuristic multi‑step approaches—LAND achieves F1 improvements of 8–14 %. The gains are especially pronounced on OC‑782K, where the inclusion of literal information compensates for missing abstracts and keywords. Moreover, the blocking step reduces candidate pair counts by over 90 %, yielding significant runtime savings without sacrificing accuracy.

Key contributions of the work are: (1) Demonstrating that multimodal KG embeddings can serve as effective, label‑free feature extractors for AND; (2) Showing that literal attributes, even when noisy or incomplete, substantially enrich the representation of scholarly entities; (3) Providing an end‑to‑end, scalable pipeline that combines blocking and hierarchical clustering to handle large‑scale graphs. The authors acknowledge limitations, notably the sensitivity of the approach to the quality of literals and the reliance on simple LN‑FI blocking, which may still generate large blocks for common surnames. Future directions include exploring more sophisticated blocking strategies (phonetic, affiliation‑based), integrating graph neural networks for dynamic embedding updates, and extending the framework to multilingual and cross‑domain scholarly corpora. Overall, LAND offers a promising path toward robust, unsupervised author disambiguation in the increasingly heterogeneous landscape of scholarly knowledge graphs.

Comments & Academic Discussion

Loading comments...

Leave a Comment