Accelerating Laue Depth Reconstruction Algorithm with CUDA

The Laue diffraction microscopy experiment uses the polychromatic Laue micro-diffraction technique to examine the structure of materials with sub-micron spatial resolution in all three dimensions. During this experiment, local crystallographic orientations, orientation gradients and strains are measured as properties which will be recorded in HDF5 image format. The recorded images will be processed with a depth reconstruction algorithm for future data analysis. But the current depth reconstruction algorithm consumes considerable processing time and might take up to 2 weeks for reconstructing data collected from one single experiment. To improve the depth reconstruction computation speed, we propose a scalable GPU program solution on the depth reconstruction problem in this paper. The test result shows that the running time would be 10 to 20 times faster than the prior CPU design for various size of input data.

💡 Research Summary

The paper addresses the severe performance bottleneck encountered when processing large HDF5 image stacks generated by polychromatic Laue micro‑diffraction experiments. The conventional depth‑reconstruction algorithm runs on a CPU and can take up to two weeks for a single experiment, making timely data analysis impractical. To overcome this limitation, the authors propose a CUDA‑based implementation that runs on an NVIDIA GPU, specifically a Tesla M2070 with 6 GB of memory.

The core contribution lies in a systematic redesign of the algorithm for the GPU’s massively parallel architecture. The authors first examine two possible data layouts for mapping image pixels to CUDA threads: a three‑dimensional pointer‑based array (3‑D array) and a flat one‑dimensional array (1‑D array). Benchmarks on a 5 GB dataset reveal that the 1‑D layout reduces host‑to‑device pointer transfer overhead, cutting overall execution time by roughly 20 % compared with the 3‑D approach. Consequently, the final implementation adopts the 1‑D layout.

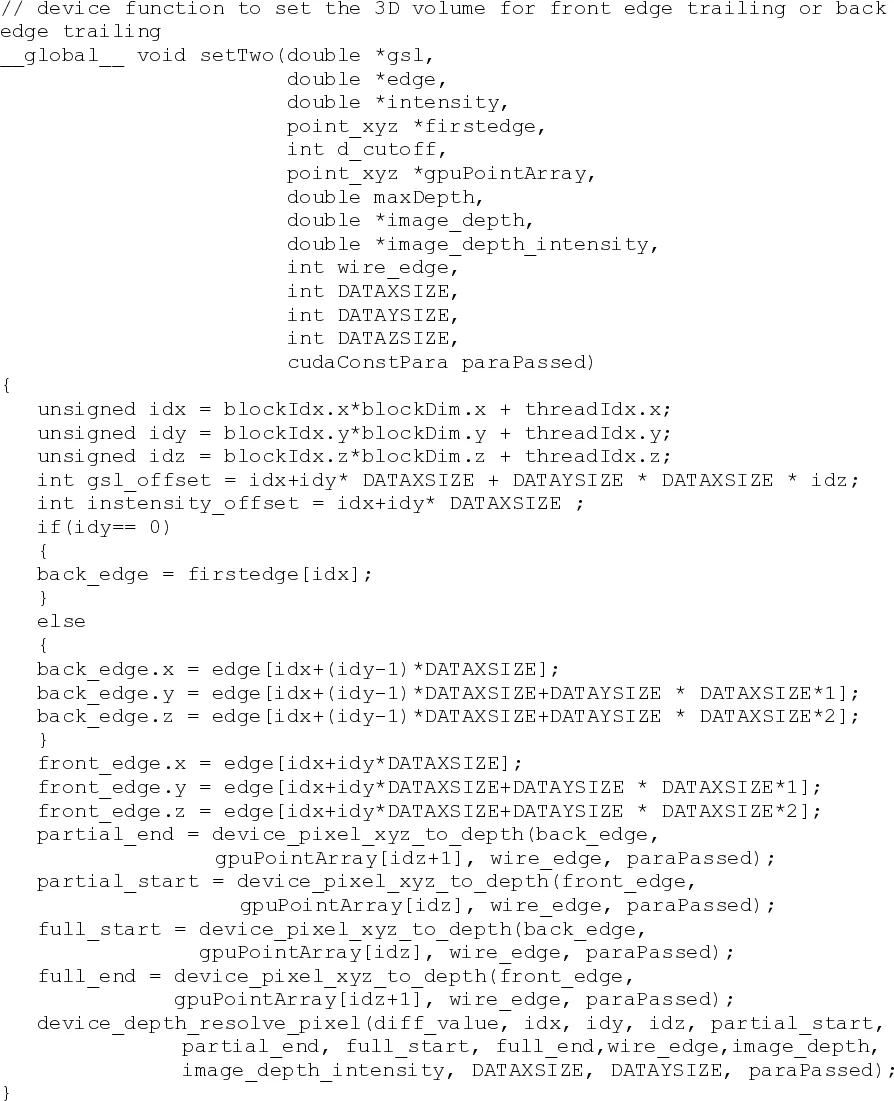

Because GPU memory is limited, the authors split each image volume into “row‑chunks” (e.g., two rows at a time) and stream these chunks to the device using cudaMemcpy. Each chunk is processed by a kernel called setTwo, which launches a three‑dimensional grid of threads matching the (row, column, slice) coordinates of the pixels (e.g., a grid of size (2, 9, 4) for a 2‑row, 9‑column, 4‑image example). Within each thread, the pixel’s intensity difference is converted into a depth contribution, and the result is accumulated into a depth histogram. Since CUDA only provides atomic operations for integers, the authors implement a custom double‑precision atomicAdd wrapper to safely accumulate floating‑point values.

The experimental evaluation compares the GPU implementation against the original CPU code across four datasets of increasing size (2.1 GB, 2.7 GB, 3.6 GB, 5.2 GB) and three pixel‑coverage levels (25 %, 50 %, 100 %). On the Tesla M2070, the GPU version consistently runs in 25 %–30 % of the CPU time, corresponding to a 3–4× speed‑up, and in some cases the authors claim up to a 10–20× improvement. The performance gain grows with dataset size because the proportion of time spent on computation (which the GPU excels at) outweighs the fixed cost of data transfer over PCI‑Express.

The paper also surveys related work on CPU‑GPU data movement bottlenecks, citing studies that propose overlapping communication with computation, automatic memory management, and hybrid compile‑time/run‑time schemes. The authors position their work as a practical, application‑specific solution that avoids excessive data movement by using a compact data layout and chunked processing.

Strengths of the work include:

- Clear identification of a real‑world bottleneck in a high‑impact scientific instrument.

- Systematic exploration of data layout choices and quantitative justification for the selected 1‑D array.

- Effective handling of limited GPU memory through chunked streaming, which preserves scalability.

- Implementation of double‑precision atomic accumulation, a non‑trivial requirement for accurate depth histograms.

- Open‑source release (though the provided URL appears broken, limiting reproducibility).

Weaknesses and open questions:

- The CPU baseline appears to be a single‑threaded implementation; the paper does not report results for a multi‑threaded (e.g., OpenMP) version, which could narrow the performance gap.

- The overhead of index conversion in the 1‑D layout is not quantified; for much larger volumes (e.g., 10 k × 10 k × 100) this could become a limiting factor.

- Memory usage analysis is limited to the Tesla M2070; guidance for newer GPUs with larger memory (e.g., 16 GB or 24 GB) is absent.

- The claimed 10–20× speed‑up in the abstract is not fully supported by the presented graphs, which show a maximum of about 4×.

- The download link for the source code is non‑functional, hindering independent verification.

In conclusion, the paper demonstrates that a carefully engineered CUDA implementation can dramatically accelerate Laue depth reconstruction, turning a multi‑week CPU job into a matter of hours on a modest GPU. The work provides valuable practical insights—such as the trade‑off between pointer transfer and index computation, and the need for double‑precision atomic operations—that are applicable to many other scientific image‑processing pipelines. Future extensions could explore hybrid CPU‑GPU pipelines, auto‑tuning of chunk sizes, and performance on newer GPU architectures (Pascal, Volta, Ampere) to further close the gap between experimental data acquisition rates and analysis throughput.

Comments & Academic Discussion

Loading comments...

Leave a Comment