DERGMs: Degeneracy-restricted exponential random graph models

Exponential random graph models, or ERGMs, are a flexible and general class of models for dependent data. While the early literature has shown them to be powerful in capturing many network features of interest, recent work highlights difficulties related to the models’ ill behavior, such as most of the probability mass being concentrated on a very small subset of the parameter space. This behavior limits both the applicability of an ERGM as a model for real data and inference and parameter estimation via the usual Markov chain Monte Carlo algorithms. To address this problem, we propose a new exponential family of models for random graphs that builds on the standard ERGM framework. Specifically, we solve the problem of computational intractability and ‘degenerate’ model behavior by an interpretable support restriction. We introduce a new parameter based on the graph-theoretic notion of degeneracy, a measure of sparsity whose value is commonly low in real-world networks. The new model family is supported on the sample space of graphs with bounded degeneracy and is called degeneracy-restricted ERGMs, or DERGMs for short. Since DERGMs generalize ERGMs (the latter is obtained from the former by setting the degeneracy parameter to be maximal), they inherit good theoretical properties while placing their mass more uniformly over realistic graphs. The support restriction allows the use of new (and fast) Monte Carlo methods for inference, thus making the models scalable and computationally tractable. We study various theoretical properties of DERGMs and illustrate how the support restriction improves the model behavior. We also present a fast Monte Carlo algorithm for parameter estimation that avoids many issues faced by Markov chain Monte Carlo algorithms used for inference in ERGMs.

💡 Research Summary

Exponential Random Graph Models (ERGMs) are a powerful and flexible class of statistical models for network data, but they suffer from a notorious “degeneracy” problem: for many parameter settings the probability mass concentrates on a tiny subset of the graph space, making maximum‑likelihood estimation via MCMC unreliable and often causing the algorithm to fail to converge. This paper proposes a principled solution called Degeneracy‑Restricted ERGMs (DERGMs). The key idea is to restrict the support of the model to graphs whose graph‑theoretic degeneracy (the maximum core number) does not exceed a user‑specified bound k. Because most real‑world networks have low degeneracy relative to their size, this restriction eliminates extremely dense graphs that are responsible for the pathological concentration of probability mass, while still retaining a support that is exponentially large in n.
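To make the support restriction concrete, here is a minimal sketch of how the degeneracy of a graph can be computed by repeatedly peeling off a minimum-degree vertex. The function name `degeneracy` and the edge-list input format are illustrative assumptions, not taken from the paper.

```python
from itertools import combinations


def degeneracy(n, edges):
    """Degeneracy (maximum core number) of a simple graph on vertices 0..n-1.

    Repeatedly removes a vertex of minimum remaining degree; the largest
    degree seen at removal time is the degeneracy.
    """
    adj = {v: set() for v in range(n)}
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)

    degree = {v: len(adj[v]) for v in adj}
    remaining = set(adj)
    k = 0
    while remaining:
        v = min(remaining, key=degree.__getitem__)
        k = max(k, degree[v])
        remaining.remove(v)
        for w in adj[v]:
            if w in remaining:
                degree[w] -= 1
    return k


path = [(0, 1), (1, 2), (2, 3)]             # a tree
complete = list(combinations(range(5), 2))  # K5

print(degeneracy(4, path))      # 1
print(degeneracy(5, complete))  # 4, i.e. n - 1
```

A graph belongs to the restricted support exactly when this value is at most k. The complete graph attains the maximum n − 1, which is why setting k = n − 1 removes the restriction. This quadratic-time version favors readability; a bucket-based implementation runs in linear time.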

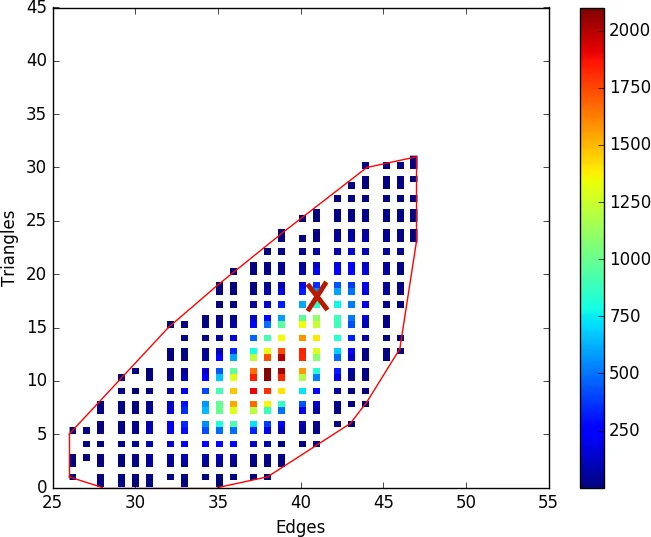

The authors first formalize the notion of graph degeneracy and show how to define the DERGM probability mass function by replacing the full graph space Gₙ with the subset Gₙ,ₖ of k‑degenerate graphs. When k = n‑1 the model collapses to the ordinary ERGM, guaranteeing that DERGMs are a strict generalization. They then analyze the size of Gₙ,ₖ, deriving a lower bound that demonstrates the support remains sufficiently large for any fixed k ≪ n. Building on the stability framework of Schweinberger (2011), they extend the definition of “stable sufficient statistics” to support‑restricted models: a statistic is stable if it grows at most on the order of the logarithm of the support size. In the unrestricted ERGM the number of triangles grows as O(n³), which exceeds the O(n²) log‑support and leads to instability; in a DERGM with bounded k, the number of possible triangles is O(n·k²), restoring stability. Proposition 1 proves that the classic edge‑triangle model becomes stable under any fixed k, and Theorem 3 shows that stability implies asymptotic non‑degeneracy for DERGMs.
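As a sketch of the construction just described, the restricted model can be written in standard exponential-family notation. The symbols θ (parameter vector), t(G) (vector of sufficient statistics) and T(G) (triangle count) are assumed notation for illustration; only Gₙ,ₖ and cₖ(θ) appear in the summary itself.

```latex
% DERGM probability mass function on the restricted support
P_{\theta,k}(G) \;=\; \frac{\exp\{\theta^{\top} t(G)\}}{c_k(\theta)},
\qquad G \in \mathcal{G}_{n,k},
\qquad
c_k(\theta) \;=\; \sum_{G' \in \mathcal{G}_{n,k}} \exp\{\theta^{\top} t(G')\}.

% Triangle bound: order the vertices so that each vertex has at most k
% neighbours later in the order (possible because G is k-degenerate), and
% charge each triangle to its earliest vertex. Every vertex is charged at
% most \binom{k}{2} triangles, so
T(G) \;\le\; n\binom{k}{2} \;=\; O(n k^{2})
\quad \text{for all } G \in \mathcal{G}_{n,k}.
```

For k = n − 1 the set Gₙ,ₖ contains every graph on n vertices, so the first display reduces to the usual ERGM.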

From a computational perspective, the restriction enables new Monte‑Carlo methods. The paper presents a Metropolis‑Hastings sampler that proposes edge toggles while preserving the k‑degeneracy constraint, dramatically reducing the probability of proposing invalid states. It also describes a uniform sampler for Gₙ,ₖ, which is used to estimate the normalizing constant cₖ(θ) more efficiently than in the full ERGM. Because the support excludes near‑complete graphs, the MCMC chain explores a more relevant region of the space, leading to faster mixing and more reliable likelihood approximations.
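The following is a minimal sketch of a constraint-preserving edge-toggle sampler for the edge-triangle model. It is not the authors' algorithm: it uses the simplest valid variant, in which a toggle that would push the degeneracy above k is rejected and the chain stays where it is. The names `sample_dergm`, `theta_edges` and `theta_triangles` are hypothetical.

```python
import math
import random
from itertools import combinations


def degeneracy(adj):
    """Degeneracy of a graph given as {vertex: set of neighbours}."""
    degree = {v: len(nb) for v, nb in adj.items()}
    remaining = set(adj)
    k = 0
    while remaining:
        v = min(remaining, key=degree.__getitem__)
        k = max(k, degree[v])
        remaining.remove(v)
        for w in adj[v]:
            if w in remaining:
                degree[w] -= 1
    return k


def sample_dergm(n, k, theta_edges, theta_triangles, steps, seed=0):
    """Metropolis-Hastings edge toggles restricted to k-degenerate graphs."""
    rng = random.Random(seed)
    adj = {v: set() for v in range(n)}  # empty graph has degeneracy 0
    dyads = list(combinations(range(n), 2))

    for _ in range(steps):
        u, v = rng.choice(dyads)  # symmetric proposal: toggle one dyad
        # Toggling uv changes the triangle count by the number of
        # common neighbours of u and v.
        common = len(adj[u] & adj[v])
        sign = -1 if v in adj[u] else 1
        log_ratio = sign * (theta_edges + theta_triangles * common)
        if rng.random() >= math.exp(min(0.0, log_ratio)):
            continue  # rejected by the Metropolis-Hastings ratio

        if sign == 1:
            adj[u].add(v)
            adj[v].add(u)
            if degeneracy(adj) > k:
                # Proposal left the support: undo it.
                adj[u].discard(v)
                adj[v].discard(u)
        else:
            # Deleting an edge can never increase degeneracy.
            adj[u].discard(v)
            adj[v].discard(u)
    return adj


graph = sample_dergm(n=12, k=2, theta_edges=0.5, theta_triangles=0.5, steps=2000)
print(degeneracy(graph) <= 2)  # True
```

Recomputing the degeneracy from scratch after every accepted addition keeps the sketch short but is wasteful. An efficient sampler would update core numbers incrementally or avoid proposing invalid toggles in the first place.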

Empirical evaluation includes both synthetic experiments and analyses of several real networks (e.g., a Scottish social network, NetScience citation network, US power‑grid). The results confirm that DERGMs achieve parameter estimates comparable to ERGMs when the latter can be fitted, but they remain stable and converge even when ERGMs fail due to degeneracy. Moreover, the DERGM likelihood surface is smoother, and the estimated normalizing constants vary less with small changes in θ, indicating reduced sensitivity. Computationally, DERGM inference is 2–3 times faster and uses less memory because the state space is dramatically smaller yet still rich enough to capture observed graph features.

In summary, the paper introduces a theoretically grounded and practically useful extension of ERGMs that leverages a simple graph‑theoretic sparsity measure—degeneracy—to tame model degeneracy. By restricting the support to k‑degenerate graphs, the authors obtain stable sufficient statistics, guarantee non‑degenerate asymptotic behavior, and enable fast, reliable Monte‑Carlo inference. The work opens avenues for automated selection of k, hierarchical modeling of multiple networks, and exploration of alternative sparsity constraints, positioning DERGMs as a promising tool for modern network analysis.
