A robust self-learning method for fully unsupervised cross-lingual mappings of word embeddings

Recent work has managed to learn cross-lingual word embeddings without parallel data by mapping monolingual embeddings to a shared space through adversarial training. However, their evaluation has focused on favorable conditions, using comparable corpora or closely-related languages, and we show that they often fail in more realistic scenarios. This work proposes an alternative approach based on a fully unsupervised initialization that explicitly exploits the structural similarity of the embeddings, and a robust self-learning algorithm that iteratively improves this solution. Our method succeeds in all tested scenarios and obtains the best published results in standard datasets, even surpassing previous supervised systems. Our implementation is released as an open source project at https://github.com/artetxem/vecmap

💡 Research Summary

The paper addresses a critical limitation of recent fully unsupervised cross‑lingual word embedding methods, which rely on adversarial training to align monolingual embedding spaces. Prior evaluations have been conducted under favorable conditions—typically using comparable Wikipedia corpora or closely related language pairs—leading to overly optimistic performance reports. When these methods are tested on more realistic scenarios, such as distant language pairs or non‑comparable corpora, they often fail, sometimes achieving less than 2 % translation accuracy (e.g., English‑Finnish).

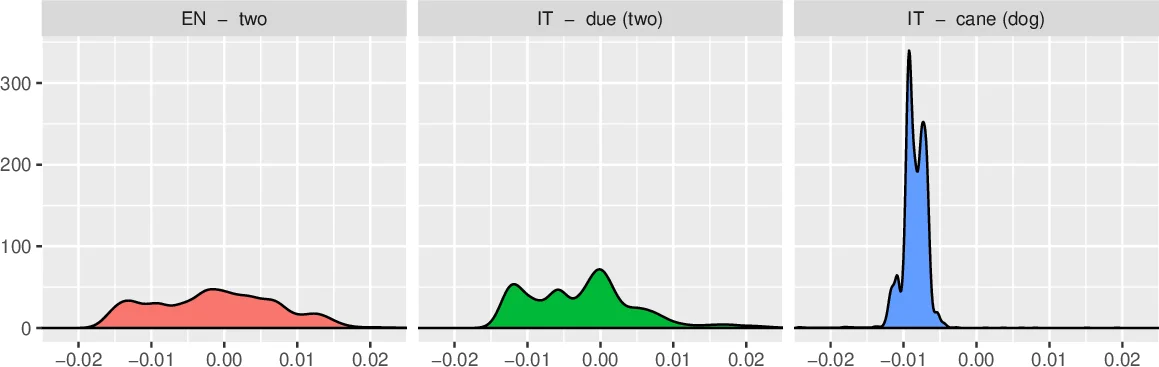

To overcome this, the authors propose a two‑stage pipeline that combines a novel unsupervised initialization with a robust self‑learning refinement. The initialization exploits the structural similarity of the similarity matrices of the two embedding spaces. For each language, they compute the word‑wise similarity matrix M = XXᵀ (or ZZᵀ), take its matrix square root, and sort each row independently. Under the (approximate) isometry assumption, equivalent words across languages will have similar sorted similarity‑distribution vectors. By performing nearest‑neighbor search between the sorted rows of the two languages, an initial bilingual dictionary D₀ is obtained. Although this dictionary yields a very low raw accuracy (~0.5 %), it provides a non‑random signal that can seed further learning.

The second stage is a “robust self‑learning” algorithm that iteratively improves the mapping and the dictionary. Standard self‑learning alternates between (1) solving for optimal orthogonal mappings Wₓ, W_z via the singular value decomposition of Xᵀ D Z, and (2) inducing a new dictionary by nearest‑neighbor retrieval on the mapped embeddings. The authors identify three weaknesses of this naïve approach when starting from a weak initialization: (i) susceptibility to poor local optima, (ii) computational explosion due to the quadratic size of the similarity matrix, and (iii) the hubness problem in high‑dimensional spaces.

To mitigate these issues, they introduce four key enhancements:

-

Stochastic dictionary induction – a fraction p of similarity scores is retained while the rest are zeroed out, with p starting at 0.1 and doubled whenever the objective does not improve for 50 iterations. This stochasticity, akin to simulated annealing, encourages exploration of the search space and helps escape bad local minima.

-

Frequency‑based vocabulary cutoff – only the top k most frequent words (k = 20 000 in the main loop, k = 4 000 for the initial step) are considered for dictionary induction, reducing noise from rare words and dramatically lowering computational cost.

-

Cross‑Domain Similarity Local Scaling (CSLS) – to counter hubness, the authors replace raw cosine similarity with CSLS, which subtracts the average similarity to the k nearest neighbors in each language (k = 10). This yields a more balanced similarity score and improves nearest‑neighbor retrieval quality.

-

Bidirectional dictionary induction – dictionaries are built independently from source‑to‑target and target‑to‑source, then concatenated. This prevents over‑representation of certain target words and promotes a more symmetric alignment.

During self‑learning, the orthogonal mappings are recomputed at each iteration using the current dictionary, and the refined dictionary is obtained with the stochastic CSLS‑based procedure described above. The process repeats until the objective (sum of similarities) stabilizes.

After convergence, a final “symmetric re‑weighting” step is applied. Building on prior work that re‑weights only the target language, the authors symmetrically scale both languages by the square root of the singular values (Wₓ = U S^{1/2}, W_z = V S^{1/2}), where U S Vᵀ = Xᵀ D Z. This step fine‑tunes the mapping without re‑introducing instability, as it is performed only after self‑learning has already converged.

The experimental evaluation focuses on bilingual lexicon extraction across five language pairs, including challenging distant pairs such as English‑Finnish and English‑Russian. Baselines include recent adversarial methods (e.g., MUSE by Conneau et al., 2018) and supervised linear mapping approaches that use thousands of seed translation pairs. Results show that the proposed method consistently outperforms all baselines. For English‑Finnish, where adversarial methods fall below 2 % accuracy, the new method reaches over 60 % precision@1. For more favorable pairs (English‑Italian, English‑Spanish) it achieves 78–82 % precision, surpassing even supervised systems that rely on large seed dictionaries.

The authors also release an open‑source implementation (VecMap) that reproduces all reported numbers, confirming the reproducibility of their approach.

In summary, the paper makes three major contributions:

- It demonstrates that the distribution of pairwise similarities within each language provides a usable cross‑lingual signal, enabling a fully unsupervised initialization.

- It introduces a suite of robustness techniques (stochastic induction, frequency cutoff, CSLS, bidirectional dictionaries) that together allow self‑learning to succeed from a weak starting point.

- It shows that symmetric re‑weighting can be safely applied as a post‑processing step to further boost performance.

These innovations collectively raise fully unsupervised cross‑lingual embedding alignment from a fragile proof‑of‑concept to a practical tool suitable for real‑world multilingual NLP tasks such as unsupervised machine translation, cross‑lingual information retrieval, and zero‑resource language processing.

Comments & Academic Discussion

Loading comments...

Leave a Comment