Multi-Geometry Spatial Acoustic Modeling for Distant Speech Recognition

The use of spatial information with multiple microphones can improve far-field automatic speech recognition (ASR) accuracy. However, conventional microphone array techniques degrade speech enhancement performance when there is an array geometry mismatch between design and test conditions. Moreover, such speech enhancement techniques do not always yield ASR accuracy improvement due to the difference between speech enhancement and ASR optimization objectives. In this work, we propose to unify an acoustic model framework by optimizing spatial filtering and long short-term memory (LSTM) layers from multi-channel (MC) input. Our acoustic model subsumes beamformers with multiple types of array geometry. In contrast to deep clustering methods that treat a neural network as a black box tool, the network encoding the spatial filters can process streaming audio data in real time without the accumulation of target signal statistics. We demonstrate the effectiveness of such MC neural networks through ASR experiments on the real-world far-field data. We show that our two-channel acoustic model can on average reduce word error rates (WERs) by13.4 and12.7% compared to a single channel ASR system with the log-mel filter bank energy (LFBE) feature under the matched and mismatched microphone placement conditions, respectively. Our result also shows that our two-channel network achieves a relative WER reduction of over~7.0% compared to conventional beamforming with seven microphones overall.

💡 Research Summary

The paper addresses a critical limitation of conventional distant speech recognition (DSR) pipelines: the degradation caused by mismatches between the assumed microphone array geometry during design and the actual geometry encountered at test time. Traditional approaches treat front‑end signal processing (voice activity detection, speaker localization, dereverberation, beamforming) as separate stages, often requiring precise microphone calibration and statistical accumulation of signal statistics. When the array geometry changes—due to device handling, manufacturing tolerances, or user movement—these beamformers lose their optimality, leading to reduced speech enhancement and, consequently, higher word error rates (WER). Moreover, speech enhancement objectives (e.g., maximizing signal‑to‑noise ratio) do not necessarily align with the discriminative objective of automatic speech recognition (ASR).

To overcome these issues, the authors propose a fully learnable, end‑to‑end acoustic model that directly consumes multi‑channel (MC) short‑time Fourier transform (STFT) features. The core innovation is a “spatial filtering (SF) layer” placed as the first neural network layer. This SF layer is initialized with beamformer weights designed for multiple array geometries and a set of look‑directions. By representing complex beamformer weights as real‑valued matrices (splitting real and imaginary parts), the layer can be trained with standard back‑propagation. Consequently, the beamforming operation becomes a differentiable component of the network, allowing joint optimization with downstream acoustic modeling.

Two network architectures are explored:

-

Elastic SF (ESF) Network – After the SF layer, each beamformer output passes through a fully‑connected affine transform and ReLU activation. The affine transforms are frequency‑independent, enabling the network to learn arbitrary combinations of beamformer outputs across frequencies. This flexibility can capture frequency‑dependent spatial cues but incurs a larger parameter count.

-

Weight‑Tied SF (WTSF) Network – Here, the affine transforms are tied (shared) across all frequency bins. The SF outputs are convolved with 1 × D filters (where D is the number of look‑directions) and then subjected to a max‑pooling operation that selects the beamformer with the highest energy at each time‑frequency location. This design mimics the conventional “maximum‑energy beamformer selection” but does so within the network, eliminating the need for external statistics accumulation. Weight sharing enforces consistent combination across frequencies, reducing parameters and improving computational efficiency.

Training proceeds in a stage‑wise fashion. First, a single‑channel LFBE‑based LSTM acoustic model is trained. Then, the MC DFT inputs are introduced, and the entire network (SF layer + feature extraction DNN + LSTM classifier) is fine‑tuned using the cross‑entropy loss directly tied to senone classification. The authors train on a massive in‑house dataset comprising ~1150 hours of speech captured with a seven‑mic circular array (six microphones equally spaced on a 72 mm diameter circle plus a central mic) under varied acoustic conditions (different SNRs, reverberation, background music). Test data includes real user interactions with the device, featuring unconstrained movement and varying microphone spacings (73 mm, 63 mm, 36 mm) to simulate geometry mismatch.

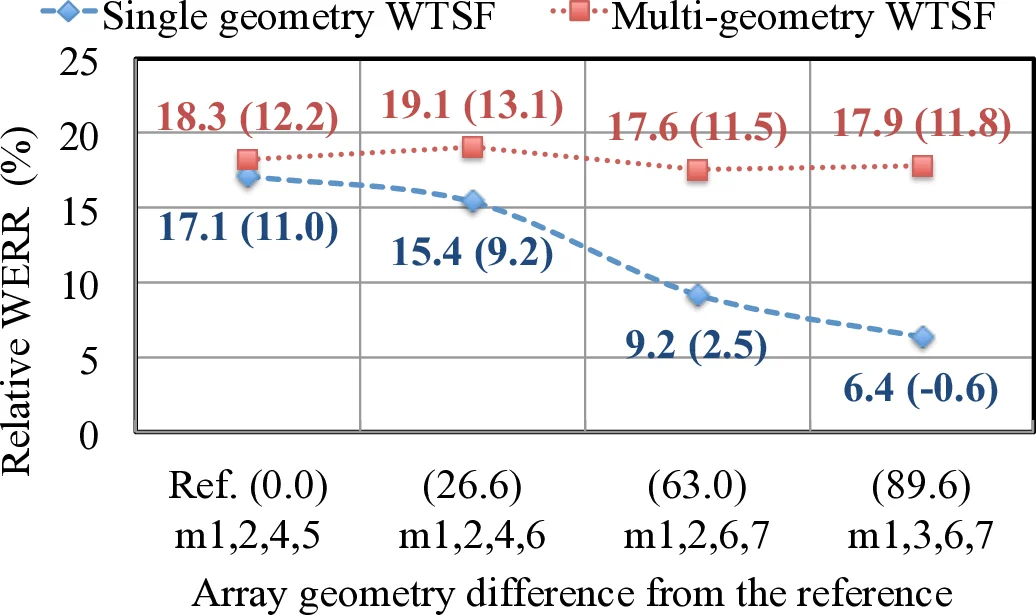

Results show substantial gains. Using only two microphones, the ESF model trained on a single geometry reduces WER by 12.3 % (matched geometry) and 10.0 % (mismatched geometry) relative to the single‑mic LFBE baseline. When the training data includes multiple geometries (G = 2), the WTSF model achieves 12.1 % average WER reduction and up to 17.1 % reduction in high‑SNR conditions. Compared with a conventional seven‑mic super‑directive (SD) beamformer, the two‑mic WTSF network yields a relative WER improvement of over 7 %. The four‑mic configurations provide even larger absolute improvements, but the two‑mic setup offers a favorable trade‑off between hardware cost and performance.

Key contributions of the work are:

- Integration of Beamforming into Neural Networks – By initializing the first layer with beamformer weights, the spatial filtering operation becomes differentiable and jointly optimized with the acoustic model.

- Robustness to Geometry Mismatch – Training on data from multiple array configurations enables the network to generalize across unseen microphone placements without explicit calibration.

- Real‑Time Streaming Capability – The architecture processes each frame independently in the frequency domain, avoiding the need for batch‑wise statistics (e.g., covariance estimation) required by traditional MVDR or SD beamformers.

- Parameter Efficiency – The weight‑tied design dramatically reduces the number of learnable parameters while preserving performance, making the approach suitable for on‑device deployment.

- Extensive Real‑World Evaluation – Experiments on large‑scale, user‑generated far‑field speech data demonstrate practical gains, confirming the method’s applicability to commercial voice‑controlled devices.

In summary, the paper presents a novel, fully learnable multi‑geometry spatial acoustic model that bridges the gap between front‑end signal processing and back‑end acoustic modeling. By embedding beamforming directly into the neural network and training on diverse array configurations, the proposed system delivers significant WER reductions under both matched and mismatched microphone placements, while maintaining low latency and computational requirements suitable for real‑time far‑field speech recognition.

Comments & Academic Discussion

Loading comments...

Leave a Comment