MW-GAN: Multi-Warping GAN for Caricature Generation with Multi-Style Geometric Exaggeration

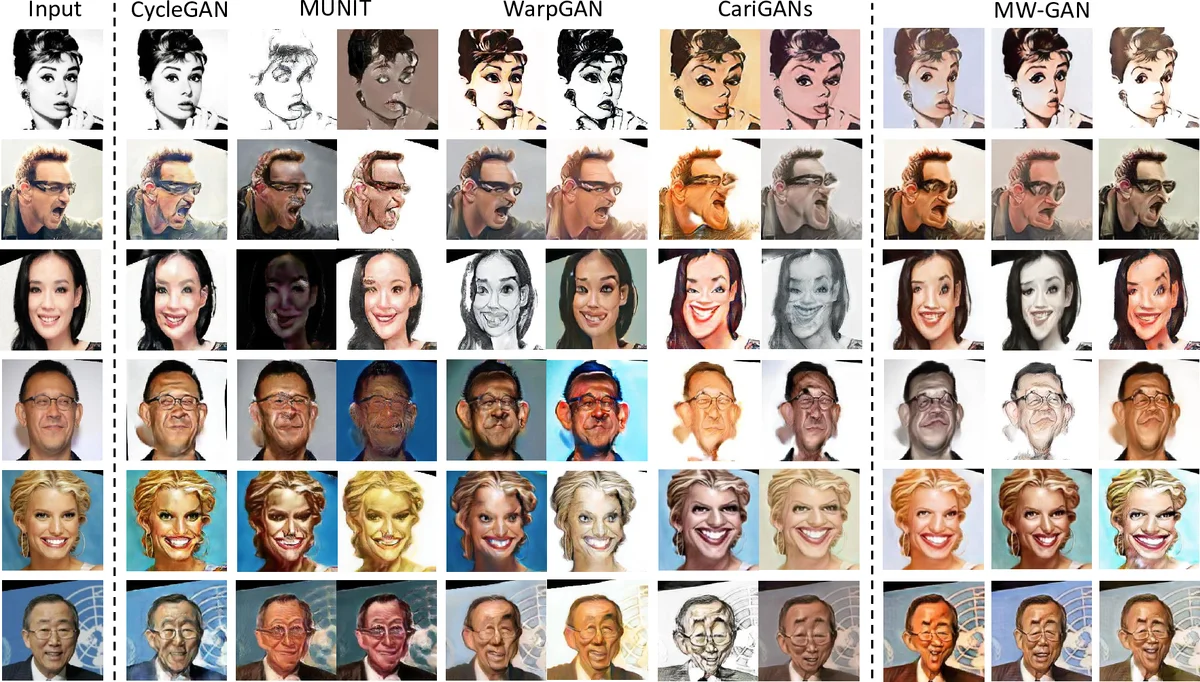

Given an input face photo, the goal of caricature generation is to produce stylized, exaggerated caricatures that share the same identity as the photo. It requires simultaneous style transfer and shape exaggeration with rich diversity, and meanwhile preserving the identity of the input. To address this challenging problem, we propose a novel framework called Multi-Warping GAN (MW-GAN), including a style network and a geometric network that are designed to conduct style transfer and geometric exaggeration respectively. We bridge the gap between the style and landmarks of an image with corresponding latent code spaces by a dual way design, so as to generate caricatures with arbitrary styles and geometric exaggeration, which can be specified either through random sampling of latent code or from a given caricature sample. Besides, we apply identity preserving loss to both image space and landmark space, leading to a great improvement in quality of generated caricatures. Experiments show that caricatures generated by MW-GAN have better quality than existing methods.

💡 Research Summary

**

The paper introduces MW‑GAN, a novel generative adversarial network designed to create caricatures from ordinary face photographs while offering rich diversity in both texture style and geometric exaggeration. Traditional caricature synthesis methods either focus on a single style or produce a fixed geometric deformation, failing to capture the artistic variability seen in hand‑drawn caricatures. MW‑GAN addresses this gap by decomposing the problem into two complementary sub‑tasks: (1) texture‑style transfer and (2) shape exaggeration.

The architecture consists of a style network and a geometric network. The style network follows a MUNIT‑like design, separating content (shared facial structure) from style (color, brushstroke, shading). Two encoders—one for content (E_c) and one for style (E_s)—extract latent codes from photos and caricatures. Style codes (z_s) are regularized to follow a Gaussian distribution, enabling either random sampling for novel styles or extraction from an existing caricature to replicate a specific artistic look. The decoded image preserves the original facial layout while adopting the sampled texture.

The geometric network operates on facial landmarks. It learns a displacement map Δl that warps the stylized image according to a landmark‑style code (z_l) and the content code (z_c). Like the style code, z_l is drawn from a Gaussian prior or inferred from a reference caricature, allowing the system to produce multiple exaggeration patterns for the same face. The final caricature is obtained by applying the learned warp to the stylized image, effectively combining texture and shape transformations.

A key innovation is the dual‑way (bidirectional) design. In addition to the forward photo‑to‑caricature pipeline, the model simultaneously learns the reverse caricature‑to‑photo mapping. This enables a cycle‑consistency loss not only on the reconstructed images but also on the latent codes (z_s, z_l). By forcing the latent representations to be invariant across forward and backward passes, the network ensures that sampled codes correspond to meaningful, controllable styles and exaggerations, mitigating the “code collapse” problem common in single‑direction GANs.

Preserving the subject’s identity is critical. The authors introduce identity preservation losses in two spaces: (1) an image‑space loss using a pre‑trained face recognition network to align the identity embeddings of the input photo and the generated caricature, and (2) a landmark‑space loss that penalizes excessive deviation of warped landmarks from the original geometry. This dual‑space supervision balances the need for dramatic exaggeration with the requirement that the caricature remains recognizable.

Training optimizes a composite objective comprising adversarial losses for both style and geometric discriminators, reconstruction losses for the auto‑encoders, latent‑code cycle losses, and the two identity losses. Regularization terms keep style and warp magnitudes within reasonable bounds.

Experiments on the CelebA‑Caricature dataset and a proprietary high‑resolution caricature collection demonstrate that MW‑GAN outperforms state‑of‑the‑art methods such as WarpGAN and CariGANs. Quantitatively, it achieves lower Fréchet Inception Distance (FID) and higher Learned Perceptual Image Patch Similarity (LPIPS) scores, indicating better realism and diversity. User studies confirm superior performance in perceived texture variety, exaggeration diversity, and identity retention. Ablation studies reveal that removing the bidirectional architecture or the landmark‑space identity loss significantly degrades results, underscoring their importance.

In summary, the paper makes three major contributions: (1) it is the first to explicitly model and control multiple geometric exaggeration styles alongside texture styles in caricature generation; (2) it proposes a dual‑way framework with latent‑code cycle consistency that yields semantically meaningful style and warp codes; and (3) it introduces identity preservation in both image and landmark domains, markedly improving the fidelity of generated caricatures. The approach not only advances automatic caricature synthesis but also offers a blueprint for other tasks requiring simultaneous style transfer and shape manipulation, such as fashion design, avatar creation, and 3D model stylization.

Comments & Academic Discussion

Loading comments...

Leave a Comment