Relational Models of Microarchitectures for Formal Security Analyses

💡 Research Summary

The paper addresses the growing gap between hardware optimizations and software security by introducing Leakage Containment Models (LCMs), a class of formal security contracts that explicitly capture microarchitectural leakage. The authors argue that existing contracts are too abstract: they describe only ISA-visible state and therefore cannot reason about side-channel or covert-channel attacks that arise from microarchitectural features such as caches, branch predictors, out-of-order execution, and multi-core coherence.

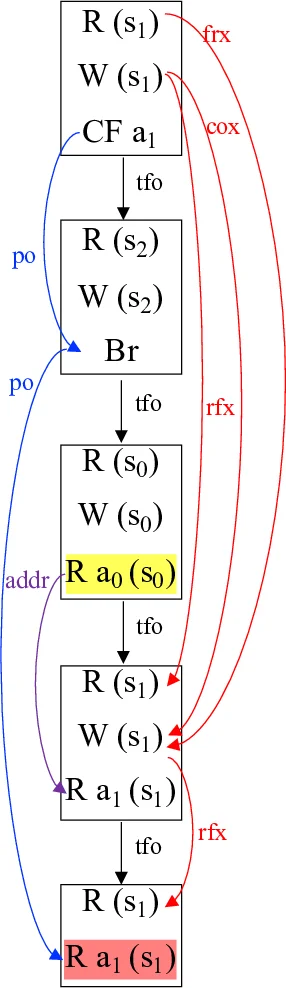

LCMs build on well-established axiomatic memory consistency models (MCMs). Reusing the same relational vocabulary (rf, co, fr, po, ppo, dep, fence, etc.), the authors define two distinct semantics for any program:

- Architectural semantics – the set of all ISA‑consistent executions derived from a given MCM. These are the executions that software can observe in the absence of microarchitectural effects.

- Microarchitectural semantics – the set of executions that actually occur on a concrete processor implementation, taking into account hardware mechanisms that may cause additional information flows (e.g., cache hits/misses, speculative windows).
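As a minimal sketch of this relational vocabulary (not taken from the paper), the Python below encodes the classic store-buffering litmus test as sets of event pairs, derives from-reads as `fr = rf⁻¹ ; co`, and checks two acyclicity axioms. The event names, the helper functions, and the simplified TSO-style check (which simply drops write-to-read program order) are assumptions made for illustration.

```python
from itertools import product


def compose(r, s):
    """Relational composition r ; s over sets of (a, b) pairs."""
    return {(a, d) for (a, b), (c, d) in product(r, s) if b == c}


def inverse(r):
    """Transpose of a relation."""
    return {(b, a) for (a, b) in r}


def is_acyclic(edges):
    """Return True if the directed graph given by `edges` has no cycle."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set())
    visiting, done = set(), set()

    def visit(node):
        if node in done:
            return True
        if node in visiting:
            return False  # back edge: a cycle exists
        visiting.add(node)
        for succ in graph[node]:
            if not visit(succ):
                return False
        visiting.discard(node)
        done.add(node)
        return True

    return all(visit(node) for node in graph)


# Store buffering (hypothetical event names):
#   Thread 0: Wx (x = 1); Ry (reads y == 0)
#   Thread 1: Wy (y = 1); Rx (reads x == 0)
# Ix and Iy are the initial writes to x and y.
po = {("Wx", "Ry"), ("Wy", "Rx")}   # program order
rf = {("Iy", "Ry"), ("Ix", "Rx")}   # reads-from: both loads see the initial value
co = {("Ix", "Wx"), ("Iy", "Wy")}   # coherence order per location
fr = compose(inverse(rf), co)       # from-reads, derived rather than given

# Sequential consistency: po, rf, co, and fr together must be acyclic.
sc_allowed = is_acyclic(po | rf | co | fr)

# Simplified TSO-style check (assumption): write-to-read program order is
# not preserved, and this test has no other po edges, so ppo is empty.
tso_allowed = is_acyclic(rf | co | fr)
```

Under these assumptions the outcome is forbidden by the sequential-consistency check, because the four events form a cycle through `po` and `fr`, but allowed by the relaxed check, which is the familiar store-buffering behavior.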

The core insight is that a program is vulnerable to hardware‑induced leakage precisely when there exists an architectural execution whose corresponding microarchitectural execution deviates in a way that can be observed (e.g., timing differences). This deviation is formalized as a mismatch between the two semantics, and the mismatch is captured using the same axioms that define MCM consistency, ensuring that the model remains mathematically rigorous and amenable to automated reasoning.
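One loose way to picture this mismatch, offered as an illustration rather than as the paper's formal definition, is to treat each semantics as a set of information-flow edges and report the edges on which they disagree. The function name `mismatches`, the two relation names, and the Spectre-V1-style event labels below are all hypothetical.

```python
def mismatches(arch_flows, micro_flows):
    """Split the disagreement between two relations into its two directions."""
    return {
        "micro_only": micro_flows - arch_flows,  # flows the hardware adds
        "arch_only": arch_flows - micro_flows,   # flows the hardware omits
    }


# Hypothetical scenario: the bounds check fails architecturally, so no
# architectural event reads the secret. Microarchitecturally, a transient
# load still reads it and leaves a footprint in a cache line.
arch_flows = {("store_idx", "load_idx")}
micro_flows = {
    ("store_idx", "load_idx"),
    ("secret", "transient_load"),
    ("transient_load", "cache_line"),
}

report = mismatches(arch_flows, micro_flows)
leaky = bool(report["micro_only"])  # any hardware-only flow counts as a candidate leak
```

In this toy encoding the two hardware-only edges are the candidate leak; a real LCM would state the condition as axioms over the execution relations rather than as a plain set difference.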

To demonstrate feasibility, the authors instantiate LCMs for two well‑known Spectre variants (V1 and V4). They show that the classic MCM predicates (such as Total Store Order for x86) are insufficient to explain the observed leaks, whereas the extended LCM semantics correctly predict the existence of a covert channel.

Based on this formal foundation, the paper presents CLOC, a static analysis tool that automatically extracts an event‑structure representation from source code (or LLVM IR), enumerates candidate executions, and checks for microarchitectural mismatches using a configurable LCM description. When a leak is detected, CLOC proposes fence insertions to eliminate the offending speculative paths. The tool was evaluated on 15 Spectre‑V1 and 14 Spectre‑V4 micro‑benchmarks as well as the real‑world libsodium cryptographic library. CLOC identified all known vulnerabilities and required only a handful of fences per program, demonstrating both precision and practicality.
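The fence-insertion step can be pictured as a covering problem: each leaking speculative path offers a set of program points where a fence would cut it, and the goal is to cut every path with few fences. The sketch below uses a plain greedy heuristic for that problem. It is not CLOC's algorithm, and the function name `choose_fences` and the integer program points are assumptions for illustration.

```python
def choose_fences(leaky_paths):
    """Greedily pick fence positions so that every leaking path is cut.

    `leaky_paths` is an iterable of sets; each set holds the program points
    at which a fence would block that path. Ties go to the smallest point.
    """
    remaining = [set(path) for path in leaky_paths]
    if any(not path for path in remaining):
        raise ValueError("a path with no candidate fence point cannot be cut")

    fences = []
    while remaining:
        candidates = sorted(set().union(*remaining))
        # max() returns the first maximal item, so sorted order breaks ties.
        best = max(candidates, key=lambda pos: sum(pos in p for p in remaining))
        fences.append(best)
        remaining = [p for p in remaining if best not in p]
    return fences


# Three hypothetical leaking paths; a fence at point 3 cuts the first two.
fences = choose_fences([{2, 3}, {3, 4}, {7}])
```

A greedy choice is not guaranteed to be minimal in general, but it shows why overlapping speculative paths can often be repaired with a small number of fences.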

To support further research, the authors also provide SUBROSA, an Alloy‑based toolkit that mechanizes LCM definitions, allows users to model new microarchitectural features, and automatically verifies whether a given hardware design satisfies a specified LCM contract. This bridges the gap between hardware description languages and high‑level security contracts, enabling contract synthesis directly from hardware specifications.

The contributions are summarized as follows:

- Axiomatic security contracts – LCMs extend MCM vocabularies to express both transient and non‑transient leakage, supporting out‑of‑order and multi‑core processors.

- Formal leakage definition – By grounding leakage in the mismatch between architectural and microarchitectural semantics, the definition is both expressive and amenable to automated detection.

- Static analysis implementation (CLOC) – Demonstrates that LCMs can be turned into a practical tool that scales to realistic codebases and automatically repairs detected leaks.

- SUBROSA toolkit – Provides a reusable, mechanized framework for modeling LCMs and checking hardware designs against them.

The paper acknowledges two limitations. First, the current focus is on memory-system-related leaks, leaving power and electromagnetic side channels for future work. Second, exhaustively enumerating candidate executions may become costly for very large programs, which suggests a need for abstraction or compositional techniques. Nonetheless, the work establishes a solid theoretical foundation for integrating microarchitectural considerations into formal security contracts, opening a path toward hardware-aware software verification and contract-driven processor design.
