3D Shape Variational Autoencoder Latent Disentanglement via Mini-Batch Feature Swapping for Bodies and Faces

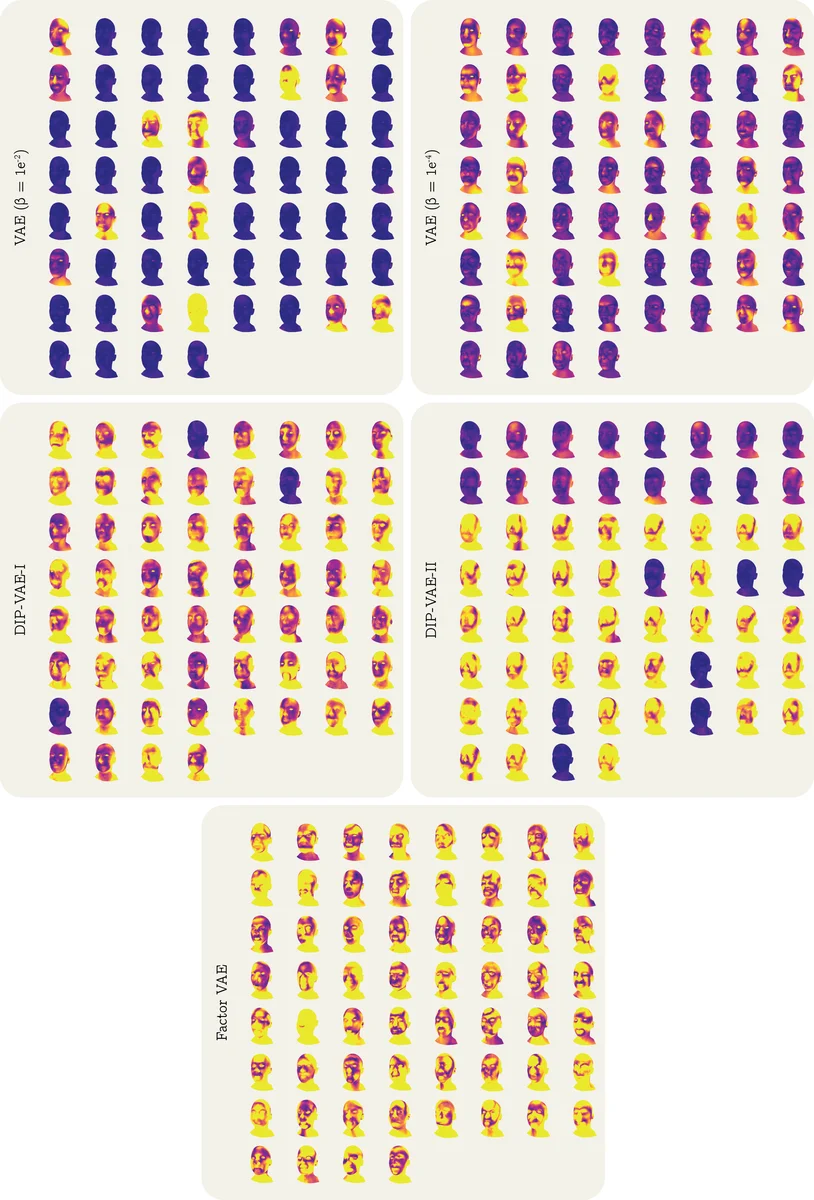

Learning a disentangled, interpretable, and structured latent representation in 3D generative models of faces and bodies is still an open problem. The problem is particularly acute when control over identity features is required. In this paper, we propose an intuitive yet effective self-supervised approach to train a 3D shape variational autoencoder (VAE) which encourages a disentangled latent representation of identity features. Curating the mini-batch generation by swapping arbitrary features across different shapes makes it possible to define a loss function that leverages known differences and similarities in the latent representations. Experiments conducted on 3D meshes show that state-of-the-art methods for latent disentanglement are not able to disentangle identity features of faces and bodies. Our proposed method properly decouples the generation of such features while maintaining good representation and reconstruction capabilities.

💡 Research Summary

The paper addresses the long‑standing challenge of learning a disentangled, interpretable latent space for 3D generative models of human faces and bodies, with a particular focus on separating identity‑related features. The authors propose a self‑supervised training scheme for a mesh‑based variational auto‑encoder (VAE) that leverages a novel mini‑batch construction technique called “feature swapping.” In this procedure, predefined semantic parts of a mesh (e.g., nose, ears, arms, legs) are manually labeled on a template mesh. Because all meshes share the same topology and point‑wise correspondence, the same vertex indices correspond to the same semantic part across the entire dataset. During training, the authors create mini‑batches by swapping the vertices belonging to a selected part between different meshes, thereby generating new meshes that contain a mixture of identity information: the swapped part originates from one subject while the remaining geometry comes from another.
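The swapping step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function name `swap_feature`, the cyclic-shift pairing of donor meshes, and the plain copy of vertex coordinates are all assumptions made for the example. It relies only on the property stated in the summary, namely that every mesh shares the same vertex indexing.

```python
import numpy as np

def swap_feature(meshes, part_idx, rng):
    """Swap the vertices of one semantic part across a mini-batch.

    meshes   : array of shape (B, V, 3), all in point-wise correspondence
    part_idx : integer indices of the vertices belonging to the part
    rng      : numpy.random.Generator

    Returns the swapped batch and `source`, where source[i] is the index
    of the mesh that donated the part to mesh i.
    Hypothetical helper: the pairing strategy is an assumption.
    """
    meshes = np.asarray(meshes, dtype=float)
    batch = meshes.shape[0]
    if batch < 2:
        raise ValueError("need at least two meshes to swap a feature")

    # A cyclic shift by a non-zero offset guarantees that every mesh
    # receives the part from a different mesh.
    shift = int(rng.integers(1, batch))
    source = (np.arange(batch) + shift) % batch

    swapped = meshes.copy()
    swapped[:, part_idx] = meshes[source][:, part_idx]
    return swapped, source
```

After the call, mesh `i` in the returned batch keeps its own geometry everywhere except at `part_idx`, where it carries the vertices of mesh `source[i]`. The `source` array is the "known" pairing that a latent consistency term can later exploit.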

The key insight is that, after swapping, the encoder should produce latent codes where the sub‑vector responsible for the swapped part (denoted $z_f$) remains consistent with the source of that part, while the complementary sub‑vector ($z_c$) should reflect the rest of the mesh. To enforce this, the authors add a “latent consistency loss” to the standard VAE objective. This loss penalizes differences between the $z_f$ vectors of the swapped meshes and the original source meshes, and simultaneously penalizes differences between the $z_c$ vectors of meshes that share the same background geometry. The total loss therefore consists of four terms: (1) a reconstruction term (mean‑squared error between input and output vertices), (2) a KL‑divergence term pushing the approximate posterior toward a standard Gaussian prior, (3) a Laplacian smoothing term that encourages smooth surface geometry, and (4) the new latent consistency term weighted by a hyper‑parameter $\gamma$.
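One way to read the loss description above is sketched below in NumPy. It is an illustration under stated assumptions rather than the paper's formulation: the squared-distance penalty, the function names `latent_consistency_loss` and `total_loss`, and the extra weights `kl_weight` and `laplacian_weight` are hypothetical. Only the split into a part sub-vector and a complementary sub-vector, the four loss terms, and the weight `gamma` on the consistency term come from the summary.

```python
import numpy as np

def latent_consistency_loss(z_swapped, z_original, source, feat_dims):
    """Penalize latent mismatches after a feature swap (illustrative).

    z_swapped  : (B, D) latent codes of the swapped meshes
    z_original : (B, D) latent codes of the unswapped meshes
    source     : (B,) index of the mesh that donated the part to mesh i
    feat_dims  : latent dimensions assigned to the swapped part (z_f)
    """
    z_swapped = np.asarray(z_swapped, dtype=float)
    z_original = np.asarray(z_original, dtype=float)

    feat_mask = np.zeros(z_swapped.shape[1], dtype=bool)
    feat_mask[feat_dims] = True

    # z_f of the swapped mesh should match z_f of the donor mesh.
    diff_f = z_swapped[:, feat_mask] - z_original[source][:, feat_mask]
    # z_c of the swapped mesh should match z_c of the mesh that
    # supplied the remaining (background) geometry.
    diff_c = z_swapped[:, ~feat_mask] - z_original[:, ~feat_mask]

    # Squared distance per sample, averaged over the batch (an assumption).
    return np.mean(np.sum(diff_f**2, axis=1)) + np.mean(np.sum(diff_c**2, axis=1))

def total_loss(recon, kl, laplacian, consistency, gamma,
               kl_weight=1.0, laplacian_weight=1.0):
    """Combine the four terms; only `gamma` is named in the text,
    the other weights are hypothetical placeholders."""
    return recon + kl_weight * kl + laplacian_weight * laplacian + gamma * consistency
```

With perfectly disentangled codes the consistency term is zero, so any non-zero value measures how much information about the swapped part leaks into $z_c$, or how much of the remaining geometry leaks into $z_f$.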

The underlying VAE architecture follows the state‑of‑the‑art mesh VAE of
