The Computational Complexity of Finding Arithmetic Expressions With and Without Parentheses

💡 Research Summary

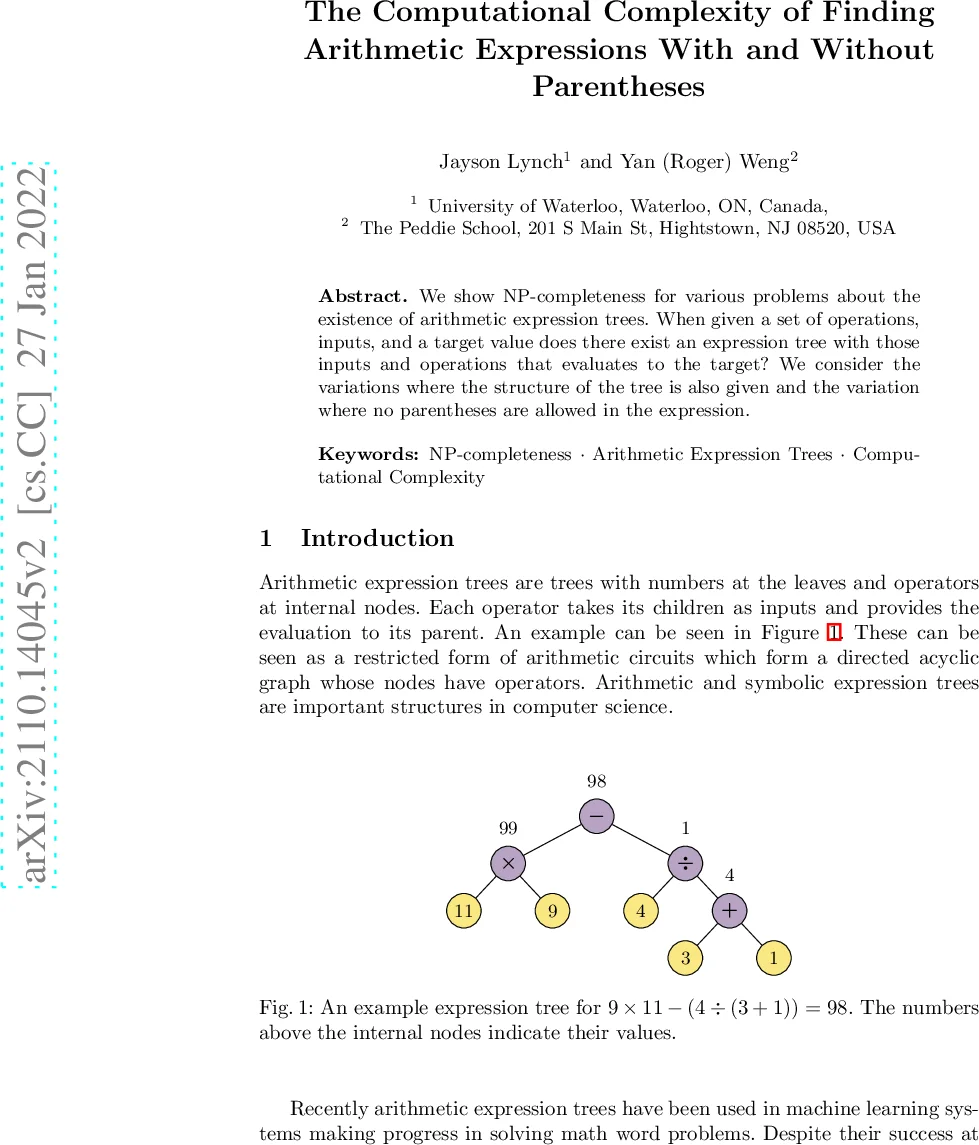

The paper investigates the computational complexity of constructing arithmetic expression trees under two new constraints: (1) Enforced Parentheses (AEC‑EP), where the exact tree structure (i.e., a full parenthesization) is given in advance, and (2) No Parentheses (AEC‑NP), where expressions must be written without any parentheses and are evaluated according to the standard precedence rules (multiplication and division before addition and subtraction).
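To make the two variants concrete, here is a minimal brute-force sketch in Python for tiny instances. The names `eval_flat`, `eval_tree`, `solve_np`, and `solve_ep` are hypothetical, operators are written in ASCII (`+ - * /`), and a tree shape is assumed to be encoded as nested pairs with `None` marking a leaf. Exact `Fraction` arithmetic avoids rounding under division. The search is exponential and only illustrates the semantics of each variant, not any algorithm from the paper.

```python
from fractions import Fraction
from itertools import permutations, product

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "/": lambda a, b: a / b,  # Fraction raises ZeroDivisionError on a zero divisor
}

def eval_flat(nums, ops):
    """Evaluate nums[0] ops[0] nums[1] ... with * and / binding tighter than + and -.

    Operators of equal precedence associate left to right. Returns None on division by zero.
    """
    terms, signs = [Fraction(nums[0])], [1]
    try:
        for op, x in zip(ops, nums[1:]):
            if op in "*/":
                # Fold the operand into the current multiplicative term.
                terms[-1] = OPS[op](terms[-1], Fraction(x))
            else:
                # + or - starts a new additive term with the matching sign.
                terms.append(Fraction(x))
                signs.append(1 if op == "+" else -1)
    except ZeroDivisionError:
        return None
    return sum(s * t for s, t in zip(signs, terms))

def count_leaves(shape):
    return 1 if shape is None else count_leaves(shape[0]) + count_leaves(shape[1])

def eval_tree(shape, nums, ops):
    """Evaluate a fixed shape: leaves take nums left to right, internal nodes take ops in post-order."""
    nums_it, ops_it = iter(nums), iter(ops)

    def go(node):
        if node is None:
            return Fraction(next(nums_it))
        left = go(node[0])
        right = go(node[1])
        return OPS[next(ops_it)](left, right)

    try:
        return go(shape)
    except ZeroDivisionError:
        return None

def solve_np(nums, target, allowed):
    """AEC-NP by brute force: try every ordering and operator sequence of a flat expression."""
    for perm in set(permutations(nums)):
        for ops in product(allowed, repeat=len(nums) - 1):
            if eval_flat(perm, ops) == target:
                return perm, ops
    return None

def solve_ep(nums, target, allowed, shape):
    """AEC-EP by brute force: the tree shape is fixed, only leaves and operators are chosen."""
    if count_leaves(shape) != len(nums):
        raise ValueError("shape must have exactly one leaf per input number")
    for perm in set(permutations(nums)):
        for ops in product(allowed, repeat=len(nums) - 1):
            if eval_tree(shape, perm, ops) == target:
                return perm, ops
    return None
```

For the inputs 1, 2, 3 with operators {+, ×}, the target 9 is reachable as (1 + 2) × 3 once the shape `((None, None), None)` is prescribed, whereas no flat expression over those inputs reaches it (the flat values are only 5, 6, and 7).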

The classic problem, Arithmetic Expression Construction (AEC‑Std), asks: given a multiset A of input numbers, a target value t, and a set of allowed operators drawn from {+, –, ×, ÷}, can the numbers in A, each used exactly once, be combined with the allowed operators under any parenthesization to obtain t? Prior work by Lynch & Wong (2020) gave NP‑completeness results for many operator subsets. This paper extends that line of work by analyzing how the additional structural constraints affect complexity.
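A brute-force decision procedure for AEC‑Std on small inputs repeatedly picks two subresults, combines them with an allowed operator, and recurses until one value remains; every expression tree over the inputs arises this way. The Python sketch below uses hypothetical names (`solve_std`, `_search`, `_combine`) and exact `Fraction` arithmetic. Its running time is exponential in the number of inputs, which is what the hardness results lead one to expect for exact solvers in general.

```python
from fractions import Fraction
from itertools import combinations

def _combine(a, ea, b, eb, allowed):
    """Yield (value, text) for each allowed way to combine two subresults."""
    if "+" in allowed:
        yield a + b, f"({ea} + {eb})"
    if "*" in allowed:
        yield a * b, f"({ea} * {eb})"
    if "-" in allowed:
        # Subtraction is not commutative, so try both operand orders.
        yield a - b, f"({ea} - {eb})"
        yield b - a, f"({eb} - {ea})"
    if "/" in allowed:
        # Same for division; skip zero divisors.
        if b != 0:
            yield a / b, f"({ea} / {eb})"
        if a != 0:
            yield b / a, f"({eb} / {ea})"

def _search(items, target, allowed):
    if len(items) == 1:
        value, text = items[0]
        return text if value == target else None
    for i, j in combinations(range(len(items)), 2):
        (a, ea), (b, eb) = items[i], items[j]
        rest = [items[k] for k in range(len(items)) if k != i and k != j]
        for value, text in _combine(a, ea, b, eb, allowed):
            found = _search(rest + [(value, text)], target, allowed)
            if found is not None:
                return found
    return None

def solve_std(nums, target, allowed="+-*/"):
    """Return an expression using every number exactly once that equals target, or None."""
    items = [(Fraction(n), str(n)) for n in nums]
    return _search(items, Fraction(target), allowed)
```

For a “24 game” instance, `solve_std([4, 7, 8, 8], 24)` returns some expression equal to 24; (7 − 8 ÷ 8) × 4 is one such solution.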

A comprehensive table (Table 1) lists the complexity classification for all 15 non‑empty subsets of {+, –, ×, ÷} under both AEC‑EP and AEC‑NP. The main findings are:

- Single‑operator sets {+} and {×} are solvable in polynomial time for both variants.

- The single‑operator set {–} is solvable in polynomial time for AEC‑NP (no parentheses) but weakly NP‑complete for AEC‑EP (parentheses forced).

- The single‑operator set {÷} is strongly NP‑complete for both variants, reflecting the fact that division lets intermediate values (their numerators and denominators) become exponentially large in magnitude even when every input is small.

- All two‑operator combinations except {×, ÷} are weakly NP‑complete for both variants; {×, ÷} remains strongly NP‑complete.

- Every three‑operator set, as well as the full four‑operator set, is weakly NP‑complete for both variants.
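The polynomial‑time cases in this list admit very short decision procedures. The following Python sketch uses hypothetical function names; the {–} case relies on the left‑to‑right evaluation of a flat expression a₁ − a₂ − ⋯ − aₙ, which equals the first number minus the sum of all the others.

```python
from math import prod

def decide_plus(nums, target):
    # With only +, every tree shape and every flat expression evaluates to the sum.
    return sum(nums) == target

def decide_times(nums, target):
    # With only *, every tree shape and every flat expression evaluates to the product.
    return prod(nums) == target

def decide_minus_np(nums, target):
    # Without parentheses, a1 - a2 - ... - an = a1 - (a2 + ... + an), so only the
    # choice of the leading number matters: n candidates, O(n) time overall.
    total = sum(nums)
    return any(a - (total - a) == target for a in nums)
```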

The authors prove these results using the Rational Function Framework introduced by Leo et al. (2020). This framework translates integer instances into rational functions (ratios of polynomials in variables such as x and y), so that the effect of each allowed operator can be captured by algebraic manipulation of degrees and coefficients. By constructing appropriate rational‑function encodings of classic NP‑complete problems, most notably Product Partition (product‑partition‑n/2), 3‑Partition, and a variant involving division, the paper gives polynomial‑time reductions to AEC‑EP and AEC‑NP.
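The degree bookkeeping described above can be illustrated with single monomials c·xⁱ·yʲ: multiplication adds exponents and division subtracts them, so the degree of x records how many x‑carrying inputs were multiplied together. The `Monomial` class below is a hypothetical simplification for illustration only; the framework itself works with general rational functions, and sums of monomials would need a full polynomial type.

```python
from dataclasses import dataclass
from fractions import Fraction

@dataclass(frozen=True)
class Monomial:
    """coef * x**dx * y**dy (a hypothetical stand-in for the framework's rational functions)."""
    coef: Fraction
    dx: int = 0
    dy: int = 0

    def __mul__(self, other):
        # Coefficients multiply, exponents add.
        return Monomial(self.coef * other.coef, self.dx + other.dx, self.dy + other.dy)

    def __truediv__(self, other):
        # Coefficients divide, exponents subtract.
        return Monomial(self.coef / other.coef, self.dx - other.dx, self.dy - other.dy)
```

For example, multiplying the three terms 2x, 3x, and 5x yields 30x³: the exponent 3 counts the factors, independently of their coefficients.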

For example, to prove that AEC‑NP with the operator set {+, ×, ÷} is weakly NP‑hard, the authors start from a product‑partition‑n/2 instance A = {a₁,…,aₙ}, which asks whether A can be split into two halves of n/2 numbers each with equal products. Each aᵢ is turned into a term aᵢ·x, and two auxiliary monomials y^{n/2} and y^{n/2−1} are added. The target value is set to t = 2·x^{n/2}·√(∏aᵢ), twice the value that each half's product must reach. Because multiplication and division are evaluated before addition, every flat expression is a sum of products and quotients, and the construction forces any feasible expression to be exactly one addition of two large products; this forces a partition of the original numbers into two equal‑size subsets whose products are equal, i.e., a solution to the original product‑partition instance. The same construction works when the tree shape D is prescribed (AEC‑EP), showing that forcing the parenthesization does not make the problem easier.
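The source problem and the core of the intended solution can be checked by brute force on small inputs. In this Python sketch, `product_partition_half` and `half_as_monomial` are hypothetical helpers, a monomial c·x^d is encoded as the pair (c, d), and the auxiliary y‑monomials are deliberately left out because their exact role in the construction is not spelled out here.

```python
from itertools import combinations
from math import prod

def product_partition_half(a):
    """Split a into two halves of size n/2 with equal products; return None if impossible."""
    n = len(a)
    if n % 2:
        return None
    for left in combinations(range(n), n // 2):
        right = [i for i in range(n) if i not in left]
        if prod(a[i] for i in left) == prod(a[i] for i in right):
            return [a[i] for i in left], [a[i] for i in right]
    return None

def half_as_monomial(half):
    # Multiplying the terms a_i * x over one half gives (product of a_i) * x**len(half).
    return prod(half), len(half)
```

For A = {2, 3, 4, 6}, the split {2, 6} / {3, 4} gives the same monomial 12x² for both halves, so the two large products add up to 24x².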

Reductions for other operator subsets follow a similar pattern: the authors encode the required arithmetic structure into the degrees of x and y, ensuring that the only way to achieve the target polynomial is to mimic the solution of the source NP‑complete problem. When subtraction is allowed, the reductions are adapted to guarantee that the subtraction terms cancel only in the intended configuration, leading to weak NP‑hardness.

The distinction between strong and weak NP‑completeness is highlighted throughout. Strong NP‑completeness appears only when division is present (single {÷} or the pair {×, ÷}). In these cases, intermediate values can be exponentially large in magnitude even when every input number is small, so the hardness persists even when the input numbers are bounded by a polynomial in the input length. All other operator sets are only weakly NP‑complete: their hardness comes from a reduction from a subset‑sum‑like problem, and they become tractable when the numeric values are small, because pseudo‑polynomial algorithms exist.
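As an illustration of what "pseudo‑polynomial" means here, consider {–} under AEC‑EP. In a fixed tree whose internal nodes are all subtractions, each leaf's sign is determined by the shape alone: it flips every time the path from the root enters the right operand of a subtraction. If k leaves carry a + sign and S is the sum of the inputs, the instance is a yes‑instance exactly when some k inputs sum to (S + t)/2, a subset‑sum‑style question that dynamic programming solves in time polynomial in n and the magnitude of the numbers. The Python sketch below is an illustration, not taken from the paper; the function names and the nested‑pair tree encoding (`None` for a leaf) are hypothetical.

```python
def leaf_signs(shape, sign=1):
    """Signs of the leaves, left to right, in a tree whose internal nodes are all '-'."""
    if shape is None:
        return [sign]
    left, right = shape
    # The left operand keeps the current sign; the right operand of '-' flips it.
    return leaf_signs(left, sign) + leaf_signs(right, -sign)

def decide_minus_ep(nums, target, shape):
    signs = leaf_signs(shape)
    if len(signs) != len(nums):
        raise ValueError("shape must have exactly one leaf per input number")
    k = signs.count(1)  # how many inputs must appear with a + sign
    total = sum(nums)
    if (total + target) % 2:
        return False
    need = (total + target) // 2  # required sum of the +-signed inputs
    # reachable[c] = sums achievable by choosing exactly c of the inputs seen so far.
    reachable = [set() for _ in range(k + 1)]
    reachable[0].add(0)
    for a in nums:
        # Iterate c downward so each input is used at most once (0/1 knapsack order).
        for c in range(k, 0, -1):
            reachable[c] |= {s + a for s in reachable[c - 1]}
    return need in reachable[k]
```

Each set holds at most one entry per possible sum, so the work grows with the numeric range of the inputs rather than with the number of subsets.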

The paper’s contributions are threefold:

- Problem Extension – It formally defines two realistic variants of arithmetic expression construction that correspond to practical scenarios (fixed parenthesization in code generation, or flat expressions as used in many programming languages).

- Comprehensive Complexity Map – By completing the classification for every non‑empty operator subset, it provides a clear guide for researchers and practitioners about which combinations admit polynomial‑time algorithms and which are intractable.

- Methodological Innovation – It demonstrates the power of the rational‑function reduction technique, showing that many seemingly different arithmetic constraints can be captured within a unified algebraic framework.

The results have immediate relevance to fields such as automated math problem solving, neural‑network‑based expression generation, and educational puzzle design (e.g., the “24 game”). They indicate that, apart from the polynomial‑time cases ({+} and {×} in both variants, and {–} without parentheses), no algorithm that guarantees exact solutions can run in polynomial time unless P = NP, which motivates the use of heuristics, approximation, or restriction to special numeric domains.

In conclusion, the paper establishes that both enforced‑parentheses and no‑parentheses variants of arithmetic expression construction are generally NP‑complete, with division being the key operator that elevates the difficulty to strong NP‑completeness. The work deepens our theoretical understanding of arithmetic expression synthesis and sets the stage for future investigations into parameterized algorithms, approximation schemes, and integration with machine‑learning models.
