mBART: Multidimensional Monotone BART

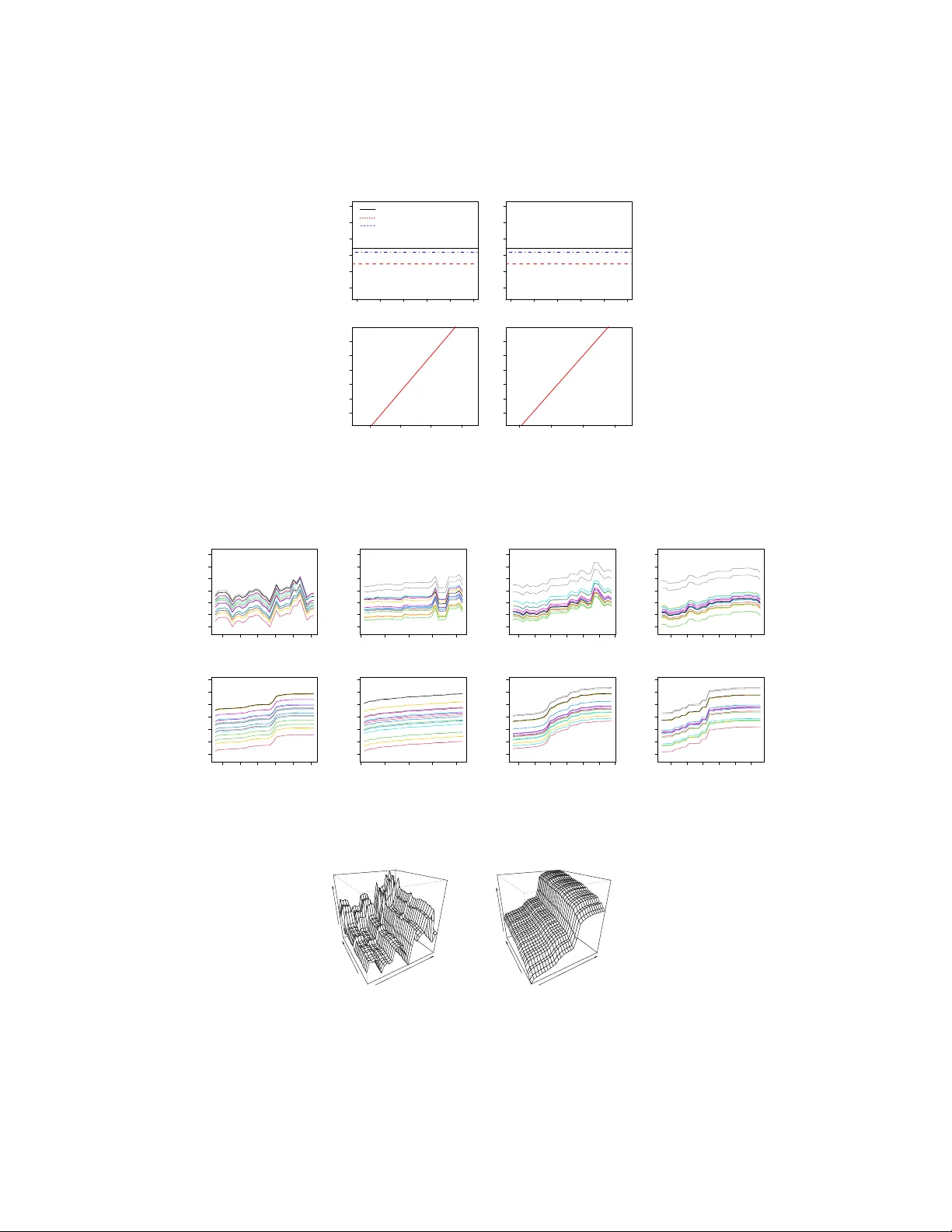

For the discovery of regression relationships between Y and a large set of p potential predictors x_1, ..., x_p, the flexible nonparametric nature of BART (Bayesian Additive Regression Trees) allows for a much richer set of possibilities than re…

Authors: Hugh A. Chipman, Edward I. George, Robert E. McCulloch