Hyperspectral Unmixing via Deep Autoencoder Networks for a Generalized Linear-Mixture/Nonlinear-Fluctuation Model

Spectral unmixing is an important task in hyperspectral image processing for separating the mixed spectral data pertaining to various materials observed in individual pixels. Recently, nonlinear spectral unmixing has received particular attention becaus…

Authors: Min Zhao, Mou Wang, Jie Chen

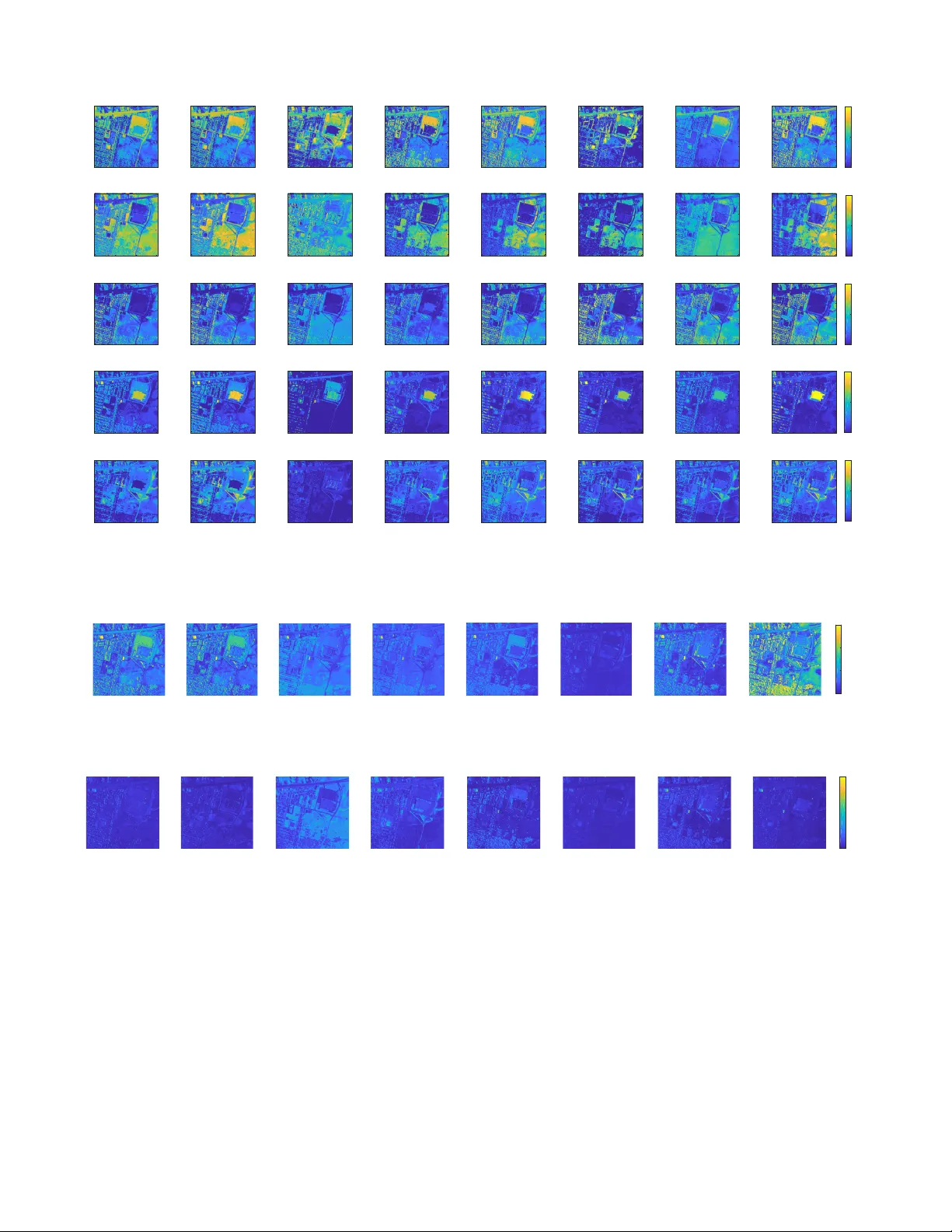

IEEE XX, VOL. XX, NO. XX, 2019

Min Zhao, Student Member, IEEE, Mou Wang, Student Member, IEEE, Jie Chen, Senior Member, IEEE, and Susanto Rahardja, Fellow, IEEE

An improved version of this work, titled “Hyperspectral Unmixing for Additive Nonlinear Models With a 3-D-CNN Autoencoder Network”, has been published in IEEE Trans. Geosci. Remote Sens. at https://ieeexplore.ieee.org/abstract/document/9503107 (Open Access). Please refer to and cite the formal published version, which uses a 3DCNN to capture spatial correlation simultaneously.

Abstract: Spectral unmixing is an important task in hyperspectral image processing for separating the mixed spectral data pertaining to the various observed materials, with the aim of analyzing the material components in observed pixels. Recently, nonlinear spectral unmixing has received particular attention in hyperspectral image processing, as there are many situations in which the linear mixture model may not be appropriate and could be advantageously replaced by a nonlinear one. Existing nonlinear unmixing approaches are often based on specific assumptions on the nonlinearity and can be less effective when used for scenes with unknown nonlinearity. This paper presents an unsupervised nonlinear spectral unmixing method that addresses a general model consisting of a linear mixture part and an additive nonlinear mixture part. The structure of a deep autoencoder network, which has a clear physical interpretation, is specifically designed to achieve this purpose. The proposed scheme benefits from the universal modeling ability of deep neural networks and learns the nonlinear relation from the data.
Extensive experiments with synthetic and real data, particularly with labeled laboratory-created data, illustrate the generality and effectiveness of this scheme compared with state-of-the-art methods.

Index Terms: Hyperspectral imaging, nonlinear spectral unmixing, deep learning, autoencoder network.

The authors are with the School of Marine Science and Technology, Northwestern Polytechnical University, China (corresponding author: J. Chen, dr.jie.chen@ieee.org).

I. INTRODUCTION

Hyperspectral imaging is a continuously growing field of study that has received considerable attention over the past decade. Hyperspectral data provide high spectral resolution over a wide spectral range that typically extends from the infrared spectrum through the visible spectrum. This rich spectral information facilitates the discrimination of different materials in the observed scene. As a result, hyperspectral imaging has been adopted for a wide range of applications, such as land use analysis, pollution monitoring, wide-area reconnaissance and field surveillance [1].

However, the spectral content of an individual pixel in a hyperspectral image often represents a mixture of several materials from the imaged scene due to multiple factors, such as the low spatial resolution of hyperspectral imaging devices, the diversity of materials in the imaged scene and multiple reflections of photons from several objects. Therefore, separating the spectra of individual pixels into a set of spectral signatures (endmembers), and determining the fractional abundances associated with each endmember, is an essential task in analyzing remotely sensed data. This process is denoted as spectral unmixing or mixed pixel decomposition [2]. Spectral unmixing methods have been developed for this purpose based on both linear and nonlinear mixture models.

Among the presently available spectral mixture models, the linear mixture model (LMM) is the most widely used.
In LMM, the incident light is assumed to be reflected by each component present in the scene only once prior to collection by the camera sensor, and the observed spectrum is thus a linear combination of the endmembers [2]. Many conventional unmixing methods based on LMM have been proposed. In [3], total variation regularization is imposed to incorporate spatial information of the hyperspectral image, and [4] considers the endmember variability problem. While the LMM is simple and physically interpretable, there are numerous conditions in which the incoming light undergoes complex interactions among the individual materials in the scene, resulting in higher-order photon interactions that introduce nonlinear effects in the mixed spectra. Consequently, the analysis of data collected under these conditions requires nonlinear unmixing methods [5].

A considerable number of studies have recently focused on addressing nonlinear unmixing problems. For example, bilinear models [6] have been developed to address second-order scattering interactions that may occur on complex vegetated surfaces, by adding extra bilinear interaction terms to the linearly composited spectrum. Such models include the Fan model [7] and the generalized bilinear model (GBM) [8]. The polynomial post-nonlinear mixture model (PNMM) applies a polynomial function to the linearly mixed data to approximate the nonlinearity of photon interactions occurring in an imaged scene [9]. A bidirectional reflectance model has been developed to describe the photon interactions of intimately mixed particles based on the fundamental principles of radiative transfer theory. This model is generally referred to as the intimate mixture model or Hapke model [10]. The multimixture pixel (MMP) model further extended the intimate mixture model by integrating it with the LMM [11].
The above cases have been generalized by considering a linear-mixture/nonlinear-fluctuation (K-Hype) model, where the nonlinear fluctuation is described by a function defined in a reproducing kernel Hilbert space (RKHS) [12]. Further extensions of this model have also been proposed with spatial regularization [13] and neighborhood-dependent contributions [14]. The multilinear mixing model (MLM) considers an infinite number of photon interactions by introducing the probability of a photon undergoing further interactions [15]. The work [16] uses a graph-based model to describe the multiple photon interactions.

However, most of the above models rely heavily on specific assumptions regarding the inherent nonlinearity of the spectral mixing, and they are therefore not well suited to scenes with unknown nonlinearity characteristics. In addition, while the K-Hype model based on the RKHS presented above and other kernel-based algorithms provide flexible nonlinear modeling, the selection of appropriate kernels and kernel parameters has been demonstrated to be a non-trivial issue that restricts the application of these approaches. Finally, all of these algorithms assume that the endmembers are known a priori, and therefore focus strictly on evaluating the abundance fractions.

In recent years, deep learning has demonstrated superior performance over classical methods in addressing various nonlinear problems. Researchers have also investigated the use of deep neural networks in hyperspectral image analysis. Particular attention has been focused on the hyperspectral image classification problem [17]–[19]. However, despite the recognized potential of neural networks for solving inverse problems, only a handful of studies have applied neural networks to the spectral unmixing problem.
Among these, classifier models have been applied to spectral unmixing [20], [21], but this approach requires a training set with known ground-truths, which must often be generated by theoretical models. In addition, autoencoder networks have been applied to the blind spectral unmixing problem. An autoencoder is a network that learns to compress an input into a short code that can be decompressed into something close to the original input. Internally, it has a hidden layer that holds the low-dimensional code used to represent the input data and reconstruct the output data. This is well suited to spectral unmixing, because unmixing can also be viewed as finding a low-dimensional representation (the abundance fractions) of hyperspectral data. For example, approaches employing autoencoder networks have exhibited good performance in determining both endmembers and abundance fractions [22]–[29]. However, these approaches are specifically designed to preprocess the input data or to address the linear unmixing problem, and therefore fail to exploit the potential of neural networks for nonlinear problems, while linear unmixing is readily addressed using classical methods.

These issues were addressed in our previous work [30], where we designed an autoencoder network for conducting blind nonlinear unmixing. That work considered a post-nonlinear spectral mixture, where the post-nonlinearity was modeled by the decoder part of the autoencoder. There, pretraining and learning rate adjustment techniques were required to ensure the effectiveness of the decoder, and the nonlinear model represented by the decoder was not sufficiently general to cover multiple nonlinear cases.

The present work addresses the deficiencies in previous works by re-examining the nonlinear mixture models and the restrictions of existing unmixing schemes based on deep neural networks.
Accordingly, this paper presents a new autoencoder network structure for blind nonlinear spectral unmixing. The highlights of this work are summarized as follows.

1) A general spectral mixture model that consists of a linear mixture component and an additive nonlinear mixture component is proposed. The significance of an endmember in the nonlinear mixture component is weighted according to its associated abundance fraction. A deep neural network is proposed to represent this nonlinear part, and it generalizes the existing related models.

2) A deep autoencoder network is designed to conduct the nonlinear unmixing based on the proposed model. The form of the inherent nonlinearity of the nonlinear mixture component is learned from the data itself, rather than relying on an assumed form. The structure of the decoder is designed with particular care so that the nonlinear interactions are imposed on endmembers weighted by abundances, which has a clear physical interpretation and covers several existing artificial models. Endmembers and abundance fractions are extracted from the outputs and weights of particular layers of the network. Extra regularizations are also imposed to enhance the unmixing performance.

3) Most existing studies have relied on numerically produced synthetic data and an intuitive inspection of the results on real data. The lack of publicly available datasets with ground-truths hampers the ability to evaluate and compare the performance of unmixing algorithms in a quantitative and objective manner. The proposed algorithm is tested using real data with ground-truths created in our laboratory. Using labeled real data provides more convincing comparison results.

The remainder of this paper is organized as follows: Section II presents the formulation of the nonlinear mixture model. Section III presents the design of the proposed autoencoder scheme for unmixing.
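Section IV validates the proposed method with experiments using synthetic and real data. Section V concludes the work and provides perspectives for future work.

II. PROBLEM FORMULATION

Notation. Normal font $x$ and $X$ denote scalars. Boldface small letters $\mathbf{x}$ denote vectors. All vectors are column vectors. Boldface capital letters $\mathbf{X}$ denote matrices. Consider an observed pixel $\mathbf{x} \in \mathbb{R}^{B}$, with $B$ denoting the number of spectral bands. $\mathbf{M} = [\mathbf{m}_1, \cdots, \mathbf{m}_R]$ denotes the $(B \times R)$ endmember matrix with endmembers $\mathbf{m}_i$, where $R$ represents the number of endmembers. $\mathbf{a} = [a_1, a_2, \cdots, a_R]^\top$ is the abundance vector associated with a pixel.

TABLE I: Relating typical nonlinear models with the generic form Eq. (8) (noise vector $\mathbf{n}$ is omitted to save space).

| Model | Expression | Form of $\Psi$ | Note |
|---|---|---|---|
| Bilinear model | $\mathbf{x} = \mathbf{M}\mathbf{a} + \sum_{i=1}^{R}\sum_{j=i+1}^{R} a_i\mathbf{m}_i \odot a_j\mathbf{m}_j$ | $\Psi = \sum_{i=1}^{R}\sum_{j=i+1}^{R} a_i\mathbf{m}_i \odot a_j\mathbf{m}_j$ | $\odot$ denotes the element-wise product |
| Post-nonlinear model | $\mathbf{x} = \mathbf{M}\mathbf{a} + \mathbf{M}\mathbf{a} \odot \mathbf{M}\mathbf{a}$ | $\Psi = \mathbf{M}\mathbf{a} \odot \mathbf{M}\mathbf{a}$ | Note $\mathbf{M}\mathbf{a} = \mathbf{m}_1 a_1 + \cdots + \mathbf{m}_R a_R$ |
| K-Hype model | $x_i = \mathbf{m}_{\lambda_i}^\top \mathbf{a} + \psi(\mathbf{m}_{\lambda_i})$ | $[\Psi]_i = \sum_{j=1}^{B} \beta_j\, \kappa(\mathbf{m}_{\lambda_i}, \mathbf{m}_{\lambda_j})$ | $\psi$ is in an RKHS with kernel $\kappa$; $\beta_j$ are coefficients to be determined; $\mathbf{a}$ is ignored in $\Psi$ |
| Multilinear mixing model | $\mathbf{x} = \mathbf{M}\mathbf{a} + p\,\mathbf{M}\mathbf{a} \odot (\mathbf{M}\mathbf{a} - \mathbf{1}) \oslash (\mathbf{1} - p\,\mathbf{M}\mathbf{a})$ | $\Psi = p\,\mathbf{M}\mathbf{a} \odot (\mathbf{M}\mathbf{a} - \mathbf{1}) \oslash (\mathbf{1} - p\,\mathbf{M}\mathbf{a})$ | $p$ is the probability of interactions; $\oslash$ denotes element-wise division |

The operator $\operatorname{blkdiag}\{\cdots\}$ forms a matrix of size $BR \times R$ from vectors $\{\mathbf{y}_i\}_{i=1}^{R} \subset \mathbb{R}^{B}$ such that

$$\operatorname{blkdiag}\{\mathbf{y}_1, \mathbf{y}_2, \cdots, \mathbf{y}_R\} = \begin{bmatrix} \mathbf{y}_1 & \mathbf{0}_B & \cdots & \mathbf{0}_B \\ \mathbf{0}_B & \mathbf{y}_2 & \cdots & \mathbf{0}_B \\ \vdots & \vdots & \ddots & \vdots \\ \mathbf{0}_B & \mathbf{0}_B & \cdots & \mathbf{y}_R \end{bmatrix} \in \mathbb{R}^{BR \times R} \tag{1}$$

with $\mathbf{0}_B$ denoting the all-zero vector of length $B$.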
When such a matrix $\mathbf{Y} = \operatorname{blkdiag}\{\mathbf{y}_1, \mathbf{y}_2, \cdots, \mathbf{y}_R\}$ is used as the weight matrix of a layer of a deep neural network, the regular matrix product maps the input $\mathbf{h} = [h_1, \cdots, h_R]^\top$ to an output of the form

$$\mathbf{Y}\mathbf{h} = \left[h_1\mathbf{y}_1^\top,\; h_2\mathbf{y}_2^\top,\; \cdots,\; h_R\mathbf{y}_R^\top\right]^\top. \tag{2}$$

The usefulness of such an operation will be clear when we relate Eq. (24) and Eq. (27) to Eq. (9). The operator $\operatorname{col}\{\mathbf{y}_1, \cdots, \mathbf{y}_N\}$ stacks its vector arguments $\{\mathbf{y}_i\}_{i=1}^{N}$ on top of each other to generate a concatenated vector given by

$$\operatorname{col}\{\mathbf{y}_1, \cdots, \mathbf{y}_N\} = [\mathbf{y}_1^\top, \cdots, \mathbf{y}_N^\top]^\top = \begin{bmatrix} \mathbf{y}_1 \\ \vdots \\ \mathbf{y}_N \end{bmatrix}. \tag{3}$$

We first consider the linear mixing model, where each observed pixel is assumed to be a linear combination of the endmembers weighted by their associated abundances:

$$\mathbf{x} = \mathbf{M}\mathbf{a} + \mathbf{n}, \tag{4}$$

where $\mathbf{n} \in \mathbb{R}^{B}$ is an additive noise vector. As the abundances represent the relative fractions of each material, they are required to satisfy the abundance non-negativity constraint (ANC), Eq. (5), and the abundance sum-to-one constraint (ASC), Eq. (6):

$$\forall i: \; a_i \geq 0 \tag{5}$$

$$\sum_{i=1}^{R} a_i = 1. \tag{6}$$

In this work, we consider the following general mixing mechanism:

$$\mathbf{x} = \mathbf{M}\mathbf{a} + \Psi(\mathbf{M}, \mathbf{a}) + \mathbf{n}, \tag{7}$$

which consists of a linear mixture of endmembers $\mathbf{M}$ with abundance fractions $\mathbf{a}$, and a nonlinear fluctuation $\Psi$ that defines the interactions of $\mathbf{M}$ parameterized by $\mathbf{a}$. Several existing typical nonlinear models are summarized in Table I. We revise the mixture model defined in Eq. (7) to provide a more tractable form as follows:

$$\mathbf{x} = \mathbf{M}\mathbf{a} + \Psi(a_1\mathbf{m}_1, a_2\mathbf{m}_2, \cdots, a_R\mathbf{m}_R) + \mathbf{n} = \mathbf{M}\mathbf{a} + \Psi(\mathbf{M}\operatorname{diag}(\mathbf{a})) + \mathbf{n}, \tag{8}$$

where $\Psi$ represents the nonlinear interaction between the endmembers $\mathbf{M}$ weighted by the associated abundances $\mathbf{a}$. Eq. (8) is a general model that yields the models listed in Table I under different choices of $\Psi$. We refer to this model as a generalized linear-mixture/nonlinear-fluctuation model.
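To make the generic form in Eq. (8) concrete, the following minimal sketch evaluates $\mathbf{x} = \mathbf{M}\mathbf{a} + \Psi(a_1\mathbf{m}_1, \cdots, a_R\mathbf{m}_R)$ for a pluggable $\Psi$ in plain Python. The helper names (`satisfies_anc_asc`, `weighted_endmembers`, `psi_bilinear`, `psi_post_nonlinear`, `generalized_mixture`) and the toy numbers are illustrative assumptions, not part of the original work. The two choices of $\Psi$ follow the bilinear and post-nonlinear rows of Table I, and the noise term is omitted.

```python
import math

def satisfies_anc_asc(a, tol=1e-9):
    """Check the ANC (Eq. (5)) and the ASC (Eq. (6)) for an abundance vector."""
    return all(v >= 0.0 for v in a) and math.isclose(sum(a), 1.0, abs_tol=tol)

def weighted_endmembers(endmembers, a):
    """Return [a_1 m_1, ..., a_R m_R]; each endmember is a length-B list."""
    return [[a_i * v for v in m_i] for m_i, a_i in zip(endmembers, a)]

def psi_bilinear(weighted):
    """Bilinear row of Table I: sum over i < j of (a_i m_i) * (a_j m_j), element-wise."""
    B = len(weighted[0])
    out = [0.0] * B
    for i in range(len(weighted)):
        for j in range(i + 1, len(weighted)):
            for b in range(B):
                out[b] += weighted[i][b] * weighted[j][b]
    return out

def psi_post_nonlinear(weighted):
    """Post-nonlinear row of Table I: (Ma) * (Ma), element-wise."""
    linear = [sum(band) for band in zip(*weighted)]
    return [v * v for v in linear]

def generalized_mixture(endmembers, a, psi):
    """Noise-free Eq. (8): x = Ma + Psi(a_1 m_1, ..., a_R m_R)."""
    weighted = weighted_endmembers(endmembers, a)
    linear = [sum(band) for band in zip(*weighted)]   # Ma, band by band
    return [lin + nl for lin, nl in zip(linear, psi(weighted))]

# Toy example (hypothetical values): B = 2 bands, R = 2 endmembers.
endmembers = [[0.5, 0.25], [1.0, 0.5]]
abundances = [0.5, 0.5]
assert satisfies_anc_asc(abundances)
x_bilinear = generalized_mixture(endmembers, abundances, psi_bilinear)
x_post = generalized_mixture(endmembers, abundances, psi_post_nonlinear)
print(x_bilinear, x_post)
```

Passing $\Psi$ as a function of the abundance-weighted endmembers mirrors the point of Eq. (8): the linear part stays fixed while only the interaction term changes between models.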
This form suggests that the nonlinear interactions of material signatures are in proportion to the abundance fractions of each material. This is reasonable because, for instance, a material with a negligible abundance will contribute little to either the linear component or the nonlinear component of $\mathbf{x}$. Several existing nonlinear models can be considered as specific cases of Eq. (8) under different definitions of $\Psi$. Typical nonlinear mixing models and the relations between these models and Eq. (8) are summarized in Table I. With the exception of K-Hype, these models are designed manually to capture the assumed nonlinearities.

The linear-mixture/nonlinear-fluctuation model used by the K-Hype algorithm is relatively more general, and has some similarities with Eq. (8). However, in addition to the non-trivial issue associated with the selection of the kernel and kernel parameters discussed above, this model suffers from the use of a nonlinear fluctuation function that is independent of the abundance fractions. Hence, the endmembers contribute equally to the nonlinear component of the observed spectrum. The present model addresses this restriction by explicitly including the abundance fractions of the endmembers. In addition, the restriction associated with the selection of kernels and kernel parameters is addressed in the proposed approach by not assigning $\Psi$ in Eq. (8) any specific form. Instead, we devise a method to learn it from the data itself via an autoencoder network.

To facilitate the presentation of the structure of the autoencoder network, we write Eq. (7) in the following equivalent form:

$$\mathbf{x} = T(\mathbf{M}_D\mathbf{a}) + \Psi(\mathbf{M}_D\mathbf{a}) + \mathbf{n} \tag{9}$$

where

$$\mathbf{M}_D = \operatorname{blkdiag}\{\mathbf{m}_1, \mathbf{m}_2, \cdots, \mathbf{m}_R\} \in \mathbb{R}^{BR \times R} \tag{10}$$

and $T: \mathbb{R}^{BR} \mapsto \mathbb{R}^{B}$ is a step-wise summation operator, i.e., for a given vector $\mathbf{y} \in \mathbb{R}^{BR}$,

$$T(\mathbf{y}) = \left(\sum_{i=1}^{R} y_{B(i-1)+1},\; \cdots,\; \sum_{i=1}^{R} y_{B(i-1)+B}\right)^{\top}. \tag{11}$$

With the above notation, we have

$$\mathbf{M}_D\mathbf{a} = \operatorname{col}\{a_1\mathbf{m}_1, a_2\mathbf{m}_2, \cdots, a_R\mathbf{m}_R\}, \tag{12}$$

and

$$T(\mathbf{M}_D\mathbf{a}) = \mathbf{M}\mathbf{a}. \tag{13}$$

The linear component and the nonlinear component then share the same input $\mathbf{M}_D\mathbf{a}$.

III. PROPOSED APPROACH

In this section, we present in detail the proposed method, which solves the nonlinear unmixing problem using deep autoencoder networks.

A. General structure

An autoencoder network consists of two parts, namely an encoder and a decoder. The encoder $f_E$ compresses the input $\mathbf{x}$ into a low-dimensional representation $\mathbf{h} \in \mathbb{R}^{R}$, i.e.,

$$\mathbf{h} = f_E(\mathbf{x}), \tag{14}$$

with $f_E: \mathbb{R}^{B \times 1} \to \mathbb{R}^{R \times 1}$. Recall that $B$ represents the number of bands and $R$ denotes the number of endmembers. The decoder $f_D$ decompresses the hidden representation vector $\mathbf{h}$ to reconstruct the original input data, i.e.,

$$\hat{\mathbf{x}} = f_D(\mathbf{h}), \tag{15}$$

with $f_D: \mathbb{R}^{R \times 1} \to \mathbb{R}^{B \times 1}$. The network learns the parameters and representations by minimizing the average reconstruction error between each input $\mathbf{x}_i$ and its reconstructed counterpart $\hat{\mathbf{x}}_i = f_D(f_E(\mathbf{x}_i))$, given by

$$L(\mathbf{x}, \hat{\mathbf{x}}) = \frac{1}{N}\sum_{i=1}^{N} \left\|\hat{\mathbf{x}}_i - \mathbf{x}_i\right\|^2. \tag{16}$$

With the output of the encoder $\mathbf{h} \in \mathbb{R}^{R}$, the decoder in this work is designed to reconstruct the input $\mathbf{x}$ with the following specific structure:

$$\hat{\mathbf{x}} = T(\mathbf{V}^{(1)}\mathbf{h}) + \Phi(\mathbf{V}^{(1)}\mathbf{h}) \tag{17}$$

where $\mathbf{V}^{(1)}$ are the weights of the first layer of the decoder, to be defined in Eq. (23), and $\Phi$ is the nonlinear function constructed by the nonlinear part of our decoder. It is expected that, after the learning process, $\Phi$ mimics the generative model $\Psi$. Comparing this structure to Eq. (9), the decoder produces its output in accordance with this model. Therefore, after the network parameters are learnt from data, blind unmixing of the same input data can be conducted by:

$$\text{Abundance estimation}: \; \mathbf{h} \Rightarrow \hat{\mathbf{a}} \tag{18}$$

$$\text{Endmember extraction}: \; \mathbf{V}^{(1)} \Rightarrow \hat{\mathbf{M}}_D. \tag{19}$$

Both the encoder and the decoder can be either shallow or deep, but it is generally believed that deep networks possess a superior modeling capability. The proposed autoencoder network is illustrated in Figure 1. We elaborate the design of the encoder and the decoder in the following subsections.

B. Encoder

In this work, a regular deep network is designed as the encoder, with the specific structure reported in the upper part of Table II. No specific constraints are imposed on the encoder, in order to fully use the capacity of the network and reduce the information loss. The number of units of the input layer is the same as the number of spectral bands $B$, and the number of units of the last layer is the number of endmembers $R$. Note that the number of endmembers $R$ is assumed to be known a priori in our work. The four fully connected layers gradually narrow down from the input layer of dimension $B$ until reaching the size $R$. The first three hidden layers adopt the same activation function $\phi$, for which candidates include Sigmoid, ReLU, and Leaky ReLU (LReLU); the last hidden layer has no activation. ReLU is one of the most notable activation functions in modern deep learning systems, and LReLU is considered an improved version as it has a nonzero gradient for all inputs. We have conducted extensive experiments to validate the enhanced performance of LReLU. Hence, LReLU is preferred in this work. The relation between the input and the output of layer $i$ of the encoder is given by

$$\mathbf{h}^{(i)} = \phi\left(\mathbf{U}^{(i)}\mathbf{h}^{(i-1)} + \mathbf{b}^{(i)}\right), \tag{20}$$

where $\mathbf{h}^{(i-1)}$ and $\mathbf{h}^{(i)}$ represent the outputs of the previous layer and the current layer, respectively, and $\mathbf{U}^{(i)}$ and $\mathbf{b}^{(i)}$ are the weight matrix and the bias vector of the current layer.

The non-negativity and sum-to-one constraints imposed on the abundance vector $\mathbf{a}$ should be carefully addressed.
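The identities in Eqs. (12) and (13), on which the decoder structure of Eq. (17) relies, can be checked numerically. The sketch below uses plain Python with hypothetical helper names (`blkdiag`, `matvec`, `stepwise_sum`) and toy values; it builds $\mathbf{M}_D$ as in Eq. (10), forms $\mathbf{M}_D\mathbf{a}$, and applies the step-wise summation $T$ of Eq. (11).

```python
def blkdiag(vectors):
    """Eq. (1): put the i-th length-B vector in rows i*B .. i*B+B-1 of column i."""
    R, B = len(vectors), len(vectors[0])
    Y = [[0.0] * R for _ in range(B * R)]
    for i, y in enumerate(vectors):
        for b in range(B):
            Y[i * B + b][i] = y[b]
    return Y

def matvec(A, v):
    """Regular matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a_ij * v_j for a_ij, v_j in zip(row, v)) for row in A]

def stepwise_sum(y, B):
    """Eq. (11): T sums the R consecutive length-B blocks of y entry by entry."""
    R = len(y) // B
    return [sum(y[i * B + b] for i in range(R)) for b in range(B)]

# Toy example (hypothetical values): B = 3 bands, R = 2 endmembers.
m1, m2 = [1.0, 2.0, 3.0], [4.0, 5.0, 6.0]
a = [0.25, 0.75]
B = len(m1)

M_D = blkdiag([m1, m2])                  # Eq. (10), size (B*R) x R
stacked = matvec(M_D, a)                 # Eq. (12): col{a_1 m_1, a_2 m_2}
linear_part = stepwise_sum(stacked, B)   # Eq. (13): T(M_D a)

M = [list(row) for row in zip(m1, m2)]   # ordinary B x R endmember matrix
direct = matvec(M, a)                    # Ma computed directly
print(stacked, linear_part, direct)
```

The stacked vector keeps each abundance-weighted endmember separate, which is what allows the same first-layer output to feed both the summation $T$ and the nonlinear branch.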
To meet the ANC, the work [25] uses a threshold to enforce the vector to be non-negative, and the work [27] uses a non-negative autoencoder to guarantee the ANC over the whole network. The former strategy deactivates a large number of nodes in the network, so that the capability of the network is not fully utilized. The latter strategy imposes strong constraints on the network and makes it difficult to design. For the ASC, the works [25] and [27] add a regularization term to encourage the ASC, and [23] uses a normalization operator on $\mathbf{a}$. In this work, we address the ANC and ASC using the strategy proposed in our previous work [30]. Absolute value rectification is used to enforce $\mathbf{h}$, the output of the encoder network (abundance estimation), to be non-negative. This non-negative vector is then normalized by the sum of its entries to satisfy the sum-to-one constraint, namely,

$$h_i = \frac{|h_i|}{\sum_{j=1}^{R} |h_j|}, \tag{21}$$

where $h_i$ is the $i$th element of the abundance vector $\mathbf{h}$.

Fig. 1. Diagram of the proposed system. As presented in Sec. III, $\mathbf{U}$ are the weights of the encoder, $\mathbf{h}$ is the output of the utility layer (Eq. (21)), $\mathbf{V}^{(1)}$ are the weights of the first layer of the decoder, and $\mathbf{V}_{\text{nlin}}$ denotes the weights of the nonlinear part of the decoder.

TABLE II: The structure of the network. ("Utility" denotes a layer that performs some transforms other than the regular activations on the outputs of the previous layer.)

| Part | Layer | Activation function | Units |
|---|---|---|---|
| Encoder | Input layer | - | $B$ |
| Encoder | Hidden layer | LReLU | $32R$ |
| Encoder | Hidden layer | LReLU | $16R$ |
| Encoder | Hidden layer | LReLU | $4R$ |
| Encoder | Hidden layer | - | $R$ |
| Encoder | Utility (abs + normalization) | - | $R$ |
| Decoder, linear part | Hidden layer | ReLU | $BR$ |
| Decoder, nonlinear part | Hidden layer | LReLU | $B$ |
| Decoder, nonlinear part | Hidden layer | LReLU | $B$ |
| Decoder | Output layer | ReLU | $B$ |

C. Decoder

The decoder is designed to reconstruct the input with a linear structure and a parallel nonlinear structure. The specific setting of this structure is reported in the lower part of Table II. Recalling the operators and symbols defined in Eq. (9) to Eq. (13) and the decoder structure given by Eq. (17), the first layer of the decoder is designed as

$$\mathbf{o}^{(1)} = \mathbf{V}^{(1)}\mathbf{h}, \tag{22}$$

where $\mathbf{V}^{(1)}$ denotes the weights of the first layer of the decoder. Endmembers extracted by linear algorithms, such as the VCA algorithm, can be used to initialize the learning process of $\mathbf{V}^{(1)}$. $\mathbf{V}^{(1)}$ is constrained to have the following form:

$$\mathbf{V}^{(1)} = \operatorname{blkdiag}\{\mathbf{v}^{(1)}_1, \cdots, \mathbf{v}^{(1)}_R\}. \tag{23}$$

Consequently, the product $\mathbf{V}^{(1)}\mathbf{h}$ equals

$$\mathbf{V}^{(1)}\mathbf{h} = \operatorname{col}\{h_1\mathbf{v}^{(1)}_1, \cdots, h_R\mathbf{v}^{(1)}_R\}. \tag{24}$$

The vectors $\{h_i\mathbf{v}^{(1)}_i\}_{i=1}^{R}$ are generated as estimates of the endmembers weighted by the associated abundances. The output $\mathbf{o}^{(1)}$ of this layer is used as the input of $T(\cdot)$, so that $T(\mathbf{o}^{(1)})$ generates the linear component of the spectrum, and $\mathbf{o}^{(1)}$ is also used as the input of the nonlinear component, defined by a fully connected network without bias weights. This nonlinear component of the decoder is designed to represent the nonlinear interactions among the endmembers weighted by the associated abundances. Studies show that a neural network with two hidden layers can represent arbitrary nonlinear relations among its inputs [31].
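As a minimal illustration of the utility layer in Eq. (21) and of the first decoder layer in Eq. (24), the plain-Python sketch below rectifies and normalizes a raw encoder output and then forms the stacked, abundance-weighted vectors. The names `abs_normalize` and `first_decoder_layer` are hypothetical, the numbers are toy values, and the guard against an all-zero input is an added assumption that the text does not discuss.

```python
def abs_normalize(h):
    """Eq. (21): absolute-value rectification followed by sum-to-one scaling."""
    magnitudes = [abs(v) for v in h]
    total = sum(magnitudes)
    if total == 0.0:
        # Assumption: Eq. (21) is undefined for an all-zero vector, so reject it.
        raise ValueError("cannot normalize an all-zero vector")
    return [m / total for m in magnitudes]

def first_decoder_layer(h, v_columns):
    """Eq. (24): V^(1) h = col{h_1 v_1, ..., h_R v_R} for block-diagonal V^(1)."""
    return [h_i * value for h_i, v_i in zip(h, v_columns) for value in v_i]

raw = [-1.0, 3.0, 0.0, -4.0]     # hypothetical raw encoder output, R = 4
h = abs_normalize(raw)           # satisfies both the ANC and the ASC

# Hypothetical endmember estimates v_1 .. v_4 with B = 2 bands each.
v_columns = [[0.2, 0.4], [0.6, 0.8], [1.0, 1.0], [0.5, 0.25]]
o1 = first_decoder_layer(h, v_columns)   # length B * R
print(h, o1)
```

Because the rectification and normalization are deterministic transforms of the encoder output, the constraints hold for every pixel without any penalty term in the loss.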
In our scheme, we use two hidden layers to learn the nonlinear relation among the endmembers, since very high-order photon interactions, though they may exist, are usually weak in practice. To avoid over-fitting, a parameter norm penalty is added to the weights of the nonlinear component. We elaborate on this parameter penalty in the next subsection. This network thus learns the nonlinearity from the data and models all nonlinear interactions among $\{h_i\mathbf{v}^{(1)}_i\}_{i=1}^{R}$. Finally, the outputs of these two parallel structures are added to reconstruct the estimate $\hat{\mathbf{x}}$ by:

$$\hat{\mathbf{x}} = f_D(\mathbf{h}) \tag{25}$$

$$\phantom{\hat{\mathbf{x}}} = \hat{\mathbf{x}}_{\text{lin}} + \hat{\mathbf{x}}_{\text{nlin}} \tag{26}$$

$$\phantom{\hat{\mathbf{x}}} = T(\mathbf{o}^{(1)}) + \Phi(\mathbf{o}^{(1)}). \tag{27}$$

The energy of the component $\hat{\mathbf{x}}_{\text{nlin}}$ indicates where the nonlinear effects appear spatially, which can be useful in many applications.

D. Objective function

Several components are considered in formulating the objective function of the proposed autoencoder. The mean-square error between the input and the reconstructed data is employed for data fitting:

$$J_{\text{data}}(\mathbf{W}) = L(\mathbf{x}, \hat{\mathbf{x}}) = \frac{1}{N}\sum_{i=1}^{N} \left\|f_D(f_E(\mathbf{x}_i)) - \mathbf{x}_i\right\|^2. \tag{28}$$

Blind unmixing, with both endmembers and abundances unknown, can be a difficult inverse problem. Regularization is often imposed to condition the problem with reasonable prior information. In this work, we first consider the regularity of the nonlinear function $\Psi$, as proposed in [12]. Thus the $\ell_2$-norm of the weights of the nonlinear part of the decoder (denoted by $\mathbf{V}_{\text{nlin}}$), given by

$$J_{\text{reg}}(\mathbf{V}_{\text{nlin}}) = \left\|\mathbf{V}_{\text{nlin}}\right\|_2, \tag{29}$$

is used as the regularization to drive the weights to decay and avoid over-fitting. Further, a first-order TV-norm regularization given by

$$J_{\text{smth}}(\mathbf{V}^{(1)}) = \sum_{i=1}^{R}\sum_{j=1}^{B-1} \left|[\mathbf{v}^{(1)}_i]_{j+1} - [\mathbf{v}^{(1)}_i]_j\right| \tag{30}$$

is imposed on $\{\mathbf{v}^{(1)}_i\}_{i=1}^{R}$. Because $\{\mathbf{v}^{(1)}_i\}_{i=1}^{R}$ are the estimates of the endmembers, such a regularization encourages the smoothness of the endmembers and reduces the estimation noise.
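The two regularizers of Eqs. (29) and (30) are simple to compute once the weights are available. The following plain-Python sketch is illustrative only: the function names and toy weights are hypothetical, and Eq. (29) is read here as the plain (non-squared) $\ell_2$-norm taken over all weights of the nonlinear part together, which is an assumption about the intended norm; removing the square root gives the squared variant.

```python
import math

def l2_norm(weight_matrices):
    """Eq. (29), read as the l2-norm over every weight of the nonlinear part."""
    return math.sqrt(sum(w * w
                         for W in weight_matrices
                         for row in W
                         for w in row))

def tv_penalty(endmember_estimates):
    """Eq. (30): sum of absolute first-order differences along the band axis."""
    return sum(abs(v[j + 1] - v[j])
               for v in endmember_estimates
               for j in range(len(v) - 1))

# Toy values (hypothetical): one 1 x 2 weight matrix; R = 2 estimates, B = 3.
V_nlin = [[[3.0, 4.0]]]
v_estimates = [[0.0, 1.0, 0.5], [2.0, 2.0, 2.0]]

j_reg = l2_norm(V_nlin)           # sqrt(3^2 + 4^2)
j_smth = tv_penalty(v_estimates)  # |1 - 0| + |0.5 - 1| + 0 + 0
print(j_reg, j_smth)
```

A flat spectrum incurs no smoothness penalty, while every band-to-band jump adds its magnitude, which is why the penalty suppresses noisy oscillations in the estimated endmembers.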
Finally, the objective function is formulated as

$$J(\mathbf{W}) = J_{\text{data}}(\mathbf{W}) + \lambda J_{\text{reg}}(\mathbf{V}_{\text{nlin}}) + \gamma J_{\text{smth}}(\mathbf{V}^{(1)}) \tag{31}$$

where the positive parameters $\lambda$ and $\gamma$ control the strengths of the two regularization terms.

IV. EXPERIMENTS

In this section, the proposed unmixing scheme is implemented and its performance is compared with several typical state-of-the-art unmixing methods, using synthetic data, labeled laboratory-created data, and real airborne image data. Note that the general network structure and the number of layers are the same for all experiments, and all data are normalized between 0 and 1. The performance of abundance estimation is measured by the root mean square error (RMSE) defined by

$$\text{RMSE} = \sqrt{\frac{1}{NR}\sum_{i=1}^{N} \left\|\mathbf{a}_i - \hat{\mathbf{a}}_i\right\|^2} \tag{32}$$

where $N$ represents the number of pixels, and $\mathbf{a}_i$ and $\hat{\mathbf{a}}_i$ denote the true and estimated abundance vectors of the $i$th pixel. The accuracy of the endmember estimation is evaluated using the spectral angle distance (SAD) and the spectral information divergence (SID) given by

$$\text{SAD} = \cos^{-1}\left(\frac{\mathbf{m}^\top\hat{\mathbf{m}}}{\|\mathbf{m}\|\,\|\hat{\mathbf{m}}\|}\right), \qquad \text{SID}(\mathbf{m}\,|\,\hat{\mathbf{m}}) = \sum_{j} p_j \log\frac{p_j}{\hat{p}_j}, \tag{33}$$

where $\mathbf{m}$ represents an endmember and $\hat{\mathbf{m}}$ represents its estimate, $\mathbf{p} = \mathbf{m} / (\mathbf{1}^\top\mathbf{m})$ is the probability distribution vector of each endmember, and $\hat{\mathbf{p}} = \hat{\mathbf{m}} / (\mathbf{1}^\top\hat{\mathbf{m}})$.

The following typical algorithms were compared:

• Endmember extraction with VCA and abundance estimation with K-Hype [12]: VCA is a classic geometric method used for endmember extraction. The K-Hype algorithm considers the linear-mixture/nonlinear-fluctuation model and approximates the nonlinearity by the kernel trick.
• Endmember extraction with VCA and abundance estimation with the multilinear model (MLM) [15]: MLM is based on a Markov chain interpretation of the reflection process of a single light ray. A probability parameter is used to describe the possibility of interacting with the next material.
• Endmember extraction with N-FINDR and abundance estimation with NDU [14]: N-FINDR [32] is a classic method used to extract endmembers. NDU is a nonlinear abundance estimation method that is band-dependent and uses neighborhood information.
• The robust non-negative matrix factorization (rNMF) [33]: rNMF is an NMF-based nonlinear method that determines the endmembers and abundances simultaneously via a block-coordinate descent algorithm that involves majorization-minimization updates.
• A deep autoencoder network for nonlinear unmixing (NAE) [30]: NAE is a scheme for blind nonlinear unmixing based on a deep autoencoder network that addresses the post-nonlinear mixture problem.
• NUSAL [34]: a kernel-based method for nonlinear unmixing by variable splitting and augmented Lagrangian. The method also assumes a linear mixing model corrupted by an additive term whose expression can be adapted to account for multiple scattering nonlinearities.
• SAE [35]: a supervised abundance estimation method for nonlinear unmixing based on a classifier model. This method uses a stacked autoencoder scheme to learn the mapping between pixel spectra and the fractional abundances.

Note that linear endmember extraction algorithms were used for the first three methods, which focus on abundance estimation. These geometrical algorithms still provide sufficiently good results when the degree of nonlinearity in the data is moderate, as they are able to extract vertices from distorted data clouds [36]. Our experiments also confirm their performance. All unsupervised nonlinear unmixing methods, namely rNMF, NAE and our proposed method, are initialized by the same VCA result.

Fig. 2. Illustration of the four endmembers extracted from the data generated with the linear mixture model under SNR = 20 dB (panels (a) to (d); horizontal axis: spectral bands, vertical axis: reflectance value). Red curves represent the ground-truth. The blue and green curves represent the extracted endmembers with $\gamma = 0$ and $\gamma = 10^{-3}$, respectively. Proper regularization increases the smoothness of the estimated endmembers.

TABLE III: Abundance RMSE comparison on the synthetic data. Boldface numbers denote the lowest RMSEs.

| Method | 20 dB, linear | 20 dB, bilinear | 20 dB, PNMM | 30 dB, linear | 30 dB, bilinear | 30 dB, PNMM | 40 dB, linear | 40 dB, bilinear | 40 dB, PNMM |
|---|---|---|---|---|---|---|---|---|---|
| VCA-K-Hype | 0.0515 | 0.0594 | 0.0443 | 0.0422 | 0.0698 | 0.0443 | 0.0362 | 0.0384 | 0.0436 |
| VCA-MLM | 0.0273 | 0.0796 | 0.0360 | 0.0098 | 0.0658 | 0.0438 | 0.0090 | 0.0560 | 0.0299 |
| N-FINDR-NDU | 0.1186 | 0.1141 | 0.0819 | 0.1165 | 0.1112 | 0.0747 | 0.1140 | 0.1069 | 0.0718 |
| rNMF | 0.0882 | 0.0935 | 0.0746 | 0.0814 | 0.0859 | 0.0710 | 0.0814 | 0.0816 | 0.0716 |
| NAE | **0.0241** | 0.0427 | 0.0373 | 0.0211 | 0.0427 | 0.0372 | 0.0189 | 0.0200 | 0.0368 |
| NUSAL | 0.0395 | 0.0434 | 0.0429 | 0.0384 | 0.0430 | 0.0297 | 0.0377 | 0.0385 | 0.0271 |
| SAE | 0.0835 | 0.0944 | 0.0953 | 0.0256 | 0.0774 | 0.0464 | 0.0208 | 0.0460 | 0.0660 |
| Proposed method | **0.0241** | **0.0420** | **0.0304** | **0.0091** | **0.0402** | **0.0292** | **0.0084** | **0.0154** | **0.0239** |

A. Experiments with synthetic data

1) Data description: The synthetic data were generated with the linear mixture model and two nonlinear models. The endmembers used to generate the data were extracted from the USGS digital spectral library. These spectra consist of 224 contiguous bands. The linear mixture model is given by Eq. (4). The bilinear mixture model

$$\mathbf{x} = \mathbf{M}\mathbf{a} + \sum_{i=1}^{R-1}\sum_{j=i+1}^{R} a_i a_j (\mathbf{m}_i \odot \mathbf{m}_j) + \mathbf{n}, \tag{34}$$

and the post-nonlinear mixing model (PNMM)

$$\mathbf{x} = \mathbf{M}\mathbf{a} + \mathbf{M}\mathbf{a} \odot \mathbf{M}\mathbf{a} + \mathbf{n}, \tag{35}$$

were used as the two nonlinear models.
In this experiment, four pure material spectra ($R = 4$) were considered and the abundance fractions were generated from a Dirichlet distribution. A total of $3 \times 10^{5}$ pixels were generated to evaluate the performance. Zero-mean Gaussian noise was added with the signal-to-noise ratio (SNR) set to 20 dB, 30 dB and 40 dB, respectively. Our proposed scheme was implemented using PyTorch and Torch. During the learning process, the data were divided into a number of blocks according to the batch size, which is the number of samples used for one forward operation and one backward propagation operation. In an epoch, the number of iterations is equal to the total number of samples divided by the batch size. The weights of the network were updated after learning with each batch. We used the Adam optimizer to train the network. Adam is a simple and computationally efficient algorithm for gradient-based optimization of stochastic objective functions. The learning rate is a tuning parameter that determines the step size at each iteration while moving toward a minimum of the loss function. The specific parameters are given in the following subsections.

2) Results: Table II summarizes the network configurations used in this experiment. The learning rate was set to $1 \times 10^{-4}$. The batch size was set to 1024. Note that a larger batch size leads to more accurate descent directions but increases the possibility of reaching a local optimum, while a small batch size may result in difficulties in convergence. The number of training epochs was set to 30. The parameter $\lambda$ was set to $1 \times 10^{-3}$, and the smoothing regularization parameter $\gamma$ was set to $1 \times 10^{-3}$. Figure 3 shows the convergence curve during the learning process with the data generated by the bilinear model under SNR = 30 dB. We also studied the sensitivity of the proposed method to the algorithm parameters $\lambda$ and $\gamma$ with this data. The result is shown in Figure 4.
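The abundance sampling and noise addition mentioned in the data description can be sketched as follows. This is a minimal example under stated assumptions: the Dirichlet concentration parameter (`alpha = 1`, i.e. uniform on the simplex) and the SNR convention (average signal power over all pixels and bands) are not specified in the text, and the helper names are hypothetical.

```python
import numpy as np


def sample_abundances(n_pixels, n_endmembers, rng, alpha=1.0):
    """Draw abundance vectors from a symmetric Dirichlet distribution.

    Each row is non-negative and sums to one. alpha = 1 is an assumption.
    """
    return rng.dirichlet(alpha * np.ones(n_endmembers), size=n_pixels)


def add_gaussian_noise(X, snr_db, rng):
    """Add zero-mean Gaussian noise so that the overall SNR equals snr_db.

    Assumed convention: SNR = 10 log10(mean signal power / noise variance).
    """
    signal_power = np.mean(X ** 2)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=X.shape)
    return X + noise


rng = np.random.default_rng(0)
A = sample_abundances(1000, 4, rng)          # 1000 pixels, R = 4
X = rng.uniform(0.1, 0.9, size=(2000, 50))   # stand-in for noise-free spectra
Y = add_gaussian_noise(X, 20.0, rng)         # SNR = 20 dB
```

The same `add_gaussian_noise` call with 30.0 or 40.0 would give the other two noise levels used in the experiment.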
It can be seen that the method exhibits satisfactory RMSE within a reasonable range around the optimal parameter values. Tables III, IV and V report the RMSE, SAD and SID results of the compared methods under different models and SNR settings. It is clear that our proposed method achieves the best abundance estimation performance, and sufficiently good endmember estimation performance with both linear and nonlinear models. Note that when the mixtures are affected by moderate nonlinearities, geometrical endmember extraction algorithms based on the linear model can still provide sufficiently good results for nonlinear mixtures, in particular when constraints on simplex volumes are imposed [36]. Among the compared algorithms, MLM is a nonlinear unmixing method with a specific assumption on the nonlinearity. Both K-Hype and NDU are kernel-based methods, and the selection of the kernel and its parameters notably affects their performance. The proposed method builds a model by learning the nonlinearity from the observed data, and therefore the issue of kernel selection is avoided. Compared to the state-of-the-art unsupervised nonlinear unmixing methods, namely rNMF and NAE with the same initialization, our proposed method almost always improves the abundance estimation accuracy. Moreover, because the low-dimensional vector generated by the encoder retains the main information and discards redundant information and noise, the proposed method is robust to noise.

Fig. 3. Convergence curve during 30 epochs (training cost versus iterations over the entire dataset).

Fig. 4. RMSE as a function of the regularization parameters for the proposed method.
In order to understand the effect of the smoothing regularization, we show in Figure 2 the extracted endmembers with $\gamma$ set to 0 and $1 \times 10^{-3}$ in the linear case with SNR = 20 dB. Removing this regularization ($\gamma = 0$) leads to noisy estimated endmember curves. The usefulness of this smoothing effect is clearly illustrated.

B. Experiments with real data

1) Experiment with laboratory-created data: In order to perform a quantitative evaluation of unmixing performance with real data, we designed several experimental scenes with known ground-truth in our laboratory. Our data were collected by the GaiaField and GaiaSorter systems in our laboratory. Our GaiaField (Sichuan Dualix Spectral Image Technology Co. Ltd., GaiaField-V10) is a push-broom imaging spectrometer with an HSIA-OL50 lens, covering the visible and NIR wavelengths ranging from 400 nm to 1000 nm, with a spectral resolution up to 0.58 nm. GaiaSorter provides an environment that isolates external light, and is equipped with a conveyer to move samples for the push-broom imaging. Two non-uniform mixtures of colored quartz sand with spatial patterns of Scene-II in our published dataset [37] were used. The experimental settings were strictly controlled so that pure material spectral signatures and material compositions were known. The data consist of 256 spectral bands. Quartz sands of different colors and uniform size were used as pure materials, as shown in Figure 5 (a)–(c). The mixtures are shown in Figure 5 (d)–(e). To calculate the ground-truth, aligned high-resolution RGB images of these scenes were captured and linked to the hyperspectral pixels using the spatial resolution ratio, and then the percentage of each colored sand in a low-resolution hyperspectral pixel could be analyzed with the help of the associated RGB image. In our experiments, sub-images of 60-by-60 pixels were clipped out from the center of each subfigure.
In this set of experiments, the learning rate was also set to $1 \times 10^{-4}$, and the Adam optimizer was used to train the network. The batch size of this experiment was set to 100 and the number of training epochs was set to 50. The parameter $\lambda$ was set to $1 \times 10^{-4}$, and $\gamma$ was set to $1 \times 10^{-6}$. Figures 6 and 7 illustrate the estimated abundance maps of these algorithms. The ground-truth abundance maps are shown in the first columns of Figures 6 and 7. The abundance maps estimated using the compared algorithms and our proposed method are shown alongside. The proposed algorithm results in sharper abundance maps, and the general spatial patterns of the estimated maps are more consistent with the ground-truth. The quantitative RMSE, SAD and SID results are compared in Table VI. We observe that the proposed algorithm achieves the lowest RMSEs and sufficiently good endmember estimation performance. These unmixing results with labeled real data highlight the superior performance of the proposed method.

2) Experiment with real airborne data: Two real airborne images, namely the Jasper Ridge dataset and the urban dataset, were used to validate the proposed scheme.

Jasper Ridge is a widely used hyperspectral dataset. A subimage of 100 × 100 pixels was used to test the performance of our proposed method and several other compared algorithms. Each pixel was recorded at 224 channels ranging from 380 nm to 2500 nm with a spectral resolution up to 9.46 nm. After removing the channels affected by water vapor and the atmospheric environment, 198 channels were kept. The number of endmembers was set to 5, including water, tree, soil, road, and the 5th endmember. The same network defined in Table II was used for this data, with $B = 198$ and $R = 5$, and with the learning rate set to $1 \times 10^{-4}$. The batch size used in this experiment was set to 512, and the number of training epochs was set to 50.
The parameter $\lambda$ was set to $1 \times 10^{-3}$, and $\gamma$ was set to $1 \times 10^{-8}$ in this experiment. Figure 8 illustrates the endmembers extracted by the proposed algorithm. Figure 9 illustrates the estimated abundance maps of the five endmembers obtained by these algorithms. We observe that the proposed algorithm provides a sharper and clearer map of the different materials. Figure 10 shows the energy of the nonlinear components estimated by these algorithms, where the nonlinear energy of a pixel is the sum of the nonlinear component over all spectral bands. These maps demonstrate that nonlinear components are active at the boundaries or transition parts of different regions, e.g. at the water shore. The proposed algorithm provides a clear map of nonlinear components with several particular locations emphasized.

TABLE IV
ENDMEMBER SAD COMPARISON OF THE SYNTHETIC DATA.

| Method | SNR=20dB linear | SNR=20dB bilinear | SNR=20dB PNMM | SNR=30dB linear | SNR=30dB bilinear | SNR=30dB PNMM | SNR=40dB linear | SNR=40dB bilinear | SNR=40dB PNMM |
|---|---|---|---|---|---|---|---|---|---|
| VCA-K-Hype/MLM | 1.4615 | 2.4438 | 5.0424 | 0.6747 | 3.3831 | 4.9690 | 0.4238 | 1.0350 | 5.0644 |
| N-FINDR-NDU | 5.9772 | 6.2460 | 5.0186 | 2.0760 | 3.1794 | 5.1652 | 0.7633 | 0.9039 | 5.2979 |
| rNMF | 9.6746 | 9.4880 | 11.6248 | 8.0587 | 7.9005 | 11.5988 | 7.7533 | 8.1922 | 11.6332 |
| NAE | 1.3341 | 2.3112 | 5.4361 | 0.4415 | 3.1273 | 5.3542 | 0.3611 | 0.9000 | 5.3279 |
| NUSAL | 2.2180 | 4.3522 | 7.0544 | 0.7918 | 5.3761 | 5.7092 | 0.5370 | 2.2496 | 5.3339 |
| SAE | 2.0868 | 3.3338 | 5.4453 | 0.7376 | 4.6808 | 5.2390 | 0.4228 | 1.7467 | 5.0892 |
| Proposed method | 1.5544 | 1.9214 | 5.2590 | 0.5886 | 3.1201 | 5.0861 | 0.3434 | 0.9900 | 5.0036 |

Boldface numbers denote the lowest SADs.

TABLE V
ENDMEMBER SID COMPARISON OF THE SYNTHETIC DATA.

| Method | SNR=20dB linear | SNR=20dB bilinear | SNR=20dB PNMM | SNR=30dB linear | SNR=30dB bilinear | SNR=30dB PNMM | SNR=40dB linear | SNR=40dB bilinear | SNR=40dB PNMM |
|---|---|---|---|---|---|---|---|---|---|
| VCA-K-Hype/MLM | 0.0014 | 0.0045 | 0.0133 | 0.0005 | 0.0087 | 0.0130 | 0.0002 | 0.0006 | 0.0140 |
| N-FINDR-NDU | 0.0291 | 0.0346 | 0.0112 | 0.0036 | 0.0089 | 0.0123 | 0.0006 | 0.0008 | 0.0134 |
| rNMF | 0.0863 | 0.0875 | 0.1282 | 0.0545 | 0.0529 | 0.1314 | 0.0504 | 0.0627 | 0.1301 |
| NAE | 0.0012 | 0.0037 | 0.0158 | 0.0001 | 0.0074 | 0.0154 | 0.0001 | 0.0007 | 0.0153 |
| NUSAL | 0.0091 | 0.0096 | 0.0256 | 0.0005 | 0.0136 | 0.0143 | 0.0014 | 0.0027 | 0.0158 |
| SAE | 0.0043 | 0.0085 | 0.0157 | 0.0005 | 0.0134 | 0.0137 | 0.0002 | 0.0014 | 0.0141 |
| Proposed method | 0.0015 | 0.0022 | 0.0151 | 0.0004 | 0.0076 | 0.0136 | 0.0001 | 0.0006 | 0.0148 |

Boldface numbers denote the lowest SIDs.

Fig. 5. Laboratory-created data for unmixing performance evaluation (RGB images). Subfigures (a) to (c): pure quartz sand of three colors with a diameter of 0.3 mm. They serve as pure materials for providing endmembers. Subfigures (d), (e): mixtures of sand with spatial patterns. Square regions of 60-by-60 pixels (0.86 mm / pixel) in the center of each subfigure are clipped out and used in experiments.

Fig. 6. Abundance maps of the 1st mixture of the laboratory-created data. From left to right columns: ground-truth, estimated results of VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method, respectively. From top to bottom: abundance maps of red quartz sand, green quartz sand and blue quartz sand, respectively.

TABLE VI
RMSE, SAD AND SID COMPARISON OF UNMIXING RESULTS OF THE LABORATORY-CREATED DATA.

| Metric | Data | VCA-K-Hype | VCA-MLM | N-FINDR-NDU | rNMF | NAE | NUSAL | SAE | Proposed |
|---|---|---|---|---|---|---|---|---|---|
| RMSE | Mixture 1 | 0.1957 | 0.2050 | 0.2135 | 0.2315 | 0.2035 | 0.2136 | 0.2296 | 0.1942 |
| RMSE | Mixture 2 | 0.1764 | 0.2198 | 0.1961 | 0.2212 | 0.1797 | 0.2222 | 0.2072 | 0.1729 |
| SAD | Mixture 1 | 10.7889 | - | 9.2097 | 19.8765 | 10.7815 | 13.0731 | 12.1681 | 10.0852 |
| SAD | Mixture 2 | 9.3823 | - | 12.2489 | 15.5301 | 9.3736 | 14.3726 | 12.3268 | 9.1427 |
| SID | Mixture 1 | 0.0995 | - | 0.0325 | 0.2128 | 0.0994 | 0.1089 | 0.0797 | 0.0986 |
| SID | Mixture 2 | 0.0326 | - | 0.0568 | 0.1233 | 0.0325 | 0.1170 | 0.0552 | 0.0327 |

Boldface numbers denote the lowest RMSEs, SADs and SIDs.

Fig. 7. Abundance maps of the 2nd mixture of the laboratory-created data. From left to right columns: ground-truth, estimated results of VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method, respectively. From top to bottom: abundance maps of red quartz sand, green quartz sand and blue quartz sand, respectively.

Fig. 8. Extracted endmembers (water, tree, soil, road, endmember #5) from the Jasper Ridge data by the proposed algorithm, plotted as reflectance value against spectral band.
Note that this real data is extensively used in hyperspectral unmixing; however, no ground-truth information is available for a quantitative performance evaluation of the abundances. Thus, the reconstruction error (RE) defined by

$$\mathrm{RE} = \sqrt{\frac{1}{NR} \sum_{i=1}^{N} \left\| \mathbf{x}_i - \hat{\mathbf{x}}_i \right\|^2} \tag{36}$$

is used for a quantitative comparison, where $\mathbf{x}_i$ and $\hat{\mathbf{x}}_i$ denote the true and reconstructed vectors of the $i$th pixel and $N$ represents the total number of pixels. Note that RE may not be proportional to the abundance estimation accuracy. The RE results of different algorithms are reported in Table VII, and the reconstruction error maps are illustrated in Figure 11. We observe that our method leads to the lowest reconstruction error in the mean sense and in the spatial distribution.

Urban is a widely used hyperspectral dataset for the unmixing task. The urban image (available at http://www.agc.army.mil/hypercube/) has 307 × 307 pixels. All the pixels were used to evaluate the unmixing performance. The data consist of 210 spectral bands ranging from 400 nm to 2500 nm with a spectral resolution up to 10 nm. After removing channels [1-4, 76, 87, 101-111, 136-153, 198-210] affected by dense water vapor and the atmosphere, 162 channels remained. Five prominent endmembers exist in this data, namely asphalt, grass, tree, roof, and dirt. In this experiment, the same network, learning rate, and optimizer were used to conduct the unmixing study. The batch size was set to 512, with the number of epochs set to 80. The parameter $\lambda$ was set to $1 \times 10^{-4}$ and $\gamma$ was set to $1 \times 10^{-6}$. Figure 12 shows the endmembers extracted by the proposed method.

Fig. 9. Estimated abundance maps of the Jasper Ridge data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method. From top to bottom: water, tree, soil, road, and the 5th endmember, respectively.

Fig. 10. Energy of the nonlinear components of the Jasper Ridge data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method.

Fig. 11. Maps of reconstruction error of the Jasper Ridge data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method, respectively.

TABLE VII
RE COMPARISON OF THE JASPER RIDGE DATA.

| Algorithm | VCA-K-Hype | VCA-MLM | N-FINDR-NDU | rNMF | NAE | NUSAL | SAE | Proposed |
|---|---|---|---|---|---|---|---|---|
| RE | 0.0128 | 0.0250 | 0.0866 | 0.0517 | 0.0298 | 0.0114 | 0.0937 | 0.0111 |

Boldface numbers denote the lowest RE value.
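As a concrete reading of Eq. (36), whose values Table VII reports, the reconstruction error can be computed as in the sketch below. The function name and the row-per-pixel array layout are assumptions for illustration, and the normalization by N·R simply follows the equation as printed; this is not the authors' evaluation code.

```python
import numpy as np


def reconstruction_error(X, X_hat, R):
    """Reconstruction error following Eq. (36) as printed.

    X, X_hat: arrays of shape (N, B), one pixel spectrum per row (assumed layout).
    R: normalization constant appearing next to N in Eq. (36).
    """
    X = np.asarray(X, dtype=float)
    X_hat = np.asarray(X_hat, dtype=float)
    N = X.shape[0]
    # Sum of squared Euclidean norms of the per-pixel residuals.
    squared_residual = np.sum((X - X_hat) ** 2)
    return np.sqrt(squared_residual / (N * R))


# Hypothetical toy example with N = 2 pixels and 2 bands.
X = np.array([[1.0, 2.0], [3.0, 4.0]])
X_hat = np.array([[1.0, 2.0], [3.0, 2.0]])
re_value = reconstruction_error(X, X_hat, R=2)
```

A perfect reconstruction gives RE = 0, and the value grows with the average residual energy per pixel.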
The estimated abundance maps of the five endmembers are shown in Figure 13. These figures clearly indicate that our proposed method provides a smoother and clearer map. Figure 14 shows the energy of the nonlinear components estimated by these algorithms. These maps demonstrate that nonlinear components are active in vegetated regions and at the boundaries or transition parts of different regions. The proposed algorithm provides a clearer map of nonlinear components. The RE results achieved by different algorithms are reported in Table VIII, and the reconstruction error maps are shown in Figure 15. We observe that our method leads to the lowest reconstruction error.

TABLE VIII
RE COMPARISON OF THE URBAN DATA.

| Algorithm | VCA-K-Hype | VCA-MLM | N-FINDR-NDU | rNMF | NAE | NUSAL | SAE | Proposed |
|---|---|---|---|---|---|---|---|---|
| RE | 0.0194 | 0.0139 | 0.0241 | 0.0176 | 0.0211 | 0.0123 | 0.0154 | 0.0113 |

Boldface numbers denote the lowest RE value.

Fig. 12. Extracted endmembers (asphalt, grass, tree, roof, dirt) from the urban data by the proposed algorithm, plotted as reflectance value against spectral band.

Fig. 13. Estimated abundance maps of the urban data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method. From top to bottom: asphalt, grass, tree, roof, dirt.

Fig. 14. Energy of the nonlinear components of the urban data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method.

Fig. 15. Maps of reconstruction error of the urban data. From left to right: VCA-K-Hype, VCA-MLM, N-FINDR-NDU, rNMF, NAE, NUSAL, SAE and the proposed method.

V. CONCLUSION

This paper presented an unsupervised nonlinear spectral unmixing method based on a deep autoencoder network that applies a general mixture model consisting of a linear mixture component and an additive nonlinear mixture component. The proposed approach benefits from the universal modeling ability of deep neural networks to learn the inherent nonlinearity of the nonlinear mixture component from the data itself via the autoencoder network. The superior performance of the proposed method was validated with both synthetic and real data, particularly with laboratory-created labeled data. Future work will integrate contextual information of the image into the autoencoder network and consider the spectral variability problem to further enhance its performance.

REFERENCES

[1] J. M. Bioucas-Dias, A. Plaza, N. Dobigeon, M. Parente, Q. Du, P. Gader, and J. Chanussot, “Hyperspectral unmixing overview: Geometrical, statistical, and sparse regression-based approaches,” IEEE J. Sel. Top. Appl. Earth Observat. Remote Sens., vol. 5, no. 2, pp. 354–379, 2012.
[2] N. Keshava and J. F. Mustard, “Spectral unmixing,” IEEE Signal Proc. Mag., vol. 19, no. 1, pp. 44–57, 2002.
[3] J. Yao, D. Meng, Q. Zhao, W. Cao, and Z. Xu, “Nonconvex-sparsity and nonlocal-smoothness-based blind hyperspectral unmixing,” IEEE Trans. Image Process., vol. 28, no. 6, pp. 2991–3006, 2019.
[4] D. Hong, N. Yokoya, J. Chanussot, and X. Zhu, “An augmented linear mixing model to address spectral variability for hyperspectral unmixing,” IEEE Trans. Image Process., vol. 28, no. 4, pp. 1923–1938, 2018.
[5] R. Heylen, M. Parente, and P. Gader, “A review of nonlinear hyperspectral unmixing methods,” IEEE J. Sel. Top. Appl. Earth Observat. Remote Sens., vol. 7, no. 6, pp. 1844–1868, 2014.
[6] C. Borel and S. Gerstl, “Nonlinear spectral mixing models for vegetative and soil surfaces,” Remote Sens. Envi., vol. 47, no. 3, pp. 403–416, 1994.
[7] W. Fan, B. Hu, J. Miller, and M. Li, “Comparative study between a new nonlinear model and common linear model for analysing laboratory simulated-forest hyperspectral data,” Int. J. Remote Sens., vol. 30, no. 11, pp. 2951–2962, 2009.
[8] A. Halimi, Y. Altmann, N. Dobigeon, and J. Tourneret, “Unmixing hyperspectral images using the generalized bilinear model,” in Geoscience Remote Sensing Symposium, 2011.
[9] Y. Altmann, A. Halimi, N. Dobigeon, and J. Tourneret, “Supervised nonlinear spectral unmixing using a postnonlinear mixing model for hyperspectral imagery,” IEEE Trans. Image Process., vol. 21, no. 6, pp. 3017–3025, 2012.
[10] B. Hapke, “Bidirectional reflectance spectroscopy: 1. theory,” J. Geophys. Res., vol. 86, no. B4, pp. 3039–3054, 1981.
[11] R. Close, P. Gader, J. Wilson, and A. Zare, “Using physics-based macroscopic and microscopic mixture models for hyperspectral pixel unmixing,” Proc. Soc. Photo-Opt. Instrum. Eng. (SPIE), Algorithms Technol. Multispectral Hyperspectral Ultraspectral Imagery XVIII, vol. 8390, no. 1, pp. 83 901L–1, 2012.
[12] J. Chen, C. Richard, and P. Honeine, “Nonlinear unmixing of hyperspectral data based on a linear-mixture/nonlinear-fluctuation model,” IEEE Trans. Signal Process., vol. 61, no. 2, pp. 480–492, 2013.
[13] J. Chen, C. Richard, and P. Honeine, “Nonlinear estimation of material abundances in hyperspectral images with $\ell_1$-norm spatial regularization,” IEEE Trans. Geosci. Remote Sens., vol. 52, no. 5, pp. 2654–2665, 2014.
[14] R. Ammanouil, A. Ferrari, C. Richard, and S. Mathieu, “Nonlinear unmixing of hyperspectral data with vector-valued kernel functions,” IEEE Trans. Image Process., vol. 26, no. 1, pp. 340–354, 2017.
[15] R. Heylen and P. Scheunders, “A multilinear mixing model for nonlinear spectral unmixing,” IEEE Trans. Geosci. Remote Sens., vol. 54, no. 1, pp. 240–251, 2016.
[16] R. Heylen, V. Andrejchenko, Z. Zahiri, M. Parente, and P. Scheunders, “Nonlinear hyperspectral unmixing with graphical models,” IEEE Trans. Geosci. Remote Sens., vol. 57, no. 7, pp. 4844–4856, 2019.
[17] Y. Chen, Z. Lin, X. Zhao, G. Wang, and Y. Gu, “Deep learning-based classification of hyperspectral data,” IEEE J. Sel. Top. Appl. Earth Observat. Remote Sens., vol. 7, no. 6, pp. 2094–2107, 2014.
[18] L. Mou, P. Ghamisi, and X. Zhu, “Deep recurrent neural networks for hyperspectral image classification,” IEEE Trans. Geosci. Remote Sens., vol. 55, no. 7, pp. 3639–3655, 2017.
[19] Y. Chen, X. Zhao, and X. Jia, “Spectral–spatial classification of hyperspectral data based on deep belief network,” IEEE J. Sel. Top. Appl. Earth Observat. Remote Sens., vol. 8, no. 6, pp. 2381–2392, 2015.
[20] G. Licciardi and F. Del Frate, “Pixel unmixing in hyperspectral data by means of neural networks,” IEEE Trans. Geosci. Remote Sens., vol. 49, no. 11, pp. 4163–4172, 2011.
[21] X. Zhang, Y. Sun, J. Zhang, P. Wu, and L. Jiao, “Hyperspectral unmixing via deep convolutional neural networks,” IEEE Geosci. Remote Sens. Lett., no. 99, pp. 1–5, 2018.
[22] R. Guo, W. Wang, and H. Qi, “Hyperspectral image unmixing using autoencoder cascade,” in WHISPERS 2015. IEEE, 2015, pp. 1–4.
[23] B. Palsson, J. Sigurdsson, J. Sveinsson, and M. Ulfarsson, “Hyperspectral unmixing using a neural network autoencoder,” IEEE Access, vol. 6, pp. 25 646–25 656, 2018.
[24] Y. Qu, R. Guo, and H. Qi, “Spectral unmixing through part-based non-negative constraint denoising autoencoder,” in Proc. IEEE International Geoscience and Remote Sensing Symposium (IGARSS), 2017, pp. 209–212.
[25] Y. Qu and H. Qi, “uDAS: An untied denoising autoencoder with sparsity for spectral unmixing,” IEEE Trans. Geosci. Remote Sens., vol. 57, no. 3, pp. 1698–1712, 2018.
[26] Y. Su, A. Marinoni, J. Li, A. Plaza, and P. Gamba, “Nonnegative sparse autoencoder for robust endmember extraction from remotely sensed hyperspectral images,” in Proc. IEEE International Geoscience and Remote Sensing Symposium (IGARSS), 2017, pp. 205–208.
[27] Y. Su, J. Li, A. Plaza, A. Marinoni, P. Gamba, and S. Chakravortty, “DAEN: Deep autoencoder networks for hyperspectral unmixing,” IEEE Trans. Geosci. Remote Sens., vol. 57, no. 7, pp. 4309–4321, 2019.
[28] S. Ozkan, B. Kaya, and G. B. Akar, “EndNet: Sparse autoencoder network for endmember extraction and hyperspectral unmixing,” IEEE Trans. Geosci. Remote Sens., vol. 57, no. 1, pp. 482–496, 2018.
[29] R. Borsoi, T. Imbiriba, and J. Bermudez, “Deep generative endmember modeling: An application to unsupervised spectral unmixing,” IEEE Trans. Comput. Imag., pp. 1–1, 2019.
[30] M. Wang, M. Zhao, J. Chen, and S. Rahardja, IEEE Geosci. Remote Sens. Lett., vol. 16, no. 9, pp. 1467–1471, 2019.
[31] I. Goodfellow, Y. Bengio, and A. Courville, Deep learning. MIT Press, 2016.
[32] M. E. Winter, “N-FINDR: An algorithm for fast autonomous spectral end-member determination in hyperspectral data,” in Imaging Spectrometry V, vol. 3753. International Society for Optics and Photonics, 1999, pp. 266–276.
[33] C. Févotte and N. Dobigeon, “Nonlinear hyperspectral unmixing with robust nonnegative matrix factorization,” IEEE Trans. Image Process., vol. 24, no. 12, pp. 4810–4819, 2015.
[34] A. Halimi, J. Bioucas-Dias, N. Dobigeon, G. Buller, and S. McLaughlin, “Fast hyperspectral unmixing in presence of nonlinearity or mismodeling effects,” IEEE Trans. Computational Imaging, vol. 3, no. 2, pp. 146–159, 2016.
[35] X. Xu, Z. Shi, and B. Pan, “A supervised abundance estimation method for hyperspectral unmixing,” Remote Sens. Lett., vol. 9, no. 4, pp. 383–392, 2018.
[36] N. Dobigeon, J. Tourneret, C. Richard, J. Bermudez, S. McLaughlin, and A. Hero, “Nonlinear unmixing of hyperspectral images: Models and algorithms,” IEEE Signal Process. Mag., vol. 31, no. 1, pp. 82–94, 2014.
[37] M. Zhao, J. Chen, and Z. He, “A laboratory-created dataset with ground-truth for hyperspectral unmixing evaluation,” IEEE J. Sel. Top. Appl. Earth Observat. Remote Sens., vol. 12, no. 7, pp. 2170–2183, 2019.
