TapirXLA: Embedding Fork-Join Parallelism into the XLA Compiler in TensorFlow Using Tapir

This work introduces TapirXLA, a replacement for TensorFlow’s XLA compiler that embeds recursive fork-join parallelism into XLA’s low-level representation of code. Machine-learning applications rely on efficient parallel processing to achieve performance, and they employ a variety of technologies to improve performance, including compiler technology. But compilers in machine-learning frameworks lack a deep understanding of parallelism, causing them to lose performance by missing optimizations on parallel computation. This work studies how Tapir, a compiler intermediate representation (IR) that embeds parallelism into a mainstream compiler IR, can be incorporated into a compiler for machine learning to remedy this problem. TapirXLA modifies the XLA compiler in TensorFlow to employ the Tapir/LLVM compiler to optimize low-level parallel computation. TapirXLA encodes the parallelism within high-level TensorFlow operations using Tapir’s representation of fork-join parallelism. TapirXLA also exposes to the compiler implementations of linear-algebra library routines whose parallel operations are encoded using Tapir’s representation. We compared the performance of TensorFlow using TapirXLA against TensorFlow using an unmodified XLA compiler. On four neural-network benchmarks, TapirXLA speeds up the parallel running time of the network by a geometric-mean multiplicative factor of 30% to 100%, across four CPU architectures.

💡 Research Summary

The paper introduces TapirXLA, a re-engineered version of TensorFlow's XLA compiler that incorporates explicit fork-join parallelism using the Tapir intermediate representation (IR). Modern machine-learning workloads rely heavily on parallel execution to achieve high throughput, yet existing ML compilers such as XLA lack a deep understanding of parallel structure. XLA translates TensorFlow graphs into its High-Level Optimizer (HLO) IR and then hands the code to LLVM for code generation, but the LLVM IR it produces carries no explicit representation of parallelism that LLVM can analyze. Consequently, LLVM's optimizations, such as automatic vectorization, can only infer parallelism from low-level patterns and often miss opportunities that are obvious at the graph level, especially in recursive divide-and-conquer computations.

Tapir is an extension of LLVM that adds three first-class fork-join instructions to the IR: detach, reattach, and sync. Because parallel tasks are represented explicitly in the IR rather than hidden behind calls into a threading library, the compiler's existing analyses and optimizations can operate across parallel regions, and a later lowering step translates the fork-join structure into calls to a parallel runtime system, choosing work granularity along the way. TapirXLA leverages this capability in three major ways.
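
Before turning to those three uses, the semantics of the three instructions can be illustrated with a small model. The sketch below is a hypothetical Python analogue, not Tapir's IR or any real API: `TaskFrame.detach` starts a child task (the detached block, whose return stands in for reattach), and `TaskFrame.sync` waits for every child of the current frame. Using OS threads is an assumption made for illustration; Tapir itself leaves the choice of runtime system to a later lowering step.

```python
import threading
from typing import Callable, Dict, List


class TaskFrame:
    """Hypothetical model of one function frame's fork-join state.

    NOT Tapir's API: detach/sync are illustrative names that mirror the
    semantics of Tapir's detach, reattach, and sync instructions.
    """

    def __init__(self) -> None:
        self._children: List[threading.Thread] = []

    def detach(self, block: Callable[[], None]) -> None:
        # Start the detached block as a child task; the caller's continuation
        # proceeds immediately. The child returning plays the role of reattach.
        child = threading.Thread(target=block)
        child.start()
        self._children.append(child)

    def sync(self) -> None:
        # Wait for every child detached from this frame, like Tapir's sync.
        for child in self._children:
            child.join()
        self._children.clear()


def fib(n: int) -> int:
    """Fork-join Fibonacci: detach fib(n - 1), compute fib(n - 2), then sync."""
    if n < 2:
        return n
    frame = TaskFrame()
    result: Dict[str, int] = {}

    def detached_block() -> None:
        result["x"] = fib(n - 1)

    frame.detach(detached_block)
    y = fib(n - 2)   # continuation, logically parallel with the detached block
    frame.sync()     # result["x"] is only safe to read after the sync
    return result["x"] + y


print(fib(10))  # 55
```

Replacing `frame.detach(detached_block)` with a plain call `detached_block()` gives the serial elision, which computes the same result; a race-free fork-join program has this property, which is part of why a compiler can reason about such code much as it reasons about serial code. In standard CPython builds the global interpreter lock prevents these threads from running Python code in parallel, so the sketch shows structure, not speedup.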

First, it maps high‑level TensorFlow operations (e.g., Conv2D, MatMul, Reduce, Gather) to Tapir constructs. The authors analyze data dependencies of each operation, identify independent loop dimensions, and automatically generate a hierarchy of detach/sync blocks that represent safe parallel execution. Recursive algorithms such as tree reductions or FFTs are similarly transformed into multi‑level fork‑join trees, preserving the original algorithmic structure while exposing it to the optimizer.
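
A minimal sketch of the two shapes described above, a parallel loop over an independent dimension and a recursive tree reduction, follows. It uses Python threads as stand-ins for detach and sync; the names `parallel_for`, `tree_sum`, and the cutoff `GRAIN` are hypothetical, and the code illustrates the fork-join structure rather than reproducing TapirXLA's generated code.

```python
import threading
from typing import Callable, List, Sequence

GRAIN = 4  # hypothetical cutoff: ranges this small run serially


def parallel_for(lo: int, hi: int, body: Callable[[int], None]) -> None:
    """Run body(i) for i in [lo, hi) by recursive binary splitting."""
    if hi - lo <= GRAIN:
        for i in range(lo, hi):
            body(i)
        return
    mid = (lo + hi) // 2
    left = threading.Thread(target=parallel_for, args=(lo, mid, body))
    left.start()                  # "detach" the left half
    parallel_for(mid, hi, body)   # continuation handles the right half
    left.join()                   # "sync" before returning


def tree_sum(xs: Sequence[float], lo: int, hi: int) -> float:
    """Fork-join tree reduction: sum both halves in parallel, then combine."""
    if hi - lo <= GRAIN:
        return sum(xs[lo:hi])
    mid = (lo + hi) // 2
    left_result: List[float] = [0.0]

    def left() -> None:
        left_result[0] = tree_sum(xs, lo, mid)

    t = threading.Thread(target=left)
    t.start()
    right = tree_sum(xs, mid, hi)
    t.join()
    return left_result[0] + right


# An elementwise add has no cross-iteration dependences, so each index can be
# computed independently; a reduction then combines the results.
a = [float(i) for i in range(16)]
b = [2.0 * v for v in a]
c = [0.0] * len(a)


def add_at(i: int) -> None:
    c[i] = a[i] + b[i]   # each task writes a distinct element of c


parallel_for(0, len(c), add_at)
print(c[:4])                   # [0.0, 3.0, 6.0, 9.0]
print(tree_sum(c, 0, len(c)))  # 360.0
```

Splitting ranges in half recursively, instead of handing out iterations one by one from a single loop, is what keeps the task tree balanced and gives a runtime many independent subtrees to schedule.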

Second, TapirXLA changes how XLA handles linear-algebra library routines. Rather than calling opaque, precompiled routines from external libraries such as Eigen, it exposes implementations of those routines to the compiler, with their internal parallel loops encoded using Tapir primitives. Parallelism information therefore flows consistently from the front-end TensorFlow graph down to the low-level computational kernels, and the compiler can optimize the kernels together with the code that calls them.
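
To make the idea of a library kernel "whose parallel operations are encoded" in fork-join form concrete, here is a hypothetical pure-Python matrix multiply that splits the output rows recursively. It is not Eigen's or TapirXLA's code; `matmul_rows`, `matmul`, and `GRAIN_ROWS` are illustrative names, and threads again stand in for detach and sync.

```python
import threading
from typing import List

Matrix = List[List[float]]
GRAIN_ROWS = 2  # hypothetical cutoff below which rows are computed serially


def matmul_rows(a: Matrix, b: Matrix, c: Matrix, r0: int, r1: int) -> None:
    """Compute rows [r0, r1) of c = a @ b by recursive fork-join splitting.

    The two halves write disjoint rows of c, so they are independent and
    need no locking.
    """
    if r1 - r0 <= GRAIN_ROWS:
        n, k = len(b[0]), len(b)
        for i in range(r0, r1):
            row = a[i]
            for j in range(n):
                c[i][j] = sum(row[p] * b[p][j] for p in range(k))
        return
    mid = (r0 + r1) // 2
    t = threading.Thread(target=matmul_rows, args=(a, b, c, r0, mid))
    t.start()                       # "detach" the top half of the rows
    matmul_rows(a, b, c, mid, r1)   # continuation computes the bottom half
    t.join()                        # "sync"


def matmul(a: Matrix, b: Matrix) -> Matrix:
    c = [[0.0] * len(b[0]) for _ in range(len(a))]
    matmul_rows(a, b, c, 0, len(a))
    return c


a = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
b = [[1.0, 0.0, 2.0], [0.0, 1.0, 3.0]]
print(matmul(a, b)[0])  # [1.0, 2.0, 8.0]
```

Splitting only the outer (row) dimension is the simplest choice that keeps tasks writing disjoint data; a production kernel would also block for cache and vectorize the inner loops, which is the kind of optimization a compiler can apply once the kernel is visible to it rather than hidden in a precompiled library.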

Third, the integration modifies the XLA compilation pipeline. After HLO lowering, a new Tapir pass inserts the generated Tapir IR into the LLVM module. The LLVM back end is extended with a custom scheduling pass that emits code to choose task chunk sizes and thread counts at run time using heuristics, and that performs cache-friendly memory allocation. The fork-join constructs are ultimately lowered to calls into a work-stealing runtime system, which mitigates load imbalance during execution. The result is a unified compilation flow in which parallelism remains explicit throughout, allowing more aggressive optimizations than XLA's default pipeline.
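
The summary does not specify which chunk-size heuristic TapirXLA uses. As an assumption, the sketch below uses the grainsize rule commonly associated with Cilk-style parallel loops, min(2048, ceil(N / (8P))) for N iterations and P workers, and counts the leaf tasks that recursive halving produces. `default_grainsize` and `leaf_tasks` are hypothetical names.

```python
import math


def default_grainsize(n: int, workers: int, cap: int = 2048) -> int:
    """Assumed Cilk-style heuristic: min(cap, ceil(n / (8 * workers))).

    Aiming for about 8 chunks per worker leaves slack for a work-stealing
    scheduler to rebalance, while the cap bounds the size of a serial chunk.
    """
    if n <= 0:
        return 1
    return max(1, min(cap, math.ceil(n / (8 * workers))))


def leaf_tasks(n: int, grain: int) -> int:
    """Count leaf tasks when [0, n) is halved recursively until a piece <= grain."""
    if n <= grain:
        return 1
    half = n // 2
    return leaf_tasks(half, grain) + leaf_tasks(n - half, grain)


g = default_grainsize(1000, workers=8)
print(g, leaf_tasks(1000, g))        # 16 64
print(default_grainsize(10**6, 8))   # 2048 (capped)
```

With 8 workers and 1,000 iterations, the loop becomes 64 leaf tasks, about 8 per worker. Over-decomposing like this costs a little spawn overhead but gives idle workers something to steal when some chunks run slower than others.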

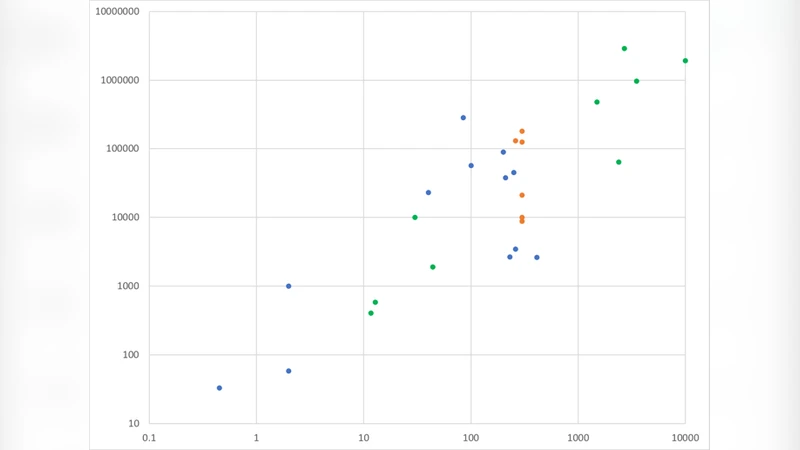

The authors evaluate TapirXLA on four representative neural-network benchmarks: ResNet-50, BERT-Base, MobileNet-V2, and a Transformer encoder. Experiments run on four distinct CPU architectures (Intel Xeon Gold, AMD EPYC, ARM Neoverse, and Apple M1-Pro) to cover a broad range of hardware. Compared with the unmodified XLA compiler, TapirXLA achieves a geometric-mean speedup over the four benchmarks of 30% to 100% depending on the architecture, that is, a multiplicative factor between about 1.3× and 2×. The largest gains appear in memory-bound models such as BERT and the Transformer, where improved thread utilization and lower cache-miss rates nearly double throughput. Profiling shows a 1.5× increase in effective thread occupancy and a roughly 20% reduction in L1/L2 cache misses, consistent with the explicit fork-join representation enabling more efficient scheduling and better data locality.
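
The geometric-mean figure can be unpacked with a small worked example. A speedup is the baseline running time divided by the new running time, so "30%" corresponds to a factor of 1.3 and "100%" to a factor of 2. The running times below are made up for illustration and are not the paper's measurements; `geometric_mean` and `speedup` are hypothetical helper names.

```python
import math
from typing import Sequence


def speedup(baseline_s: float, new_s: float) -> float:
    """Multiplicative speedup: baseline running time / new running time."""
    return baseline_s / new_s


def geometric_mean(values: Sequence[float]) -> float:
    """Geometric mean computed via logarithms to avoid overflow on long lists."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical running times in seconds for four benchmarks on one machine.
baseline = [10.0, 8.0, 6.0, 12.0]
tapir = [10.0, 4.0, 3.0, 3.0]

speedups = [speedup(b, t) for b, t in zip(baseline, tapir)]  # [1.0, 2.0, 2.0, 4.0]
g = geometric_mean(speedups)
print(f"{g:.2f}x, i.e. {100 * (g - 1):.0f}% faster")  # 2.00x, i.e. 100% faster
```

The geometric mean is the usual way to summarize ratios because it treats a 2× gain and a 2× loss symmetrically: the geometric mean of the reciprocal speedups is exactly the reciprocal of the geometric mean, so the summary does not depend on which compiler is taken as the baseline.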

The paper also discusses limitations. TapirXLA currently targets only CPU back‑ends; extending the approach to GPUs, TPUs, or other accelerators would require substantial work on the Tapir‑to‑device‑specific code‑generation path. Moreover, Tapir itself is still a research‑level IR, and handling highly dynamic control flow or graphs that change at runtime remains an open challenge. The authors outline future directions, including integrating Tapir with GPU LLVM back‑ends, developing runtime mechanisms to adjust fork‑join structures on the fly for dynamic graphs, and building an automatic tuning framework that explores different task granularities and scheduling policies.

In summary, TapirXLA demonstrates that embedding a first‑class parallelism IR into a machine‑learning compiler can bridge the gap between high‑level graph semantics and low‑level code generation. By making fork‑join parallelism explicit, the system achieves consistent performance improvements across diverse models and hardware, while preserving compatibility with the existing TensorFlow ecosystem. This work suggests a promising path forward for next‑generation ML compilers that need to exploit parallelism more aggressively as models grow in size and hardware becomes increasingly heterogeneous.
