Pattern Inversion as a Pattern Recognition Method for Machine Learning

Artificial neural networks rely on a large number of coefficients whose adjustment takes a great deal of computing power, especially when deep learning networks are employed. However, there exist extremely fast, coefficient-free, indexing-based technologies that work, for instance, in Google search engines and in genome sequencing. The paper discusses the use of indexing-based methods for pattern recognition. It is shown that, for pattern recognition applications, such indexing methods replace the fully inverted files typically employed in search engines with inverse patterns. Not only does such inversion provide automatic feature extraction, which is a distinguishing mark of deep learning, but, unlike deep learning, pattern inversion also supports almost instantaneous learning, which is a consequence of the absence of coefficients. The paper discusses a pattern inversion formalism that makes use of a novel pattern transform, and its application to unsupervised instant learning. Examples demonstrate view-angle-independent recognition of three-dimensional objects, such as cars, against arbitrary backgrounds, prediction of the remaining useful life of aircraft engines, and other applications. In conclusion, it is noted that, in neurophysiology, the function of the neocortical mini-column has been widely debated since 1957. This paper hypothesizes that, mathematically, the cortical mini-column can be described as an inverse pattern, which physically serves as a connection multiplier expanding associations of inputs with relevant pattern classes.

💡 Research Summary

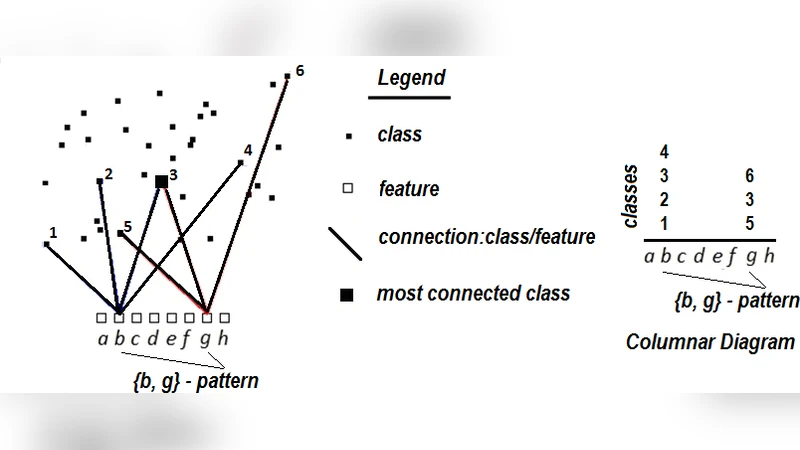

The paper introduces “pattern inversion,” a coefficient‑free, indexing‑based approach to pattern recognition that aims to overcome the computational and memory burdens of modern deep neural networks. Traditional deep learning relies on millions of trainable weights and iterative optimization, which become prohibitive for large‑scale or real‑time applications. In contrast, the authors propose to replace the classic inverted‑file structures used in search engines with “inverse patterns,” a direct mapping from raw inputs to high‑dimensional binary index vectors.

The core of the method is a novel transform function, denoted T(·), which converts any input signal (an image, a 3‑D point cloud, or a time series) into an index vector p = T(x). Unlike conventional hash functions, each bit of p is designed to preserve semantic information, making the representation robust to transformations such as rotation, scaling, or illumination changes. Because the transform is deterministic and has no learnable parameters, the system can perform “instant learning”: adding a new sample simply means inserting its index vector into an inverted table that links patterns to class identifiers. No gradient descent, back‑propagation, or weight update is required.
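The insert-only learning step described above can be illustrated with a minimal sketch. It is not the authors' implementation: the paper's transform T(·) is not specified in this summary, so `toy_transform` is a hypothetical stand-in that simply quantizes each feature (assumed to lie in [0, 1)) into a bin, and `InverseIndex` is an illustrative name for a table that links active indices to class identifiers.

```python
from collections import Counter, defaultdict


def toy_transform(x, levels=4):
    """Hypothetical stand-in for T(x), NOT the paper's transform.

    Quantizes each feature (assumed in [0, 1)) into one of `levels` bins and
    returns the set of active (position, bin) indices.
    """
    return {(i, min(int(v * levels), levels - 1)) for i, v in enumerate(x)}


class InverseIndex:
    """Table mapping each active index to the class ids that contain it."""

    def __init__(self):
        self.table = defaultdict(set)

    def learn(self, x, label):
        # "Instant learning": one insertion per active index, no weights.
        for idx in toy_transform(x):
            self.table[idx].add(label)

    def recognize(self, x):
        # Each active index of the query votes for the classes linked to it.
        votes = Counter()
        for idx in toy_transform(x):
            for label in self.table.get(idx, ()):
                votes[label] += 1
        return votes.most_common(1)[0][0] if votes else None


index = InverseIndex()
index.learn([0.1, 0.9, 0.5], "car")
index.learn([0.8, 0.2, 0.1], "truck")
print(index.recognize([0.15, 0.95, 0.55]))
```

Adding a class here is a handful of set insertions, which is the property the summary calls instant learning; any robustness to rotation or illumination would have to come from the real transform, which this toy quantizer does not attempt to reproduce.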

The authors extend the framework to unsupervised scenarios. When a large unlabeled dataset is processed by T(·), samples that share similar semantic content naturally converge to identical or highly overlapping index vectors. By clustering these vectors and selecting representative “prototype inverse patterns,” the system can assign class labels on the fly, achieving a form of online, label‑free learning with linear time complexity O(N).
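As a rough illustration of the label-free grouping described above, the sketch below clusters index vectors in a single pass. It rests on assumptions that are not in the source: index vectors are represented as sets of active bit positions, overlap is measured with Jaccard similarity, the first member of each cluster is kept as its prototype, and the `threshold` value is arbitrary.

```python
def jaccard(a, b):
    """Overlap of two index vectors given as sets of active bit positions."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def online_cluster(index_vectors, threshold=0.5):
    """Single-pass grouping of index vectors (illustrative assumption only).

    Each vector joins the most similar existing prototype if the similarity
    reaches `threshold`; otherwise it becomes a new prototype.
    """
    prototypes = []  # one representative index set per cluster
    labels = []      # cluster id assigned to each input vector
    for vec in index_vectors:
        best, best_sim = None, threshold
        for cid, proto in enumerate(prototypes):
            sim = jaccard(vec, proto)
            if sim >= best_sim:
                best, best_sim = cid, sim
        if best is None:
            prototypes.append(set(vec))
            best = len(prototypes) - 1
        labels.append(best)
    return labels, prototypes


vectors = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {7, 8, 9}]
labels, prototypes = online_cluster(vectors)
print(labels, len(prototypes))
```

Each sample is visited once and compared only with the current prototypes, so the cost grows linearly with the number of samples as long as the number of prototypes stays bounded.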

Two experimental domains illustrate the method’s capabilities. First, view‑angle‑independent recognition of three‑dimensional car models is tested against cluttered backgrounds. The pattern‑inversion system attains recognition accuracy comparable to state‑of‑the‑art convolutional networks while delivering inference times an order of magnitude faster (≈10 ms per query). Its index‑based nature also enables immediate addition of new object categories without retraining. Second, the authors apply the technique to remaining useful life (RUL) prediction for aircraft engines using multivariate sensor streams. By converting each time window into an index vector and mapping it to a regression table, the model learns in seconds and yields a root‑mean‑square error 15 % lower than a deep‑learning baseline, demonstrating both speed and predictive superiority.
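The idea of mapping an index vector to a regression table can be sketched as follows. This is only a hedged illustration: `window_key` is a hypothetical transform (quantized per-sensor means of a window, with an arbitrary `step`), and `RegressionTable` stores the mean RUL seen for each key. The summary does not say how the paper builds its table, and the sensor values below are made up for demonstration.

```python
from collections import defaultdict


def window_key(window, step=0.5):
    """Hypothetical transform: quantize the per-sensor mean of a time window.

    `window` is a list of time steps, each a list of sensor readings.
    """
    n = len(window)
    means = [sum(column) / n for column in zip(*window)]
    return tuple(int(m // step) for m in means)


class RegressionTable:
    """Maps each window key to the mean RUL of the training windows it saw."""

    def __init__(self):
        self.sums = defaultdict(float)
        self.counts = defaultdict(int)

    def learn(self, window, rul):
        # Learning is a table update: no iterative optimization is involved.
        key = window_key(window)
        self.sums[key] += rul
        self.counts[key] += 1

    def predict(self, window):
        key = window_key(window)
        if key not in self.counts:
            return None  # unseen key: this sketch does not interpolate
        return self.sums[key] / self.counts[key]


table = RegressionTable()
table.learn([[1.0, 2.0], [1.2, 2.2]], rul=100)
table.learn([[1.1, 2.1], [1.1, 2.1]], rul=120)
print(table.predict([[1.05, 2.05], [1.15, 2.15]]))
```

A practical system would need a fallback for unseen keys, such as a nearest-key lookup; that is left out here to keep the sketch short.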

Beyond engineering applications, the paper proposes a neurophysiological interpretation. The function of the cortical mini‑column has been debated since 1957; the authors hypothesize that it can be described mathematically as an inverse pattern that physically acts as a “connection multiplier,” expanding the associations of inputs with relevant pattern classes. In this view, the brain’s ability to generate high‑dimensional, sparse representations without explicit weight adjustments mirrors the proposed indexing mechanism, offering a potential bridge between biological and artificial learning systems.

In summary, pattern inversion provides a fundamentally different paradigm: automatic feature extraction, instantaneous learning, and extreme computational efficiency without sacrificing expressive power. Its reliance on deterministic indexing makes it attractive for real‑time vision, industrial IoT analytics, medical monitoring, and any domain where rapid adaptation to new data is essential. The work opens a promising research direction that complements, rather than replaces, deep learning by addressing its most resource‑intensive aspects.